Image from Life Science Databases via Wikimedia Commons

Edited by Matthew A. McIntosh / 03.06.2018

Historian

Brewminate Editor-in-Chief

1 – History of Cognition

1.1 – Introduction

“Cognition” is a term for a wide swath of mental functions that relate to knowledge and information processing.

1.1.1 – Cogito Ergo Sum

Maybe you’ve heard the phrase I think, therefore I am, or perhaps even the Latin version: Cogito ergo sum. This simple expression is one of enormous philosophical importance, because it is about the act of thinking. Thought has fascinated humans for many centuries, with questions like What is thinking? and How do people think? and Why do people think? troubling and intriguing many philosophers, psychologists, scientists, and others.

The word “cognition” is the closest scientific synonym for thinking. It comes from the Latin cognitio, from the verb cognoscere, “to get to know.” It is closely related in meaning, though not in root, to cogito (“I think”), a form of the verb cogitare, “to think.” Cognition is the set of all mental abilities and processes related to knowledge, including attention, memory, judgment, reasoning, problem solving, decision making, and a host of other vital processes.

Human cognition takes place at both conscious and unconscious levels. It can be concrete or abstract. It is intuitive, meaning that nobody has to learn or be taught how to think. It just happens as part of being human. Cognitive processes use existing knowledge but are capable of generating new knowledge through logic and inference.

1.1.2 – Aristotle (384-322 BCE)

The study of human cognition began over two thousand years ago. The Greek philosopher Aristotle was interested in many fields, including the inner workings of the mind and how they affect the human experience. He also placed great importance on ensuring that his studies and ideas were based on empirical evidence (scientific information that is gathered through observation and careful experimentation).

1.1.3 – Descartes (1596-1650)

René Descartes was a seventeenth-century philosopher who coined the famous phrase I think, therefore I am (albeit in French). The simple meaning of this phrase is that the act of thinking proves that a thinker exists. Descartes came up with this idea when trying to prove whether anyone could truly know anything despite the fact that our senses sometimes deceive us. As he explains, “We cannot doubt of our existence while we doubt.”

1.1.4 – Wilhelm Wundt (1832-1920)

Wilhelm Wundt is considered one of the founding figures of modern psychology; in fact, he was the first person to call himself a psychologist. Wundt believed that scientific psychology should focus on introspection, or analysis of the contents of one’s own mind and experience. Though Wundt’s methods are now recognized as subjective and unreliable, he remains an important figure in the study of cognition because of his examination of human thought processes.

1.2 – Cognition, Psychology, and Cognitive Science

The term “cognition” covers a wide swath of processes, everything from memory to attention. These processes can be analyzed through the lenses of many different fields: linguistics, anesthesia, neuroscience, education, philosophy, biology, computer science, and of course, psychology, to name a few. Because so many disciplines study cognition to some degree, the term can have different meanings in different contexts. For example, in psychology, “cognition” usually refers to an information-processing view of an individual’s mental functions; in social psychology, the term “social cognition” is used to explain attitudes, attribution, and group dynamics.

These numerous approaches to the analysis of cognition are synthesized in the relatively new field of cognitive science, the interdisciplinary study of mental processes and functions.

2 – Attention

2.1 – Introduction

2.1.1 – Overview

Attention is a limited resource used to selectively concentrate on some information while ignoring other perceivable information.

Attention is the behavioral and cognitive process of selectively concentrating on a discrete stimulus while ignoring other perceivable stimuli. It is a major area of investigation within education, psychology, and neuroscience. Attention can be thought of as the allocation of limited processing resources: your brain can only devote attention to a limited number of stimuli. Attention comes into play in many psychological topics, including memory (stimuli that are more attended to are better remembered), vision, and cognitive load.

2.1.2 – Visual Attention

Generally speaking, visual attention is thought to operate as a two-stage process. In the first stage, attention is distributed uniformly over the external visual scene, and information is processed in parallel. In the second stage, attention is concentrated on a specific area of the visual scene; it is focused on a specific stimulus. There are two major models for understanding how visual attention operates, both of which are loose metaphors for the actual neural processes occurring.

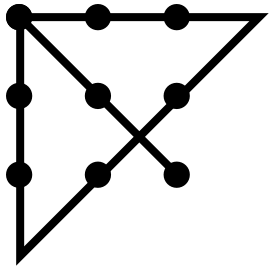

2.1.3 – Spotlight Model

Spotlight model: In the spotlight model, the focus is the central area of attention, which takes in highly detailed information. The fringe takes in less detailed information, and the margin is the cutoff for taking in any information.

The term “spotlight” was inspired by the work of William James, who described attention as having a focus, a margin, and a fringe. The focus is the central area that extracts “high-resolution” information from the visual scene where attention is directed. Surrounding the focus is the fringe of attention, which extracts information in a much more crude fashion. This fringe extends out to a specified area, and the cutoff is called the margin.

2.1.4 – Zoom-Lens Model

First introduced in 1986, the zoom-lens model inherits all the properties of the spotlight model but adds the ability to change in size. This size-change mechanism was inspired by the zoom lens one might find on a camera, and it comes with an inverse trade-off between the size of the focus and the efficiency of processing. Because attentional resources are assumed to be fixed, the larger the focus is, the more slowly that region of the visual scene will be processed, since the fixed resource is spread over a larger area.
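
The inverse trade-off can be made concrete with a toy calculation. This is a minimal sketch, assuming (as the model does) a fixed pool of attentional resources spread evenly over the attended area; the function name `processing_density` and the resource units are hypothetical illustrations, not quantities from the zoom-lens literature.

```python
def processing_density(total_resource: float, focus_area: float) -> float:
    """Attentional resource available per unit of attended area.

    Toy zoom-lens model: a fixed total resource is spread evenly over the
    focus, so density is inversely proportional to the focus area.
    """
    if focus_area <= 0:
        raise ValueError("focus_area must be positive")
    return total_resource / focus_area


TOTAL = 100.0  # hypothetical fixed capacity, in arbitrary units

for area in (1.0, 2.0, 4.0, 8.0):
    # Each doubling of the focus halves the resource per unit area.
    print(f"focus area {area}: {processing_density(TOTAL, area):.1f} units per unit area")
```

Widening the "lens" never adds resources; it only thins them out, which is why a broad focus is processed less efficiently than a narrow one.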

2.2 – Cognitive Load

Think of a computer with limited memory: you can only give it so many tasks before it is unable to process more. Brains work on a similar principle, which is captured by cognitive load theory. “Cognitive load” refers to the total amount of mental effort being used in working memory. Attention requires working memory; therefore, devoting attention to something increases cognitive load.

2.3 – Multitasking and Divided Attention

Multitasking can be defined as the attempt to perform two or more tasks simultaneously; however, research shows that when multitasking, people make more mistakes or perform their tasks more slowly. Each task increases cognitive load; attention must be divided among all of the component tasks to perform them.

Older research looked at the limits of people performing simultaneous tasks, such as reading stories while listening to and writing something else, or listening to two separate messages through different ears (i.e., dichotic listening). The vast majority of current research on human multitasking examines performance on two simultaneous tasks, usually driving while performing another task such as texting, eating, speaking to passengers in the vehicle, or talking on a cell phone. This research reveals that the human attentional system has limits to what it can process: while engaged in these other tasks, drivers make more mistakes, brake harder and later, get into more accidents, veer into other lanes, and are less aware of their surroundings.

2.4 – Selective Attention

Studies show that if there are many stimuli present (especially if they are task-related), it is much easier to ignore the non-task-related stimuli, but if there are few stimuli the mind will perceive the irrelevant stimuli as well as the relevant.

Some people can process multiple stimuli with practice. For example, trained Morse-code operators have been able to copy 100% of a message while carrying on a meaningful conversation. This relies on the reflexive response that emerges from “overlearning” the skill of Morse-code transcription so that it is an autonomous function requiring no specific attention to perform.

3 – Classification and Categorization

3.1 – Introduction

Categorization is the process through which ideas and objects are recognized, differentiated, classified, and understood. The word “categorization” implies that objects are sorted into categories, usually for some specific purpose. This process is vital to cognition. Our minds are not capable of treating every object as unique; otherwise, we would experience too great a cognitive load to be able to process the world around us. Therefore, our minds develop “concepts,” or mental representations of categories of objects. Categorization is fundamental in language, prediction, inference, decision making, and all kinds of environmental interaction.

There are many theories of how the mind categorizes objects and ideas. However, over the history of cognitive science and psychology, three general approaches to categorization have been identified: classical categorization, conceptual clustering, and prototype theory.

3.2 – Classical Categorization

This type of categorization dates back to the classical period in Greece. Plato introduced the approach of grouping objects based on their similar properties in his Socratic dialogues; Aristotle further explored this approach in his Categories treatise by analyzing the differences between classes and objects. Aristotle also applied the classical categorization scheme extensively in his classification of living beings (which uses the technique of applying successive narrowing questions: Is it an animal or vegetable? How many feet does it have? Does it have fur or feathers? Can it fly?), establishing the basis for natural taxonomy.

According to the classical view, categories should be clearly defined, mutually exclusive, and collectively exhaustive. This way, any entity of the given classification universe belongs unequivocally to one, and only one, of the proposed categories. Most modern forms of categorization do not have such a cut-and-dried system.
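
The two classical requirements can be checked mechanically. The sketch below is an illustration under stated assumptions: categories are modeled as plain sets of entity names, and the function `is_classical_scheme` and the four-entity universe are hypothetical.

```python
from collections import Counter


def is_classical_scheme(universe: set[str], categories: dict[str, set[str]]) -> bool:
    """Check the classical criteria for a set of categories.

    Mutually exclusive: no entity is listed in more than one category.
    Collectively exhaustive: every entity in the universe is in some category
    (and no category lists anything outside the universe).
    """
    counts = Counter(item for members in categories.values() for item in members)
    mutually_exclusive = all(n == 1 for n in counts.values())
    collectively_exhaustive = set(counts) == universe
    return mutually_exclusive and collectively_exhaustive


# Hypothetical universe of four entities.
universe = {"oak", "rose", "dog", "eagle"}

print(is_classical_scheme(universe, {"plant": {"oak", "rose"}, "animal": {"dog", "eagle"}}))  # True
print(is_classical_scheme(universe, {"plant": {"oak", "rose"}, "animal": {"dog", "eagle", "rose"}}))  # False: overlap
print(is_classical_scheme(universe, {"plant": {"oak"}, "animal": {"dog", "eagle"}}))  # False: "rose" left out
```

Any overlap or gap breaks the scheme, which is exactly the rigidity that most modern approaches relax.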

3.3 – Conceptual Clustering

Conceptual clustering is a modern variation of the classical approach, and derives from attempts to explain how knowledge is represented. In this approach, concepts are generated by first formulating their conceptual descriptions and then classifying the entities according to the descriptions. So for example, under conceptual clustering, your mind has the idea that the cluster DOG has the description “animal, furry, four-legged, energetic.” Then, when you encounter an object that fits this description, you classify that object as being a dog.

Conceptual clustering brings up the idea of necessary and sufficient conditions. For instance, for something to be classified as DOG, it is necessary for it to meet the conditions “animal, furry, four-legged, energetic.” But those conditions are not sufficient; other objects can meet those conditions and still not be a dog. Different clusters have different requirements, and objects have different levels of fitness for different clusters. This idea of graded fitness is developed further in fuzzy-set theory.
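
A short sketch shows why the DOG description is necessary but not sufficient. It assumes each object can be summarized as a set of feature labels; the feature sets and the helper `meets_description` are hypothetical, and only the four-feature description comes from the text.

```python
# The four-feature description of the cluster DOG, taken from the text.
DOG_DESCRIPTION = {"animal", "furry", "four-legged", "energetic"}


def meets_description(features: set[str], description: set[str]) -> bool:
    """True if the object has every feature the description requires."""
    return description <= features


# Hypothetical feature sets for three objects.
beagle = {"animal", "furry", "four-legged", "energetic", "barks"}
fox = {"animal", "furry", "four-legged", "energetic", "wild"}
goldfish = {"animal", "aquatic"}

print(meets_description(beagle, DOG_DESCRIPTION))    # True
print(meets_description(fox, DOG_DESCRIPTION))       # True, yet a fox is not a dog
print(meets_description(goldfish, DOG_DESCRIPTION))  # False
```

Failing the check rules an object out of DOG (the conditions are necessary), but passing it does not rule the object in, as the fox shows (the conditions are not sufficient).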

3.4 – Fuzzy Sets

Conceptual clustering is closely related to fuzzy-set theory, in which objects may belong to one or more concepts in varying degrees of fitness. Our example class DOG can be treated as a fuzzy set. Perhaps “fox” belongs to this cluster (animal, furry, four-legged, energetic), but not with the same degree of fitness that “wolf” does. Some objects fit a cluster better than others; because membership in a fuzzy set is a matter of degree rather than a yes-or-no question, it is not always clear-cut whether an object belongs to a cluster.
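
Graded membership is easy to represent as a number between 0 and 1. In this sketch the membership degrees are invented for illustration, and the combination rules are the standard (Zadeh) fuzzy operators: intersection takes the smaller degree and union the larger.

```python
# Hypothetical membership degrees in [0, 1]; the numbers are illustrative only.
DOG = {"wolf": 0.8, "fox": 0.5, "cat": 0.1, "beagle": 1.0}
WILD = {"wolf": 1.0, "fox": 1.0, "cat": 0.3, "beagle": 0.0}


def fuzzy_and(a: dict[str, float], b: dict[str, float]) -> dict[str, float]:
    """Standard fuzzy intersection: an object's degree is the smaller of the two."""
    return {x: min(a.get(x, 0.0), b.get(x, 0.0)) for x in a.keys() | b.keys()}


def fuzzy_or(a: dict[str, float], b: dict[str, float]) -> dict[str, float]:
    """Standard fuzzy union: an object's degree is the larger of the two."""
    return {x: max(a.get(x, 0.0), b.get(x, 0.0)) for x in a.keys() | b.keys()}


wild_dogs = fuzzy_and(DOG, WILD)
print(wild_dogs["wolf"], wild_dogs["fox"], wild_dogs["beagle"])  # 0.8 0.5 0.0
```

Each object belongs to both DOG and WILD at once, each to its own degree, rather than being forced into exactly one category.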

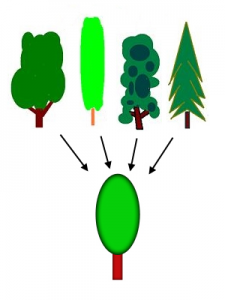

3.5 – Prototype Theory

Prototype theory: Under prototype theory, all treelike things will be judged based on an individual’s prototype of a tree. The middle category TREE is more salient than the high-level category PLANT or the low-level category ELM.
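
Prototype theory judges membership by resemblance to a prototype rather than by a checklist of required features. As a sketch only: it assumes features can be listed as labels and measures resemblance with the Jaccard overlap of feature sets, which is an illustrative choice; the prototype and candidate features are hypothetical.

```python
def similarity(a: set[str], b: set[str]) -> float:
    """Jaccard overlap between two feature sets (an illustrative similarity measure)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Hypothetical prototype of TREE held by one individual.
TREE_PROTOTYPE = {"trunk", "branches", "leaves", "tall", "woody"}

candidates = {
    "oak": {"trunk", "branches", "leaves", "tall", "woody"},
    "palm": {"trunk", "leaves", "tall", "woody"},
    "bonsai": {"trunk", "branches", "leaves", "woody", "small"},
    "fern": {"leaves"},
}

# Rank candidates by typicality: the closer to the prototype, the more "treelike".
ranking = sorted(
    candidates,
    key=lambda name: similarity(candidates[name], TREE_PROTOTYPE),
    reverse=True,
)
print(ranking)  # ['oak', 'palm', 'bonsai', 'fern']
```

The result is a graded ordering of typicality rather than a yes-or-no verdict, which is the key contrast with the classical view.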

4 – Executive Function and Control

4.1 – Introduction

Executive functions are involved in handling novel situations and regulating behavior; they mature and develop over time.

“Executive function” is an umbrella term for the management, regulation, and control of cognitive processes, including working memory, reasoning, problem solving, social inhibition, planning, and execution. The executive system is a theoretical cognitive system that manages the processes of executive function. This system is thought to rely on the prefrontal areas of the frontal lobe, but while these areas are necessary for executive function, they are not sufficient on their own.

4.2 – Role of the Executive System

The executive system is thought to be heavily involved in handling novel situations outside the domain of the routine, automatic psychological processes (i.e., ones that are handled by learned schemas or set behaviors). There are five types of situation where routine behavior is insufficient for optimal performance, in which the executive system comes into play:

- planning or decision making;

- error correction or troubleshooting;

- novel situations with unrehearsed reactions;

- dangerous or technically difficult situations;

- overcoming a strong habitual response or resisting temptation.

A prepotent response is a response for which immediate reinforcement (positive or negative) is available or is associated with that response. Executive functions tend to be invoked when it is necessary to override prepotent responses that would otherwise occur automatically. For example, on being presented with a potentially rewarding stimulus like a piece of chocolate cake, a person might have the prepotent “automatic” response to take a bite. But if this behavior conflicts with internal plans (such as a diet), the executive system might be engaged to inhibit that response.

4.3 – Anatomy of the Executive System

The prefrontal cortex: The different parts of the prefrontal cortex are vital to executive function.

Historically, the executive functions have been thought to be regulated by the prefrontal regions of the frontal lobes, but this is a matter of ongoing debate. Though prefrontal regions of the brain are necessary for executive function, non-frontal regions seem to come into play as well. The most likely explanation is that while the frontal lobes participate in all executive functions, other brain regions are also required. The major frontal structures involved in executive function are:

- Dorsolateral prefrontal cortex: associated with verbal and design fluency, set shifts, planning, response inhibition, working memory, organizational skills, reasoning, problem solving, and abstract thinking.

- Anterior cingulate cortex: inhibition of inappropriate responses, decision making, and motivated behaviors.

- Orbitofrontal cortex: impulse control, maintenance of set, monitoring ongoing behavior, socially appropriate behavior, and representing the reward value of sensory stimuli.

4.4 – Development of the Executive System

The abilities of the executive system mature at different rates, and the brain continues to mature and develop connections well into adulthood; therefore, a developmental framework is helpful. Executive-function development corresponds to the development of the growing brain; as the processing capacity of the frontal lobes (and other interconnected regions) increases, the core executive functions emerge. Growth spurts also occur in the development of the executive functions; their maturation is not a linear process.

In early childhood, the primary executive functions to emerge are working memory and inhibitory control. Cognitive flexibility, goal-directed behavior, and planning also begin to develop, but are not fully functional. During preadolescence, there are major increases in verbal working memory, goal-directed behavior, selective attention, cognitive flexibility, and strategic planning. In adolescence, these functions all become better integrated as they continue developing. During early adulthood (from ages 20 to 29) executive functions are at their peak, but in later adulthood these systems begin to decline. Cognitive flexibility is resilient, however, and does not usually start declining until around age 70.

5 – Reasoning and Inference

5.1 – Introduction

Reason is the capacity for consciously making sense of things, applying logic, establishing and verifying facts, and changing or justifying practices, institutions, and beliefs based on new or existing information. It is considered to be a definitive characteristic of human nature, and it is associated with a wide range of fields, from science to philosophy.

Reason and reasoning (i.e., the ability to apply reason) are associated with thinking, cognition, and intelligence. Like habit or intuition, reason is one of the ways that an idea progresses to a related idea, helping people understand concepts like cause and effect, or truth and falsehood. We use reason to form inferences—conclusions drawn from propositions or assumptions that are supposed to be true.

There is more than one way to start with information and arrive at an inference; thus, there is more than one way to reason. Each has its own strengths, weaknesses, and applicability to the real world.

5.1.1 – Deduction

In this form of reasoning a person starts with a known claim or general belief, and from there determines what follows. Essentially, deduction starts with a hypothesis and examines the possibilities within that hypothesis to reach a conclusion. Deductive reasoning has the advantage that, if your original premises are true in all situations and your reasoning is correct, your conclusion is guaranteed to be true. However, deductive reasoning has limited applicability in the real world because there are very few premises which are guaranteed to be true all of the time.

A syllogism is a form of deductive reasoning in which a conclusion is drawn from two premises. An example of a syllogism is, “All dogs are mammals; Kirra is a dog; therefore, Kirra is a mammal.”
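
The guarantee that deduction offers can be sketched with sets. This assumes categories are modeled as sets of individuals; the toy universe (other than Kirra) and the function `syllogism` are hypothetical.

```python
from typing import Optional

# Hypothetical toy universe: each category is the set of individuals in it.
dogs = {"Kirra", "Rex"}
mammals = dogs | {"Whiskers", "Dumbo"}  # every dog is also a mammal


def syllogism(individual: str, middle: set[str], major: set[str]) -> Optional[bool]:
    """All M are P; x is M; therefore x is P.

    Returns True when both premises hold, in which case the conclusion
    follows necessarily. Returns None when a premise fails, because the
    argument then licenses no conclusion either way.
    """
    all_m_are_p = middle <= major    # premise 1: M is a subset of P
    x_is_m = individual in middle    # premise 2: x is a member of M
    if all_m_are_p and x_is_m:
        return individual in major   # always True here: x in M and M ⊆ P
    return None


print(syllogism("Kirra", dogs, mammals))     # True
print(syllogism("Whiskers", dogs, mammals))  # None: Whiskers is not a dog
```

When both premises are true, the conclusion cannot be false; when a premise fails, the argument says nothing, even though Whiskers happens to be a mammal.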

5.1.2 – Induction

Inductive reasoning makes broad inferences from specific cases or observations. In this process of reasoning, general assertions are made based on specific pieces of evidence. Scientists use inductive reasoning to create theories and hypotheses. An example of inductive reasoning is, “The sun has risen every morning so far; therefore, the sun rises every morning.” Inductive reasoning is more applicable to the real world because it does not rely on a known claim; however, for this same reason, inductive reasoning can lead to faulty conclusions. A faulty example of inductive reasoning is, “I saw two brown cats; therefore, the cats in this neighborhood are brown.”

5.1.3 – Abduction

Abductive reasoning is based on creating and testing hypotheses using the best information available. Abductive reasoning is used in a person’s daily decision making because it works with whatever information is present, even if that information is incomplete. Essentially, this type of reasoning involves making educated guesses about the unknown from observed phenomena. Examples of abductive reasoning include a doctor making a diagnosis based on test results and a jury using evidence to pass judgment on a case: in both scenarios, there is no 100% guarantee of correctness, just the best guess based on the available evidence.

The difference between abductive reasoning and inductive reasoning is a subtle one; both use evidence to form guesses that are likely, but not guaranteed, to be true. However, abductive reasoning looks for cause-and-effect relationships, while induction seeks to determine general rules.

6 – Problem Solving

6.1 – Introduction

Solving a problem is reaching a goal state; there are many things that can stand in the way of solving a problem, but many strategies that can help.

The human mind is a problem-solving machine. You may not realize it, but as you walk through the world, you are solving problems every second—everything from “I’m running late, what’s the quickest way for me to get to class?” to “Where did I leave my wallet?” to “What should my psychology paper be about?”

In psychology, “problem solving” refers to a way of reaching a goal from a present condition, where the present condition is either not directly moving toward the goal, is far from it, or needs more complex logic in order to find steps toward the goal. It is considered the most complex of all intellectual functions, since it is a higher-order cognitive process that requires the modulation and control of basic skills. There are considered to be two major domains in problem solving: mathematical problem solving, which involves problems capable of being represented by symbols, and personal problem solving, where some difficulty or barrier is encountered.

6.2 – Barriers to Problem Solving

There are many common mental constructs that impede our ability to correctly solve problems in the most efficient manner possible.

6.2.1 – Mental Set and Functional Fixedness

A mental set is an unconscious tendency to approach a problem in a particular way. Our mental sets are shaped by our past experiences and habits. For example, if the last time your computer froze you restarted it and it worked, that might be the only solution you can think of the next time it freezes.

Functional fixedness is a special type of mental set that occurs when the intended purpose of an object hinders a person’s ability to see its potential other uses. So for example, say you need to open a can of broth but you only have a hammer. You might not realize that you could use the pointy, two-pronged end of the hammer to puncture the top of the can, since you are so accustomed to using the hammer as simply a pounding tool.

6.2.2 – Unnecessary Constraints

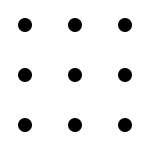

[LEFT]: The dot problem: In the dot problem, described below, solvers must attempt to connect all nine dots with no more than four lines, without lifting their pen from the paper.

[RIGHT]: The dot problem’s solution: Most solvers do not recognize that they can go outside the box and draw longer lines to connect the dots.

Unnecessary constraints are boundaries that people unconsciously place on the task at hand. A famous example of this barrier to problem solving is the dot problem. In this problem, there are nine dots arranged in a 3 × 3 square. The solver is asked to draw no more than four lines, without lifting their pen or pencil from the paper, that connect all of the dots. What often happens is that the solver assumes the lines must stay inside the square formed by the dots. The solvers are literally unable to think outside the box. Habitual, standardized ways of approaching a problem often bring mentally invented constraints of this kind.

6.2.3 – Irrelevant Information

Irrelevant information is information that is presented as part of a problem, but which is unrelated or unimportant to that problem and will not help solve it. Typically, it detracts from the problem-solving process, as it may seem pertinent and distract people from finding the most efficient solution. An example of a problem hindered by irrelevant information is this:

15% of people in Topeka have unlisted telephone numbers. You select 200 names at random from the Topeka phone book. How many of these people have unlisted phone numbers?

The answer, of course, is none of them: if they are in the phone book, they do not have unlisted numbers. But the extraneous information at the beginning of the problem makes many people believe they have to perform a mathematical calculation of some sort. This is the trouble that irrelevant information can cause.

6.3 – Problem Solving Strategies

There are many strategies that can make solving a problem easier and more efficient. Two of them, algorithms and heuristics, are of particularly great psychological importance.

6.3.1 – Heuristic

A heuristic is a rule of thumb, a strategy, or a mental shortcut that generally works for solving a problem (particularly decision-making problems). It is a practical method, one that is not a hundred percent guaranteed to be optimal or even successful, but is sufficient for the immediate goal. The advantage of heuristics is that they often reduce the time and cognitive load required to solve a problem; the disadvantage is that they cannot always be relied on to solve the problem—just most of the time.

6.3.2 – Algorithm

An algorithm is a step-by-step procedure for solving a problem. Unlike a heuristic, an algorithm that is followed correctly is guaranteed to produce the correct solution; however, it is not necessarily the most efficient way of solving the problem. Additionally, you need to know the algorithm (i.e., the complete set of steps), which is not usually realistic for the problems of daily life.

The difference between an algorithm and a heuristic can be summed up in the example of trying to find a Starbucks (or some other national chain) in a city. An algorithm would be a series of steps: “Walk in an increasingly large grid pattern around the city blocks until you find a Starbucks or you have looked at every street.” But a heuristic could simply be, “Well, usually they’re at busy intersections; I’ll just walk to the nearest busy intersection.”
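
The Starbucks example can be sketched on a small city grid. The sketch assumes the city is a 10 × 10 grid of blocks, that the "increasingly large grid pattern" means square rings around the starting block, and that distance to an intersection is counted in city blocks; all coordinates and function names are hypothetical.

```python
from typing import Optional

Point = tuple[int, int]


def grid_search(start: Point, size: int, shops: set[Point]) -> tuple[Optional[Point], int]:
    """Algorithm: check blocks in ever-larger square rings around the start.

    On a size x size grid this is guaranteed to find a shop if one exists.
    Returns (shop location or None, number of blocks checked).
    """
    checked = 0
    for radius in range(size):  # the farthest block is at most size - 1 rings away
        for x in range(start[0] - radius, start[0] + radius + 1):
            for y in range(start[1] - radius, start[1] + radius + 1):
                on_ring = max(abs(x - start[0]), abs(y - start[1])) == radius
                if on_ring and 0 <= x < size and 0 <= y < size:
                    checked += 1
                    if (x, y) in shops:
                        return (x, y), checked
    return None, checked


def busy_corner(start: Point, busy: set[Point], shops: set[Point]) -> tuple[Optional[Point], int]:
    """Heuristic: walk to the nearest busy intersection and look only there."""
    nearest = min(busy, key=lambda p: abs(p[0] - start[0]) + abs(p[1] - start[1]))
    return (nearest if nearest in shops else None), 1


busy = {(3, 3), (7, 2)}  # hypothetical busy intersections
print(grid_search((0, 0), 10, {(3, 3)}), busy_corner((0, 0), busy, {(3, 3)}))
print(grid_search((0, 0), 10, {(9, 9)}), busy_corner((0, 0), busy, {(9, 9)}))
```

When the shop sits at a busy corner, the heuristic finds it after one check while the algorithm needs 16; when the shop is tucked away at (9, 9), the heuristic fails, but the algorithm still finds it after checking all 100 blocks.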

6.3.3 – Other Strategies

There are many other ways of solving a problem. The most effective depends on the type of problem and the resources at hand.

- Abstraction: solving the problem in a model of the system before applying it to the real system.

- Analogy: using a solution for a similar problem.

- Brainstorming: suggesting a large number of solutions and developing them until the best is found.

- Divide and conquer: breaking down a large, complex problem into smaller, solvable problems.

- Hypothesis testing: assuming a possible explanation to the problem and trying to prove (or, in some contexts, disprove) the assumption.

- Lateral thinking: approaching solutions indirectly and creatively.

- Means-ends analysis: choosing an action at each step to move closer to the goal.

- Morphological analysis: assessing the output and interactions of an entire system.

- Proof: trying to prove that the problem cannot be solved. The point where the proof fails is the starting point for solving it.

- Reduction: transforming the problem into another problem for which solutions exist.

- Root-cause analysis: identifying the cause of a problem.

- Trial and error: testing possible solutions until the right one is found.
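
Means-ends analysis, from the list above, can be sketched on a toy puzzle: reach a goal number from a start number. The puzzle, its three operators, and the function `means_ends` are hypothetical; the sketch captures only the core idea of measuring the difference from the goal and choosing the action that shrinks it most.

```python
from typing import Callable, Optional

# Hypothetical operators for a toy puzzle: turn one integer into another.
OPERATORS: dict[str, Callable[[int], int]] = {
    "add one": lambda n: n + 1,
    "subtract one": lambda n: n - 1,
    "double": lambda n: n * 2,
}


def means_ends(start: int, goal: int, max_steps: int = 50) -> Optional[list[int]]:
    """Greedy means-ends analysis on the toy puzzle.

    At each step, measure the difference between the current state and the
    goal, then apply whichever operator shrinks that difference the most.
    Returns the path of states, or None if no operator makes progress.
    """
    state, path = start, [start]
    for _ in range(max_steps):
        if state == goal:
            return path
        # Pick the operator result that lands closest to the goal.
        best = min((op(state) for op in OPERATORS.values()), key=lambda s: abs(goal - s))
        if abs(goal - best) >= abs(goal - state):
            return None  # dead end: no operator reduces the difference
        state = best
        path.append(state)
    return path if state == goal else None


print(means_ends(3, 20))  # [3, 6, 12, 24, 23, 22, 21, 20]
```

Note the detour through 24: always taking the biggest step toward the goal does not guarantee the shortest route (3, 4, 5, 10, 20 takes only four steps).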

7 – Decision Making

7.1 – Introduction

Decision making is the cognitive process that results in the selection of a course of action or belief from several possibilities. It can be thought of as a particular type of problem solving; the problem is considered solved when a solution that is deemed satisfactory is reached.

7.2 – Heuristics

Heuristics are simple rules of thumb that people often use to form judgments and make decisions; think of them as mental shortcuts. Heuristics can be very useful in reducing the time and mental effort it takes to make most decisions and judgments; however, because they are shortcuts, they don’t take into account all information and can thus lead to errors.

7.2.1 – The Availability Heuristic

Lottery ticket: Lotteries take advantage of the availability heuristic: winning the lottery is a more vivid mental image than losing the lottery, and thus people perceive winning the lottery as being more likely than it is.

In psychology, availability is the ease with which a particular idea can be brought to mind. When people estimate how likely or how frequent an event is on the basis of its availability, they are using the availability heuristic. When an infrequent event can be brought easily and vividly to mind, people relying on this heuristic tend to overestimate its likelihood. For example, people overestimate their likelihood of dying in a dramatic event such as a tornado or a terrorist attack. Dramatic, violent deaths are usually more highly publicized and therefore have a higher availability. On the other hand, common but mundane events (like heart attacks and diabetes) are harder to bring to mind, so their likelihood tends to be underestimated. This heuristic is one of the reasons why people are more easily swayed by a single, vivid story than by a large body of statistical evidence. It affects decision making in a number of ways: people decide not to fly on a plane after hearing about a plane crash, but if their doctor says they should change their diet or they’ll be at risk for heart disease, they may think “Well, it probably won’t happen.” Since the former leaps to mind more easily than the latter, people perceive it as more likely.

7.2.2 – The Representativeness Heuristic and the Base-Rate Fallacy

The representativeness heuristic comes into play when people judge category membership, for example, when deciding whether or not a person is a criminal. An individual object or person has a high representativeness for a category if that object or person is very similar to a prototype of that category. When people categorize things on the basis of representativeness, they are using the representativeness heuristic. While it is effective for some problems, this heuristic involves attending to the particular characteristics of the individual, ignoring how common those categories are in the population (called the base rates). Thus, people can overestimate the likelihood that something has a very rare property, or underestimate the likelihood of a very common property. This is called the base-rate fallacy, and it contributes to many negative stereotypes based on outward appearance. Representativeness explains many of the ways in which human judgments break the laws of probability.
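
Bayes’ rule shows how much the base rate matters. The numbers here are hypothetical: a category that includes only 1% of the population, a description that fits 90% of its members, and the same description fitting 10% of everyone else.

```python
def posterior(base_rate: float, hit_rate: float, false_alarm_rate: float) -> float:
    """P(category | description) via Bayes' rule.

    base_rate:        P(category) in the population
    hit_rate:         P(description | category)
    false_alarm_rate: P(description | not category)
    """
    match_in_category = base_rate * hit_rate
    match_outside = (1 - base_rate) * false_alarm_rate
    return match_in_category / (match_in_category + match_outside)


# Hypothetical numbers: a rare category (1%) whose members fit a description
# 90% of the time, while 10% of everyone else fits it too.
p = posterior(0.01, 0.90, 0.10)
print(f"{p:.1%}")  # 8.3%
```

Judging by representativeness alone amounts to reading off the 90% fit; folding in the base rate drops the probability to about 8%, because the many non-members who happen to fit the description vastly outnumber the few members.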

7.2.3 – The Anchor-and-Adjustment Heuristic

Anchoring and adjustment is a heuristic used in situations where people must estimate a number. It involves starting from a readily available number (the “anchor”) and shifting either up or down to reach an answer that seems plausible. However, people typically do not adjust far enough away from the anchor, so the anchor contaminates the estimate even when it is clearly irrelevant. In one experiment, subjects watched a number being selected from a spinning “wheel of fortune.” They had to say whether a given quantity was larger or smaller than that number. For instance, they were asked, “Is the percentage of African countries that are members of the United Nations larger or smaller than 65%?” They then tried to guess the true percentage. Their answers correlated with the arbitrary number they had been given.

Insufficient adjustment from an anchor is not the only explanation for this effect. The anchoring effect has been demonstrated by a wide variety of experiments, both in laboratories and in the real world. It persists when subjects are offered money as an incentive to be accurate, and when they are explicitly told not to base their judgment on the anchor. The effect is stronger when people have to make their judgments quickly. Subjects in these experiments also lack introspective awareness of the heuristic: they deny that the anchor affected their estimates.

7.2.4 – The Framing Effect

The framing effect is a phenomenon that affects how people make decisions. It is an example of cognitive bias, in which people react to a choice in different ways depending on how it is presented (e.g., as a loss or a gain). A well-known example of the framing effect comes from a 1981 experiment in which subjects were asked to choose between two treatments for 600 imaginary people affected by a deadly disease. Treatment A was predicted to result in 400 deaths, whereas Treatment B had a one-third chance that no one would die and a two-thirds chance that everyone would die. This choice was then presented to participants with either positive framing (how many people would live) or negative framing (how many people would die):

Positive framing: "Treatment A will save 200 lives; Treatment B has a one-third chance of saving all 600 people and a two-thirds chance of saving no one."
Negative framing: "Treatment A will let 400 people die; Treatment B has a one-third chance of no one dying and a two-thirds chance of everyone dying."

Treatment A was chosen by 72% of participants when it was presented with positive framing, but by only 22% of participants when it was presented with negative framing, even though it was the same treatment both times. Framing can therefore substantially change the decisions people make. People tend to be risk-averse when a choice is framed as a gain: they prefer a sure gain to a gamble. When a choice is framed as a loss, however, they tend to be risk-seeking and will gamble to avoid a certain loss (e.g., choosing Treatment B when presented with negative framing).
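The shift in preferences is striking because the two treatments are equivalent in expected outcome. The short calculation below, a minimal sketch using the one-third/two-thirds probabilities of the classic version of the problem, computes the average number of people saved and lost under each treatment. Exact fractions are used so the comparison is not blurred by rounding; the variable names are our own.

```python
from fractions import Fraction

TOTAL = 600  # people affected by the disease

# Treatment A: a certain outcome, 400 die, so 200 are saved
saved_a = 200

# Treatment B: a gamble, 1/3 chance all 600 are saved, 2/3 chance no one is saved
outcomes_b = [(Fraction(1, 3), 600), (Fraction(2, 3), 0)]
expected_saved_b = sum(p * saved for p, saved in outcomes_b)

print("Saved:  A =", saved_a, "| B =", expected_saved_b, "on average")
print("Deaths: A =", TOTAL - saved_a, "| B =", TOTAL - expected_saved_b, "on average")
```

Both treatments save 200 people and lose 400 on average. The only real difference is that A is certain and B is a gamble, so the reversal in choices between the two framings reflects the wording rather than the outcomes.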

8 – Social Cognition

8.1 – Introduction

Social cognition is the encoding, storage, retrieval, and processing of information about members of the same species; from a human perspective, it is simply the ability to think about and understand others. Social cognition is a specific approach within social psychology (the area of psychology that studies how people's thoughts and behaviors are influenced by the presence of others) that uses the methods of cognitive science. Because of this, it places a heavy emphasis on information processing: How do people process information about the people around them, and how does that affect their own perceptions of the world?

8.2 – Schemas

In schema theory, when we see or think of a concept, a mental representation or “schema” is activated that brings to mind other related information, usually unconsciously. Through schema activation, judgments are formed based on internal assumptions in addition to information actually available in the environment.

Similarly, a notable theory of social cognition is social-schema theory. This theory suggests that we have mental representations for specific social situations. For example, if you meet your new teacher, your “teacher schema” may be activated, and you may therefore automatically associate this person with wisdom and authority if that is how you have experienced past teachers.

When a schema is more “accessible,” this means that it can be more quickly activated and used in a particular situation. Two cognitive processes that increase the accessibility of schemas are salience and priming. In social cognition, salience is the degree to which a particular social object stands out relative to other social objects in a situation. The higher the salience of an object, the more likely that schemas for that object will be made accessible. For example, if there is one female in a group of seven males, female gender schemas may be more accessible and influence the group’s thinking and behavior toward the female group member. “Priming” refers to any experience immediately prior to a situation that causes a schema to be more accessible. For example, watching a scary movie late at night might increase the accessibility of frightening schemas, increasing the likelihood that a person will perceive shadows and background noises as potential threats.

8.3 – Cultural Differences in Social Cognition

Social psychologists have become increasingly interested in the influence of culture on social cognition. Although people of all cultures use schemas to understand the world, the content of our schemas has been found to differ for individuals based on their cultural upbringing. For example, one study interviewed a Scottish settler and a Bantu herdsman from Swaziland and compared their schemas about cattle. Because cattle are essential to the lifestyle of the Bantu people, the Bantu herdsman's schemas for cattle were far more extensive than the schemas of the Scottish settler. The Bantu herdsman was able to distinguish his cattle from dozens of others, while the Scottish settler was not.

Studies have found that culture influences social cognition in other ways too. In fact, cultural influences have been found to shape some of the basic ways in which people automatically perceive and think about their environment. For example, a number of studies have found that people who grow up in East Asian cultures such as China and Japan tend to develop holistic thinking styles, whereas people brought up in Western cultures like Australia and the USA tend to develop analytic thinking styles. The typically Eastern holistic thinking style is one in which people focus on the overall context and the ways in which objects relate to each other. For example, if an Easterner were asked to judge how a classmate is feeling, she might scan everyone's face in the class and then use that information to judge how the individual is feeling. On the other hand, the typically Western analytic thinking style is one in which people focus on individual objects and neglect to consider the surrounding context. For example, if a Westerner were asked to judge how a classmate is feeling, she might focus only on the classmate's face in order to make the judgment.

8.4 – Neuroscience of Social Cognition

Phineas Gage: Phineas Gage's brain damage became an important case study in the field of psychology. Damage to his frontal lobe from a tamping iron changed his social behavior, suggesting to psychologists that social behavior has a neural basis.

People with autism, psychosis, antisocial personality disorder, and other disorders show differences in social behavior compared to their unaffected peers. Whether social cognition is entirely underpinned by neural mechanisms is still an open question. However, cases like Phineas Gage’s suggest that there is some kind of relationship between neural activity and social behavior.

Originally published by Lumen Learning – Boundless Psychology under a Creative Commons Attribution-ShareAlike 3.0 Unported license.