By Dr. James Fieser / 04.01.2011

Professor of Philosophy

University of Tennessee at Martin

Introduction

Larry Phillips Jr. & Emil Mătăsăreanu (the High Incident Bandits), 1997 Robbery / Creative Commons

One February morning two gunmen wearing black ski masks entered a Los Angeles bank, fired their machine guns and ordered everyone to the ground. Bank personnel gave them $300,000, but when leaving they were met by police who had already surrounded the building. Undaunted, the two men stepped into the street, firing at the officers. The police responded in kind, but their bullets bounced off the robbers, who were covered head to toe with body armor. More officers were called in as the robbers casually walked down the road, shooting at everything in sight. Soon 200 police were on the scene, but even then they were so overpowered by the robbers that they had to borrow additional guns and ammunition from a local gun store. The robbers fired over 1,200 bullets during the 45-minute battle. Eventually, one gunman, who was shot 11 times, ended his life with a bullet to his head. The other surrendered after being shot 29 times, but he died five minutes later from blood loss. Miraculously, no one else died, although many were injured.

In a bizarre postscript to this story, three years later family members of the second gunman took the police to court, claiming that officers intentionally let him bleed to death. During the trial, the lawyer for the gunman’s family conceded that the robber “did a very bad thing. He robbed a bank and shot a lot of people.” Nevertheless, the attorney argued, the police had a responsibility to get him medical treatment, which they failed to do. In a vote of 9 to 3, the jury found that the gunman’s rights were not violated.

This story presents us with an intricate web of ethical concepts: bad behavior, devastating consequences, moral outrage, rights, responsibilities, selfishness and greed. It’s one thing for us to intuitively feel that the gunmen were immoral, but it’s another to say clearly what that immorality consisted of. For 2,500 years philosophers have been trying to unravel the mysteries of ethical judgments, and their theories differ dramatically. In this chapter we will look at some of the more famous of these theories.

MORAL OBJECTIVISM AND RELATIVISM

An initial puzzle about morality concerns its ultimate source: where does morality come from? Do we create it or is it etched into the stars? Imagine that in a distant country two bank robbers in body armor blasted off 1,200 bullets; when they finally surrendered the Mayor gave the gunmen the Keys to the City in reward for their outstanding display of strength and courage. How would you respond to this story? On the one hand you might say that the Mayor was crazy: what the gunmen did was simply wrong and their actions should be punished, not rewarded. There’s an unchanging standard of justice that everyone must abide by, wherever they are in the world. This is the position of moral objectivism, which, at the risk of oversimplification, has three main features that many traditional philosophers embrace. First, morality is objective in the sense that ultimate moral standards exist independently of humans. Often this is described as a spiritual realm which is fixed and permanent, unlike the physical world of human society that is in constant flux. Second, moral standards don’t change throughout time; they are eternal. The true standards of morality that people followed thousands of years ago still apply today and will apply in the future. Third, moral standards are universal in the sense that they apply to everyone. No one can claim to be immune from the demands that true morality places on us all.

On the other hand, upon hearing this revised story about the gunmen, you might insist that the Mayor did nothing wrong by rewarding them: that’s just how they do things in that country. We have one concept of justice, they have another; neither way is superior, they’re just different. This is the position of moral relativism, which also has three key ingredients. First, morality is a purely human invention. People create morality, and it by no means exists independently of humans, such as in some higher spiritual realm. Second, moral standards change throughout time and from country to country. Wherever we go in the world we will find radically different moral values. Third, moral standards do not apply universally to all people; they are instead relative to our unique situations.

Moral Objectivism

Moral objectivism and moral relativism each have long and distinguished histories. The most important proponent of objectivism was Plato (428–348 BCE), who developed a two-tiered picture of the universe. The lower level consists of physical things like rocks, trees, houses and physical human bodies. Things at this level are a major disappointment; they shift and change, they cause misery, and they distract us from genuine truth. The higher level is spiritual in nature, and consists of unchanging truths, particularly those of mathematics and morality. When we behave morally, we are in fact molding our actions after the higher spiritual standard of justice, that is, after the forms, as Plato calls them. When acting justly, for example, we are in fact setting aside human conventions and grasping the ultimate form of justice. In addition to justice, there are forms of charity, courage, wisdom, and perfect goodness.

According to Plato, when relativists claim that morality is an ever-changing human creation, they are simply blinded by the corrupt physical world and incapable of mentally perceiving the forms. Relativists are not entirely to be blamed, he argues, because spiritual vision is a difficult thing to acquire. We need to be awakened to the flaws in the world around us, and use a special and often hidden mental capacity to access the spiritual realm. As radical as Plato’s view of morality is, it was nevertheless embraced by philosophers throughout history, and it only declined in popularity in the past 200 years. Its special appeal is its emphasis on the universal nature of morality. All of us at one point or another in our lives have felt that some moral principles apply to everyone. What Hitler did to the Jews, for example, was simply wrong, and no justification can be made on the grounds of the German culture of that time.

While Plato’s theory of the forms is one of the more philosophically sophisticated accounts of moral objectivism, there are other suggested ways of grounding morality in an objective reality. One of these is religious: morality is a creation of God’s will and is commanded by him for all of us to perform. God’s very nature, then, is the source and substance of morality; in a sense, the objective nature of morality is God’s mind. While believers in many religious traditions have embraced this theory, it has an important obstacle: even if God does exist, it’s not clear how we know what moral values he has commanded. Some suggest that God speaks to us directly, or that God has given us a moral conscience, or that God has inscribed moral rules into scripture. Each of these avenues of divine revelation, though, requires a great deal of trust. Should we believe someone who says that God told him to bomb an abortion clinic? Should we trust a political leader whose conscience tells him to wage a religious war against an enemy country? Should we trust a religious leader’s scriptural interpretation that some races are superior to others? These are extreme examples, but they suggest that claims to know God’s mind cannot be taken at face value. Rather, we often evaluate claims of divine revelation based on an independent standard of rightness that has nothing to do with God or religion.

Moral Relativism

Moral relativism is the offspring of philosophy’s long and controversial skeptical tradition. According to the Greek skeptic Sextus Empiricus, if we want to achieve peace of mind, we should reject extreme notions of objective moral truth. Look around the world, he says, and you’ll find nothing but conflicting opinions about morality. One philosopher says that morality consists of pursuing pleasure, another says that it consists of resigning oneself to God’s will. Equally conflicting are people’s actual moral actions. One society eats their dead relatives, another feeds them to vultures. One society practices incest, another condemns it. He describes here conflicting attitudes about sexual morality:

We consider it shameful to have sex with a woman in public, but it is not thought so by some of the people from India. They had sex publicly with indifference, like the philosopher Crates, as the story goes. Additionally, prostitution is shameful and disgraceful among us, but for many of the Egyptians it is highly respected. They say that women with the greatest number of lovers wear an ornamental ankle ring as a proud token of their accomplishments. Some of the girls marry after collecting a dowry beforehand by means of prostitution. We find the Stoics maintaining that it is acceptable to keep company with a prostitute or to live on the profits of prostitution. [Outlines of Skepticism, 3:24]

According to Sextus, moral diversity is everywhere on virtually every issue. What, he asks, gives us the authority to prefer one person’s or society’s views over another? We’re in no position to make the judgment call. The safe thing to do is to doubt the existence of objective morality, and see that all moral issues “are matters of convention and are relative.”

The central point here is the argument from cultural variation, which is this:

(1) If morality is objective, then we would not see widespread cultural variation in moral matters.

(2) There is in fact widespread cultural variation in moral matters.

(3) Therefore, morality is not objective.
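
Stated schematically, the argument has the pattern that logicians call modus tollens. The sketch below is only an illustration: the letters O and V are labels introduced here, not notation used by Sextus or elsewhere in this chapter. O stands for "morality is objective" and V for "there is widespread cultural variation in moral matters."

```latex
% Illustrative schema; O and V are labels assumed for this sketch.
% O: morality is objective
% V: there is widespread cultural variation in moral matters
\begin{align*}
&(1)\quad O \rightarrow \neg V \\
&(2)\quad V \\
&(3)\quad \therefore\ \neg O
\end{align*}
```

Because this pattern is logically valid, anyone who wants to resist the conclusion has to reject at least one of the two premises.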

In Premise 1 the skeptic asks us if the notion of objective morality makes any sense if people always behave in radically different ways. Objective morality may indeed be a pretty thought, but if it doesn’t reflect reality, then it is only a pretty thought. According to Premise 2, anthropological studies clearly show the enormous diversity in moral attitudes throughout the world. We regularly read about strange moral practices of foreign cultures, such as women who are stoned to death for having premarital sex. This makes us cringe on our side of the world, but people in the foreign country itself believe they are defending moral values that hold together their society. We have no choice, the skeptic concludes, but to reject moral objectivism and accept moral relativism as the only real alternative.

How might moral objectivists respond to this argument? Regarding Premise 1, according to objectivists, some people and societies are evil, and it’s no surprise that they concoct their own self-serving standards. Human nature is inherently flawed, perhaps because we are constructed out of flimsy material stuff, or perhaps because we are psychologically crippled. In either case, there is a barrier between us and the higher realm of moral truth, and weak people will wallow in their human-made filth.

Regarding Premise 2, the objectivist continues, skeptics have greatly exaggerated the diversity that we see in moral matters. There is indeed great variation in cultural practices around the world, but how much of this is really moral? Many differences involve standards of mere taste or etiquette. For example, at great length Sextus describes strange funeral practices. There’s no question that these rituals are important in their respective societies, but they are matters of taste which cultures can rightfully determine for themselves. In their own ways, they all show an underlying respect for the deceased. Further, some values seem to be consistently endorsed by all cultures around the world, such as prohibitions against murder. It’s hard to take seriously any reports of societies that entirely lack this value. Thus, according to the objectivist, the entire argument from cultural variation falls flat.

The Moderate Compromise

The objectivist and relativist positions described above are rather extreme positions: either all moral values are unchanging, or all moral values are ever-changing human inventions. In spite of the enormous popularity of these extreme views, it seems that concessions are needed on both sides. Contrary to objectivists, cultural attitudes about some serious issues really do vary greatly, and it’s unreasonable to hunt for objective values that underlie these. For example, some societies feel that premarital sex and homosexuality are perfectly permissible; in other societies these are capital offences. However, contrary to relativists, some values appear consistently in human societies. This is so with prohibitions against murder and stealing. These values may not necessarily be grounded in the spirit-realm, but they are indeed universally endorsed within human societies – even though they are not always followed.

There are two ways that we might mediate between extreme objectivism and extreme relativism. The first assumes that there are at least some objectively-grounded moral standards, which are fixed and unchanging. These standards, though, are few in number and very general in nature. Examples are that we should educate our children, avoid harming others, and help others in need. However, it is left to individual societies to interpret these abstract standards and derive more specific moral rules from these. For example, when attempting to clarify the moral obligation to educate our children, some societies may mandate compulsory public education as a way of teaching children; others may assign the task to parents in the home. Similarly, when interpreting the general moral standard that we should avoid harming others, societies might disagree as to what counts as “harm”. There is thus objectivity, permanence and universality with the most general moral principles. At the same time, though, there is relativity with the specific moral rules that we derive from these.

The second way of mediating between extreme objectivism and relativism is to emphasize the role of human social instincts. Assume for the moment that the skeptic is correct: there is no higher independent realm of morality and the concept is only a philosophical fable. Assume further that some key moral values are purely social creations and vary radically in different cultures – such as rules about sexual activity. Nevertheless, some moral values appear to be uniform, such as the need to avoid harming others and the need to show kindness and charity. These might be the result of social instincts that have formed in humans over thousands of years of biological evolution. We are social animals by our nature, and thus must have some instinctively-driven way of living in peace with each other. Some moral values then might be grounded in instinct, and so will appear uniform from person to person and throughout the world. Technically speaking, though, these values would not be eternal and unchanging: just as they emerged through evolution, they may just as quickly disappear through future evolutionary development. Nevertheless, for the time being there is some naturally-grounded uniformity that stands midway between the objectivist and relativist extremes.

Taken separately or together as a package, the above two compromises may help bridge the gap between our conflicting intuitions about objectivism and relativism. Ultimately, though, neither completely settles the issue, since at some level we’d still want to ask whether any moral values are completely permanent and unchanging.

SELFISHNESS

When people rob banks, we presume that they are motivated by selfishness. In fact, much of our behavior throughout the day is motivated by self-oriented desires – to eat, sleep, relax, make money, impress other people. The interesting question, though, is whether all of our human actions are ultimately self-oriented. A postman was once delivering mail on his usual route when he saw smoke coming from a house. Knowing that an elderly man lived there, he knocked down the door and entered the house which, as he saw, had just caught fire from a kerosene heater. He dragged the old man towards the door, but both were overcome with smoke. Firefighters soon showed up and carried them to safety, although both were permanently injured. What motivates ordinary people to perform heroic deeds like this? One explanation is that people aren’t 100% selfish, and there is some instinctive capacity within human nature to help others irrespective of our private interests. That is, we have an instinct to act altruistically. But wait, the critic might say. Just because a postman rescues someone from a burning building, that doesn’t mean that he acted purely altruistically. Maybe he knew that he’d instantly become a hero, and he thought it was worth the risk to receive that honor. Maybe he thought that it was part of his postal job description, and he didn’t want to be reprimanded by his boss. His actions may have been motivated by entirely selfish reasons, regardless of how altruistic they appear on the surface. That is, human nature may be entirely egoistic. The dispute is one of human psychology, and, more precisely, the competing principles are these:

Psychological egoism: human conduct is selfishly motivated, and we cannot perform actions from any other motive.

Psychological altruism: human beings are at least occasionally capable of acting selflessly.

The question is certainly an interesting one, but what does it have to do with morality? The answer is embedded in a basic moral principle: ought implies can. Decoded, this means that we are morally obligated to do only those things that we are capable of doing. It makes sense to say that I’m morally obligated to avoid shoplifting since that is within my power. However, it does not make sense to say that I personally am responsible for curing cancer; lacking the required biochemical expertise, it’s not within my power to even attempt this, let alone accomplish this. Suppose, now, someone said that I have an obligation to donate to charity from completely altruistic motives – with no expectation of a tax break or any other benefit. Fulfilling that obligation depends in part on whether I’m psychologically capable of acting altruistically. This, though, is precisely what the egoist would deny. In short, if I am locked into behaving selfishly, then I have no moral responsibility to ever behave altruistically.

The Case for Egoism

One of the more notorious defenders of egoism was British philosopher Thomas Hobbes (1588–1679). Hobbes believed that human beings are biological machines that follow the same kind of physical laws that make clocks run. Our thought processes and actions are governed by our physiological makeup, and if we want to truly understand why we behave as we do, we must look to biology. He describes in detail how our various biologically-based emotions drive our conduct, and he makes clear that selfishness is the dominant motive behind our choices. Our instinctive selfishness emerges most dramatically, according to Hobbes, when life’s necessities such as food and shelter are in scarce supply and we quickly compete to acquire them before our rivals do.

When exploring the issue of egoism, Hobbes looks at two specific motives that we commonly think of as being altruistic: pity and charity. For example, suppose that, out of a genuine sense of pity and charity, the postman rescued the old man from the burning house. Ordinarily, we’d assume that these feelings are directed towards the victim, and not a reflection of the postman’s selfishness. However, according to Hobbes, even when we think such actions are altruistic, there is really a hidden selfish motivation. With any act of pity, we imagine ourselves in a position of distress. The postman thus imagined himself in the burning house and, based on that fictitious mental image, rescued the old man. With charity, Hobbes argued, we take special delight in exercising our power over other people. The postman thus wanted to feel the power of preserving the old man’s life, and so he attempted the rescue. Although we may not agree with Hobbes’s specific psychological explanations of pity and charity, he nevertheless gives us a way of understanding so-called altruistic actions, namely, seeing them all as arising from hidden selfish motivations. Following Hobbes’s lead, we can construct a basic argument for psychological egoism here:

(1) If we can adequately explain some phenomenon with one principle rather than two, then we should reject the second principle.

(2) So-called altruistic behavior can be adequately explained through psychological egoism.

(3) Therefore we should reject the principle of psychological altruism.

Premise 1 is a basic principle of simplicity, which is sometimes called Ockham’s Razor, named after the medieval philosopher William of Ockham who regularly relied on it. Premise 2 maintains that we don’t really need the principle of instinctive altruism. This claim is particularly compelling since we already accept that selfishness is indeed a motivating principle of human nature. The question is whether we need to introduce another to explain so-called altruistic behavior. Hobbes says that we don’t.

The Case for Altruism

When Hobbes’s writings appeared, readers of the time were horrified by his defense of psychological egoism. In fact, Hobbes forced the issue and made it almost a requirement for moral philosophers after him to take a stand on the issue one way or another. One such philosopher was British clergyman Joseph Butler (1692–1752) who defended the principle of instinctive altruism against Hobbes’s attack. According to Butler, Hobbes’s fundamental error was oversimplifying the basic principles of human motivation. It looks neat and tidy to reduce everything to a single motive of selfishness as Hobbes did, but, Butler says, human nature is too complex to allow for this easy solution. Butler thus attacks Premise 2 in the above argument – while not disputing the principle of simplicity in Premise 1. He notes two specific errors of oversimplification in Hobbes’s theory.

First, according to Butler, Hobbes oversimplifies the notion of “selfishness” – or “self-love” as he also calls it. Yes, many of our actions are motivated specifically by self-love. However, some human inclinations might superficially appear to be the same as self-love, such as hunger and esteem, although they are actually different inclinations. Suppose, for example, that I am hungry and I eat a sandwich. Suppose that I even enjoy the experience of eating the sandwich. My motivation here is simply hunger and not self-love, since even if I hate myself I would still be motivated to eat and enjoy the sandwich. Similarly, with esteem, even if I hate myself, I could still desire to be valued by other people. So, according to Butler, Hobbes’s mistake was to reduce all self-oriented motives to the single theme of self-love. The egoist might accept Butler’s criticism and state more cautiously: all human action is motivated by a group of self-oriented motives. This revised version is still very much egoistic, and it denies instinctive altruism. However, by conceding this point, serious harm is done to the argument from simplicity. The main appeal of psychological egoism is its ability to offer a simplified account of human conduct. Now, though, we are not talking about a single selfish motivation, but a collection of self-oriented motives. At this stage, what would it hurt to toss in a couple of altruistic motives as well?

Second, Butler argues that we have an instinctive motive of benevolence that underlies human friendship, compassion, love, parental inclinations and other feelings. This becomes evident when we examine Hobbes’s egoistic interpretation of charity. If charity were motivated solely by our delight in exercising power over others, then how could we distinguish charity from sadistic cruelty, which would spring from precisely the same motive? The postman, for example, didn’t have to risk his own life to enjoy having power over the old man. He could have just stood there and watched, all the while delighting in the fact that he had the power to let the old man die. Obviously, says Butler, there’s something much more to charity than a power trip, and that extra something is instinctive benevolence. It’s simply not that easy to explain away human kindness.

Egoism and the Struggle for Survival

Evolutionary biologists from Darwin onwards have been captivated by the egoism-altruism debate. The theory of evolution is based on the assumption that organisms struggle to survive: those with the best survival skills live, and the rest eventually die. Selfishness is an integral part of survival, and it is difficult to find a place for self-sacrifice and kindness in the evolutionary struggle. The fact is, though, that people do sometimes behave kindly towards others—at least appearing to be altruistic—and this also requires an evolutionary explanation.

Evolutionary biologists largely reject the purest form of altruism, namely 100% selfless acts of kindness. Here’s why. Suppose that I and a fellow caveman are foraging through the woods looking for food. The pickings are slim, but at the same time we both spot a single apple on a tree. Suppose further that I have some instinct of genuine altruism in me, but the caveman is purely egoistic. So, moved by sympathy, I give the caveman my apple. Sadly, I starve to death and thus fail to pass my altruistic instinct on to my offspring. Meanwhile the egoist wines and dines a cavewoman with the apple, they mate, and he passes his egoistic traits on to their children. The moral of the story is that genuine altruism is not conducive to survival, and if any early humans ever had that trait, it would have been eliminated from the human gene pool long ago. But even if we reject genuine altruism from an evolutionary standpoint, there are still two types of benevolent behavior that evolution can explain: that which we regularly show towards our family, and that which we occasionally show towards strangers.

Regarding the first, apparent altruism towards family members might be explained through the notion of kin selection. It begins with the fact that there is an increased survival rate for organisms that care for their kin. Those that don’t will die out. We’ve thus evolved so that I am instinctively inclined to improve the chances for survival of my family or tribe, and not just my own survival. I am fighting to preserve my genes, and not simply myself. It then may be very natural for me to make major sacrifices for my children who will perpetuate my genes. As to the second, apparent altruism towards strangers might be explained through the concept of reciprocal altruism. On this view I am essentially selfish, but I will be kind to other people when it aids my own survival in the long run. This involves figuring out who is on my side, who is against me, and making alliances with the right group at the right time. I may not be fully conscious of exactly why I make the partnerships that I do. One evolutionary biologist argued that even the revered Mother Teresa benefited personally from her association with the Catholic Church, and her seemingly altruistic actions were a part of the arrangement. This is far removed from the genuine altruism that Butler envisioned, but it nevertheless preserves the idea of kindness towards others within the framework of human evolution.

REASON AND EMOTION

Sometimes people don’t want to talk about politics or religion because of the strong emotions that those topics generate. Many moral controversies are also like this, such as abortion, homosexuality, capital punishment, and animal rights. Many animal rights advocates, for example, are so enraged by current practices towards animals that they will vandalize fur stores, break into laboratories and set free animals used in experiments, or picket slaughterhouses. The animal rights group PETA – People for the Ethical Treatment of Animals – launched a project called “Holocaust on your Plate.” One of its posters showed Nazi concentration-camp prisoners crammed together in bunks and compared this to chickens jam-packed into cages on a modern factory egg farm. Enraged Jewish groups denounced the project for trivializing the suffering of Jewish victims. Without question there is some connection between morality and emotion. Some philosophers have gone so far as to say that moral assessments are only expressions of our feelings. On the other hand, other philosophers have staunchly maintained that morality is fundamentally a matter of human reason and not emotion. For them, emotional appeals, such as those by PETA, are completely irrelevant to moral decision making, and we need to approach the subject calmly and rationally.

Moral Reasoning: Detecting Truth and Motivating Behavior

Many philosophers identify two distinct features of moral reasoning: (1) it discovers moral truths, and (2) it motivates us to abide by moral standards. Concerning the first, philosophers have proposed different ways of discovering moral truths. We’ve already looked at Plato’s view that moral truths reside in the spirit-realm, which we access through our reason. For Plato, when I try to access the spirit-realm, I must look beyond the distorted physical world down here on earth and have something like a mystical experience. I mentally reach into the heavens and grasp moral notions, in much the same manner as religious believers look to the heavens for divine guidance. Many philosophers agreed with Plato that moral reasoning was a sort of religious experience, but others downplayed that angle. Instead, they argued, moral reasoning is an ability to recognize moral laws that God implanted in human nature. There is no mystical experience, but only an awareness of instinctive moral principles. Moral intuitions, they maintained, are much like inborn conceptions of mathematics: to grasp them we only need to be attentive to the voice of reason. Whether acquired through a mystical experience or a natural intuition, though, the point is still the same: moral reasoning gives us access to moral truths.

Consider next the second feature of moral reasoning – that it motivates us to abide by moral values. It doesn’t do me much good to discover moral truth and devise a moral game plan if in the end I don’t follow these things. Everything in morality leads to proper behavior, and without the right motivation, my best reasoning on an issue remains only theoretical. Many philosophers argue that moral reasoning in itself motivates us to do the right thing. There are in fact several motivations that influence my conduct. Suppose that I am considering lying to my boss about being sick, just so I can get the day off. My motivations for skipping might be laziness and the desire to do something more entertaining. My motivation for not skipping might be fears about getting caught. Moral reasoning, though, puts forward one additional motivation: reason tells us that it is wrong to lie. To the extent that human beings are rational animals, I will be at least somewhat motivated by this rationally-supplied moral principle. Some philosophers felt that moral reasoning is the only motive that matters when making a genuinely moral choice. Emotional motivations, they argue, lead us away from the right course of action.

The Is-Ought Problem

The Scottish philosopher David Hume (1711–1776) was among the first philosophers to seriously challenge the notion of moral reasoning. He was familiar with standard theories on the subject, but felt that morality was so intertwined with emotion that there was almost no room left for reason. He targeted both claims above, that human reason discovers eternal moral truths and also motivates us to be moral. He argued on the contrary that human emotion is responsible for both of these tasks. Regarding principle 1 – the rational discovery of moral truth – Hume asks you to perform a mental experiment. Think about any horrible deed, such as a murder, and try to discover any special fact about it that constitutes its immorality. After all, the job of reason is to discover facts, and if there was some factual moral truth surrounding murder, surely your reason would spot it. What, though, do you actually find? You will certainly not perceive any eternal moral truth at play. All you will see is an arrangement of bodily movements, feelings, motives and thoughts, but you will never rationally detect the immorality itself. Instead, he argues, “you must turn your reflection into your own breast,” and find an emotion of disapproval within you. Your emotional reaction alone, then, constitutes the moral assessment regarding the murder. Hume’s basic argument is this:

- If reason discovered moral truths, then we would be able to identify a uniquely immoral factual quality in an action such as murder.

- We cannot identify any such factual quality, but will only find our emotional reaction.

- Therefore, reason does not discover moral truths, and, instead, all moral assessments are emotional reactions.

Moral assessments, he believes, are much like our evaluations of the artistic beauty that we might find in a painting: “it lies in yourself, and not in the object.”

Regarding principle 2 – that reason motivates us to be moral – Hume argues that this position rests on bad psychology. Human reason has no ability whatsoever to influence human actions; reason simply provides us with facts, but does not tell us what we should actually do with them. Imagine that I could prevent a nuclear explosion, but in the process of disarming the bomb I would get a tiny scratch on my finger. Of course, I should go ahead and stop the explosion, regardless of the scratch. However, Hume argues, it is our emotions that incline us to make this choice; reason doesn’t care one way or the other. I must first desire to place the safety of the world above the safety of my finger. So too with all moral choices, such as donating to charity. Pile on as many reasons for charitable behavior as you like: charity is an intuitive obligation; charity follows from the Golden Rule; charity makes other people happy. We still won’t be motivated to act charitably unless we are emotionally inclined to do so.

Hume’s attack on moral reasoning is encapsulated in the famous motto that we cannot derive “ought” from “is.” That is, I can’t simply conclude that I have a moral obligation (an “ought” statement) on the basis of rational facts that I’m presented with (“is” statements). Suppose that I made the following argument:

Premise: charity is a human instinct.

Conclusion: therefore we ought to be charitable.

My premise is a factual statement about what is the case, and my conclusion is a statement of obligation about what we ought to do. The problem, as Hume sees it, is that even if charity is a human instinct, this does not necessarily mean that we are morally obligated to donate to charity. Vengeance is also a human instinct, but it is one which we should suppress. Moral obligation comes from our emotional reactions, and not from a mere presentation of facts.

Moral Utterances Express Feelings

A.J. Ayer

British philosopher Alfred Jules Ayer (1910-1989) agreed with Hume, but felt that the attack on moral reasoning could be pushed a little further. Ayer asks us to distinguish between two kinds of utterances, namely, factual reports and nonfactual expressions:

Factual reports:

I’m a fan of that team.

A cup of rotten milk is on the table.

I had fun in high school.

Nonfactual expressions:

Go team go!

Rotten milk, yuck!

Oh, for the good old days of high school!

The difference between these two groups is that factual reports are either true or false statements about the world, and nonfactual expressions aren’t. That is, for each of these factual reports we can meaningfully ask whether it is true or false, such as “Is it true or false that I’m a fan of that team?” “Is it true or false that a cup of rotten milk is on the table?” With nonfactual expressions, though, it’s complete nonsense to ask, for example, “Is it true or false go team go?” “Is it true or false rotten milk, yuck?” Nonfactual expressions merely vent our feelings and don’t report any facts at all.

Ayer then asks us to decide in which of these two groups moral utterances should go. Suppose that I say “Donating to charity is a good thing.” At first glance, this appears to be a factual report, since it seems that we can meaningfully ask “Is it true or false that donating to charity is a good thing?” Ayer warns, though, that we should not be fooled by first appearances. Moral utterances like this really belong in the second category of nonfactual expressions. When I say “Donating to charity is a good thing,” what I really mean is something like “Hooray for charity!” Similarly, when I say “Stealing is a bad thing,” I really mean “Boo for stealing!” Moral utterances merely vent feelings; they report nothing factual at all. Ayer’s position is called emotivism: moral utterances express feelings, but do not report facts.

Emotivism attacks moral reasoning at an entirely new level. Philosophers in the past commonly felt that utterances such as “Donating to charity is a good thing” are factual reports that we make through the use of our reason. For Plato, this utterance reports that “charity is an eternal moral truth.” For Sextus Empiricus, it reports that “society approves of charity.” Even Hume might take this to mean “I approve of charity” – which is a report about one’s personal feelings. For Ayer, though, moral utterances don’t even rise to the level of factual reports about our feelings. They are emotional hisses, boos, hoorays and bravos, much like the noises an animal might make when excited.

Many philosophers today are reluctant to embrace Hume’s and Ayer’s dismal assessment of moral reasoning. No doubt, there are genuine limits to human reasoning, but in moral matters it seems that reason does provide some kind of guide. Even if there is some emotional component to moral assessments, we still rely on reason to sift through the facts and weigh the arguments pro and contra on various controversies. For example, are chicken coops really like Nazi concentration camps as PETA would have us believe? Reason certainly has something to say on this matter. On the one hand, there is a similarity to the degree that in both cases conscious living creatures are painfully crammed into close quarters against their preferences. On the other hand, though, there is an enormous difference between the mental capacities of chickens and humans, and concentration camp prisoners experience pain on many more levels than do pent-up chickens. So, while it may be wrong to inflict any pain on chickens, the comparison with concentration camp prisoners is greatly exaggerated. And that’s what reason tells us on this issue without the aid of emotion.

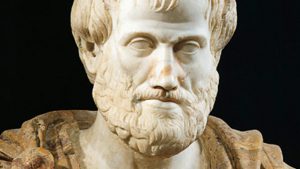

VIRTUES

Philosophers of the ancient world were consumed with the idea of developing moral character. We read in the book of Proverbs that “The wise person conceals his knowledge, but the foolish person blurts out nonsense.” This recommends that we cultivate the character trait of wisdom and flee from foolishness. Confucius notes four characteristics of a superior person: “when conducting himself he is humble; when serving superiors he is respectful; when helping others he is kind; when ordering people he is just.” Here, humility, respect, kindness and justice are qualities that we should adopt. Let’s return to our opening example of the Los Angeles bank robbers and examine it from the perspective of character traits. The bad guys were greedy in their desire for money, unjust in stealing it from others, and malicious in shooting at everything in sight. The good guys, on the other hand, exhibited bravery as they were overpowered by the robbers’ machine guns. They showed ingenuity by borrowing more powerful weapons from the local gun store. They were persistent in battling it out for 45 minutes, not letting the robbers escape. These good qualities are what we call virtues and the bad qualities vices. The most influential theory of virtues was developed by the Greek philosopher Aristotle (384–322 BCE), which we will examine here.

The Virtuous Mean

Aristotle defined human beings as “rational animals.” That is, humans have the major biological features that we also find in dogs and cats, but at the same time we have an extra psychological ability to reason, which elevates us above the other animals. Morality, according to Aristotle, is an interesting interplay between the animalistic and rational components of human nature. Let’s begin with the animalistic elements. Fido the dog has many animalistic urges that get him through the day. He gets frightened when facing danger, he enjoys eating food, he gets angry at intruders, and he desires companionship. Humans have these same animalistic drives, and several more that are unique to us. We have desires to donate money to the needy, to feel good about ourselves, and to make people laugh. Today we might say that all of these drives were naturally implanted in both animals and people to aid in survival. If Fido didn’t get frightened when facing danger, he’d walk into a bear cave, wander off a cliff, or do something that would quickly put an end to his life. Human drives serve largely the same function. Even our human desire to amuse others is important for social acceptance, which in turn aids in our survival.

Much of our human psychology, then, is rooted in purely animalistic urges. The difference between Fido and me, though, is that I have the rational ability to control my animalistic drives. I can train myself to suppress my urges when needed, and act out on them when the situation demands it. Take, for example, the natural inclination to get angry. We need this drive to keep people from harming us. If I never got angry, Aristotle argues, people would steal my belongings, hit me for amusement, and harass my family. On the other hand, it’s not good for me to have a short fuse and fly into a rage if my waiter puts too much ice in my drink. I need to display the right amount of anger in the right situation.

As a rational creature, then, my job is to develop the right kind of mental habits that regulate my natural urges. These good mental habits are moral virtues, and they will be at a happy medium between two bad habits, or vices. For example, if I properly restrain my urge to get angry, then I will have the moral virtue of good temper. If I don’t restrain myself enough, I will have the vice of ill-temper. If I restrain myself too much and never get angry, I will have the vice of spiritlessness. Aristotle lists about a dozen virtues that follow this same formula: virtues that stand at a mean between vices of deficiency and vices of excess. Here is a chart of some especially interesting ones:

| Natural Urge | Vice of Deficiency | Virtuous Mean | Vice of Excess |
| --- | --- | --- | --- |
| Anger | Spiritlessness | Good Temper | Ill-temper |
| Fear of danger | Cowardice | Courage | Rashness |
| Pleasure | Insensibility | Temperance | Intemperance |
| Give money | Stinginess | Generosity | Extravagance |
| Self-worth | Self-loathing | Self-respect | Arrogance |

On the surface, it doesn’t seem too difficult to find the proper middle ground with all of these virtues. To be courageous, for example, we just avoid being too cowardly or too rash. However, Aristotle argues that finding that middle ground is easier said than done. Suppose I am a police officer and I understand that courage involves knowing how to regulate my fear of danger. Am I cowardly if I don’t chase down an armed bank robber? And how many bullets does the robber have to fire before my pursuit of him becomes rash? Developing virtues takes much time and training, and often begins in childhood education.

There’s something unusual about Aristotle’s theory: while he tells us everything we need to know about virtues, he says almost nothing about moral rules, such as don’t kill, and don’t steal. He certainly was aware of the function that moral rules play in people’s lives, and he himself had to regulate his conduct by the rules of law within the city of Athens, where he spent much of his life. He may have simply felt that virtues were the most central part of moral philosophy and thus focused only on that aspect. In any event, in contemporary times his approach has led to the theory that virtues alone should be the focus of morality, not rules. Let’s call this the virtue-alone theory.

Virtues and Gender

Carol Gilligan

Many feminist philosophers have embraced the virtue-alone view of morality. To see why, consider the age-old dispute about whether men and women differ in important psychological ways. We are all familiar with stereotypical male/female differences. Young boys play with toy guns and swords, and roughhouse with each other. Young girls play with doll houses and interact more gently. Stereotypes go beyond aggression, though. According to the stereotype, when older, men are better at mathematics and other analytical tasks, while women have stronger communication skills. Psychologists dispute how much of this difference is inborn, and how much is the result of centuries of social conditioning in male-dominated societies. The fact remains, though, that there are at least some psychological marks of distinction between men and women, and some of these may be relevant to theories of morality. Feminist philosophers have noted one major area of distinction: men seem to be fond of categorizing things and inventing rules. Women are less inclined to do this and seem to be more sensitive to the uniqueness of particular situations. Sometimes rule-following may be necessary, as when, for example, designing bridges and skyscrapers. Other times, though, obsession with rules does more harm than good, and this may be the case with rule-based approaches to morality.

The virtue-alone theory addresses the concerns of feminist philosophers in three ways. First, virtue theory is less male-oriented since it downplays rules. Second, the development of virtuous habits is an educational task that requires us to be sensitive to the nuances of our surroundings, which is a specialty of female thinking. Third, virtue theory allows us to introduce new ideals into our value system which are more overtly female in character. One almost indisputable fact about female psychology is that women tend to be more nurturing than men. Women now dominate the fields of counseling, social work, primary education, and nursing. Even with all the changes in modern society, women are still more involved in child-rearing than men. American philosopher Carol Gilligan has argued that, while women have taken care of men, men themselves “tended to assume or devalue that care” both in their theories of human nature and in their daily lives. Thus, an important female virtue that we can add to Aristotle’s list is care.

Virtues and Rules

While the virtue-alone theory has supporters, critics charge that we can’t dismiss moral rules that easily. They make their case with two main arguments. The first involves a paradox: misused virtues can actually become vices. Imagine that a person possessed a string of virtues, such as intelligence, ingenuity, patience, and calmness. “Ah,” we might say, “this person must certainly be a good human being.” However, these are qualities that we find in the most skilled thieves. In fact, the thief will become more immoral in proportion as he has these qualities. According to critics, this is precisely why the focus of morality should be on unchanging rules, not on virtues. As long as we follow moral rules such as “don’t steal” then we won’t misuse virtues such as intelligence. Our virtues will still be an asset to our moral conduct. For example, when you act on the moral rule “help others in need” you can draw on your virtue of intelligence and find the most effective way of assisting the needy. Your primary focus, though, should be on the rule, and not the virtue that assists you in carrying it out.

The second argument against the virtue-alone theory asks us to examine what takes place when we morally praise or blame someone. Suppose, for example, that I get drunk and run over someone with my car. I’m then thrown in jail and the local newspapers make me look like a horrible villain. “But wait,” I say, “I’m actually a very good man. I have all of the virtues, no vices, and in fact I don’t even drink. This was a special occasion and, in the midst of a celebration, I had a lapse of judgment which I never had before.” While my explanation might make me seem less villainous and may even get me some sympathy, I will still be morally blamed for my action and punished. Moral praise and blame, according to the critic, is really a question of whether our actions conform to moral rules, and it is not a question of whether we possess moral virtues. The principal reason is that everything you know about me is based on my actions. You can’t read my mind to see what mental habits are ingrained in my psyche. You only see my actions, and whether they conform to the right rules. Ethics ultimately involves a rigorous science according to which we discover these rules. By comparison, speculating about virtuous habits is child’s play.

What should we conclude about this battle between rule-based and virtue-alone morality? First, an exclusive focus on moral rules does seem to distort the age-old tradition of moral thinking, and some philosophers seem to have erred by eliminating virtues from their moral theories. However, we may rightfully question whether moral rules should be entirely replaced with virtues, as some virtue theorists have suggested. Just as virtues are a longstanding part of our moral tradition, so too are moral rules, and for thousands of years the two approaches have harmoniously coexisted. Second, it seems clear that Aristotle’s original list of virtues needs amending. Aristotle felt that women and slaves held a lower place in the human social order and, so, his list of moral virtues has the feel of an aristocratic male. The list of virtues that we endorse today should certainly reflect our growing traditions of human equality. To the extent that nurturing has been an undervalued female characteristic, it makes sense to acknowledge the virtue of care. However, we may also rightfully question whether moral theories should be predominantly female in character, with a sole emphasis on care, as some writers have suggested. Male/female differences aside, human beings are multifaceted and we may never find a single virtuous character trait that fully encompasses the complex nature of moral obligation.

DUTIES

Just as we can examine the morality of the Los Angeles bank robbery in terms of virtues and vices, we can similarly list the moral rules that the robbers violated. The two obvious ones are “Don’t kill” and “Don’t steal.” These are obligations – or duties – that are acknowledged in cultures throughout the world. A 4,000-year-old Babylonian text succinctly lists our principal moral duties: “Has he intruded upon his neighbor’s house, approached his neighbor’s wife, shed his neighbor’s blood, stolen his neighbor’s garment?” We find these basic principles in the Ten Commandments and in other religious codes of ethics. We don’t need to prove the authority of these duties; we somehow naturally accept them as facts. This is the basis of a theory of morality called duty theory.

Duties to God, Oneself and Others

The basic elements of modern duty theory were developed by German philosopher Samuel Pufendorf (1632-1694). God, he argues, has implanted a natural sense of morality within us all, which gives us the precise guidance we need for proper social interaction. These instinctive moral principles are as natural to us as the ability to speak languages, and are so firmly rooted in our minds that we can’t wipe them out. We discover and understand them through the use of our reason, and, to that extent, morality is embedded in the rational part of human nature. All of our moral obligations, according to Pufendorf, are of three types: duties to God, oneself, and others. Let’s briefly look at each of these groups.

According to Pufendorf, we have two principal duties to God: know that God exists and obey God. Like many philosophers of his time, Pufendorf believed that God’s existence could be rationally proven – such as by observing complex design within nature and inferring that it must have been produced by a cosmic designer. Pufendorf feels that this kind of knowledge of God is not optional for us, but is actually a moral requirement. Next, we have a moral obligation to obey God in various ways, such as honoring him, worshiping him, and praying to him. While religious thinkers of the time agreed wholeheartedly with Pufendorf’s view of duties to God, in later centuries most philosophers were more cautious on this subject. Even if God exists and we have some obligation to him, it seems to be more of a religious duty than a moral one.

Pufendorf believed that human nature has both a mental and a physical component, and, so, our duties to ourselves fall into these two groups. Regarding our duties to our minds, he argues that we should develop our talents. Learn a trade, write a book, play an instrument; it’s up to us to decide what we should do, but we should choose something. Even if I can live comfortably off inheritance money without ever working, I am still under a moral obligation to develop some ability. Regarding duties to our bodies, we shouldn’t intentionally do things that cause us physical harm and, most seriously, we should not commit suicide under any circumstance. As with duties to God, philosophers today are a little suspicious about the existence of moral duties to oneself. Don’t I have a right to be a lazy couch potato as long as I’m not a burden to others? Don’t I have a right to die if I’m terminally ill and in intense pain?

The most important – and least controversial – group of moral duties is the final one, namely, duties to others. Here we find the usual obligations, such as don’t steal, murder, or lie. These also fall into different subgroups. There are, for example, special duties that we have to our families, such as caring for our children, being faithful to our spouses, and respecting our parents. We have special duties to the people in our local communities, such as being charitable to them, and other obligations to our governments, such as obeying the laws.

The Categorical Imperative

Pufendorf’s duty theory was ultimately eclipsed by that of German philosopher Immanuel Kant (1724–1804). What remains important about Pufendorf’s theory, though, is the idea that morality involves instinctive moral obligations that are unwavering and do not depend upon our private desires. Kant believed that Pufendorf’s theory was on the whole correct, but argued that we don’t need to memorize a long list of distinct duties to God, oneself and others. At bottom, Kant maintained, there is only a single principle of moral obligation, which he dubbed the categorical imperative. All of our distinct duties, as valid as they may be, are just applications of this.

Before telling us exactly what the categorical imperative is, Kant explains how moral judgments differ from other value judgments that are less morally crucial. Throughout the day people tell us that we ought to do certain things. We ought to read some book, we ought to take better care of our health, we ought to donate to some charity. Although all of these injunctions may involve some kind of obligation upon us, few of these are genuinely moral obligations. How can we tell the difference? According to Kant, non-moral obligations are merely hypothetical imperatives: they tell us that if we want to achieve some goal, then we should perform some act. If I want to lose weight, then I should go on a diet. If I want to be entertained, then I should read a specific book. The reason that these are non-moral obligations is that nothing requires me to have those specific goals. Maybe I don’t want to lose weight. Maybe I’m not interested in literary entertainment. Moral obligations are entirely different, though. They do not depend on our personal preferences and are obligatory no matter what. Truly moral obligations are categorical imperatives – that is, absolute commands. “Don’t kill,” “Don’t steal,” and “Don’t lie” are certainly of this sort.

The ultimate categorical imperative, for Kant, is a very broad moral principle that we can apply in every circumstance. He paradoxically gives four versions of it, claiming that they say the same thing from different perspectives. One version, though, is especially powerful: treat people as an end and never only as a means to an end. Most simply, this means that we should treat people with dignity, and not as mere objects. Behind this principle is a crucial distinction between two kinds of value that we find in things: instrumental and intrinsic. Something has instrumental value when it is a tool to accomplish something else. My house key, for example, is valuable since it opens my front door. If I change the lock, the key no longer serves a purpose and I toss it in the garbage. Most things that we see have only instrumental value. Our jobs are valuable because they give us money. Money is valuable since it allows us to buy stuff. By contrast, intrinsically-valuable things are important in and of themselves, regardless of what further benefit they have. Happiness, for example, is intrinsically valuable. If I say “I’m happy right now,” it would be odd for you to ask “what further benefit do you get from being happy?” Happiness is simply valuable for its own sake. Armed with this distinction between instrumental and intrinsic value, Kant’s categorical imperative tells us that we should not use people as instruments for our own benefit, like house keys, but treat them as intrinsically valuable beings, just as we would view our own happiness. People are intrinsically valuable, Kant thinks, because we have free wills and can shape our surroundings according to our own design. We can’t say that about a house key. Because we are unique in this respect, we must treat people with a special dignity.

The beauty of this principle is that it tells us very clearly why specific actions are right or wrong. If I donate to charity, I am recognizing the intrinsic value of the person I am donating to. When I develop my talents, I recognize my own intrinsic value. On the other hand, if I steal a pack of gum from the corner store, I am treating the store owner as a mere object for my own benefit. If a man cheats on his wife, he treats both his wife and mistress as mere objects of gratification. Kant envisioned the categorical imperative as a more precise version of the Golden Rule, and, to a large extent, the principle achieves that aim.

Duties to Animals and the Environment

Samuel von Pufendorf

Ethicists of the past felt that moral obligations applied principally to human beings, and perhaps also to God. Pufendorf, for example, held that God implanted moral obligations in people as a means of enabling us to live in peaceful human societies. Animals were irrelevant to that mission. Kant felt that we only have moral obligations to creatures that are capable of making free and rational decisions. Animals, he believed, can’t do that: they operate only on instinct, and don’t have the mental capacity for anything like rational thought. Not only do animals lack rationality, Kant says, they aren’t even conscious of themselves. Even though they might give the appearance of being in pain, for example, they don’t have a conscious experience of pain itself. While we have no direct duties toward animals themselves, Kant argued, we nevertheless have indirect duties based on how cruelty to animals might impact human beings. Suppose that you routinely set dogs’ tails on fire just for the fun of it. Eventually, that sadistic thrill will wear off and you’ll be inclined to work your way up the food chain and torture humans for your entertainment. Thus, according to Kant, while setting dogs’ tails on fire won’t violate a direct duty to dogs themselves, we have an indirect duty to avoid such conduct because it inclines us to be cruel to humans.

Today this reasoning seems quite bizarre. Even though some animals such as chickens lack the mental capacity for rational thought, they still are conscious of pain, and it is morally wrong to torture them. This suggests that at least some moral duties go a step beyond human beings and apply to any conscious creature with a capacity for experiencing pain. Part of the reason for this shift in attitude since Kant’s day is that we know more about animal physiology than we used to, and we know that even animals like chickens are biologically closer to us than we previously thought. When we move up the animal hierarchy, many creatures – such as dogs, cats and chimpanzees – have even greater mental capacities. They obviously can’t play chess or write computer programs, but some have the rational abilities to solve problems, make tools, and learn languages. Further, they are not just aware of their surroundings, as chickens are, but are also self-aware. That is, they are aware of themselves moving through time and have something like hopes and dreams for the future. Along with these greater mental capacities, then, come greater moral duties on our part, specifically to respect and to some extent facilitate their goals. Some of these moral duties towards animals have worked their way into legal codes – laws about cruel and inhumane treatment of pets, and even regulations about how animals should be treated in slaughterhouses. The question today really isn’t so much whether we have moral responsibilities towards animals, but how far those duties extend. Is it wrong to eat or experiment on animals, just as it is wrong to do so with humans? These issues are still up for debate.

Pushing the issue of non-human duties even further, we can ask whether we have any obligations to plants as well as animals. Plants clearly aren’t conscious, and it is impossible to inflict pain on them as we might on a chicken. But we have growing concerns about the depletion of rainforests and the mass extinction of thousands of plant species on a regular basis. There may be nothing wrong with killing a bunch of weeds in my back yard, but if we slash and burn the last members of an endangered plant species, that’s a different story. As with duties towards animals, though, there are two ways that we can look at our responsibility towards plant species.

First, maybe our duties to the environment are only indirect, based on how human beings will be adversely affected by environmental irresponsibility. If we chop down all of the rainforests, the earth’s temperature will skyrocket, and we’ll all die. If we wipe out too many plant species, we disrupt the food chain, and we starve. Even if we are not particularly fond of plants, there are lots of human-centered reasons to acknowledge an indirect duty towards them. Second, maybe our duties to the environment are direct, and we have moral responsibilities to plant species themselves for their own sake, irrespective of their impact on human interests. On this view, plant species in and of themselves have a moral standing, similar to the way that humans do, and this generates a direct moral duty towards them on our part. The idea of direct duties to the environment, though, is a tough sell. It doesn’t seem like an obvious moral duty, on the same level as duties to humans or even duties to higher animals. Advocates of this ecological approach often draw attention to the interconnectedness of all things, and point out that it doesn’t make sense to talk about human duties and ignore the surrounding fabric of life into which humans are woven. Some advocates believe that it requires an environmental awakening – a kind of mystical experience – for a person to grasp the independent moral standing of plant species and environmental systems. But people who are incapable of having that kind of experience may be left scratching their heads in wonder.

UTILITARIANISM

Duty theory seems like a good way of understanding our moral responsibilities – to people, animals and the environment. What could be wrong with it? The problem, as critics see it, is that these so-called instinctive duties are pure fabrications. Pufendorf felt that God permanently implanted duties in our nature; Kant felt that duties are an integral part of human reasoning. They are not, though, as fixed and universal as these duty theorists have maintained, which we see most evidently in the various duties that have come and gone over the centuries. Once considered essential to morality, duties to God have been cast aside. Suicide used to be among the top moral crimes, but now we have a right to die. Now we have newly-discovered duties to animals and the environment. The concept of instinctive duties seems to be just a sophisticated justification for personal convictions, or, worse yet, for personal prejudices. Wouldn’t it be great if we could arrive at our moral principles more objectively? More scientifically?

In the late 18th century, a philosophical movement called utilitarianism aimed at doing just that. The concept was simple: we measure right and wrong by considering the pleasing and painful consequences of our behavior upon ourselves and others. We no longer have to root through human instincts to discover our alleged duties. Instead, we simply examine the publicly observable consequences of our actions, and tally the pleasures and pains that result. What the Los Angeles bank robbers did was wrong, for example, because their actions produced far more pain than pleasure. They themselves died painfully, and caused extreme pain to those whom they shot. By contrast, the charitable acts that Mother Teresa did throughout her life were morally good because they produced great pleasures for the needy, which overbalanced the modest pains that she herself endured through self-sacrifice.

The Utilitarian Calculus

Jeremy Bentham

British philosopher Jeremy Bentham (1748-1832) was an important developer of this theory. A stickler for details, he argued that it wasn’t good enough to simply have a gut feeling about whether an action produced more pleasure than pain. To be scientific, we must quantify – that is, assign number values to – the pleasing and painful consequences of an action. We then tally the numbers, and assess the total score. Suppose that the Los Angeles bank robbers produced 100 total units of pain, and only 5 units of pleasure – pleasure, perhaps, from the money that gun store owners received from the sale of the weapons and ammunition. Since the “pain” score is higher than the “pleasure” score, we thereby pronounce the bank robbers’ conduct as morally wrong. Bentham called this approach the utilitarian calculus.
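The tally described above can be sketched in a few lines of Python. This is only an illustration, not anything Bentham wrote: the function names `net_utility` and `verdict`, the treatment of "units" as plain numbers, and the "neutral" label for a tie are all assumptions, and the figures are the hypothetical ones from the bank robbery example.

```python
def net_utility(pleasures, pains):
    """Return total pleasure minus total pain, in hypothetical units."""
    return sum(pleasures) - sum(pains)


def verdict(pleasures, pains):
    """Label an action by the sign of its net score.

    The "neutral" case for an exact tie is an assumption of this sketch.
    """
    score = net_utility(pleasures, pains)
    if score > 0:
        return "right"
    if score < 0:
        return "wrong"
    return "neutral"


# Hypothetical figures from the example: 5 units of pleasure, 100 of pain.
robbery_pleasures = [5]
robbery_pains = [100]

print(net_utility(robbery_pleasures, robbery_pains))  # -95
print(verdict(robbery_pleasures, robbery_pains))      # wrong
```

The sketch makes the structure of the method visible: everything depends on the numbers fed in, and the calculation itself is trivial once those numbers are agreed upon.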

Not all pleasures and pains carry the same weight, he argues, and to be accurate we must account for their differences. For example, the pleasure I receive from eating a slice of pizza is not as weighty as the pleasure of winning a million dollar lottery. Bentham noted seven important factors in assessing the differences between various pleasures and pains:

(1) Intensity: how extreme the pleasures and pains are.

(2) Duration: how long the pleasures and pains last.

(3) Certainty: whether the pleasurable and painful consequences are certain or only probable.

(4) Remoteness: whether the pleasures and pains are immediate or in the distant future.

(5) Fruitfulness: whether similar pleasures and pains will follow.

(6) Purity: whether the pleasure is mixed with pain.

(7) Extent: whether other people experience pleasure or pain.

Thus, a pleasure gets more points if it is intense, long, certain, and immediate. For example, the pleasures from an amusement park visit would be intense, certain and immediate, but comparatively short. We add more points if that action produces further pleasures down the road, such as the pleasing memories I might have next year of the amusement park visit. We deduct points for pains that are mixed with pleasures, such as nausea from too many rides on the rollercoaster. Finally, for each person that is affected by an action, we repeat steps 1-6. For example, I might get sick on the rollercoaster and throw up on other riders. We must then analyze the intensity, duration, certainty, remoteness, fruitfulness and purity of the pain I inflicted on those riders.
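One way to picture how the seven factors might combine is the following Python sketch. Bentham gave no such formula, so every modeling choice here is an assumption: the class name `Consequence`, multiplying intensity by duration and certainty, discounting remote consequences by a fixed yearly factor, treating fruitfulness and purity as lists of follow-on pleasures and pains, and handling extent by summing over everyone affected. The numbers for the amusement park visit are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Consequence:
    intensity: float         # signed: positive for pleasure, negative for pain
    duration: float          # how long it lasts, e.g. in hours
    certainty: float = 1.0   # probability between 0 and 1
    delay: float = 0.0       # remoteness, e.g. years until it occurs
    # Follow-on consequences: same-signed ones model fruitfulness,
    # opposite-signed ones model (im)purity.
    follow_ons: List["Consequence"] = field(default_factory=list)

    def score(self, discount: float = 0.9) -> float:
        """Score this consequence plus everything that follows from it."""
        own = (self.intensity * self.duration * self.certainty
               * discount ** self.delay)
        return own + sum(c.score(discount) for c in self.follow_ons)


def total_score(per_person: List[Consequence]) -> float:
    """Extent: repeat the assessment for each affected person and add up."""
    return sum(c.score() for c in per_person)


# My visit: intense, certain, immediate, but short.
memories = Consequence(intensity=2, duration=1, certainty=0.8, delay=1)
nausea = Consequence(intensity=-4, duration=0.5)
my_visit = Consequence(intensity=8, duration=3, follow_ons=[memories, nausea])

# Two other riders who suffer when I get sick on the rollercoaster.
other_riders = [Consequence(intensity=-6, duration=0.25) for _ in range(2)]

visit_total = total_score([my_visit] + other_riders)
print(f"Net score for the visit: {visit_total:.2f}")
```

A different weighting scheme would give a different total, which is part of what later critics of the calculus press on.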

Bentham believed that his utilitarian calculus could have a sweeping impact on society, both in how we determine morality in our daily lives and in how government officials would craft legislation for the betterment of everyone. Gone would be the days when our actions and social policies would be guided by mere moral hunches. The utilitarian calculus gives us a precise formula for making moral decisions based on hard facts.

Higher Pleasures and Rules

John Stuart Mill

Bentham was the godfather and teacher of a child prodigy, John Stuart Mill (1806–1873). As a young man, Mill adopted Bentham’s vision of utilitarianism and valiantly defended it against a growing number of criticisms. When a little older and wiser, though, he began finding fault with his teacher’s views, and went so far as to say that Bentham was not a particularly good moral theorist. Mill continued to embrace utilitarianism – and in fact became the most famous 19th-century advocate of the theory. However, he departed from Bentham in two important ways. First, Mill rejected the mathematical approach of Bentham’s utilitarian calculus. In fact, Mill argued, it is impossible to assign numerical values to all pleasures. Some pleasures, Mill conceded, can be quantified. For example, on a scale of 1 to 10, I can assign a value of “2” to the pleasure I get from eating a slice of pizza and a “4” to a visit to the beach. These, though, are merely bodily pleasures that appeal to the lower and baser features of human nature. As rational creatures, we are also capable of experiencing higher mental pleasures, such as the joys of playing chess, listening to fine music, and viewing great works of art. We can also take pleasure in designing bridges, planning cities and solving social problems like poverty, hunger and illness. These higher pleasures, however, cannot be measured and plugged into a numerical calculation. We all recognize their merit, and in fact, Mill says, we value them more than bodily pleasures. So, when determining whether something is right or wrong, we survey the consequences and give greater weight to the higher and more dignifying pleasures that result. There are no numbers to tally or scores to compare. It is more of an intuitive assessment.

Second, Mill questioned whether we really need to evaluate the consequences of each one of our actions individually. Bentham’s utilitarian calculus directs us to tally the pleasure and pain of every action that we perform – a position called act utilitarianism. The problem with this is that we don’t have time to morally evaluate each of our actions throughout the day. Life would grind to a halt. Instead, Mill argues, we should just keep following moral rules as we have been doing: don’t kill, don’t steal, don’t lie. However, we should submit those rules for utilitarian evaluation. That is, we must evaluate whether those rules bring about more pleasure than pain. Consider these two possible rules: (1) Don’t steal, and (2) It’s OK to steal when you feel like it. Assuming that we’d follow them consistently, we should ask which rule would bring about the greatest amount of pleasure in society. Clearly it would be the first. If we allowed people to steal when they felt like it – with no moral or legal penalties – the concept of property ownership would go out the window. You couldn’t open a business because people would just walk in and take what they want. You couldn’t plant food in your back yard for the same reason. For that matter, you couldn’t even count on having a house to live in since every time you’d leave it, you’d be at risk of someone else moving in. On balance, adopting the second rule would have disastrous consequences. Mill’s rule-based approach is representative of a position called rule utilitarianism.

According to Mill, most of our moral rules today have developed through a kind of utilitarian trial and error. Thousands of years ago people adopted the moral rules that clearly benefited society the most. We don’t really have to reinvent the wheel and create our favorite moral principles all over again. In fact, Mill argues, we would rarely ever need to evaluate a moral rule on utilitarian grounds. One exception might be when newer and more specific issues come along. For example, should health care costs be paid for by the government? Should we ban ownership of assault weapons? The answer in each of these cases would rest on which rule would produce the most pleasure. Another exception would be in situations when two moral rules conflict and we can’t follow both. The most famous illustration of this is the case of the inquiring murderer. Suppose that you see a man run down the road, and then jump into a dumpster. Another man carrying a gun comes around the corner and asks, “Did you just see someone run through here?” It’s clear to you that the guy in the dumpster is a dead man if you tell the truth. You are now caught in a dilemma between two moral principles: don’t lie, and don’t cause harm to others. According to Mill, you should resolve this specific conflict as an act utilitarian would: determine which course of action would produce the most pleasure and least pain. Clearly, the best course of action here is to lie to the would-be killer and avoid causing harm to the man in the dumpster. Once the situation is resolved, you return to following the usual moral rules.

Reactions from Duty Theorists

From the time that utilitarianism first emerged, duty theorists were unimpressed with its so-called scientific approach. Two specific problems were raised, which even today remain among the most serious challenges to utilitarianism. First, there’s no question that utilitarianism aims at a precision far beyond what duty theorists ever dreamed of. The problem, though, is that its standard of precision is too high. Could we ever fully evaluate every positive and negative consequence of the Los Angeles bank robbers’ conduct? Once the story hit the media, millions of people had reactions to it, which we could never fully calculate. Many of these reactions were no doubt painful feelings of revulsion towards the robbers’ brutal conduct. But these feelings would have been mixed with pleasing feelings from the entertaining nature of the story itself. A few years later the story was made into a movie, which undoubtedly made money for some people and thus produced even more pleasure. For all we know, two actors on the movie set fell in love and had a child who will grow up and someday discover a cure for cancer. We just don’t know, and we are not in a position to precisely calculate all the consequences. One early critic of utilitarianism held that even the wisest of people will only ever have a faint glimpse of the consequences of an action. “The nature of general consequences,” he argued, “is too comprehensive to be embraced by the human understanding, too dark to be penetrated by human discernment.” Rule utilitarianism attempts to sidestep this issue by having us look at rules, such as “Don’t steal,” rather than specific actions such as those of the Los Angeles bank robbers. Thus, we don’t need to predict every consequence of every action. The problem re-emerges, though, when we evaluate the consequences of rules that we might want to adopt. The long-range consequences of any moral rule are well beyond our ability to grasp.

Second, utilitarian reasoning may lead us to adopt actions or rules that conflict with important traditional values. Suppose that I kidnap you and make you my slave. I’ll have you mow the yard, clean out the cat box, overhaul the engine in my car, and do any other unpleasant task that I can think of. I’ll also share you with my neighbors so that you can relieve them of their unpleasant tasks as well. Your life will certainly be miserable, but through your services our lives will be considerably happier, and our combined happiness might actually outweigh your misery. So, on utilitarian reasoning, enslaving you is the morally right thing to do. Again, rule utilitarians attempt to address this problem by focusing on rules rather than actions. They argue that we need to consider the consequences of adopting rules regarding slavery, and a rule allowing slavery would produce more pain than pleasure. However, critics argue, a carefully crafted rule permitting slavery might produce more pleasure than pain. What if we enslaved only people with docile personalities and then sterilized them before they could reproduce? They would be more content in their condition than the average person would be, and we would prevent slavery from becoming hereditary. Duty theorists would object that this kind of slavery would still be wrong, regardless of the pleasure/pain tally.

As we’ve seen, the key motivation for adopting utilitarianism is that it frees us from unreliable and prejudicial moral duties that are allegedly grounded in reason and instinct. It places morality squarely in the arena of public observation where we can impartially analyze our actions or rules. This, at least, is what utilitarianism hopes to achieve. Does it accomplish this? Far from it, duty theorists argue. The data that utilitarians need for their evaluation will always be fragmentary and so their conclusions will be flawed. And even utilitarianism’s best evaluations may take us far from traditional notions of morality. Utilitarians, thus, seem to be getting exactly what they hoped for: they’ve freed themselves from intuitive duties, but in the process have created a frightening system that allows the ends to justify the means.