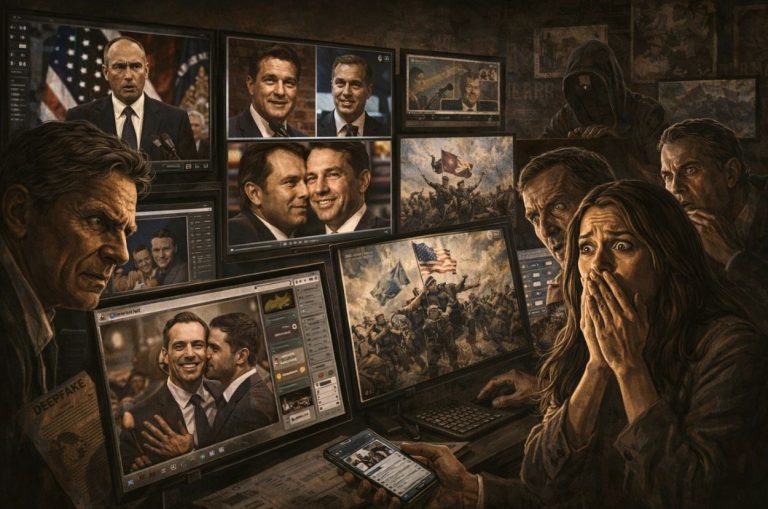

There are two different approaches to combat misinformation, one reactive, the other proactive.

Abstract

In recent years, interest in the psychology of fake news has rapidly increased. We outline the various interventions within psychological science aimed at countering the spread of fake news and misinformation online, focusing primarily on corrective (debunking) and pre-emptive (prebunking) approaches. We also offer a research agenda of open questions within the field of psychological science that relate to how and why fake news spreads and how best to counter it: the longevity of intervention effectiveness; the role of sources and source credibility; whether the sharing of fake news is best explained by the motivated cognition or the inattention accounts; and the complexities of developing psychometrically validated instruments to measure how interventions affect susceptibility to fake news at the individual level.

Fake news can have serious consequences for science, society, and the democratic process (Lewandowsky et al., 2017). For example, belief in fake news has been linked to violent intentions (Jolley & Paterson, 2020), lower willingness to get vaccinated against the coronavirus disease 2019 (COVID-19; Roozenbeek, Schneider, et al., 2020), and decreased adherence to public health guidelines (van der Linden, Roozenbeek, et al., 2020). Fake rumours on the WhatsApp platform have inspired mob lynchings (Arun, 2019) and fake news about climate change is undermining efforts to mitigate the biggest existential threat of our time (van der Linden et al., 2017).

In light of this, interest in the “psychology of fake news” has skyrocketed. In this article, we offer a rapid review and research agenda of how psychological science can help effectively counter the spread of fake news, and what factors to take into account when doing so.¹

Current Approaches to Countering Misinformation

Overview

Scholars have largely offered two different approaches to combat misinformation, one reactive, the other proactive. We review each approach in turn below.

Reactive Approaches: Debunking and Fact-Checking

The first approach concerns the efficacy of debunking and debiasing (Lewandowsky et al., 2012). Debunking misinformation comes with several challenges, as doing so reinforces the (rhetorical frame of the) misinformation itself. A plethora of research on the illusory truth effect suggests that the mere repetition of information increases its perceived truthfulness, making even successful corrections susceptible to unintended consequences (Effron & Raj, 2020; Fazio et al., 2015; Pennycook et al., 2018). Despite popular concerns about potential backfire effects, where a correction inadvertently increases belief in, or reliance on, the misinformation itself, research has not found such effects to be commonplace (e.g., see Ecker et al., 2019; Swire-Thompson et al., 2020; Wood & Porter, 2019). Yet there is reason to believe that debunking misinformation can still be challenging in light of both (politically) motivated cognition (Flynn et al., 2017) and the continued influence effect (CIE), in which people continue to retrieve false information from memory despite acknowledging a correction (Chan et al., 2017; Lewandowsky et al., 2012; Walter & Tukachinsky, 2020). In general, effective debunking requires an alternative explanation to help resolve inconsistencies in people’s mental models (Lewandowsky, Cook, et al., 2020). But even when a correction is effective (Ecker et al., 2017; MacFarlane et al., 2020), fact-checks are often outpaced by misinformation, which is known to spread faster and further than other types of information online (Petersen et al., 2018).

Proactive Approaches: Inoculation Theory and Prebunking

In light of the shortcomings of debunking, scholars have called for more proactive interventions that reduce the likelihood that people believe and share misinformation in the first place. Prebunking describes the process of inoculation, where a forewarning combined with a pre-emptive refutation can confer psychological resistance against misinformation. Inoculation theory (McGuire, 1970; McGuire & Papageorgis, 1961) is the most well-known psychological framework for conferring resistance to persuasion. It posits that pre-emptive exposure to a weakened dose of a persuasive argument can confer resistance against future attacks, much like a medical vaccine builds resistance against future illness (Compton, 2013; McGuire, 1964). A large body of inoculation research across domains has demonstrated its effectiveness in conferring resistance against (unwanted) persuasion (for reviews, see Banas & Rains, 2010; Lewandowsky & van der Linden, 2021), including misinformation about climate change (Cook et al., 2017; van der Linden et al., 2017), conspiracy theories (Banas & Miller, 2013; Jolley & Douglas, 2017), and astroturfing by Russian bots (Zerback et al., 2020).

In particular, the distinction between active vs. passive defences has seen renewed interest (Banas & Rains, 2010). As opposed to traditional passive inoculation where participants receive the pre-emptive refutation, during active inoculation participants are tasked with generating their own “antibodies” (e.g., counter-arguments), which is thought to engender greater resistance (McGuire & Papageorgis, 1961). Furthermore, rather than inoculating people against specific issues, research has shown that making people aware of both their own vulnerability and the manipulative intent of others can act as a more general strategy for inducing resistance to deceptive persuasion (Sagarin et al., 2002).

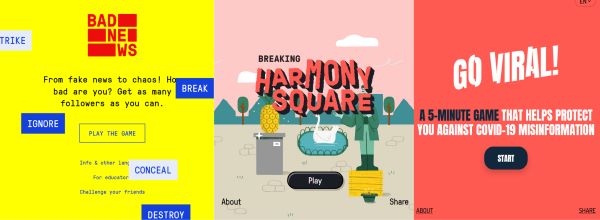

Perhaps the most well-known example of active inoculation is Bad News (Roozenbeek & van der Linden, 2019b), an interactive fake news game where players are forewarned and exposed to weakened doses of the common techniques that are used in the production of fake news (e.g., conspiracy theories, fuelling intergroup polarization). The game simulates a social media feed and over the course of 15 to 20 minutes lets players actively generate their own “antibodies” in an interactive environment. Similar games have been developed for COVID-19 misinformation (Go Viral!, see Basol et al.), climate misinformation (Cranky Uncle, see Cook, 2019) and political misinformation during elections (Harmony Square, see Roozenbeek & van der Linden, 2020). A growing body of research has shown that after playing “fake news” inoculation games, people are (a) better at spotting fake news, (b) more confident in their ability to identify fake news, and (c) less likely to report sharing fake news with others in their network (Basol et al., 2020; Roozenbeek, van der Linden, et al., 2020; Roozenbeek & van der Linden, 2019a, 2019b, 2020). Figure 1 shows screenshots from each game.

An Agenda for Future Research on Fake News Interventions

Overview

Although these advancements are promising, in this section we outline several open questions to bear in mind when designing and testing interventions aimed at countering misinformation: how long their effectiveness remains detectable, the relevance of source effects, the role of inattention and motivated cognition, and the complexities of developing psychometrically validated instruments to measure how interventions affect susceptibility to misinformation.

The Longevity of Intervention Effects

Reflecting a broader lack of longitudinal studies in behavioural science (Hill et al., 2013; Marteau et al., 2011; Nisa et al., 2019), most research on countering misinformation does not look at effects beyond two weeks (Banas & Rains, 2010). While Swire, Berinsky, et al. (2017) found that most effects had expired one week after a debunking intervention, Guess et al. (2020) report that three weeks after a media-literacy intervention, effects can either dissipate or endure.

Evidence from studies comparing interventions indicates that expiration rates may vary depending on the method, with inoculation-based effects generally staying intact for longer than narrative, supportive, or consensus-messaging effects (e.g., see Banas & Rains, 2010; Compton & Pfau, 2005; Maertens, Anseel, et al., 2020; Niederdeppe et al., 2015; Pfau et al., 1992). Although some studies have found inoculation effects to decay after two weeks (Compton, 2013; Pfau et al., 2009; Zerback et al., 2020), the literature is converging on an average inoculation effect that lasts for at least two weeks but largely dissipates within six weeks (Ivanov et al., 2018; Maertens, Roozenbeek, et al., 2020). Research on booster sessions indicates that the longevity of effects can be prolonged by repeating interventions or by regular assessment (Ivanov et al., 2020; Pfau et al., 2006).

Gaining deeper insights into the longevity of different interventions, looking beyond immediate effects, and unveiling the mechanisms behind decay (e.g., interference and forgetting) will shape future research towards more enduring interventions.

Source Effects

When individuals are exposed to persuasive messages (Petty & Cacioppo, 1986; Wilson & Sherrell, 1993) and evaluate whether claims are true or false (Eagly & Chaiken, 1993), a large body of research has shown that source credibility matters (Briñol & Petty, 2009; Chaiken & Maheswaran, 1994; Maier et al., 2017; Pornpitakpan, 2004; Sternthal et al., 1978). A significant factor contributing to source credibility is similarity between the source and message receiver (Chaiken & Maheswaran, 1994; Metzger et al., 2003), particularly attitudinal (Simons et al., 1970) and ideological similarity (Marks et al., 2019).

Indeed, when readers attend to source cues, source credibility affects evaluations of online news stories (Go et al., 2014; Greer, 2003; Sterrett et al., 2019; Sundar et al., 2007) and in some cases, sources affect the believability of misinformation (Amazeen & Krishna, 2020; Walter & Tukachinsky, 2020). In general, individuals are more likely to trust claims made by ideologically congruent news sources (Gallup, 2018) and discount news from politically incongruent ones (van der Linden, Panagopoulos, et al., 2020). Furthermore, polarizing sources can boost or detract from the persuasiveness of misinformation, depending on whether or not people support the attributed source (Swire, Berinsky, et al., 2017; Swire, Ecker, et al., 2017).

For debunking, organizational sources seem more effective than individuals (van der Meer & Jin, 2020; Vraga & Bode, 2017), but only when information recipients actively assess source credibility (van Boekel et al., 2017). Indeed, source credibility may matter little when individuals do not pay attention to the source (Albarracín et al., 2017; Sparks & Rapp, 2011), and the continued influence of misinformation may persist even when corrections come from highly credible sources (Ecker & Antonio, 2020). For prebunking, evidence suggests that inoculation interventions are more effective when they involve high-credibility sources (An, 2003). Yet sources may not affect accuracy perceptions of obvious fake news (Hameleers, 2020), political misinformation (Dias et al., 2020; Jakesch et al., 2019), or fake images (Shen et al., 2019), potentially because these circumstances reduce news receivers’ attention to the purported sources. Overall, relatively little is known about how people evaluate the sources of political and non-political fake news.

Inattention versus Motivated Cognition

At present, there are two dominant explanations for what drives susceptibility to and sharing of fake news. The motivated reflection account proposes that reasoning can increase bias. Identity-protective cognition occurs when people with better reasoning skills use this ability to come up with reasons to defend their ideological commitments (Kahan et al., 2007). This account is based on findings that those who have the highest levels of education (Drummond & Fischhoff, 2017), cognitive reflection (Kahan, 2013), numerical ability (Kahan et al., 2017), or political knowledge (Taber et al., 2009) tend to show more partisan bias on controversial issues.

The inattention account, on the other hand, suggests that people want to be accurate but are often not thinking about accuracy (Pennycook & Rand, 2019, 2020). This account is supported by research finding that deliberative reasoning styles (or cognitive reflection) are associated with better discernment between true and false news (Pennycook & Rand, 2019). Additionally, encouraging people to pause, deliberate, or think about accuracy before rating headlines (Bago et al., 2020; Fazio, 2020; Pennycook et al., 2020) can lead to more accurate identification of false news for both politically congruent and politically incongruent headlines (Pennycook & Rand, 2019).

However, both theoretical accounts suffer from several shortcomings. First, it is difficult to disentangle whether partisan bias results from motivated reasoning or selective exposure to different (factual) beliefs (Druckman & McGrath, 2019; Tappin et al., 2020). For instance, although ideology and education might interact in a way that enhances motivated reasoning in correlational data, exposure to facts can neutralize this tendency (van der Linden et al., 2018). Paying people to produce more accurate responses to politically contentious facts also leads to less polarized responses (Berinsky, 2018; Bullock et al., 2013; Bullock & Lenz, 2019; Jakesch et al., 2019; Prior et al., 2015; see also Tucker, 2020). On the other hand, priming partisan identity-based motivations leads to increased motivated reasoning (Bayes et al., 2020; Prior et al., 2015).

Similarly, a recent re-analysis of Pennycook and Rand (2019) found that while cognitive reflection was indeed associated with better truth discernment, it was not associated with less partisan bias (Batailler et al.,). Other work has found large effects of partisan bias on judgements of truth (see also Tucker, 2020; van Bavel & Pereira, 2018). One study found that animosity toward the opposing party was the strongest psychological predictor of sharing fake news (Osmundsen et al., 2020). Additionally, when Americans were asked for top-of-mind associations with the term “fake news,” they most commonly answered with news media organizations associated with the opposing party (e.g., Republicans will say “CNN,” and Democrats will say “Fox News”; van der Linden, Panagopoulos, et al., 2020). It is therefore clear that future research would benefit from explicating how interventions target both motivational and cognitive accounts of misinformation susceptibility.

Psychometrically Validated Measurement Instruments

To date, no psychometrically validated scale exists that measures misinformation susceptibility or people’s ability to discern fake from real news. Although related scales exist, such as the Bullshit Receptivity scale (BSR; Pennycook et al., 2015) or the conspiracy mentality scales (Brotherton et al., 2013; Bruder et al., 2013; Swami et al., 2010), these are only proxies. To measure the efficacy of fake news interventions, researchers often collect (e.g., Cook et al., 2017; Guess et al., 2020; Pennycook et al., 2020; Swire, Berinsky, et al., 2017; van der Linden et al., 2017) or create (e.g., Roozenbeek, Maertens, et al., 2020; Roozenbeek & van der Linden, 2019b) news headlines and let participants rate the reliability or accuracy of these headlines on binary (e.g., true vs. false) or Likert (e.g., reliability 1-7) scales, resulting in an index assumed to depict how skilled people are at detecting misinformation. These indices are often of limited psychometric quality, and can suffer from varying reliability and specific item-set effects (Roozenbeek, Maertens, et al., 2020).
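To make the headline-rating procedure more concrete, the following is a minimal Python sketch of two quantities such studies might compute. It assumes reliability ratings on a 1–7 Likert scale and defines a discernment index as the mean rating of real headlines minus the mean rating of fake ones; this is one common choice, not the only one used in the literature. It also computes Cronbach's alpha, a standard internal-consistency estimate, to illustrate what "varying reliability" of such an index refers to. The function names and data are hypothetical.

```python
from statistics import mean, pvariance


def discernment(ratings, is_real):
    """Hypothetical index: mean rating of real minus mean rating of fake headlines.

    ratings: one participant's ratings (e.g., reliability on a 1-7 scale)
    is_real: parallel list of booleans, True if the headline is real news
    A positive score means real news was rated as more reliable than fake news.
    """
    real = [r for r, ok in zip(ratings, is_real) if ok]
    fake = [r for r, ok in zip(ratings, is_real) if not ok]
    return mean(real) - mean(fake)


def cronbach_alpha(scores):
    """Cronbach's alpha for a participants x items score matrix (list of rows).

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    Assumes at least two items and non-zero variance in total scores.
    """
    k = len(scores[0])  # number of items (headlines)
    item_vars = [pvariance([row[i] for row in scores]) for i in range(k)]
    total_var = pvariance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical participant rating two real and two fake headlines
print(discernment([6, 5, 2, 3], [True, True, False, False]))  # 3.0

# Hypothetical item scores (1 = headline judged correctly) for four participants
print(cronbach_alpha([[1, 1, 1], [1, 1, 0], [0, 0, 0], [1, 0, 0]]))  # 0.75
```

Because alpha depends on which headlines make up the item set, two studies using different headlines can report quite different reliabilities for a nominally identical index, which is one way item-set effects show up in practice.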

Recently, more attention has been given to the correct detection of both factual and false news, with some studies finding that people improve on one dimension while not changing on the other (Guess et al., 2020; Pennycook et al., 2020; Roozenbeek, Maertens, et al., 2020). This raises questions about the role of general scepticism, and about what constitutes a “good” outcome of misinformation interventions in a post-truth era (Lewandowsky et al., 2017). Relatedly, most methods fail to distinguish between veracity discernment and response bias (Batailler et al.,). In addition, stimuli are often selected from a small pool of news items, which limits their representativeness and thus the external validity of the findings.
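The distinction between veracity discernment and response bias can be illustrated with signal detection theory, one standard framework for binary true/false judgements. The sketch below treats real headlines as the "signal" and a "real" judgement as a "yes" response, then computes sensitivity d′ (discernment) and criterion c (response bias, where positive values indicate a tendency to call headlines fake, i.e., general scepticism). Adding 0.5 to each cell (a log-linear correction) is an assumed convention that keeps z-scores finite when a rate would otherwise be 0 or 1; the function name and counts are hypothetical.

```python
from statistics import NormalDist


def sdt_scores(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, c) from counts of binary real/fake judgements.

    hits: real headlines judged real          misses: real headlines judged fake
    false_alarms: fake headlines judged real  correct_rejections: fake judged fake
    """
    # Log-linear correction (assumed): avoids rates of exactly 0 or 1
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    d_prime = z(hit_rate) - z(fa_rate)  # discernment: separation of real vs fake
    c = -0.5 * (z(hit_rate) + z(fa_rate))  # bias: > 0 means leaning toward "fake"
    return d_prime, c


# Two hypothetical participants, each classifying 13 of 20 headlines correctly
credulous = sdt_scores(hits=9, misses=1, false_alarms=6, correct_rejections=4)
sceptical = sdt_scores(hits=4, misses=6, false_alarms=1, correct_rejections=9)
print(credulous, sceptical)
```

In this example both participants have the same accuracy and the same d′ but opposite signs of c. An intervention that only made people more sceptical (raising c) could therefore look like improved fake news detection while leaving discernment unchanged.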

A validated psychometric test that provides a general score, as well as reliable subscores for false and factual news detection (Roozenbeek, Maertens, et al., 2020), is therefore required. Future research will need to harness modern psychometrics to develop a new generation of scales based on a large and representative pool of news headlines. An example is the new Misinformation Susceptibility Test (MIST, see Maertens, Götz, et al., 2020).

Better measurement instruments, combined with an informed debate on desired outcomes, should occupy a central role in the fake news intervention debate.

Implications for Policy

A number of governments and organizations have begun implementing prebunking and debunking strategies as part of their efforts to limit the spread of false information. For example, the Foreign, Commonwealth and Development Office and the Cabinet Office in the United Kingdom and the Department of Homeland Security in the United States have collaborated with researchers and practitioners to develop evidence-based tools to counter misinformation using inoculation theory and prebunking games that have been scaled across millions of people (Lewsey, 2020; Roozenbeek & van der Linden, 2019b, 2020). Twitter has also placed inoculation messages on users’ news feeds during the 2020 United States presidential election to counter the spread of political misinformation (Ingram, 2020).

With respect to debunking, Facebook collaborates with third-party fact-checking agencies that flag misleading posts and issue corrections under these posts (Bode & Vraga, 2015). Similarly, Twitter uses algorithms to label dubious Tweets as misleading, disputed, or unverified (Roth & Pickles, 2020). The United Nations has launched “Verified”, a platform that builds a global base of volunteers who help debunk misinformation and spread fact-checked content (United Nations Department of Global Communications, 2020).

Despite these examples, the full potential of applying insights from psychology to tackle the spread of misinformation remains largely untapped (Lewandowsky, Smillie, et al., 2020; Lorenz-Spreen et al., 2020). Moreover, although individual-level approaches hold promise for policy, they also face limitations, including the uncertain long-term effectiveness of many interventions and limited ability to reach sub-populations most susceptible to misinformation (Nyhan, 2020; Swire, Berinsky, et al., 2017). Hence, interventions targeting consumers could be complemented with top-down approaches, such as targeting the sources of misinformation themselves, discouraging political elites from spreading misinformation through reputational sanctions (Nyhan & Reifler, 2015), or limiting the reach of posts published by sources that were flagged as dubious (Allcott et al., 2019).

Conclusion

We have illustrated the progress that psychological science has made in understanding how to counter fake news, and have laid out some of the complexities to take into account when designing and testing interventions aimed at countering misinformation. We offer some promising evidence as to how policy-makers and social media companies can help counter the spread of misinformation online, and what factors to pay attention to when doing so.

See footnotes and references at source.

Originally published by The Spanish Journal of Psychology 24 (04.12.2021) under the terms of a Creative Commons Attribution-Noncommercial-Sharealike 4.0 International license.