On October 29, 1969, an experiment at UCLA sparked a communication revolution, the implications of which are still unfolding nearly five decades later.

By Dr. Giovanni Navarria

Associate, Sydney Democracy Network

School of Social and Political Sciences (SSPS)

University of Sydney

The Spark

Introduction

In the late hours of October 29, 1969, an apparently insignificant experiment carried out in a lab at the University of California, Los Angeles (UCLA) would spark a revolution, the implications of which are still unfolding nearly five decades later.

It was a rather unusual revolution. Firstly, it was peaceful and harmless. Unlike the three “days of rage” demonstrations organised by the Weatherman group earlier that month in Chicago, that evening in Los Angeles saw no violence, let alone any sign of rage; no one was arrested, the streets remained quiet and all windows were left intact. The police were never even aware of what was going on at UCLA.

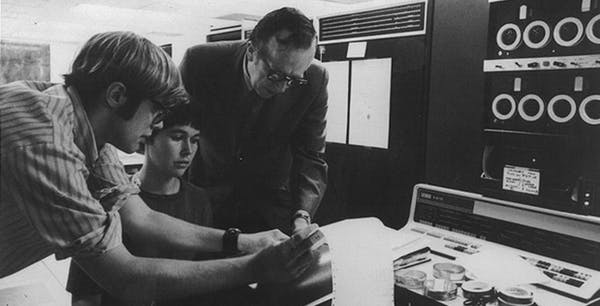

The experiment had nothing to do with politics. The two unlikely rebels were not the ringleaders of a radical political organisation, but rather two university researchers: Leonard Kleinrock, a Professor of Computer Science, and Charley Kline, one of his post-graduate students.

Far from sharpening knives and devising complicated plots to overthrow the world’s social order, the two spent the evening rather quietly, shut inside their lab, focusing all of their energy on what appeared to be a complex, yet merely technical problem: how to establish a communication link between two computers hundreds of miles apart, with one located at UCLA and the other at the Stanford Research Institute (SRI).

That two computers can “talk” to each other and exchange information is precisely the kind of magic we are liable to take for granted in today’s world of technological marvels. Our precious smartphones receive and send data all the time. These processes are often so automatic that we remain (happily) oblivious to them. But in 1969, “communication” between machines was still very much an unsolved puzzle, at least until the fateful night of October 29.

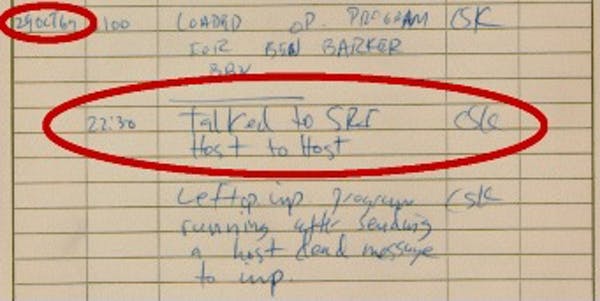

For most of the evening, success eluded the researchers. Only around 10:30pm, after several frustrating attempts, was Kline’s computer finally able to ‘talk’ to its counterpart at SRI. It was, however, a rather stuttered beginning.

Kline was only able to send the “l” and the “o” of the command ‘login’ before the system crashed and had to be rebooted. The communication link between the two computers was successfully established only later that night, but it still seems more than fitting that a friendly “Lo”, a common abbreviation of “Hello”, was the first historic message ever sent over the ARPANET, the experimental computer network built at the end of the 1960s to allow researchers from UCLA, SRI, the University of California, Santa Barbara (UCSB) and the University of Utah to work together and share resources.

The message marked an important milestone, and though its load was trivial in terms of mere bytes, its significance was immeasurable. It carried with it the seeds of a new age that would soon revolutionise the way in which not only machines, but also people, communicate with each other. With their stuttered “hello”, Kleinrock and Kline accidentally gave birth to a whole new world of possibility. Their experiment represented the first exploration into a brand new communication galaxy, the shape and scope of which were to be determined by each new communication link added to it.

Thank You, Sputnik

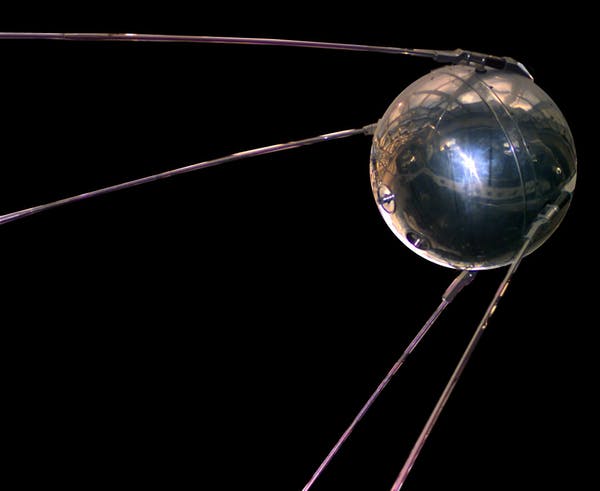

The seeds of the ARPANET experiment, however, were sown more than a decade earlier, on October 4, 1957, the very same day the space race began at the Baikonur Cosmodrome in Kazakhstan with the launch of the Soviet satellite Sputnik.

While only a handful of people were aware of the experiment taking place at UCLA, the Baikonur launch didn’t go unnoticed. Press agencies around the world recognised the historic moment and went into a frenzy. The Sputnik, only 58 centimetres in diameter and coming in at just over 80 kilograms, was the first artificial object to float into space, beyond the limits of our earth-bound lives.

The launch was also very significant politically. In the midst of the Cold War, the Sputnik was both a scientific slap in the face for Americans and a new threat facing the West. It was “the smoking gun” that left no doubt that the Soviets were on the road to colonising space. From all the way up there they would soon be able to spy on their enemies without interruption and (the worst of possible nightmares) stealthily drop nuclear bombs on American soil.

It was now clear that, contrary to popular belief, the Russians were no longer behind the Americans in terms of technology. Indeed, it was looking to be rather the opposite. As one of Senator Lyndon Johnson’s aides, George E. Reedy, put it perfectly in November 1957: “It took [the Russians] four years to catch up to our atomic bomb and nine months to catch up to our hydrogen bomb. Now we are trying to catch up to their satellite”.

The Sputnik’s wakeup call sent the US Administration into panic and produced two important consequences: one direct, clear from the beginning, and one unintended, which took several years to materialise.

The first consequence was political: in jump-starting the space race, the Sputnik opened a new front in the Cold War between the Soviet Union and the United States. To catch up with the Russians, President Dwight Eisenhower assigned the coordination of all Defence Research and Development (R&D) programs to the newly established Advanced Research Projects Agency (ARPA). However, ARPA’s involvement with the space race didn’t last long. Despite a budget exceeding $2 billion and some initial success with Explorer 1 and Vanguard 1, the first two American satellites sent into space, the US Government decided to set up a new agency, the National Aeronautics and Space Administration (NASA), to maximise its space effort and cope more efficiently with the political pressure spawned by the Sputnik’s feat.

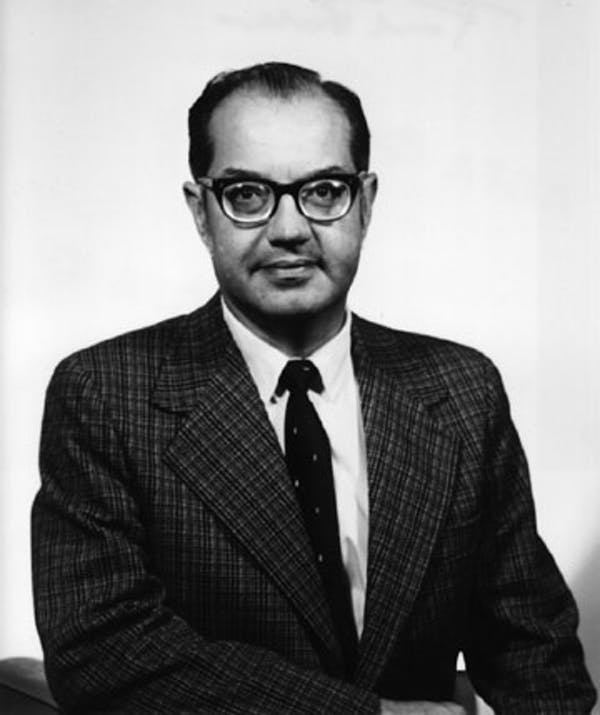

In the summer of ’58, NASA was born. A civil federal agency with the stated mission to ‘plan, direct, and conduct aeronautical and space activities’, it effectively stripped ARPA of all its space and rocket projects. To avoid falling into oblivion, ARPA had to quickly reinvent itself and find new goals and new sectors for its projects. With a reduced but still considerable budget, ARPA found the solution to its problems in the brand new sector of pure research (Computer Science) and the visionary leadership of Joseph Carl Robnett Licklider. The long-term effect of ARPA’s new path was the second (and unintended) consequence of the Sputnik: a new galaxy of communication, first called ARPANET and later, the Internet.

Man–Computer Symbiosis

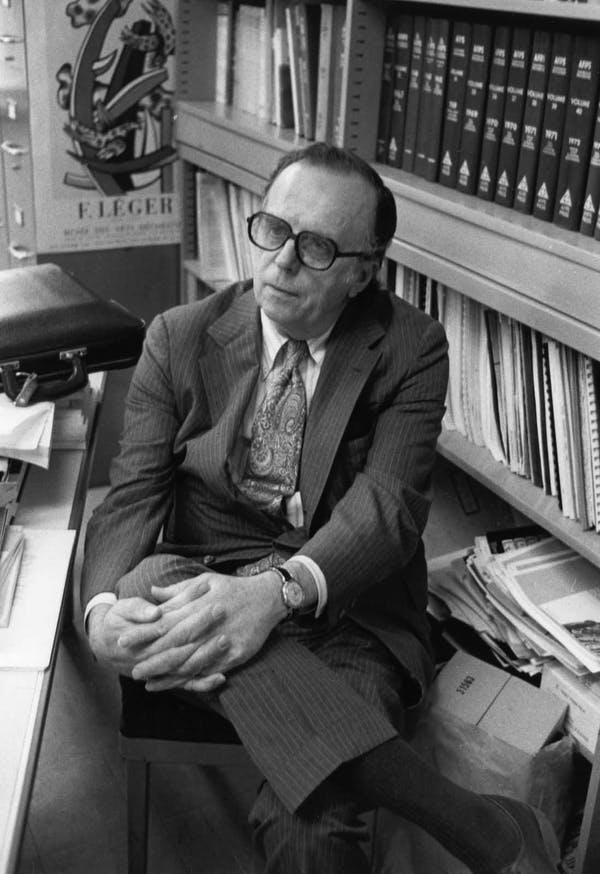

Licklider, by all accounts a brilliant scientist, strongly believed the future would be shaped by computers that were linked into a network. Computer networks, he argued, would become critical for expanding the potential of human thinking beyond all known limits. Thinking, Licklider reasoned, is often burdened with unnecessary tasks that limit our capacity to really be creative. His argument was informed by the results of an experiment he had conducted in the same year that Sputnik reached space.

Entirely focused on his own working routine, the experiment proved that about 85 percent of his “thinking time” was devoted to activities that were not intellectual, but rather purely clerical or mechanical. Much more time, Licklider found out, “went into finding or obtaining information than into digesting it”. If science could find a suitable, more reliable, and faster substitute for the human brain for those clerical activities, Licklider theorised, this would result in an unparalleled enhancement of the quality and depth of our thinking process. If machines could take care of “clerical” activities, humans would have more time and energy to dedicate to “thinking”, “imagining”, creativity and interactivity.

Licklider’s ideas went beyond his era’s traditional conception of computers as big calculators. He envisioned a much more interactive and complex environment in which computers played a role in the natural extension of humanity.

In his seminal 1960 paper Man–Computer Symbiosis, Licklider wrote that, in the near future, “human brains and computing machines will be coupled together very tightly”. The resulting symbiosis, he postulated, will “think as no human brain has ever thought and process data in a way not approached by the information-handling machines we know today”. By the early 1960s it was clear to Licklider that computers were destined to become an integral part of human life. He was thinking of what he later called, with a certain emphasis, “the intergalactic network”, the perfect symbiosis between computers and humans. The ultimate goal of this symbiosis was to improve significantly the quality of people’s lives.

Time Sharing

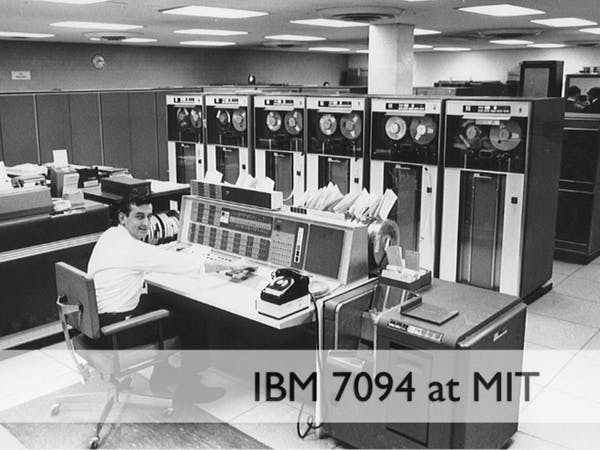

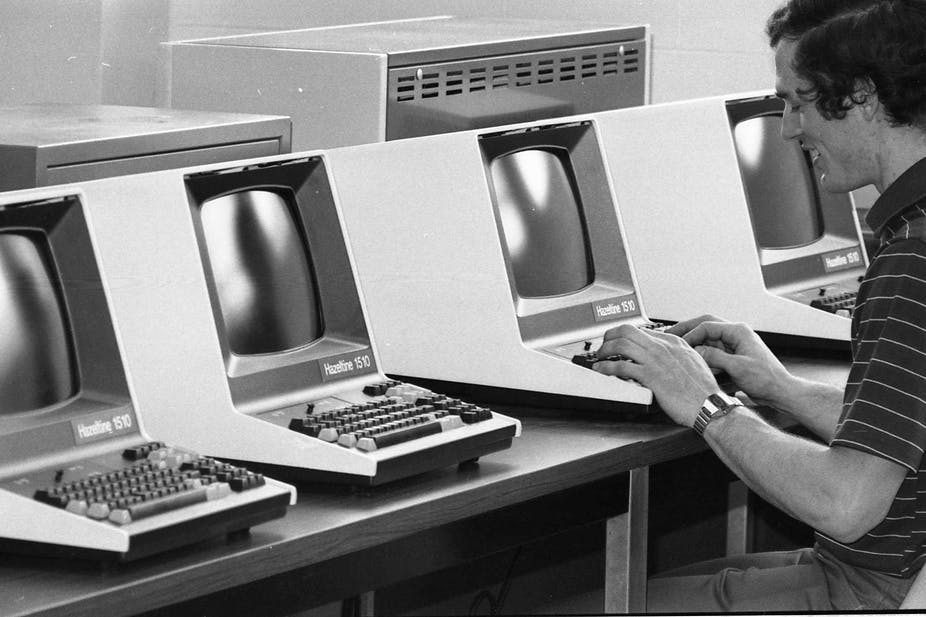

Licklider lived in an age when computers were nothing like the ones we use today. They were gigantic machines with exorbitant price tags (ranging from $500,000 to several million dollars).

When he arrived at ARPA on October 1, 1962, Licklider quickly realised that to overcome the unsustainable costs of ARPA-funded computer research centres, the centres had to be forced to buy time-sharing computers.

Time-sharing systems were designed to allow multiple users to connect to a powerful mainframe computer simultaneously, and to interact with it via a console that ran their applications by sharing processor time. Before time-sharing systems were adopted, computers, even the most expensive ones, were bound to do jobs serially: one at a time. This meant that computers were often idle as they waited for their users’ input or for the result of a computation.

Time-sharing systems, then, guaranteed the most effective use of a computer’s processing power.
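The idea of sharing processor time can be illustrated with a minimal sketch. It assumes a simple round-robin policy, which is a common textbook scheduler and not necessarily how any particular 1960s system worked; the function name `round_robin`, the user names and the time quantum are all hypothetical.

```python
from collections import deque


def round_robin(jobs, quantum):
    """Interleave jobs by giving each user at most `quantum` units per turn.

    `jobs` maps a user name to the processor time that user's job needs.
    Returns the order of (user, units_used) slices handed out by the machine.
    This is an illustrative policy, not a reconstruction of a real system.
    """
    queue = deque(jobs.items())
    timeline = []
    while queue:
        user, remaining = queue.popleft()
        used = min(quantum, remaining)
        timeline.append((user, used))
        if remaining > used:
            # Unfinished jobs rejoin the back of the queue and wait their turn.
            queue.append((user, remaining - used))
    return timeline


if __name__ == "__main__":
    for user, used in round_robin({"ann": 3, "bob": 1, "cy": 2}, quantum=1):
        print(user, used)
```

In a serial system the second and third users would wait until the first job finished; here every user gets a slice of the processor early on, which is what makes the machine feel interactive to each of them.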

If the first step was to force universities to use their funds to buy time-sharing systems, the next step was to allow access to off-site resources via other computers. In other words, to make those resources available via a network.

The computers we use today, including our small but incredibly powerful smartphones, run simultaneous applications all the time. While I am writing this piece, I can play music, receive email, run a diagnostic on my hard-drive, search a database, and much more. In the pre-Internet world, in the era of expensive mainframe computers, running each task would require a dedicated machine.

Moreover, despite their formidable size and price tag, mainframe computers were only capable of performing a limited number of computational tasks, which were usually tailored to the needs of whoever owned or rented them. If an experiment required a variety of tasks, it would require the use of more than one computer. However, given the prohibitive costs of the hardware, most research centres could not afford more than one machine. So the solution to the problem had to be found elsewhere: resource-sharing via a computer network. But building such a network was by no means a simple task.

During the previous decade, the lack of homogeneity in the language of computer programming had created a Babel of multiple systems and debug procedures that slowed the development of computer science. For Licklider, it was clear that the man-computer symbiosis he had envisioned could only materialise after the different systems learned to speak the same language, and after they were integrated into a super-network.

The initial push for time-sharing was instrumental in breeding a new culture among computer scientists. “Networking”, centred around the need for common standards to facilitate communication between different systems, became indispensable for time-sharing to be effective. Though initially confined to the elitist realm of computer science, in the long term (and with the spread of the Internet), networking has become the norm in the organising processes of many human activities that we take for granted today.

The Network Begins to Take Shape

Introduction

In 1962, ARPA’s Command and Control Research Division became the Information Processing Techniques Office (IPTO), and the IPTO, first under J. C. R. Licklider and then under Ivan Sutherland, became a key ally to the development of computer science.

The main function of the IPTO was to select, fund and coordinate US-based research projects that focused on advanced computer and network technologies. The IPTO had an estimated annual budget of $19 million, with individual grants ranging from $500 thousand to $3 million. Following the path traced by Licklider, it was at the IPTO and under the leadership of a young prodigy named Larry Roberts that the Internet began to take shape.

Until the end of the 1960s, running tasks on computers remotely meant transferring data along the telephone line. This system was essentially flawed. The analogue circuits of the telephone network could not guarantee reliability, the connection remained open once activated, and transmission was too slow to be considered efficient.

During these early years, it was not uncommon for whole sets of information and input to be lost in the journey from a terminal to the remotely located mainframe computer, so that the whole procedure (not a simple one) had to be restarted and the information re-sent. The procedure was burdensome by any measure: it was highly ineffective, costly (the line remained in use for a long period of time while computers waited for inputs) and time consuming.

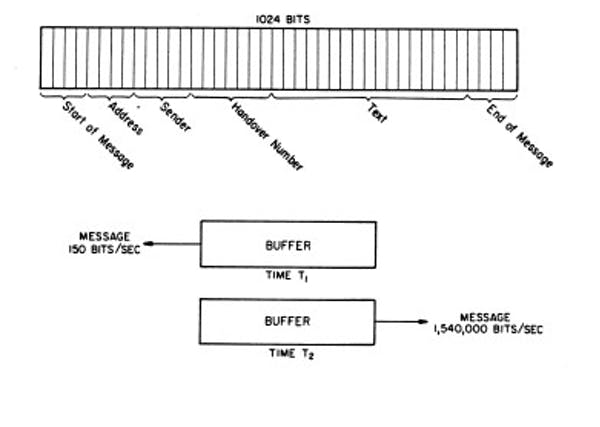

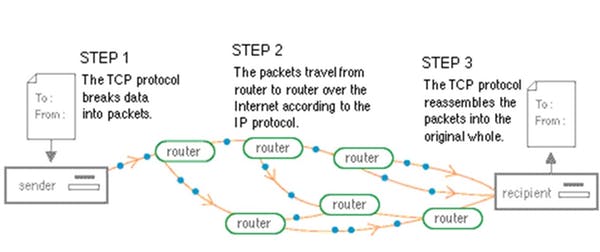

The solution to the problem was called packet-switching, a simple and efficient method to rapidly store-and-forward standard units of data (1024 bits) across a computer network. Each packet is handled as if it were a hot potato, rapidly passed from one point of the network to the next, until it reaches its intended recipient.

Like a letter sent through the postal system, each packet contains information about the sender and its destination, and also carries a sequence number that allows the end-receiver to reassemble the message in its original form.
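The description above can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not the ARPANET's actual packet format: the names `Packet`, `packetize` and `reassemble` are hypothetical, the whole 1,024-bit unit is treated as payload (128 bytes), and the space a real header would occupy is ignored.

```python
from dataclasses import dataclass

PACKET_BITS = 1024                 # standard unit of data mentioned in the text
PAYLOAD_BYTES = PACKET_BITS // 8   # assumption: the whole unit carries payload


@dataclass(frozen=True)
class Packet:
    sender: str        # who sent the message
    destination: str   # where it is going
    seq: int           # position of this piece in the original message
    payload: bytes     # the piece itself


def packetize(message: bytes, sender: str, destination: str) -> list:
    """Cut a message into fixed-size packets, each labelled like an envelope."""
    return [
        Packet(sender, destination, seq, message[offset:offset + PAYLOAD_BYTES])
        for seq, offset in enumerate(range(0, len(message), PAYLOAD_BYTES))
    ]


def reassemble(packets: list) -> bytes:
    """Rebuild the message at the receiving end, whatever the arrival order."""
    ordered = sorted(packets, key=lambda packet: packet.seq)
    return b"".join(packet.payload for packet in ordered)
```

Because every packet carries its own sequence number, the pieces may travel by different routes and arrive shuffled; sorting on `seq` at the destination is enough to recover the original message.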

The theory behind the system was elaborated independently yet simultaneously by three researchers: Leonard Kleinrock, then at the Massachusetts Institute of Technology (MIT), Donald Davies at the National Physical Laboratory (NPL) in the UK, and Paul Baran at RAND Corporation in California. Though Davies’ own word “packet” was eventually accepted as the most appropriate term to refer to the new theory, it was Baran’s work on distributed networks that was later adopted as the blue-print of the ARPANET.

Distributed Communication Networks

RAND (Research and Development) Corporation, founded in 1946 and based in Santa Monica, California, is to this day a non-profit institution that provides research and analysis in a wide range of fields with the aim of helping the development of public policies and improving decision-making processes. During the Cold War era, RAND researchers produced possible war scenarios (like the hypothetical aftermath of a nuclear attack by the Russians on American soil) for the US government. Among other things, RAND’s researchers attempted to predict the number of casualties, the degree of reliability of the communication system and the possible danger of a black-out in the chain of command if a nuclear conflict suddenly broke out.

Paul Baran was one of the key researchers at RAND. In 1964 he published a paper titled On Distributed Communications in which he outlined a communication system resilient enough to survive a nuclear attack. Though it was impossible to build a system of communication that could guarantee the endurance of all single points, Baran posited that it was reasonable to imagine a system which would force the enemy to destroy “n of n stations”. So “if n is made sufficiently large”, Baran wrote, “it can be shown that highly survivable system structures can be built even in the thermonuclear era”.

Baran was the first to postulate that communication networks can only be built around two core structures: “centralised (or star) and distributed (or grid or mesh)”. From this he derived three possible types of networks: A) centralised, B) decentralised and C) distributed. Of the three types, the distributed was found to be far more reliable in the event of a military strike.

A and B represented types of systems where the “destruction of a single central node destroys communication between the end stations”. By contrast, the distributed network (C) was different. In theory, one could remove or destroy one of its parts without causing great harm to the economy or function of the whole network. When a part of a distributed network is no longer functioning, the task performed by that part of the network can easily be moved to a different section.
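The contrast between a centralised and a distributed layout can be checked with a small sketch. The code below is only an illustration: the helper names (`star`, `grid`, `still_connected`) and the network sizes are hypothetical, and a regular grid stands in for Baran's mesh, which was not necessarily this regular.

```python
from collections import deque


def star(n):
    """Centralised network: node 0 is the hub, every other node links only to it."""
    adjacency = {0: set(range(1, n))}
    for node in range(1, n):
        adjacency[node] = {0}
    return adjacency


def grid(rows, cols):
    """Distributed network: each node links to its horizontal and vertical neighbours."""
    adjacency = {}
    for r in range(rows):
        for c in range(cols):
            neighbours = set()
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    neighbours.add((rr, cc))
            adjacency[(r, c)] = neighbours
    return adjacency


def still_connected(adjacency, destroyed):
    """Return True if every surviving node can still reach every other one."""
    alive = [node for node in adjacency if node not in destroyed]
    if not alive:
        return True
    seen = {alive[0]}
    queue = deque([alive[0]])
    while queue:  # breadth-first search over the surviving nodes
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour not in destroyed and neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return len(seen) == len(alive)


if __name__ == "__main__":
    print(still_connected(star(6), {0}))            # hub destroyed
    print(still_connected(grid(3, 3), {(1, 1)}))    # central grid node destroyed
```

Destroying the hub of the star isolates every remaining station, whereas in this small grid any single node can be removed and the survivors can still reach one another by another route.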

Unfortunately, Baran’s ideal network was ahead of its time. Redundancy – the number of nodes attached to each node – is a key element to increasing the strength of any distributed network. Baran’s required level of redundancy (at least three or four nodes attached to each node) can only be properly sustained in a fully developed digital environment, which in the 1960s was not yet available. Baran was surrounded by analogue technology; we, by contrast, live now in a rapidly expanding digital age.

Thanks, but Not Interested

Nevertheless, Baran’s speedy store-and-forward distributed network was highly efficient and required very little storage at node level; the entire system had an estimated cost of $60 million to support 400 switching nodes and in turn service 100,000 users. And though RAND believed in the project, it failed to find the partners with whom to build it.

First the Air Force and then AT&T turned down RAND’s proposal in 1965. AT&T argued that such a network was neither feasible, nor a better option than its own existing telephone network. But according to Baran, the telephone company simply believed that ‘it can’t possibly work. And, if it did, damned if we are going to set up any competitor to ourselves.’

If AT&T had accepted the proposal, the Internet could have been a commercial enterprise from the start, and may well have ended up completely different to the one that we use today.

The Department of Defence (DoD) also got involved, but failed to seize the moment – and with it the chance of turning Baran’s project, from its early stages, into a military network. After examining RAND’s proposal in 1965, the DoD, for reasons of political power struggle with the Air Force, decided to put the project under the supervision of the Defence Communication Agency (DCA). This was a strategic mistake. The Agency was the least desirable manager for the project. Not only did it lack any technical competence in digital technology, it also had a bad reputation as the parking lot for employees that had been rejected by the other government agencies. As Baran put it:

If you were to talk about digital operation [with someone from the DCA] they would probably think it had something to do with using your fingers to press buttons.

There was, however, some truth in considering Baran’s network model impracticable. It was, at least, a decade ahead of its time. Some of its components didn’t even exist yet. Baran had, for example, imagined a number of mini computers to be used as routers, but this technology simply wasn’t available in 1965. Hence, Baran’s vision became economical only when, a few years later, the mini-computer was invented.

ARPANET Begins to Take Shape

It was only in 1969, at UCLA (not that far from Santa Monica where Baran worked), that the first cornerstone of the Internet was finally laid, and the ARPANET, the first computer network, was built.

Paradoxically, what had begun a decade earlier as a military answer to a Cold War threat (the Sputnik) turned into a completely different kind of network.

In the initial plan for the ARPANET presented at the ACM Symposium in Gatlinburg in October 1967, Larry Roberts, the project leader, listed a series of reasons to build the network. None of them were concerned with military issues. Instead, they looked towards sharing data load between computers, providing an electronic mail service, sharing data and programmes and finally, towards providing a service to log in and use computers remotely.

In the original ARPANET Program Plan, published a year later (3 June 1968), Roberts wrote:

The objective of this program is twofold: (1) to develop techniques and obtain experience on interconnecting computers in such a way that a very broad class of interactions are possible, and (2) to improve and increase computer research productivity through resource sharing.

During the first half of the Sixties, Licklider had pushed for IPTO grant recipients to use their funds to buy time-sharing computers. The move aimed to help optimise the use of resources and reduce the overall costs of ARPA’s project. This was, however, not enough. To be truly effective, those computers had to be linked together in a network; and that, in turn, implied the computers had to be able to communicate with each other.

In 1965 this communication problem became startlingly clear to Robert Taylor, a former NASA System Engineer, who, initially hired as Ivan Sutherland’s deputy, became IPTO’s director when Sutherland left in 1966. Taylor quickly realised that the fast growing community of research centres sponsored by his office was very complex but poorly organised.

In stark contrast with the rising sense of community shared by individual researchers throughout the country (a community fostered mainly by participating at academic conferences), each centre was barely interacting with the others. In fact, resource sharing was limited to one mainframe computer at a time. This lack of interaction was partially due to the lack of both a streamlined procedure and a network infrastructure to access remotely located resources.

At the time, if researchers wanted to use the resources (applications and data) stored in a computer at their UCLA campus, they had to log in through a terminal. This procedure became more cumbersome when the researchers needed to access another resource, for instance a graphic application, which was not loaded on their mainframe computer, but was instead only available at another computer, in another location, say at Stanford. In that case, the researchers were required to log into the computer at Stanford from a different terminal with a different password and a different user name, using a different programming language. There was no possible mode of communication between the different mainframe computers. In essence, these computers were like aliens speaking different idioms to each other.

Taylor saw this issue as a waste of funds and resources, and his direct experience of the problem was a daily source of frustration. Due to the incompatibility of hardware and software, in order to use the three terminals available in his office at the Pentagon, Taylor was required to remember three different login procedures and use three different programming languages and operating systems every morning.

For the IPTO, and in turn for ARPA, the lack of communication and compatibility between the hardware and software of their many funded research centres was causing a widening black hole in the annual budget: as each contractor had different computing needs (i.e. it needed different resources in terms of hardware and software), the IPTO had to handle several (sometimes similar) requests each year to meet those needs.

Like Licklider, Taylor understood that, in most cases, the costs could be optimised and greatly reduced by creating an easily accessible network of resource-sharing mainframe computers.

In such a network, each computer would have to be different, with different specialisations, applications and hardware. The next step, then, was to create that network.

In 1966, after a brief and quite informal meeting with Charles Herzfeld, then Director of ARPA, Taylor was given an initial budget of $1 million to start building an experimental network called ARPANET. The network would link some of the IPTO-funded computing sites. The decision took no more than a few minutes. Taylor explained:

I had no proposals for the ARPANET. I just decided that we were going to build a network that would connect these interactive communities into a larger community in such a way that a user of one community could connect to a distant community as though that user were on his local system. First I went to Herzfeld and said, this is what I want to do, and why. That was literally a 15-minute conversation.

Then, Herzfeld asked: “How much money do you need to get it off the ground?” And Taylor, without thinking too much of it, said “a million dollars or so, just to get it organised”. Herzfeld’s answer was instantaneous: “You’ve got it”.

The ARPANET Comes to Life

Introduction

After Charles Herzfeld, the Director of ARPA, gave his blessing to commence the first stage of the ARPANET project, Robert Taylor began circulating the plan to some of ARPA’s contractors. Just like Baran, Taylor soon found that good ideas are not always easy to sell. The initial reaction to the ARPANET was one of suspicion: “Most of the people I talked to,” said Taylor, “were not initially enamoured with the idea”. Many feared that a network would be “an opportunity for someone else to come in and use their [computing] cycles”. However, the project finally started coming to life when Lawrence G. Roberts, a very talented researcher from the Lincoln Lab at MIT, was chosen as the ARPANET project manager.

Only 29 at the time, Roberts had already worked on another groundbreaking experiment in computer networks. In 1966, with his colleague Thomas Marrill, Roberts used the Western Union Telephone Line to link two super computers across the country (the Q–32 at the System Development Corporation in Santa Monica, California, and the TX–2 at the Lincoln Lab, in Lexington, Massachusetts) in a time-shared environment.

The experiment was a “testing environment” that aimed to verify whether it was possible to build a computer network on a continental scale “without enforcing standardisation”. Since the network was built “to overcome the problems of computer incompatibility”, it would have been ill-advised to enforce a standard protocol “as a prerequisite of membership in the network”. Instead, Roberts and Marrill argued, for a network to work efficiently, it required maximum flexibility.

If a protocol which is good enough to be put forward as a standard is designed, adherence to this standard should be encouraged but not required.

The idea of flexibility is an important building block of the Internet we use today. It allows the development of different networks, with different standards, all of which are able to connect with each other. This variety of networks and the lack of enforced standardisation, in time, have become a very important asset of the Internet. It has also made it a much more difficult environment to control as a whole.

From a purely technical perspective, Marrill and Roberts’ experiment proved that it was possible to connect different computers and share resources between them. However, both researchers faced the same problem Baran had foreseen for his distributed adaptive network: “dial communications based on the telephone network were too slow and unreliable to be operationally useful”.

As such, one of the important lessons learned from the experiment with the Q–32 and the TX–2 was that, in order to improve the speed and reliability of the network, the programmers had to use packet-switching.

Larry Roberts was an advocate for “knowledge sharing”, believing that “sharing” everyone’s work was the only way to advance knowledge. Inspired by Licklider’s ideas of the Intergalactic Network, Roberts was fascinated by the untapped potential of a wide communication network linking symbiotically people, machines and resources. As Roberts explained:

At that point [in 1962], we had all of these people doing different things everywhere, and they were all not sharing their research very well. So you could not use anything anybody else did. Everything I did was useless to the rest of the world, because it was on the TX-2 and it was a unique machine. So unless the software was transportable, the only thing it was useful for was writing technical papers, which was a very slow process. So, what I concluded was that we had to do something about communications, and that really, the idea of the galactic network that Lick[lider] talked about, probably more than anybody, was something that we had to start seriously thinking about.

As soon as he was appointed the ARPANET Program Manager, Roberts began sketching out plans for the network. His starting point was the lesson he learnt while working with Marrill on linking the Q–32 and the TX–2 computers. He drew several sketches of the possible topology and, after discussing the network specifications with many fellow researchers – who included, among others, Licklider, Kleinrock, Donald Davies, Davies’ representative at the Gatlinburg Symposium, Roger Scantlebury, and Baran in Santa Monica – Roberts came up with two indispensable features for the network: a computer interface protocol that all 16 research groups participating in the project could accept, and the capacity to support the estimated 500,000 packets of traffic per day between the 35 computers that were connected to the 16 hosts.

Who Should Build It?

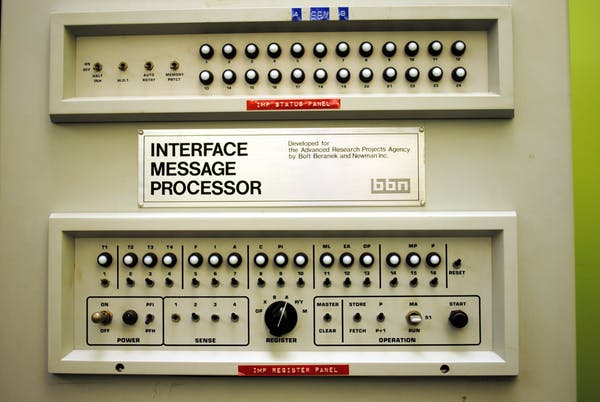

As originally envisioned by Baran, the ARPANET was set to be a fully distributed network that made use of routers (small computers called Interface Message Processors – IMPs) at every node to speed up communication between computers. Each router had four critical tasks to accomplish:

- to receive packets of data from both the computers connected to it,

- break the message blocks into 128-byte packets, or 1,024 bits (in his study of packet-switching, Donald Davies theorised that “the length of a packet can be any multiple of 128 bits up to 1,024 bits”; the 128-bit unit length guaranteed some measure of “flexibility to the size of packets” without ever overloading the computer while handling them),

- add the destination and the sender address, and

- use a “dynamically updated routing table”, or an updated map of the routes available in the network (“considering both line availability and queue lengths”) to send the packet over whichever free line was currently the fastest route toward the destination.

As in Baran’s distributed network, at each node, Roberts wrote, the “minicomputer would acknowledge it and repeat the routing process independently”.
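The last of the four tasks, choosing an outgoing line from a dynamically updated routing table, can be sketched as follows. This is a hedged illustration rather than the IMP's real algorithm: the `Line` record, the `choose_line` function and the cost formula (estimated hops plus current queue length) are assumptions made for the example, chosen only because the text says the choice considered “both line availability and queue lengths”.

```python
from dataclasses import dataclass, field


@dataclass
class Line:
    neighbour: str                 # node at the other end of this line
    available: bool                # False if the line is currently down
    queue_length: int              # packets already waiting on this line
    hops_to: dict = field(default_factory=dict)  # destination -> estimated hops


def choose_line(lines, destination):
    """Pick the available line with the lowest estimated cost to `destination`.

    Cost here is hops + queue length, a hypothetical stand-in for the
    delay estimate a real router would keep in its routing table.
    Returns None when no working line leads to the destination.
    """
    candidates = [
        line for line in lines
        if line.available and destination in line.hops_to
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda line: line.hops_to[destination] + line.queue_length)


if __name__ == "__main__":
    table = [
        Line("SRI", available=False, queue_length=0, hops_to={"UTAH": 1}),
        Line("UCSB", available=True, queue_length=5, hops_to={"UTAH": 2}),
        Line("RAND", available=True, queue_length=1, hops_to={"UTAH": 3}),
    ]
    print(choose_line(table, "UTAH").neighbour)
```

Because each node repeats this decision independently with its own up-to-date table, a packet can flow around a failed or congested line without any central controller being involved.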

On July 29, 1968, ARPA issued a “request for quotation” (RFQ) to several companies in the computer sector to build the network switches (the IMPs).

Some major companies, including IBM and Control Data Corporation (CDC), declined the offer, on the grounds that packet-switching would never work. Others responded with detailed proposals. At the end of the day, the two best contenders for the contract were Bolt, Beranek and Newman (BBN) and Raytheon. The former was a small company, the latter a major Defence contractor. Normally, Raytheon would have been the favourite to win this kind of contract. Yet, contrary to the Department of Defence logic, but in line with ARPA’s unconventional approach, in January 1969, BBN was awarded the $1 million contract to build four IMPs for a four-site network by the end of that year. The success of BBN’s bid was a clear sign of the anti-bureaucratic nature around which the Internet was originally built.

However small, BBN was, in the words of one of its most famous researchers Robert Khan, “the cognac of the research business, very distilled”. It was a sort of haven where people like Licklider worked, where dozens of graduate students and faculty members from either Harvard or MIT, free from any university duties but research, were encouraged “to do interesting things and move on to the next interesting thing”. They weren’t required to try “to capitalise on them once they had been developed.”

Moreover, unlike the other bidders, Frank Heart (Head of the Computer System Division at BBN) and his team submitted a 200-page detailed proposalwith flowcharts, calculations and tables explaining how the IMP network would work.

Certainly the well-crafted proposal was an important element in BBN’s winning bid, but it was not the only reason. In the decision taken by ARPA’s committee to award the contract to the team led by Frank Heart, two factors were decisive. First was Roberts’ personal acquaintance with many of the researchers at BBN. Some of them like Heart and Kahn had already informally participated in the early development of the ARPANET project. Licklider, who regularly collaborated with Roberts, also had strong ties with BBN. The second factor was Roberts’ dislike for bureaucracy: as per the style of major defence contractors, Raytheon’s proposal was very complex and presupposed an even more complex and multi-layered team in order to manage it.

In Roberts’ experience, Raytheon’s weighty bureaucratic structure would not only have made things more complicated, it would ultimately have slowed down the whole project as well. Dealing with companies like Raytheon meant wasting half of your time trying to find the right person to talk to about any problems the project encountered. On the other hand, the BBN team was small and simple: Frank Heart was the head of the team and the whole communication process between ARPA and BBN only required a telephone call between Roberts and Heart.

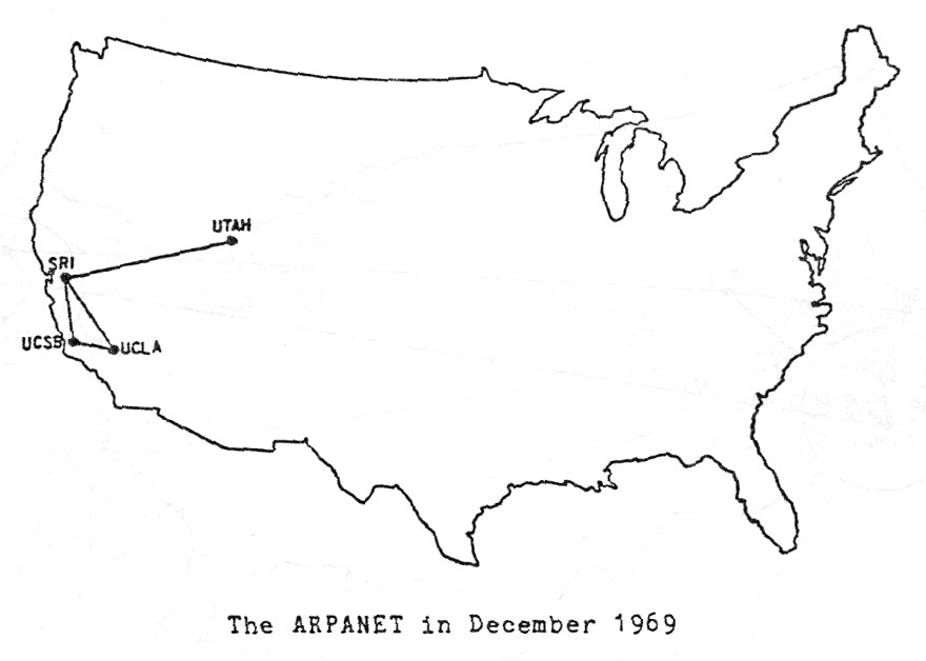

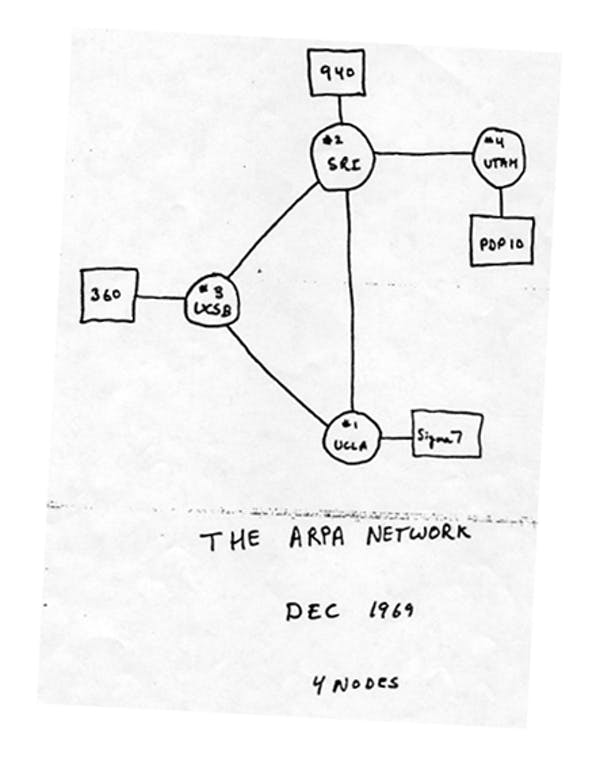

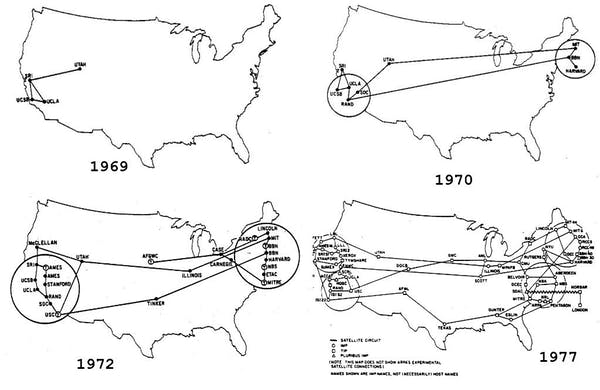

The first four nodes of the ARPANET were the University of California Los Angeles (UCLA), the University of California Santa Barbara (UCSB), the University of Utah, and the Stanford Research Institute (SRI).

The first computer was installed at UCLA on September 1, 1969. UCLA was chosen because of Leonard Kleinrock and his ARPA-funded Network Measurement Center. The centre focused on analysing and measuring the network traffic, as well as producing relevant statistics to be used in the implementation of the network. Stanford was selected because of Doug Engelbart’s Augmentation of Human Intellect project.

Engelbart was already an eminent figure in computer science (he is most renowned for the invention of the mouse). His work on developing a series of tools (a database, a text-preparation system, and a user-friendly interface messaging system) was vital for Roberts to make the network more user-friendly. The first connection between UCLA and SRI was the product of Kleinrock and Kline’s experiment on October 29, 1969. The first message ever sent over the ARPANET was transmitted at 2230 hours, between the UCLA SDS Sigma 7 Host computer and the SRI SDS 940 Host computer.

From ARPANET to the Internet

Introduction

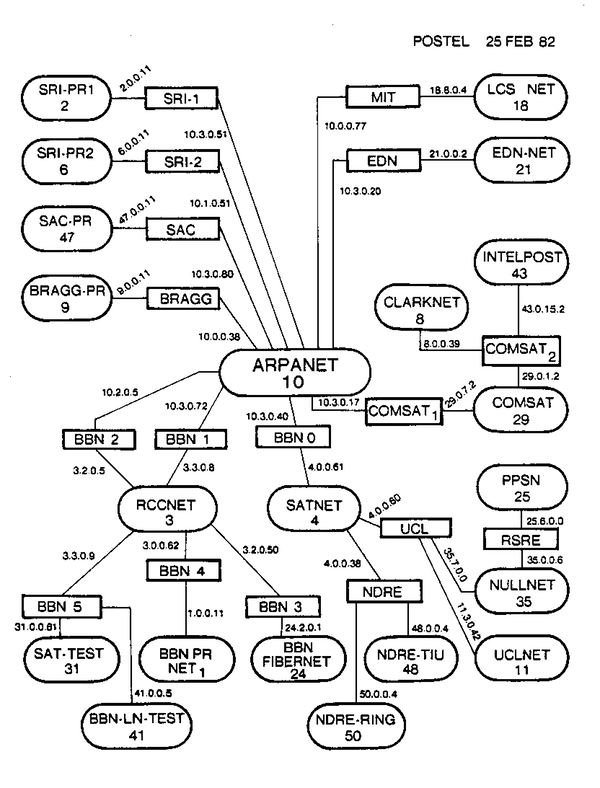

In today’s hyper-tech world, almost any new device (even a fridge, let alone phones or computers) is born “smart” enough to connect easily with the global network. This is possible because at the core of this worldwide infrastructure we call the Internet is a set of shared communication standards, procedures and formats called protocols. However, when the first four nodes of the ARPANET became fully functional in the early 1970s, things were a bit more complicated. Exchanging data between different computers (let alone different computer networks) was not as easy as it is today. Finally, there was a reliable packet-switching network to connect to, but no universal language to communicate through it. Each host, in fact, had a set of specific protocols, and to log in, users were required to know the host’s own ‘language’. Using ARPANET was like being given a telephone and unlimited credit only to find out that the only users we can call don’t speak our language.

Predictably, the new network was scarcely used at the beginning. Excluding, in fact, the small circle of people directly involved in the project, a much larger crowd of potential users (e.g. graduate students, researchers and the many more who might have benefited from it) seemed wholly uninterested in using the ARPANET. The only thing that kept the network going in those early months was people changing jobs. In fact, when researchers relocated to one of the other network sites – for instance from UCLA to Stanford – then, and only then, the usage of those sites’ resources increased. The reason was quite simple: the providential migrants brought the gift of knowledge with them. They knew the procedures in use in the other site, and hence they knew how to “talk” with the host computer in their old department.

To find a solution to this frustrating problem, Roberts and his staff established a specific group of researchers – most of them still graduate students – to develop the host-to-host software. The group was initially called the Network Working Group (NWG) and was led by a UCLA graduate student, Steve Crocker. Later, in 1972, the group changed its name to International Network Working Group (INWG) and the leadership passed from Crocker to Vint Cerf. In the words of Crocker:

The Network Working Group consists of interested people from existing or potential ARPA network sites. Membership is not closed. The [NWG] is concerned with the HOST software, the strategies for using the network, and initial experience with the network.

The NWG was a special body (the first of its kind) concerned not only with monitoring and questioning the network’s technical aspects, but, more broadly, with every aspect of it, even the moral or philosophical ones. Thanks to Crocker’s imaginative leadership, the discussion in the group was facilitated by a highly original, and rather democratic, method, still in use five decades later. To communicate with the whole group, all a member needed to do was to send a simple Request for Comment (RFC). To avoid stepping on someone’s toes, the notes were to be considered “unofficial” and with “no status”. Membership of the group was not closed and “notes may be produced at any site by anybody”. The minimum length of an RFC was, and still is, “one sentence”.

The openness of the RFC process helped encourage participation among the members of a very heterogeneous group of people, ranging from graduate students to professors and program managers. Following a “spirit of unrestrained participation in working group meetings”, the RFC method proved to be a critical asset for the people involved in the project. It helped them reflect openly about the aims and goals of the network, within and beyond its technical infrastructure.

The significance of both the RFC method and the NWG goes far beyond the critical part they played in setting up the standards for today’s Internet. Both helped shape and strengthen a new revolutionary culture that in the name of knowledge and problem-solving tends to disregard power hierarchies as nuisances, while highlighting networking as the only path to find the best solution to a problem, any problem. Within this kind of environment, it is not one’s particular vision or idea that counts, but the welfare of the environment itself: that is, the network.

This particular culture informs the whole communication galaxy we today call the Internet; in fact, it is one of its defining elements. The offspring of the marriage between the RFC and the NWG are called web-logs, web forums, email lists, and of course social media, while Internet-working is now a key aspect in many processes of human interaction, ranging from solving technical issues to finding solutions to more complex social or political matters.

Widening the Network

The NWG, however, needed almost two years to write the software, but eventually, by 1970, the ARPANET had its first host-to-host protocol, the Network Control Protocol (NCP). By December 1970 the original four-node network had expanded to 10 nodes and 19 host computers. Four months later, the ARPANET had grown to 15 nodes and 23 hosts.

By this time, despite delivering “data packets” for more than a year, the ARPANET showed almost no sign of “useful interactions that were taking place on [it]”. The hosts were plugged in, but they all lacked the right configuration (or knowledge) to properly use the network. To make “the world take notice of packet switching”, Roberts and his colleagues decided to give a public demonstration of the ARPANET and its potential at the International Conference on Computer Communication (ICCC) held in Washington, D.C., in October 1972.

The demonstration was a success: “[i]t really marked a major change in the attitude towards the reality of packet switching” said Robert Kahn. It involved – among other things – demonstrating how tools for network measurement worked, displaying the IMPs network traffic, editing text at a distance, file transfers, and remote logins.

It was just a remarkable panoply of online services, all in that one room with about fifty different terminals.

The demonstration fully succeeded in showing how packet-switching worked to people who were not involved in the original project. It inspired others to follow the example set by Larry Roberts’ network. International nodes located in England and Norway were added in 1973; and in the following years, other packet-switching networks, independent from ARPANET, appeared worldwide. This passage from a relatively small experimental network to one (in principle) encompassing the whole world confronted the ARPANET’s designers with a new challenge: how to make different networks, which used different technologies and approaches, able to communicate with each other?

The concept of “Internetting”, or “open-architecture networking”, first introduced in 1972, illustrates the critical need for the network to expand beyond its restricted circle of host computers.

The existing Network Control Protocol (NCP) didn’t meet the requirements. It had been designed to manage host-to-host communication within the same network. To build a truly open, reliable and dynamic network of networks, what was needed was a new general protocol. It took several years, but eventually, by 1978, Robert Kahn and Vint Cerf (two of the BBN guys) succeeded in designing it. They called it Transmission Control Protocol/Internet Protocol (TCP/IP). As Cerf explained:

‘the job of the TCP is merely to take a stream of messages produced by one HOST and reproduce the stream at a foreign receiving HOST without change.’

To give an example: when a user sends or retrieves information across the Internet – e.g., accesses Web pages or uploads files to a server – the TCP on the sender’s machine breaks the message into packets and sends them out. The IP is instead the part of the protocol concerned with “the addressing and forwarding” of those individual packets. The IP is a critical part of our daily Internet experience: without it, it would be practically impossible to locate the information we are looking for among the billions of machines connected to the network today.

On the receiving end, the TCP helps reassemble all the packets into the original messages, checking errors and sequence order. Thanks to TCP/IP, the exchange of data packets between different and distant networks was finally possible.

Cerf and Kahn’s new protocol opened up new possible avenues of collaboration between the ARPANET and all the other networks around the world that had been inspired by ARPA’s work. The foundations for a worldwide network were laid, and the doors were wide open for anyone to join in.
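The split-and-reassemble idea can be made concrete with a toy Python sketch. It is a deliberate simplification, not real TCP: the names `Segment`, `split_stream` and `reassemble` are hypothetical, packets are numbered one by one rather than by byte position, CRC-32 stands in for TCP's own checksum, and acknowledgements and retransmission are left out entirely.

```python
import zlib
from typing import NamedTuple


class Segment(NamedTuple):
    sequence: int  # position of this piece in the original stream
    checksum: int  # CRC-32 of the payload (a stand-in for TCP's checksum)
    payload: bytes


def split_stream(data: bytes, size: int = 128) -> list[Segment]:
    """Sender side: break the stream into numbered, checksummed pieces."""
    segments = []
    for sequence, offset in enumerate(range(0, len(data), size)):
        chunk = data[offset:offset + size]
        segments.append(Segment(sequence, zlib.crc32(chunk), chunk))
    return segments


def reassemble(segments: list[Segment]) -> bytes:
    """Receiver side: check for errors, restore the order, rebuild the stream."""
    for segment in segments:
        if zlib.crc32(segment.payload) != segment.checksum:
            raise ValueError(f"segment {segment.sequence} is corrupted")
    ordered = sorted(segments, key=lambda segment: segment.sequence)
    if [segment.sequence for segment in ordered] != list(range(len(ordered))):
        raise ValueError("a segment is missing or duplicated")
    return b"".join(segment.payload for segment in ordered)
```

Because every piece carries its own sequence number, the receiver can rebuild the original message even when the network delivers the pieces in a different order from the one in which they were sent.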

Expansion of the ARPANET

In the years that followed, the ARPANET consolidated and expanded, all while remaining virtually unknown to the general public. On July 1, 1975, the network was placed under the direct control of the Defense Communications Agency (DCA). By then there were already 57 nodes in the network. The larger it grew, the more difficult it was to determine who was actually using it. There were, in fact, no tools to check the network users’ activity. The DCA began to worry. The mix of fast growth rate and lack of control could potentially become a serious issue for national security. The DCA, trying to control the situation, issued a series of warnings against any unauthorised access and use of the network. In his last newsletter before retiring to civilian life, the DCA’s appointed ARPANET Network Manager, Major Joseph Haughney, wrote:

Only military personnel or ARPANET sponsor-validated persons working on government contracts or grants may use the ARPANET. […] Files should not be [exchanged] by anyone unless they are files that have been announced as ARPANET-public or unless permission has been obtained from the owner. Public files on the ARPANET are not to be considered public files outside of the ARPANET, and should not be transferred, or their contents given or sold to the general public without permission of DCA or the ARPANET sponsors.

However, these warnings were largely ignored as most of the networked nodes had, as Haughney put it, “weak or nonexistent host access to the control mechanism”. By the early 1980s, the network was essentially an open access area for both authorised and non-authorised users. This situation was made worse by the drastic drop in computer prices. With the potential number of machines capable of connecting to the network increasing constantly, the concern over its vulnerability rose to new heights.

The 1983 hit film, War Games, about a young computer whiz who manages to connect to the supercomputer at NORAD and almost starts World War III from his bedroom, perfectly captured the mood of the military towards the network. By the end of that year, the Department of Defense, “in its biggest step to date against illegal penetration of computers” – as The New York Times reported – “split a global computer network into separate parts for military and civilian users, thereby limiting access by university-based researchers, trespassers and possibly spies”.

The ARPANET was effectively divided into two distinct networks: one still called ARPANET, mainly dedicated to research, and the other called MILNET, a military operational network, protected by strong security measures like encryption and restricted access control.

By the mid-1980s the network was widely used by researchers and developers. But it was also being picked up by a growing number of other communities and networks. The transition towards a privatised Internet took ten more years, and it was largely handled by the National Science Foundation (NSF). The NSF’s own network, NSFNET, had been using the ARPANET as its backbone since 1984, but by 1988 the NSF had already initiated the commercialisation and privatisation of the Internet by promoting the development of “private” and “long-haul networks”. The role of these private networks was to build new or maintain existing local/regional networks, while giving their users access to the whole Internet.

The ARPANET was officially decommissioned in 1990, whilst in 1995 the NSFNET was shut down and the Internet effectively privatised. By then, the network – no longer the private enclave of computer scientists or the military – had become the Internet, a new galaxy of communication ready to be fully explored and populated.

The Internet

During its early stages, between the 60s and 70s, the communication galaxy spawned by the ARPANET was not only mostly uncharted space, but, compared to today’s standards, also mainly empty. It continued as such well into the 90s, before the technology pioneered with the ARPANET project became the backbone of the Internet.

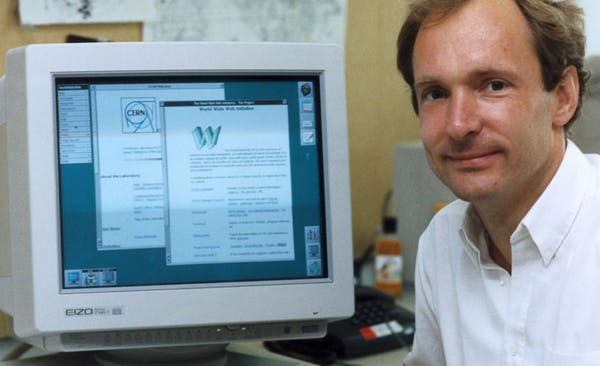

In 1992, during its first phase of popularisation, the global networks connected to the Internet exchanged about 100 Gigabytes (GB) of traffic per day. Since then, data traffic has grown exponentially along with the number of users and the network’s popularity. A decade later, thanks to Tim Berners-Lee’s World Wide Web (1989), there was an ever-increasing availability of cheap and powerful tools to navigate the galaxy, not to mention the explosion of social media from 2005 onward. And so, ‘per day’ became ‘per second’, and in 2014 global Internet traffic peaked at 16,000 GBps, with experts forecasting the number to quadruple before the decade is out.

Still, numbers can sometimes be deceptive, as well as frustratingly confusing for the non-expert reader. What hides beneath their dry technicality is a simple fact: the enduring impact of that first stuttered hello at UCLA on October 29, 1969 has dramatically transcended the apparent technical triviality of making two computers talk to each other. Nearly five decades after Kleinrock and Kline’s experiment in California, the Internet has arguably become a driving force in the daily routines of more than three billion people worldwide. For a growing number of users, a mere minute of life on the Internet is to be part, simultaneously, of an endless stream of shared experiences that include, among other things, watching over 165,000 hours of video, being exposed to 10 million adverts, playing nearly 32,000 hours of music and sending and receiving over 200 million emails.

Albeit at different levels of participation, the lives of almost half of the world population are increasingly shaped by this expanding communication galaxy.

We use the global network for almost everything. ‘I’m on the Internet’, ‘Check the Internet’, ‘It’s on the Internet’ and other similar stock phrases have become portmanteau for an increasing range of activities: from chatting with friends to looking for love; from going on shopping sprees to studying for a University degree; from playing a game to earning a living; from becoming a sinner to connecting with God; from robbing a stranger to stalking a former lover; the list is virtually endless.

But there is much more than this. The expansion of the Internet is deeply entangled with the sphere of politics. The more people embrace this new age of communicative abundance, the more it affects the way in which we exercise our political will in this world. Barack Obama’s victory in 2008, the Indignados in Spain in 2011, the Five Star Movement in Italy in 2013, Julian Assange’s Wikileaks and Edward Snowden’s revelations of the NSA’s secret system of surveillance are but a handful of examples that show how, in just the last decade, the Internet has changed the way in which we engage with politics and challenge power. The Snowden files, however, also highlight the other, much darker side of the story: the more we become networked, the more we become obliviously exploitable, searchable, and monitored.

Several decades after the journey began, we have yet to reach the full potential of the ‘Intergalactic Network’ imagined by Licklider in the early 1960s. However, the quasi-perfect symbiosis between humans and computers that we experience every day, albeit not without shadows, is arguably one of humanity’s greatest accomplishments.

Originally published by The Conversation, 10.29.2016, under the terms of a Creative Commons Attribution/No derivatives license.