By Zolon Farsane

Technology Analyst

What Is Really Being Asked?

There has been much speculation regarding what the FBI is asking Apple to do. Some say the FBI wants Apple to write a whole new operating system (OS) with a backdoor to the encryption, and others say the FBI wants a master key to current versions of the OS.

Public records reveal that the FBI is asking Apple to unlock the phone.

Specifically, they are asking Apple to disable the failed password attempt counter. This will allow the FBI to attempt to “brute force” the iPhone without the software deleting the phone’s content after too many attempts.
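To put the brute-force request in perspective, here is a minimal sketch of the arithmetic involved. The passcode lengths and the 0.08 seconds per attempt are illustrative assumptions, not figures taken from the public records, and the function names are hypothetical.

```python
def keyspace(digits: int) -> int:
    """Number of possible numeric passcodes of the given length."""
    return 10 ** digits


def worst_case_seconds(digits: int, seconds_per_attempt: float) -> float:
    """Time needed to try every numeric passcode once, with no lockout or wipe."""
    return keyspace(digits) * seconds_per_attempt


if __name__ == "__main__":
    # ASSUMPTION: 0.08 s per attempt is a placeholder, not a measured value.
    for digits in (4, 6):
        hours = worst_case_seconds(digits, 0.08) / 3600
        print(f"{digits}-digit passcode: {keyspace(digits):,} combinations, "
              f"worst case about {hours:.2f} hours")
```

With the attempt counter in place, none of this arithmetic matters, because the phone may erase itself long before the search finishes. That is why the request targets the counter rather than the encryption itself.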

Why Are They Asking?

This question begins with who owned the phone.

One of the shooters in the 2015 San Bernardino attack had an iPhone issued by San Bernardino County that the FBI suspects contains information about possible further attacks.

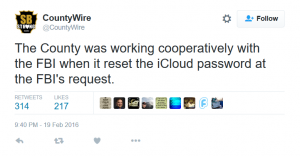

While the county was in control of the iPhone, they changed the password on it. According to their own Tweet, they did so at the request of the FBI. This indicates that the County actually did have full access to the phone at the time it was in their possession.

When this was done, the iPhone’s automatic backup to the Cloud was disabled. The user then has to re-enter the password set on the phone to reengage backup to the Cloud. This is a default behavior.

To make matters worse, it would appear the person at the county who reset the password does not remember the new password and possibly did not communicate that change to anyone else.

When an iPhone is tied to an Enterprise Account, for email as an example, the administrator of the profile can set the phone to delete all “personal” information, including Enterprise Information, when the passcode is entered incorrectly a number of times.

Where Is the Confusion?

The FBI, the county, and even Apple have all admitted part of the issue is that changing the password disabled the Cloud backup from updating.

This would imply they have a backup. While it may not be the most recent, it will contain something.

Yet the FBI still insists they need access to the iPhone.

The county says they changed the password at the request of the FBI. The issue with this appears in the fact that the number one rule in digital forensics is simple: CHANGE NOTHING.

Actually it is, “Rule 1. An examination should never be performed on the original media.” The reason for this is so that no one changes anything on the original media.

This implies that the FBI and the county broke their own procedures, causing an issue that required the FBI to make the request of Apple to disable the password counter.

This is not the first case of the FBI asking Apple and others to break into phones. Why then is this one suddenly looked at as precedent?

Can What the FBI Asked Be Done?

Short answer:

Yes.

Long answer:

Yes, but only with a lot of time and work. By the time the data is recovered it may no longer hold any value, and the effort will set a legal precedent for the FBI and other government entities to circumvent your privacy rights as they deem fit.

In order to properly retrieve the data from the phone, the device should be set so that the phone and data can no longer be changed and a bit-by-bit copy can be made to new media that can be gone through without any risk to the original. The exact duplicate, bit-by-bit copy, will include all data on the phone in its current state. This will allow the FBI to brute force the copy all they want without worry of losing the original.

This is something the FBI can already do, and currently does, with all forms of computer hardware that are confiscated through proper methods and current laws.
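The imaging step described above can be sketched in a few lines. This is a minimal illustration, assuming the source media is readable as an ordinary file; the function names `sha256_of` and `image_and_verify` are hypothetical, and real forensic work relies on write blockers and dedicated imaging tools rather than a script like this.

```python
import hashlib

CHUNK = 1024 * 1024  # read 1 MiB at a time so large media never sit fully in memory


def sha256_of(path):
    """Return the SHA-256 hex digest of the file at path."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(CHUNK), b""):
            digest.update(block)
    return digest.hexdigest()


def image_and_verify(source_path, image_path):
    """Copy source to image byte for byte.

    Returns (source_digest, matches), where matches is True only if the
    finished image hashes to the same value as the bytes read from the source.
    The source is opened read-only and is never written to.
    """
    digest = hashlib.sha256()
    with open(source_path, "rb") as src, open(image_path, "wb") as dst:
        for block in iter(lambda: src.read(CHUNK), b""):
            dst.write(block)
            digest.update(block)
    source_digest = digest.hexdigest()
    return source_digest, sha256_of(image_path) == source_digest
```

Recording the digest at the time of imaging is what lets an examiner show later that the working copy still matches the original, which is the point of never working on the original media.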

Points of Interest

On February 20th, San Bernardino County CAO David West responded to an email from Ars Technica journalist Cyrus Farivar with a statement from the FBI including the following:

“Through previous testing, we know that direct data extraction from an iOS device often provides more data than an iCloud backup contains,” the FBI wrote. “Even if the password had not been changed and Apple could have turned on the auto-backup and loaded it to the cloud, there might be information on the phone that would not be accessible without Apple’s assistance as required by the All Writs Act order, since the iCloud backup does not contain everything on an iPhone.”

This is true. The only things backed up are what is tagged by the Enterprise, the user, and what is tagged in the Cloud.

Yes, you can actually tag a full phone backup via the Cloud, on the Cloud. As the county is the Enterprise in this case, they also can do this.

As with any digital storage device, this would still be a “change” of the device, which is against what I pointed out as “Rule 1 of Digital Forensics”.

On February 22nd, Apple CEO Tim Cook ordered the release of a Q&A with their stance on the FBI’s request. In that is one key point:

“Law enforcement agents around the country have already said they have hundreds of iPhones they want Apple to unlock if the FBI wins this case.”

See the release: http://www.apple.com/customer-letter/answers/

This implies that not only has the FBI stated that what they are requesting has changed, but that Apple has actually been denying the requests.

Not only has the FBI request been changed, but it has also been muddied by the public as a whole.

Let’s look closer at Apple’s statement.

To seek authority ordering a company to do what Apple has been asked to do, the FBI needed a case with a high enough profile to end up in the public eye. They also needed a case that would have a strong attachment of fear. It is with fear that we most willingly give up our privacy.

The Patriot Act, with bipartisan support, is a prime example of this.

Because the general public, and our own Congress, do not have a full understanding of the technology involved, the core issue can take on the needed tone: “We need to protect the public by having the required tools.”

Implications

To be clear – yes, the terrorist act was horrific. The possibility of more of these acts is terrifying. Of course the FBI should take all possible actions necessary to stop future attacks. But there is a limit.

This is where things get conflicted for me. My day job actually plays on both sides of this particular fence – the privacy of the user, the enterprise, and even our own government as opposed to “the good of the public.”

If we allow our fears to be played against us so that we allow any other organization or person(s) to force access to our phone and digital life as a whole, then we should not be surprised when we discover an organization is already doing this.

The outcry and public backlash from the revelations that Edward Snowden released dealing with the NSA phone tapping was enormous. Even those who called Snowden a traitor, including myself, said that the NSA was overstepping its bounds. The NSA was invading our privacy on a grand scale. In that case, the NSA was following the perceived allowances given to them through the Patriot Act and other legislation that has been passed since 9/11.

A common response to a case like this, which has been heard often since the first few cases of digital privacy started hitting the news, is, “I have nothing to hide.”

The issue with such a response is that we all have something to hide. If you have nothing to hide, please email me a copy of your medical records, and pictures of everything in your wallet. I will just keep a copy of them on my personal website.

We have HIPAA (Health Insurance Portability and Accountability Act – includes Privacy), PCI DSS (Payment Card Industry Data Security Standard – includes Privacy), PII (Personally Identifiable Information) protections, and other legislation and standards just for these reasons.

The public not only expects, but demands, a level of protected privacy. We cannot give this up without thinking of the consequences of what we are allowing.

In this case, Apple is being supported by other companies that are in direct competition with them, companies that have vowed to knock Apple off the pedestal they currently occupy. That should make you think about the ethical nature of these actions, when corporations that are known for collecting data on you want to protect your privacy to the point of helping their competition.

Even the NSA, the same agency that was trampling our privacy in the first place when dealing with our phones, has stated that they don’t want this.

Can There Be a Meeting of the Minds?

A solution is possible.

Apple and other companies should create a way for digital forensics to be followed that provides for imaging the device so that a chain of evidence can be followed properly without destruction of said evidence.

This does not mean giving a backdoor to encryption or any other privacy methods. It only allows for a clone, and that clone should only be created with a proper warrant that can be contested in court by the owner(s) of said device(s) on a case-by-case basis.

The FBI and all other organizations should follow set digital forensics best practices. Those organizations that do not should be held accountable for it and not shift blame to the creators of the devices or software.

Then there is the issue of warrants. This case is a quandary in that the Enterprise (county) owns the device and local laws protect the user of the device, but the user is dead. I cannot speak to the legal aspects of that and will yield this to a follow-up article by one of our legal experts.

The Bait-and-Switch

This case is a “red herring”. The county had full access to the device and followed an FBI request that in turn made the device inaccessible, without following the necessary and recognized methods of digital forensics for handling evidence.

These methods, already in place and followed in other cases, show that the high-profile nature of this case is being abused in order for the FBI to get something even the NSA says the government does not need – access to all your data.
