Image from event-horizon / Creative Commons

Edited by Matthew A. McIntosh / 02.25.2018

Historian

Brewminate Editor-in-Chief

1 – Introduction to Sensation

1.1 – Introduction

Sensation involves the relay of information from sensory receptors to the brain and enables a person to experience the world around them.

1.1.1 – Overview

Sensation and perception are two separate processes that are very closely related. Sensation is input about the physical world obtained by our sensory receptors, and perception is the process by which the brain selects, organizes, and interprets these sensations. In other words, senses are the physiological basis of perception. Perception of the same sensations may vary from one person to another because each person’s brain interprets stimuli differently based on that individual’s learning, memory, emotions, and expectations.

1.1.2 – The Senses

fMRI and the senses: This fMRI chart shows some of the neural activation that takes place during sensation. The occipital lobe is activated during visual stimulation, for example.

There are five classical human senses: sight, hearing, taste, smell, and touch. Two other senses, kinesthesia and the vestibular senses, have become widely recognized by scientists. Kinesthesia is the perception of the positioning of the parts of the body, commonly known as “body awareness.” Vestibular senses detect gravity, linear acceleration (such as speeding up or slowing down on a straight road), and rotary acceleration (such as speeding up or slowing down around a curve). Both kinesthesia and the vestibular senses help us to balance.

Sensory information (such as taste, light, odor, pressure, vibration, heat, and pain) is perceived through the body’s sensory receptors. These sensory receptors include the eyes, ears, mouth, nose, hands, and feet (and the skin as a whole). Rod and cone receptors in the retina of the eye perceive light; cilia in the ear perceive sound; chemical receptors in the nasal cavities and mouth perceive smell and taste; and muscle spindles, as well as pressure, vibration, heat and pain receptors in the skin, perceive the many sensations of touch.

Specialized cells in the sensory receptors convert the incoming energy (e.g., light) into neural impulses. These neural impulses enter the cerebral cortex of the brain, which is made up of layers of neurons with many inputs. These layers of neurons in the cortex function like mini microprocessors, and it is their job to organize the sensations and interpret them in the process of perception.

1.1.3 – Motor Homunculus

Motor Homunculus: The motor homunculus is a theoretical visualization of the locations in the cortex that correspond to motor and sensory function in the body.

The “motor homunculus” is a theoretical physical representation of the human body within the brain. It is a neurological “map” of the anatomical divisions of the body. Within the primary motor cortex, motor neurons are arranged in an orderly manner, parallel to the structure of the physical body but inverted. The toes are represented at the top of the cerebral hemisphere, while the mouth is represented at the bottom of the hemisphere, closer to the part of the brain known as the lateral sulcus. These representations lie along a fold in the cortex called the central sulcus. The homunculus is split in half across the brain, with motor representation for each side of the body located on the opposite side of the brain.

The amount of cortex devoted to any given body region is proportional to how many nerves are in that region, not to the region’s physical size. Areas of the body with greater or more complex sensory or motor connections are represented as larger in the homunculus. Those with fewer or less complex connections are represented as smaller. The resulting image is that of a distorted human body with disproportionately huge hands, lips, and face (because those regions have huge numbers of nerve endings).

1.2 – Sensory Absolute Thresholds

The absolute threshold is the lowest intensity at which a stimulus can be detected.

1.2.1 – Absolute Thresholds

Light at the end of the tunnel: the absolute threshold for vision: In a dark space, an individual’s saving grace can be the minimum amount of light needed to stimulate the eye in the dark environment and alert the brain that it is seeing light.

A threshold is the minimum level at which a given event can occur. In neuroscience and psychophysics, there are several types of sensory threshold. The recognition threshold is the level at which a stimulus can not only be detected but also recognized; the differential threshold is the level at which a difference in a detected stimulus can be perceived; the terminal threshold is the level beyond which a stimulus is no longer detected. However, perhaps the most important sensory threshold is the absolute threshold, which is the smallest detectable level of a stimulus.

The absolute threshold is defined as the lowest intensity at which a stimulus can be detected. (Recently, signal detection theory has offered a more nuanced definition of absolute threshold: the lowest intensity at which a stimulus will be detected a certain percentage of the time, often 50%.) A classic example of absolute threshold is an odor test, in which a fragrance is released into an environment. The absolute threshold in that scenario would be the least amount of fragrance necessary for a subject to detect that there is an odor.
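The percentage-based definition can be made concrete with a small calculation. The sketch below is only an illustration: the function name `absolute_threshold`, the concentration units, and the detection rates are all hypothetical, and the straight-line interpolation between tested levels is a simplifying assumption rather than a standard psychophysical fitting method.

```python
def absolute_threshold(intensities, detection_rates, criterion=0.5):
    """Estimate the intensity that is detected `criterion` of the time.

    Interpolates linearly between the two tested intensities whose
    detection rates bracket the criterion. Assumes intensities are sorted
    in ascending order and detection rates never decrease.
    """
    points = list(zip(intensities, detection_rates))
    for (x0, p0), (x1, p1) in zip(points, points[1:]):
        if p0 <= criterion <= p1:
            if p1 == p0:
                return x0
            return x0 + (criterion - p0) * (x1 - x0) / (p1 - p0)
    raise ValueError("criterion is outside the measured detection rates")


# Hypothetical data: odor concentration (arbitrary units) and the
# fraction of trials on which a subject reported smelling something.
concentrations = [1, 2, 3, 4, 5]
hit_rates = [0.05, 0.20, 0.40, 0.80, 0.95]

threshold = absolute_threshold(concentrations, hit_rates)
print(f"Estimated absolute threshold: {threshold:.2f} units")
```

With these made-up numbers the 50% point falls between the third and fourth concentrations, which is where the estimate lands.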

Smell is not the only sense with absolute thresholds. Imagine you’re in a room and someone behind you slowly begins turning up the volume on the radio; the absolute threshold is the softest volume at which you would notice what they’re doing. Sound thresholds can be about more than volume; they can also be about frequency. For example, humans cannot hear dog whistles. This is because dog whistles sound at a frequency above the upper limit of human hearing.

Similarly, the minimum amount of light necessary to see something in the dark is the absolute threshold for vision. Every sense has an absolute threshold.

1.2.2 – Influencing the Absolute Threshold

There are several factors that can influence the level of absolute threshold, including adaptation to the stimulus and individual motivations and expectations.

1.2.3 – Sensory Adaptation

Sensory adaptation happens when our senses no longer perceive a stimulus because of our sensory receptors’ continuous contact with it. If you’ve ever entered a room that has a terrible odor, but after a few minutes realized that you barely noticed it anymore, then you have experienced sensory adaptation. Like thresholds, adaptation can occur with any sense, whether it’s forgetting that the radio is on while you work or not noticing that the water in the pool is cold after you’ve been swimming for a while.

1.2.4 – Cognitive Processes

Additionally, an individual’s motivations and expectations can also influence whether a stimulus will be detected at the absolute threshold. For example, when you are in a crowded room where a lot of conversations are taking place, you tend to focus your attention on the individual with whom you are speaking. Because you are focused on one stimulus, the absolute threshold (in this case, the minimum volume at which you can hear) is lower for that stimulus than it would have been otherwise.

Expectations can also affect the absolute threshold. If you are in a dark hallway searching for the tiny glow of a nightlight, your expectation of spotting it decreases the absolute threshold at which you will actually be able to see it.

1.3 – Sensory Difference Thresholds

The minimum amount of change in sensory stimulation needed to recognize that a change has occurred is known as the just-noticeable difference.

1.3.1 – Just-Noticeable Difference

The just-noticeable difference (JND), also known as the difference limen or differential threshold, is the smallest detectable difference between a starting and secondary level of sensory stimulus. In other words, it is the difference in the level of the stimulus needed for a person to recognize that a change has occurred.

1.3.2 – Influencing the Just-Noticeable Difference

Turning Up the Volume: The difference threshold is the amount of stimulus change needed to recognize that a change has occurred. If someone changes the volume of a speaker, the difference threshold is the amount it has to be changed in order for listeners to notice a difference.

The JND is usually a fixed proportion of the reference sensory level. For example, consider holding a five-pound weight (the reference level) and then having a one-pound weight added. This increase is significant in comparison to the reference level (a 20% increase in weight). However, if you hold a fifty-pound weight (the new reference level), you would not be likely to notice a difference if one pound is added, because the added weight is only a small fraction of the reference level (a 2% increase in weight).
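The arithmetic in the weight example can be written out as a short sketch. The function names and the 10% JND used here are assumptions chosen for illustration; the text does not give a measured JND for lifted weight.

```python
def weber_fraction(reference, added):
    """Return the change in stimulus as a fraction of the reference level."""
    return added / reference


def is_noticeable(reference, added, jnd_fraction):
    """True if the added amount meets or exceeds the JND for this reference."""
    return weber_fraction(reference, added) >= jnd_fraction


# ASSUMPTION: an illustrative JND of 10% of the reference weight,
# not a measured value.
ASSUMED_JND = 0.10

for reference_lb in (5, 50):
    fraction = weber_fraction(reference_lb, 1)
    noticed = is_noticeable(reference_lb, 1, ASSUMED_JND)
    print(f"{reference_lb} lb + 1 lb: {fraction:.0%} change, noticeable: {noticed}")
```

Under that assumed JND, the same one-pound addition clears the bar at five pounds and falls short at fifty, which is the point of the proportional rule.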

The absolute threshold is the minimum volume of the radio we would need in order to notice that it was turned on at all. However, determining the just-noticeable difference, the amount of change needed in order to notice that the radio has become louder, depends on how much the volume has changed in comparison to where it started. It’s possible to turn the volume up only slightly, making the difference in volume undetectable. This is similar to adding only one pound of weight when you’re holding 50 pounds.

1.4 – Sensory Adaptation

Sensory adaptation, also called neural adaptation, is the change in the responsiveness of a sensory system that is confronted with a constant stimulus. This change can be positive or negative, and does not necessarily lead to completely ignoring a stimulus.

One example of sensory adaptation is sustained touching. When you rest your hands on a table or put clothes on your body, at first the touch receptors will recognize that they are being activated and you will feel the sensation of touching an object. However, after sustained exposure, the sensory receptors will no longer activate as strongly and you will no longer be aware that you are touching something.

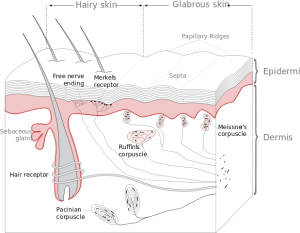

This pattern of adaptation is seen in Meissner’s corpuscles, touch receptors named for Georg Meissner. Meissner’s corpuscles are sensory receptors in the skin, concentrated in areas that are especially sensitive to light touch. These corpuscles respond rapidly when a stimulus is added. Then they quickly decrease activity, and eventually cease to react to the stimulus. When the stimulus is removed, the corpuscles regain their sensitivity. For example, the constant touch of clothes on our skin leads to our sensory adaptation to the sensations of wearing clothing. Notice that when you put an article of clothing on, after a brief period you no longer feel it; however, you continue to be able to feel other sensations through it. This is because the additional stimuli are new, and the body has not yet adapted to them.

In contrast, sensitization is an increase in behavioral responses following repeated applications of a particular stimulus. Unlike sensory adaptation, in which a large amount of stimulus is needed to incur any further responsive effects, in sensitization less and less stimulation is required to produce a large response. For example, if an animal hears a loud noise and experiences pain at the same time, it will startle more intensely the next time it hears a loud noise even if there is no pain.

There are many stimuli in life that we experience every day and gradually ignore or forget, including sounds, images, and smells. Sensory adaptation and sensitization are thought to form an integral component of human learning and personality.

2 – Sensory Processes

2.1 – Vision: The Visual System, the Eye, and Color Vision

2.1.1 – Overview

In the human visual system, the eye receives physical stimuli in the form of light and sends those stimuli as electrical signals to the brain, which interprets the signals as images.

The human visual system gives our bodies the ability to see our physical environment. The system requires communication between its major sensory organ (the eye) and the core of the central nervous system (the brain) to interpret external stimuli (light waves) as images. Humans are highly visual creatures compared to many other animals which rely more on smell or hearing, and over our evolutionary history we have developed an incredibly complex sight system.

2.1.2 – Sensory Organs

Vision depends mainly on one sensory organ—the eye. Eye constructions vary in complexity depending on the needs of the organism. The human eye is one of the most complicated structures on earth, and it requires many components to allow our advanced visual capabilities. The eye has three major layers:

- the sclera, which maintains, protects, and supports the shape of the eye and includes the cornea;

- the choroid, which provides oxygen and nourishment to the eye and includes the pupil, iris, and lens; and

- the retina, which allows us to piece images together and includes cones and rods.

2.1.3 – The Process of Sight

Anatomy of the human eye: A cross-section of the human eye with its component pieces labeled. Clockwise from left: Optic nerve, optic disc, sclera, choroid, retina, zonular fibers, posterior chamber, iris, pupil, cornea, aqueous humor, ciliary muscle, suspensory ligament, fovea, retinal blood vessels. In center: Vitreous humour, hyaloid canal, lens.

All vision is based on the perception of electromagnetic rays. These rays pass through the cornea in the form of light; the cornea focuses the rays as they enter the eye through the pupil, the black aperture at the front of the eye. The pupil acts as a gatekeeper, allowing as much or as little light to enter as is necessary to see an image properly. The pigmented area around the pupil is the iris. Along with supplying a person’s eye color, the iris is responsible for acting as the pupil’s stop, or sphincter. Two layers of iris muscles contract or dilate the pupil to change the amount of light that enters the eye. Behind the pupil is the lens, which is similar in shape and function to a camera lens. Together with the cornea, the lens focuses the image being seen onto the retina at the back of the eye. Visual reception occurs at the retina, where photoreceptor cells called cones and rods give an image color and shadow. The image is transduced into neural impulses and then transferred through the optic nerve to the rest of the brain for processing. The visual cortex in the brain interprets the image to extract form, meaning, memory, and context.

The left hemisphere of the brain controls the motor functions of the right half of the body, and vice versa; the same is true of vision. The left hemisphere of the brain processes visual images from the right-hand side of space, or the right visual field, and the right hemisphere processes visual images from the left-hand side of space, or the left visual field. The optic chiasm is a crossover of optic nerve fibers behind the eyes at the bottom of the brain. There, fibers from the half of each retina nearest the nose cross to the opposite hemisphere, while fibers from the outer half stay on the same side. This allows each hemisphere’s visual cortex to receive the same visual field from both eyes.

2.1.4 – Color Vision

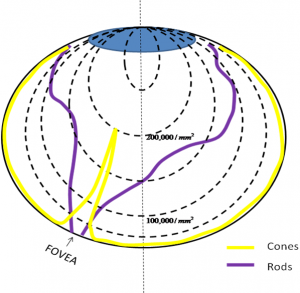

Cones and Rods: This density map shows the retina, which is made up of cones and rods. Cones perceive color and rods perceive shadow in images. In the fovea, which is responsible for sharp central vision, there is a huge density of cones but no rods.

Human beings are capable of highly complex vision that allows us to perceive colors and depth in intricate detail. Visual stimulus transduction happens in the retina. Photoreceptor cells found in this region have the specialized capability of phototransduction, or the ability to convert light into electrical signals. There are two types of these photoreceptor cells: rods, which are responsible for scotopic vision (night vision), and cones, which are responsible for photopic vision (daytime vision).

Generally speaking, cones are for color vision and rods are for shadows and light differences. The center of your retina has many more cones than rods, while the periphery has more rods than cones; for this reason, your peripheral vision is more sensitive than your direct vision in the darkness, but your peripheral vision is also in black and white.

Color vision is a critical component of human vision and plays an important role in both perception and communication. Color sensors are found within cones, which respond to relatively broad color bands in the three basic regions of red, green, and blue (RGB). Any colors in between these three are perceived as different linear combinations of RGB. The eye is much more sensitive to overall light and color intensity than changes in the color itself. Colors have three attributes: brightness, based on luminance and reflectivity; saturation, based on the amount of white present; and hue, based on color combinations. Sophisticated combinations of these receptor signals are transduced into chemical and electrical signals, which are sent to the brain for the dynamic process of color perception.
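The link between RGB combinations and the three attributes of hue, saturation, and brightness can be illustrated with Python's standard `colorsys` module. This is a loose analogy only: HSV is a computer-graphics color model, not a model of cone responses, and treating its "value" component as brightness is an assumption made for this sketch. The color names and the `describe` helper are likewise illustrative.

```python
import colorsys

# Colors expressed as (red, green, blue) weights between 0 and 1.
colors = {
    "red": (1.0, 0.0, 0.0),
    "yellow": (1.0, 1.0, 0.0),  # equal parts red and green
    "pink": (1.0, 0.5, 0.5),    # red with white mixed in
}


def describe(rgb):
    """Return hue in degrees, saturation, and brightness for an RGB triple."""
    hue, saturation, value = colorsys.rgb_to_hsv(*rgb)
    return round(hue * 360), saturation, value


for name, rgb in colors.items():
    hue_deg, saturation, brightness = describe(rgb)
    print(f"{name}: hue={hue_deg} deg, "
          f"saturation={saturation:.2f}, brightness={brightness:.2f}")
```

In this model, red and pink share a hue and differ only in saturation, which matches the description of saturation as depending on how much white is present.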

2.1.5 – Depth Perception

Depth perception refers to our ability to see the world in three dimensions. With this ability, we can interact with the physical world by accurately gauging the distance to a given object. While depth perception is often attributed to binocular vision (vision from two eyes), it also relies heavily on monocular cues (cues from only one eye) to function properly. These cues range from the convergence of our eyes and accommodation of the lens to optical flow and motion.

2.2 – Audition: Hearing, the Ear, and Sound Localization

2.2.1 – Overview

The human auditory system allows the body to collect and interpret sound waves into meaningful messages. The main sensory organ responsible for the ability to hear is the ear, which can be broken down into the outer ear, middle ear, and inner ear. The inner ear contains the receptor cells necessary for both hearing and equilibrium maintenance. Human beings also have the special ability of being able to estimate where sounds originate from, commonly called sound localization.

2.2.2 – The Ear

The ear is the main sensory organ of the auditory system. It performs the first processing of sound and houses all of the sensory receptors required for hearing. The ear’s three divisions (outer, middle, and inner) have specialized functions that combine to allow us to hear.

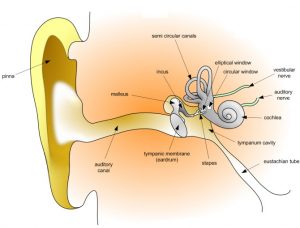

Anatomy of the human ear: The outer ear, middle ear, and inner ear.

The outer ear is the external portion of the ear, much of which can be seen on the outside of the human head. It includes the pinna, the ear canal, and the most superficial layer of the ear drum, the tympanic membrane. The outer ear’s main task is to gather sound energy and amplify sound pressure. The pinna, the fold of cartilage that surrounds the ear canal, reflects and attenuates sound waves, which helps the brain determine the location of the sound. The sound waves enter the ear canal, which amplifies the sound into the ear drum. Once the wave has vibrated the tympanic membrane, sound enters the middle ear.

The middle ear is an air-filled tympanic (drum-like) cavity that transmits acoustic energy from the ear canal to the cochlea in the inner ear. This is accomplished by a series of three bones in the middle ear: the malleus, the incus, and the stapes. The malleus (Latin for “hammer”) is connected to the mobile portion of the ear drum. It senses sound vibrations and transfers them onto the incus. The incus (Latin for “anvil”) is the bridge between the malleus and the stapes. The stapes (Latin for “stirrup”) transfers the vibrations from the incus to the oval window, the portion of the inner ear to which it is connected. Through these steps, the middle ear acts as a gatekeeper to the inner ear, protecting it from damage by loud sounds.

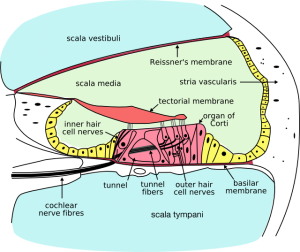

The cochlea: A cross-section of the cochlea, the main sensory organ of hearing, located in the inner ear.

Unlike the middle ear, the inner ear is filled with fluid. When the stapes footplate pushes down on the oval window in the inner ear, it causes movement in the fluid within the cochlea. The function of the cochlea is to transform mechanical sound waves into electrical or neural signals for use in the brain. Within the cochlea there are three fluid-filled spaces: the tympanic canal, the vestibular canal, and the middle canal. Fluid movement within these canals stimulates hair cells of the organ of Corti, a ribbon of sensory cells along the cochlea. These hair cells transform the fluid waves into electrical impulses using cilia, a specialized type of mechanosensor.

2.2.3 – The Process of Hearing

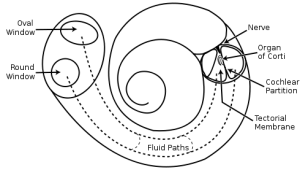

Structural diagram of the cochlea: The cochlea is the snail-shaped portion of the inner ear responsible for sound wave transduction.

Hearing begins with pressure waves hitting the auditory canal and ends when the brain perceives sounds. Sound reception occurs at the ears, where the pinna collects, reflects, attenuates, or amplifies sound waves. These waves travel along the auditory canal until they reach the ear drum, which vibrates in response to the change in pressure caused by the waves. The vibrations of the ear drum cause oscillations in the three bones in the middle ear, the last of which sets the fluid in the cochlea in motion. The cochlea separates sounds according to their place on the frequency spectrum. Hair cells in the cochlea perform the transduction of these sound waves into afferent electrical impulses. Auditory nerve fibers connected to the hair cells form the spiral ganglion, which transmits the electrical signals along the auditory nerve and eventually on to the brain stem. The brain responds to these separate frequencies and composes a complete sound from them.

2.2.4 – Sound Localization

Humans are able to hear a wide variety of sound frequencies, from approximately 20 to 20,000 Hz. Our ability to judge or estimate where a sound originates, called sound localization, is dependent on the hearing ability of each ear and the exact quality of the sound. Since each ear lies on an opposite side of the head, a sound reaches the closest ear first, and the sound’s amplitude will be larger (and therefore louder) in that ear. Much of the brain’s ability to localize sound depends on these interaural (between-the-ears) differences in sound intensity and timing. Bushy neurons can resolve time differences as small as ten microseconds, far shorter than the time it takes for sound to pass one ear and reach the other (less than a millisecond).
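The size of the interaural timing cue can be estimated with simple arithmetic. The sketch below assumes a speed of sound of 343 m/s and an ear-to-ear distance of 0.20 m, both round illustrative figures, and uses a simplified path-difference model (separation times the sine of the source angle) that ignores the curvature of the head.

```python
import math

SPEED_OF_SOUND_M_PER_S = 343.0  # ASSUMPTION: air at roughly room temperature
EAR_SEPARATION_M = 0.20         # ASSUMPTION: rough adult ear-to-ear distance


def interaural_time_difference(angle_deg,
                               ear_separation=EAR_SEPARATION_M,
                               speed=SPEED_OF_SOUND_M_PER_S):
    """Approximate arrival-time difference in seconds between the two ears.

    `angle_deg` is the direction of a distant source measured from straight
    ahead (0) toward one side (90). Uses the simplified model
    separation * sin(angle) / speed.
    """
    return ear_separation * math.sin(math.radians(angle_deg)) / speed


for angle in (0, 30, 90):
    microseconds = interaural_time_difference(angle) * 1e6
    print(f"source at {angle} degrees: about {microseconds:.0f} microseconds")
```

Under these assumptions, a sound directly to one side arrives at the far ear a little more than half a millisecond late, and a sound straight ahead produces no timing difference at all.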

2.3 – Gustation: Taste Buds and Taste

2.3.1 – Overview

The gustatory system creates the human sense of taste, allowing us to perceive different flavors from substances that we consume as food and drink. Gustation, along with olfaction (the sense of smell), is classified as chemoreception because it functions by reacting with molecular chemical compounds in a given substance. Specialized cells in the gustatory system that are located on the tongue are called taste buds, and they sense tastants (taste molecules). The taste buds send the information from the tastants to the brain, where a molecule is processed as a certain taste. There are five main tastes: bitter, salty, sweet, sour, and umami (savory). All the varieties of flavor we experience are a combination of some or all of these tastes.

2.3.2 – Tongue and Taste Buds

The Mouth: A cross-section of the human head, which displays the location of the mouth, tongue, pharynx, epiglottis, and throat.

The sense of taste is transduced by taste buds, which are clusters of 50-100 taste receptor cells located in the tongue, soft palate, epiglottis, pharynx, and esophagus. The tongue is the main sensory organ of the gustatory system. The tongue contains papillae, or specialized epithelial cells, which have taste buds on their surface. There are three types of papillae with taste buds in the human gustatory system:

- fungiform papillae, which are mushroom-shaped and located at the tip of the tongue;

- foliate papillae, which are ridges and grooves toward the back of the tongue;

- circumvallate papillae, which are circular-shaped and located in a row just in front of the end of the tongue.

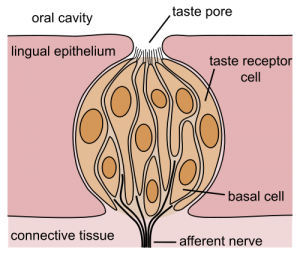

Taste Buds: A schematic drawing of a taste bud and its component pieces.

Each taste bud is flask-like in shape and formed by two types of cells: supporting cells and gustatory cells. Gustatory cells are short-lived and are continuously regenerating. They each contain a taste pore at the surface of the tongue which is the site of sensory transduction. Though there are small differences in sensation, all taste buds, no matter their location, can respond to all types of taste.

2.3.3 – Tastes

Traditionally, humans were thought to have just four main tastes: bitter, salty, sweet, and sour. Recently, umami, which is the Japanese word for “savory,” was added to this list of basic tastes. (Spicy is not a basic taste because the sensation of spicy foods does not come from taste buds but rather from heat and pain receptors.) In general, tastes can be appetitive (pleasant) or aversive (unpleasant), depending on the unique makeup of the material being tasted. There is one type of taste receptor for each flavor, and each type of taste stimulus is transduced by a different mechanism. Bitter, sweet, and umami tastes use similar mechanisms based on a G protein-coupled receptor, or GPCR.

2.3.3.1 – Bitter

There are several classes of bitter compounds which vary in chemical makeup. The human body has evolved a particularly sophisticated sense for bitter substances and can distinguish between the many radically different compounds that produce a bitter response. Evolutionary psychologists believe this to be a result of the role of bitterness in human survival: some bitter-tasting compounds can be hazardous to our health, so we learned to recognize and avoid bitter substances in general.

2.3.3.2 – Salty

The receptor for salt (NaCl) is arguably the simplest of all the receptors found in the mouth. An ion channel in the taste cell wall allows Na+ ions to enter the cell. This depolarizes the cell and floods it with ions, leading to a neurotransmitter release.

2.3.3.4 – Sweet

Like bitter tastes, sweet taste transduction involves GPCR binding. The specific mechanism depends on the specific molecule being tasted. Natural sweeteners such as saccharides activate the GPCRs to release gustducin. Synthetic sweeteners such as saccharin activate a separate set of GPCRs, initiating a similar but different process of protein transitions.

2.3.3.5 – Sour

Sour tastes signal the presence of acidic compounds in substances. There are three different receptor proteins at work in a sour taste. The first is a simple ion channel which allows hydrogen ions to flow directly into the cell. The second is a K+ channel that H+ ions block, preventing K+ ions from escaping the cell. The third allows sodium ions to flow down the concentration gradient into the cell. This involvement with sodium ions implies a relationship between salty and sour taste receptors.

2.3.3.6 – Umami

Umami is the newest taste to be recognized by western scientists in the family of basic tastes. This Japanese word means “savory” or “meaty.” It is thought that umami receptors act similarly to bitter and sweet receptors (involving GPCRs), but very little is known about their actual function. We do know that umami receptors detect glutamates that are common in meats, cheese, and other protein-heavy foods and react specifically to foods treated with MSG.

2.4 – Olfaction: The Nasal Cavity and Smell

2.4.1 – Overview

The olfactory system gives humans their sense of smell by inhaling and detecting odorants in the environment. Olfaction is physiologically related to gustation, the sense of taste, because of its use of chemoreceptors to discern information about substances. Perceiving complex flavors requires recognizing taste and smell sensations at the same time, an interaction known as chemoreceptive sensory interaction. This causes foods to taste different if the olfactory system is compromised. However, olfaction is anatomically different from gustation because it uses the sensory organs of the nose and nasal cavity to capture smells. Humans can identify a large number of odors and use this information to interact successfully with their environment.

2.4.2 – The Nose and Nasal Cavity

Olfactory sensitivity is directly proportional to spatial area in the nose, specifically the olfactory epithelium, which is where odorant reception occurs. The area in the nasal cavity near the septum is reserved for the olfactory mucous membrane, where olfactory receptor cells are located. This area is a dime-sized region called the olfactory mucosa. In humans, there are about 10 million olfactory cells, which together express about 350 different receptor types. Each of the 350 receptor types is characteristic of only one odorant type. Each functions using cilia, small hair-like projections that contain olfactory receptor proteins. These proteins carry out the transduction of odorants into electrical signals for neural processing.

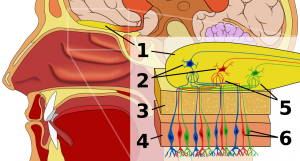

The Olfactory System: A cross-section of the olfactory system that labels all of the structures necessary to process odor information.

Olfactory transduction is a series of events in which odor molecules are detected by olfactory receptors. These chemical signals are transformed into electrical signals and sent to the brain, where they are perceived as smells.

Once ligands (odorant particles) bind to specific receptors on the external surface of cilia, olfactory transduction is initiated. In mammals, olfactory receptors have been shown to signal via G protein. This is similar to the signaling of other known G protein-coupled receptors (GPCRs). The binding of an odorant particle on an olfactory receptor activates a particular G protein (Gαolf), which then activates adenylate cyclase, leading to cAMP production. cAMP then binds and opens a cyclic nucleotide-gated ion channel. This opening allows for an influx of both Na+ and Ca2+ ions into the cell, thus depolarizing it. The Ca2+ in turn activates chloride channels, causing the departure of Cl–, which results in a further depolarization of the cell.

2.4.3 – Interpretation of Smells

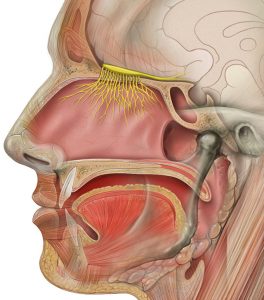

Olfactory Nerve: The olfactory nerve connects the olfactory system to the central nervous system to allow processing of odor information.

Individual features of odor molecules descend on various parts of the olfactory system in the brain and combine to form a representation of odor. Since most odor molecules have several individual features, the number of possible combinations allows the olfactory system to detect an impressively broad range of smells. A group of odorants that shares some chemical feature and causes similar patterns of neural firing is called an odotope.
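The reach of combinatorial coding can be sized with a quick count. The sketch below assumes, purely for illustration, that an odor is represented by which handful of the roughly 350 receptor types it activates; the function name `receptor_combinations` is hypothetical, and the counts are upper bounds on possible activation patterns, not estimates of how many odors people can actually tell apart.

```python
import math

RECEPTOR_TYPES = 350  # figure quoted earlier in the article


def receptor_combinations(active_types, total_types=RECEPTOR_TYPES):
    """Count the distinct sets of `active_types` receptor types that could
    respond together, out of `total_types` available."""
    return math.comb(total_types, active_types)


for k in (1, 2, 3):
    print(f"{k} active receptor type(s): "
          f"{receptor_combinations(k):,} possible patterns")
```

Even with only three receptor types active at once, the number of possible patterns already runs into the millions, which is why a modest set of receptors can cover such a broad range of smells.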

Humans can differentiate between 10,000 different odors. People (wine or perfume experts, for example) can train their sense of smell to become expert in detecting subtle odors by practicing retrieving smells from memory.

2.4.4 – Smell and Memory

Odor information is easily stored in long-term memory and has strong connections to emotional memory. This is most likely due to the olfactory system’s close anatomical ties to the limbic system and the hippocampus, areas of the brain that have been known to be involved in emotion and place memory. Human and animal brains have this in common: the amygdala, which is involved in the processing of fear, causes olfactory memories of threats to lead animals to avoid dangerous situations. The human sense of smell is not quite as powerful as most other animals’ sense of smell, but smell is still deeply tied to human memory and emotion.

Pheromones are airborne, often odorless molecules that are crucial to the behavior of many animals. They are processed by an accessory olfactory system. Some research suggests that pheromones may play a role in human attraction to potential mates, the synchronization of menstrual cycles among women, and the detection of moods and fear in others. Thanks in large part to the olfactory system, this information can be used to navigate the physical world and collect data about the people around us.

2.5 – Somatosensation: Pressure, Temperature, and Pain

The somatosensory system allows the human body to perceive the physical sensations of pressure, temperature, and pain.

2.5.1 – Overview

Human skin receptors: Mechanoreceptors can be free receptors or encapsulated. Examples of free receptors are the hair receptors at the roots of hairs, while encapsulated receptors are the Pacinian corpuscles and the receptors in the glabrous (hairless) skin: Meissner’s corpuscles, Ruffini’s corpuscles, and Merkel’s discs.

The human sense of touch is known as the somatic or somatosensory system. Touch is the first sense developed by the body, and the skin is the largest and most complex organ in the somatosensory system. By gathering external stimuli and converting them into useful information for the nervous system, skin allows the body to function successfully in the physical world. Touch receptors in the skin have three main subdivisions: mechanoreception (sense of pressure), thermoreception (sense of temperature), and nociception (sense of pain). Receptor cells in the muscles and joints called proprioceptors also contribute to the somatosensory system, but they are sometimes separated into another sensory category called kinesthesia.

2.5.2 – Somatosensory Systems

The somatosensory system uses specialized receptor cells in the skin and body to detect changes in the environment. The receptors collect and convert physical stimuli into electrical and chemical signals through the transduction process and send these impulses to the nervous system for processing. Sensory cell function in the somatosensory system is determined by location.

The receptors in the skin, also called cutaneous receptors, tell the body about the three main subdivisions mentioned above: pressure and surface texture (mechanoreceptors), temperature (thermoreceptors), and pain (nociceptors). The receptors in the muscles and joints provide information about muscle length, muscle tension, and joint angles.

2.5.3 – Mechanoreception

Mechanoreceptors in the skin give us a sense of pressure and texture. These receptors differ in their field size (small or large) and their speeds of adaptation (fast or slow). Thus, there are four types of mechanoreceptors based on the four possible combinations of fast vs. slow speed and large vs. small receptive fields. The speed of adaptation refers to how quickly the receptor will react to a stimulus and how long that reaction will be sustained after the stimulus is removed. Rapidly adapting cells allow us to adjust grip and force appropriately. Slowly adapting cells allow us to perceive form and texture. The receptive field size refers to the amount of skin area that responds to the stimulus, with smaller areas specializing in locating stimuli accurately.
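The four combinations described above can be enumerated in a short sketch. The constant and function names are hypothetical, and the receptor names attached to each combination follow the conventional textbook assignment, which is an assumption here because this section does not state it.

```python
from itertools import product

# The two properties the text uses to classify mechanoreceptors.
ADAPTATION_SPEEDS = ("fast", "slow")
FIELD_SIZES = ("small", "large")

# Conventional textbook assignment (an assumption; not given in this section).
CONVENTIONAL_RECEPTOR = {
    ("fast", "small"): "Meissner's corpuscle",
    ("fast", "large"): "Pacinian corpuscle",
    ("slow", "small"): "Merkel's disc",
    ("slow", "large"): "Ruffini's corpuscle",
}


def mechanoreceptor_classes():
    """Return every (adaptation speed, field size, receptor name) combination."""
    return [
        (speed, field, CONVENTIONAL_RECEPTOR[(speed, field)])
        for speed, field in product(ADAPTATION_SPEEDS, FIELD_SIZES)
    ]


for speed, field, name in mechanoreceptor_classes():
    print(f"{speed}-adapting, {field} receptive field: {name}")
```

Two properties with two values each give 2 × 2 = 4 classes, which is why the classification yields exactly four receptor types.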

2.5.4 – Thermoreception

Thermoreceptors detect changes in temperature through their free nerve endings. There are two types of thermoreceptors that signal temperature changes in our own skin: warm and cold receptors. Our sense of temperature is a result of the comparison of the signals from each of the two types of thermoreceptors. These receptors are not good indicators of absolute temperature, but they are very sensitive to changes in skin temperature.

2.5.5 – Nociception

Nociceptors use free nerve endings to detect pain. Functionally, nociceptors are specialized, high-threshold mechanoreceptors or polymodal receptors. They respond not only to intense mechanical stimuli but also to heat and noxious chemicals, that is, to anything that may cause the body harm. Their response magnitude, or the amount of pain you feel, is directly related to the degree of tissue damage inflicted.

Pain signals can be separated into three types that correspond to the different types of nerve fibers used for transmitting these signals. The first type is a rapidly transmitted signal with a high spatial resolution, called first pain or cutaneous pricking pain. This type of signal is easy to locate and generally easy to tolerate. The second type is much slower and highly affective, called second pain or burning pain. This signal is more difficult to locate and not as easy to tolerate. The third type arises from viscera, musculature, and joints; it is called deep pain. This type of signal is very difficult to locate, and often it is intolerable and chronic.

2.5.6 – Proprioception

Proprioceptors are the receptor cells found in the body’s muscles and joints. They detect joint position and movement, and the direction and velocity of the movement. There are many receptors in the muscles, muscle fascia, joints, and ligaments, all of which are stimulated by stretching in the area in which they lie. Muscle receptors are most active in large joints such as the hip and knee joints, while joint and skin receptors are more meaningful to finger and toe joints. All of these receptors contribute to overall kinesthesia, or the perception of bodily movements.

2.5.7 – Somatic Symptom Disorders

A somatic symptom disorder (formerly called a somatoform disorder) is a type of psychological disorder related to the somatosensory system. Somatic symptom disorders present symptoms of physical pain or illness that cannot be explained by a medical condition, injury, or substance. The patient must also be excessively worried about their symptoms, and this worry must be judged to be out of proportion to the severity of the physical complaints themselves. This class of disorders includes:

- Conversion disorder: A somatic symptom disorder involving an actual loss of bodily function such as blindness, paralysis, or numbness due to excessive anxiety.

- Illness anxiety disorder: A somatic symptom disorder involving persistent and excessive worry about developing a serious illness. This disorder has recently been reviewed and expanded into three different classifications.

- Body dysmorphic disorder: The afflicted individual is excessively concerned about and preoccupied with a perceived defect in his or her physical appearance.

- Pain disorder: Chronic pain experienced by a patient in one or more areas that is thought to be caused by psychological stress. The pain is often so severe that it prevents proper body function. Duration may be as short as a few days or as long as many years.

- Undifferentiated somatic symptom disorder: Only one unexplained symptom, lasting at least 6 months, is required.

2.6 – Additional Sensory Systems

2.6.1 – Overview

Two additional sensory systems are proprioception (which interprets body position) and the vestibular system (which interprets balance).

No matter what your level of experience with psychology is, you have probably heard of the five basic senses, which consist of the visual, auditory (hearing), gustatory (taste), olfactory (smell), and somatosensory (touch) systems. However, recent advances in science have expanded this canonical list of five sense systems to include two more: proprioception, which is the sense of the positioning of parts of the body; and the vestibular system, which senses gravity and provides balance.

2.6.2 – Proprioception and Kinesthesia

Proprioception is the sense of the relative positioning of neighboring parts of the body, and sense of the strength of effort needed for movement. It is distinguished from exteroception, by which one perceives the outside world, and interoception, by which one perceives pain, hunger, and the movement of internal organs. A major component of proprioception is joint position sense (JPS), which involves an individual’s ability to perceive the position of a joint without the aid of vision. Proprioception is one of the subtler sensory systems, but it comes into play almost every moment. This system is activated when you step off a curb and know where to put your foot, or when you push an elevator button and control how hard you have to press down with your fingers.

Kinesthesia is the awareness of the position and movement of the parts of the body using sensory organs, which are known as proprioceptors, in joints and muscles. Kinesthesia is a key component in muscle memory and hand-eye coordination. The discovery of kinesthesia served as a precursor to the study of proprioception. While the terms proprioception and kinesthesia are often used interchangeably, they actually have many different components. Often the kinesthetic sense is differentiated from proprioception by excluding the sense of equilibrium or balance from kinesthesia. An inner ear infection, for example, might degrade the sense of balance. This would degrade the proprioceptive sense, but not the kinesthetic sense. The affected individual would be able to walk, but only by using the sense of sight to maintain balance; the person would be unable to walk with eyes closed. Another difference in proprioception and kinesthesia is that kinesthesia focuses on the body’s motion or movements, while proprioception focuses more on the body’s awareness of its movements and behaviors. This has led to the notion that kinesthesia is more behavioral, and proprioception is more cognitive.

2.6.3 – The Vestibular System

The inner ear and the vestibular system: The vestibular system, together with the cochlea, makes up the workings of the inner ear and provides us with our sense of balance.

The vestibular system is the sensory system that contributes to balance and the sense of spatial orientation. Together with the cochlea (a part of the auditory system) it constitutes the labyrinth of the inner ear in most mammals, situated within the vestibulum in the inner ear.

There are two main components of the vestibulum: the semicircular canal system, which indicates rotational movements; and the otoliths, which indicate linear accelerations. Some signals from the vestibular system are sent to the neural structures that control eye movements and provide us with clear vision, a process known as the vestibulo-ocular reflex. Other signals are sent to the muscles that control posture and keep us upright.

2.6.4 – Proprioception vs. Vestibular System

While both the vestibular system and proprioception contribute to the “sense of balance,” they have different functions. Proprioception has to do with the positioning of limbs and awareness of body parts in relation to one another, while the vestibular system contributes to the understanding of where the entire body is in space. If there were a problem with your proprioception, you might fall over if you tried to walk because you would lose your innate understanding of where your feet and legs were in space. On the other hand, if there were a problem with your vestibular system (such as vertigo), you might feel like your entire body was spinning in space and be unable to walk for that reason.

3 – Introduction to Perception

3.1 – The Perception Process

Perception is the set of unconscious processes we undergo to make sense of the stimuli and sensations we encounter.

3.1.1 – Introduction

Perception refers to the set of processes we use to make sense of all the stimuli we encounter every second, from the glow of the computer screen in front of us to the smell of the room to the itch on an ankle. Our perceptions are based on how we interpret all these different sensations, which are sensory impressions we get from the stimuli in the world around us. Perception enables us to navigate the world and to make decisions about everything, from which T-shirt to wear to how fast to run away from a bear.

Close your eyes. What do you remember about the room you are in? The color of the walls, the angle of the shadows? Whether or not we know it, we selectively attend to different things in our environment. Our brains simply don’t have the capacity to attend to every single detail in the world around us. Optical illusions highlight this tendency. Have you ever looked at an optical illusion and seen one thing, while a friend sees something completely different? Our brains engage in a three-step process when presented with stimuli: selection, organization, and interpretation.

Rubin’s Vase: Rubin’s Vase is a popular optical illusion used to illustrate differences in perception of stimuli.

For example, think of Rubin’s Vase, a well-known optical illusion depicted above. First we select the item to attend to and block out most of everything else. It’s our brain’s way of focusing on the task at hand to give it our attention. In this case, we have chosen to attend to the image. Then, we organize the elements in our brain. Some individuals organize the dark parts of the image as the foreground and the light parts as the background, while others have the opposite interpretation.

Some individuals see a vase because they attend to the black part of the image, while some individuals see two faces because they attend to the white parts of the image. Most people can see both, but only one at a time, depending on the processes described above. All stages of the perception process often happen unconsciously and in less than a second.

3.1.2 – The Perception Process

The perceptual process is a sequence of steps that begins with stimuli in the environment and ends with our interpretation of those stimuli. This process is typically unconscious and happens hundreds of thousands of times a day. An unconscious process is simply one that happens without awareness or intention. When you open your eyes, you do not need to tell your brain to interpret the light falling onto your retinas from the object in front of you as “computer” because this has happened unconsciously. When you step out into a chilly night, your brain does not need to be told “cold” because the stimuli trigger the processes and categories automatically.

3.1.3 – Selection

The world around us is filled with an infinite number of stimuli that we might attend to, but our brains do not have the resources to pay attention to everything. Thus, the first step of perception is the (usually unconscious, but sometimes intentional) decision of what to attend to. Depending on the environment, and depending on us as individuals, we might focus on a familiar stimulus or something new. When we attend to one specific thing in our environment—whether it is a smell, a feeling, a sound, or something else entirely—it becomes the attended stimulus.

3.1.4 – Organization

Once we have chosen to attend to a stimulus in the environment (consciously or unconsciously, though usually the latter), the choice sets off a series of reactions in our brain. This neural process starts with the activation of our sensory receptors (touch, taste, smell, sight, and hearing). The receptors transduce the input energy into neural activity, which is transmitted to our brains, where we construct a mental representation of the stimulus (or, in most cases, the multiple related stimuli) called a percept. An ambiguous stimulus may be translated into multiple percepts, experienced randomly, one at a time, in what is called “multistable perception.”

3.1.5 – Interpretation

Duck or Rabbit?: In this famous optical illusion, your interpretation of this image as a duck or a rabbit depends on how you organize the information that you attend to.

After we have attended to a stimulus, and our brains have received and organized the information, we interpret it in a way that makes sense using our existing information about the world. Interpretation simply means that we take the information that we have sensed and organized and turn it into something that we can categorize. For instance, in the Rubin’s Vase illusion mentioned earlier, some individuals will interpret the sensory information as “vase,” while some will interpret it as “faces.” This happens unconsciously thousands of times a day. By putting different stimuli into categories, we can better understand and react to the world around us.

3.2 – Selection

3.2.1 – Introduction

Selection, the first stage of perception, is the process through which we attend to some stimuli in our environment and not others.

3.2.2 – The Influence of Motives

Motivation has an enormous impact on the perceptions people form about the world. A simple example comes from a short-term drive, like hunger: the smell of cooking food will catch the attention of a person who hasn’t eaten for several hours, while a person who is full might not attend to that detail. Long-term motivations also influence what stimuli we attend to. For example, an art historian who has spent many years looking at visual art might be more likely to pay attention to the detailed carvings on the outside of a building; an architect might be more likely to notice the structure of the columns supporting the building.

Perceptual expectancy, also called perceptual set, is a predisposition to perceive things in a certain way based on expectations and assumptions about the world. A simple demonstration of perceptual expectancy involves very brief presentations of non-words such as “sael.” Subjects who were told to expect words about animals read it as “seal,” but others who were expecting boat-related words read it as “sail.”

Emotional drives can also influence the selective attention humans pay to stimuli. Some examples of this phenomenon are:

- Selective retention: recalling only what reinforces your beliefs, values, and expectations. For example, if you are a fan of a particular basketball team, you are more likely to remember statistics about that team than other teams that you don’t care about.

- Selective perception: the tendency to perceive what you want to. To continue the basketball team example, you might be more likely to perceive a referee who makes a call against your favorite team as being wrong because you want to believe that your team is perfect.

- Selective exposure: you select what you want to expose yourself to based on your beliefs, values, and expectations. For example, you might associate more with people who are also fans of your favorite basketball team, thus limiting your exposure to other stimuli. This is commonly seen in individuals who associate with a political party or religion: they tend to spend time with others who reinforce their beliefs.

3.2.3 – The Cocktail Party Effect

Cocktail Party Effect: One will selectively attend to their name being spoken in a crowded room, even if they were not listening for it to begin with.

Selective attention shows up across all ages. Babies begin to turn their heads toward a sound that is familiar to them, such as their parents’ voices. This shows that infants selectively attend to specific stimuli in their environment. Their accuracy in noticing these physical differences amid background noise improves over time.

Some examples of messages that catch people’s attention include personal names and taboo words. The ability to selectively attend to one’s own name has been found in infants as young as 5 months of age and appears to be fully developed by 13 months. This is known as the “cocktail party effect.” (This term can also be used generally to describe the ability of people to attend to one conversation while tuning out others.)

3.2.4 – The Influence of Stimulus Intensity

A stimulus that is particularly intense, like a bright light or bright color, a loud sound, a strong odor, a spicy taste, or a painful contact, is most likely to catch your attention. Evolutionary psychologists theorize that we selectively attend to these kinds of stimuli for survival purposes. Humans who could attend closely to these stimuli were more likely to survive than their counterparts, since some intense stimuli (like pain, powerful smells, or loud noises) can indicate danger. More than half the brain is devoted to processing sensory information, and the brain itself consumes roughly one-fourth of one’s metabolic resources, so the senses must provide exceptional benefits to fitness.

3.3 – Organization

3.3.1 – Overview

Organization is the stage in the perception process in which we mentally arrange stimuli into meaningful and comprehensible patterns.

After the brain has decided which of the millions of stimuli it will attend to, it needs to organize the information that it has taken in. Organization is the process by which we mentally arrange the information we’ve just attended to in order to make sense of it; we turn it into meaningful and digestible patterns. Below is a discussion of some of the different ways we organize stimuli.

3.3.2 – Gestalt Laws of Grouping

The Gestalt laws of grouping are a set of principles in psychology first proposed by Gestalt psychologists to explain how humans naturally perceive stimuli as organized patterns and objects. Gestalt psychology tries to understand the laws of our ability to acquire and maintain meaningful perceptions in an apparently chaotic world. The central principle of gestalt psychology is that the mind forms a global whole with self-organizing tendencies. The gestalt effect is the capability of our brain to generate whole forms, particularly with respect to the visual recognition of global figures, instead of just collections of simpler and unrelated elements. Essentially, gestalt psychology says that our brain groups elements together whenever possible instead of keeping them as separate elements.

A few of these laws of grouping include the laws of proximity, similarity, and closure and the figure-ground law.

3.3.3 – The Law of Proximity

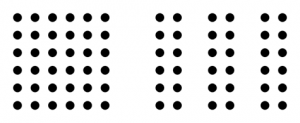

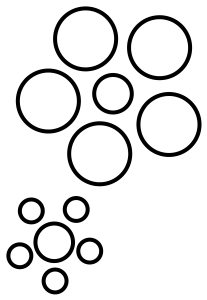

Gestalt law of proximity: Because of the law of proximity, people tend to see clusters of dots on a page instead of a large number of individual dots.

This law posits that when we perceive a collection of objects we will perceptually group together objects that are physically close to each other. This allows for the grouping together of elements into larger sets, and reduces the need to process a larger number of smaller stimuli. For this reason, people tend to see clusters of dots on a page instead of a large number of individual dots. The brain groups together the elements instead of processing a large number of smaller stimuli, allowing us to understand and conceptualize information more quickly.
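As a rough computational analogy for proximity grouping (not a model of how the brain does it), the sketch below links any two dots whose distance is within a threshold and reports the resulting clusters. The function name `group_by_proximity`, the `max_gap` parameter, and the sample coordinates are all hypothetical.

```python
from math import dist


def group_by_proximity(points, max_gap):
    """Group point indices into clusters linked by chains of neighbors
    that are each at most max_gap apart."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        cluster = [seed]
        frontier = [seed]
        while frontier:
            current = frontier.pop()
            # Collect every unvisited dot close enough to the current one.
            near = [
                i for i in unvisited
                if dist(points[current], points[i]) <= max_gap
            ]
            for i in near:
                unvisited.remove(i)
            cluster.extend(near)
            frontier.extend(near)
        clusters.append(sorted(cluster))
    return sorted(clusters)


# Six dots arranged as two tight triplets that are far from each other.
dots = [(0, 0), (1, 0), (0, 1), (10, 10), (11, 10), (10, 11)]
groups = group_by_proximity(dots, max_gap=1.5)
print(f"{len(dots)} dots grouped into {len(groups)} clusters: {groups}")
```

Treating six dots as two clusters mirrors the point made above: grouping reduces the number of separate items that have to be processed.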

3.3.4 – The Law of Similarity

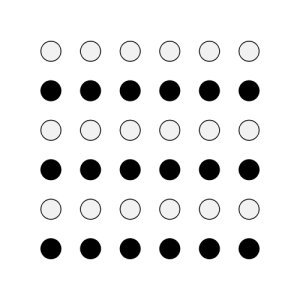

The law of similarity: Because of the law of similarity, people tend to see this as six clusters of black and white dots rather than 36 individual dots.

This law states that similar elements will be perceptually grouped together. This allows us to distinguish between adjacent and overlapping objects based on their visual texture and resemblance.

3.3.5 – The Figure-Ground Law

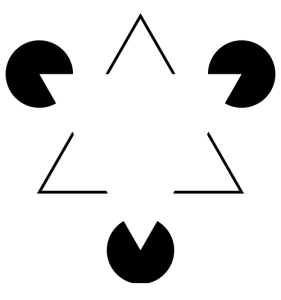

The figure-ground law: In the Kanizsa triangle illusion, the figure-ground law causes most people to perceive a white triangle in the foreground, which makes the black shapes recede into the background.

A visual field can be separated into two distinct regions: the figures (prominent objects) and the ground (the objects that recede into the background). Many optical illusions play on this perceptual tendency.

3.3.6 – The Law of Closure

IBM logo: The IBM logo plays on the law of closure. While it is made up of just lines, we perceive the three letters.

The law of closure explains that our perception will complete incomplete objects, such as the lines of the IBM logo.

3.3.7 – Organizing People

Human and animal brains are structured in a modular way, with different areas processing different kinds of sensory information. A special part of our brain known as the fusiform face area (FFA) is dedicated to the recognition and organization of people. This module developed in response to our need as humans to recognize and organize people into different categories to help us survive.

3.3.8 – Perceptual Schemas

We develop perceptual schemas in order to organize impressions of people based on their appearance, social roles, interaction, or other traits; these schemas then influence how we perceive other things in the world. These schemas are heuristics, or shortcuts that save time and effort on computation. For example, you might have a perceptual schema that the building where you go to class is symmetrical on the outside (sometimes called the “symmetry heuristic,” or the tendency to remember things as being more symmetrical than they are). Even if it isn’t, making that assumption saved your mind some time. This is the blessing and curse of schemas and heuristics: they are useful for making sense of a complex world, but they can be inaccurate.

3.3.9 – Stereotypes

We also develop stereotypes to help us make sense of the world. Stereotypes are categories of objects or people that help to simplify and systematize information so that it is easier to identify, recall, predict, and react to. Between stereotypes, objects or people are as different from each other as possible. Within stereotypes, objects or people are as similar to each other as possible.

While our tendency to group stimuli together helps us to organize our sensations quickly and efficiently, it can also lead to misguided perceptions. Stereotypes become dangerous when they no longer reflect reality, or when they attribute certain characteristics to entire groups. They can contribute to bias, discriminatory behavior, and oppression.

3.4 – Interpretation

3.4.1 – Overview

Interpretation, the final stage of perception, is the subjective process through which we represent and understand stimuli.

In the interpretation stage of perception, we attach meaning to stimuli. Each stimulus or group of stimuli can be interpreted in many different ways. Interpretation refers to the process by which we represent and understand stimuli that affect us. Our interpretations are subjective and based on personal factors. It is in this final stage of the perception process that individuals most directly display their subjective views of the world around them.

3.4.2 – Factors that Influence Interpretation

Cultural values, needs, beliefs, experiences, expectations, involvement, self-concept, and other personal influences all have tremendous bearing on how we interpret stimuli in our environment.

3.4.3 – Experiences

Prior experience plays a major role in the way a person interprets stimuli. For example, an individual who has experienced abuse might see someone raise their hand and flinch, expecting to be hit. That is their interpretation of the stimulus (a raised hand). Someone who has not experienced abuse but has played sports, however, might see this stimulus as a signal for a high five. Different individuals react differently to the same stimuli, depending on their prior experience of those stimuli.

3.4.4 – Values and Culture

Culture provides structure, guidelines, expectations, and rules to help people understand and interpret behaviors. Ethnographic studies suggest there are cultural differences in social understanding, interpretation, and response to behavior and emotion. Cultural scripts dictate how positive and negative stimuli should be interpreted. For example, ethnographic accounts suggest that American mothers generally think that it is important to focus on their children’s successes while Chinese mothers tend to think it is more important to provide discipline for their children. Therefore, a Chinese mother might interpret a good grade on her child’s test (stimulus) as her child having guessed on most of the questions (interpretation) and therefore as worthy of discipline, while an American mother will interpret her child as being very smart and worthy of praise. Another example is that Eastern cultures typically perceive successes as being arrived at by a group effort, while Western cultures like to attribute successes to individuals.

3.4.5 – Expectation and Desire

An individual’s hopes and expectations about a stimulus can affect their interpretation of it. In one experiment, students were allocated to pleasant or unpleasant tasks by a computer. They were told that either a number or a letter would flash on the screen to say whether they were going to taste orange juice or an unpleasant-tasting health drink. In fact, an ambiguous figure (stimulus) was flashed on screen, which could either be read as the letter B or the number 13 (interpretation). When the letters were associated with the pleasant task, subjects were more likely to perceive a letter B, and when letters were associated with the unpleasant task they tended to perceive a number 13. The individuals’ desire to avoid the unpleasant drink led them to interpret a stimulus in a particular way.

Similarly, a classic psychological experiment showed slower reaction times and less accurate answers when a deck of playing cards reversed the color of the suit symbol for some cards (e.g., red spades and black hearts). People’s expectations about the stimulus (“if it’s red, it must be diamonds or hearts”) affected their ability to accurately interpret it.

3.4.6 – Self-Concept

This term describes the collection of beliefs people have about themselves, including elements such as intelligence, gender roles, sexuality, racial identity, and many others. If I believe myself to be an attractive person, I might interpret stares from strangers (stimulus) as admiration (interpretation). However, if I believe that I am unattractive, I might interpret those same stares as negative judgments.

3.5 – Perceptual Constancy

3.5.1 – Introduction

Perceptual constancy is perceiving objects as having constant shape, size, and color regardless of changes in perspective, distance, and lighting.

Have you ever noticed how snow looks just as “white” in the middle of the night under dim moonlight as it does during the day under the bright sun? When you walk away from an object, have you noticed how the object gets smaller in your visual field, yet you know that it actually has not changed in size? Thanks to perceptual constancy, we have stable perceptions of an object’s qualities even under changing circumstances.

Perceptual constancy is the tendency to see familiar objects as having standard shape, size, color, or location, regardless of changes in the angle of perspective, distance, or lighting. The impression tends to conform to the object as it is assumed to be, rather than to the actual stimulus presented to the eye. Perceptual constancy is responsible for the ability to identify objects under various conditions by taking these conditions into account during mental reconstitution of the image.

Even though the retinal image of a receding automobile shrinks in size, a person with normal experience perceives the size of the object to remain constant. One of the most impressive features of perception is the tendency of objects to appear stable despite their continually changing features: we have stable perceptions despite unstable stimuli. Such matches between the object as it is perceived and the object as it is understood to actually exist are called perceptual constancies.

3.5.2 – Visual Perceptual Constancies

There are many common visual and perceptual constancies that we experience during the perception process.

3.5.3 – Size Constancy

The Ponzo illusion: This famous optical illusion uses size constancy to trick us into thinking the top yellow line is longer than the bottom; they are actually the exact same length.

Within a certain range, people’s perception of a particular object’s size will not change, regardless of changes in distance or in the size of its image on the retina. The perceived size remains based on the object’s actual size rather than on the size of the retinal image. The visual perception of size constancy has given rise to many optical illusions.
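The geometry behind size constancy can be made concrete with a small sketch. It is an idealization that is not taken from the text: it assumes an object viewed head-on, computes the visual angle it subtends (a stand-in for retinal image size), and then shows that combining that angle with an accurate distance estimate recovers the same physical size. The function names and the 1.5 m object are hypothetical.

```python
import math


def visual_angle_deg(object_size, distance):
    """Visual angle (degrees) subtended by an object viewed head-on."""
    return math.degrees(2 * math.atan(object_size / (2 * distance)))


def inferred_size(angle_deg, distance):
    """Physical size implied by a visual angle at an assumed distance."""
    return 2 * distance * math.tan(math.radians(angle_deg) / 2)


car_height = 1.5  # metres (illustrative value)
for distance in (10, 50, 100):
    angle = visual_angle_deg(car_height, distance)
    size = inferred_size(angle, distance)
    print(f"{distance:>4} m: angle {angle:.2f} deg, inferred size {size:.2f} m")
```

The visual angle shrinks as the object recedes, yet the inferred size stays the same whenever the distance estimate is accurate. Under this idealization, a wrong distance estimate produces a wrong inferred size, which is one common way of describing how size illusions arise.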

3.5.4 – Shape Constancy

Shape constancy: This form of perceptual constancy allows us to perceive that the door is made of the same shapes despite different images being delivered to our retinae.

Regardless of changes to an object’s orientation, the shape of the object as it is perceived is constant. Or, perhaps more accurately, the actual shape of the object is sensed by the eye as changing but then perceived by the brain as the same. This happens when we watch a door open: the actual image on our retinas is different each time the door swings in either direction, but we perceive it as being the same door made of the same shapes.

3.5.5 – Distance Constancy

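Distance constancy refers to the relationship between apparent distance and physical distance. An everyday example of a breakdown in this relationship is the moon illusion: when the moon is near the horizon, it looks larger, and is often judged to be closer, than when it is directly overhead, even though its physical distance from Earth is essentially the same.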

3.5.6 – Color Constancy

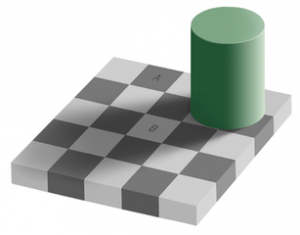

Checker-shadow illusion: Color constancy tricks our brains into seeing squares A and B as two different colors; however, they are the exact same shade of gray.

Color constancy is a feature of the human color perception system that ensures that the perceived color of an object remains similar under varying lighting conditions. Consider the checker-shadow illusion: because our perception compensates for the effect of shade, we perceive the two squares as different colors. In fact, they are exactly the same shade of gray.

3.5.7 – Auditory Perceptual Constancies

Our eyes aren’t the only sensory organs that “trick” us into perceptual constancy. Our ears do the job as well. In music, we can identify a guitar as a guitar throughout a song, even when its timbre, pitch, loudness, or environment changes. In speech perception, vowels and consonants are perceived as constant even if they sound very different due to the speaker’s age, sex, or dialect. For example, the word “apple” sounds very different when a two-year-old boy and a 30-year-old woman say it, because their voices are at different frequencies and their mouths form the word differently, but we perceive the sounds to be the same. This is thanks to auditory perceptual constancy!

4 – Advanced Topics in Perception

4.1 – Perceiving Depth, Distance, and Size

4.1.1 – Overview

Perception of depth, size, and distance is achieved using both monocular and binocular cues.

How do we perceive images? Are the images we see projected directly onto the brain, as if by a projector? In reality, perception and vision are far more complicated than that. Visual stimuli enter the eye as light and reach the photoreceptors in the retina, where they are converted into neural impulses. These impulses travel through the central nervous system, stop at the sensory way-station of the thalamus, and are then routed to the visual cortex. From the visual cortex, the information goes to the parietal lobe and the temporal lobe. Approximately one-third of the cerebral cortex plays a role in processing visual stimuli.

4.1.2 – Depth Perception

Convergence: The train tracks look as though they come to a single point in the distance, illustrating the concept of convergence.

Depth perception is the visual ability to perceive the world in three dimensions, coupled with the ability to gauge how far away an object is. Depth, size, and distance are ascertained through both monocular (one-eye) and binocular (two-eye) cues. Monocular vision alone is poor at determining depth: when an image is projected onto a single retina, it provides cues about the size of the object relative to other objects. In binocular vision, these relative sizes are compared across the two eyes, since each eye sees a slightly different image from a different angle.

Depth perception relies on the convergence of both eyes upon a single object, the relative differences between the shape and size of the images on each retina, the relative size of objects in relation to each other, and other cues such as texture and constancy. For example, shape constancy allows the individual to see an object as a constant shape from different angles, so that each eye is recognizing a single shape and not two distinct images. When the input from both eyes is compared, stereopsis, or the impression of depth, occurs.
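How a difference between two views can carry depth information can be sketched with a camera analogy. The example below assumes an idealized pair of pinhole cameras, not real eyes, and uses the textbook stereo relation depth = focal length × baseline / disparity. The function name, the focal length, and the 6.5 cm baseline (a rough stand-in for the spacing between human eyes) are assumptions made for illustration.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Depth of a point seen by two parallel pinhole cameras (idealized stereo model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_length_px * baseline_m / disparity_px

focal_length_px = 800.0  # assumed camera focal length, in pixels
baseline_m = 0.065       # assumed separation between the two viewpoints, in meters

# Larger differences between the two images correspond to nearer points.
for disparity_px in (52.0, 26.0, 13.0):
    depth_m = depth_from_disparity(focal_length_px, baseline_m, disparity_px)
    print(f"disparity {disparity_px:5.1f} px -> depth {depth_m:.2f} m")
```

In this simplified model, halving the disparity doubles the estimated depth, which is one way to see why the two slightly different images become less informative for far-away objects.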

4.1.3 – Relative Size of Objects

Ebbinghaus illusion: The Ebbinghaus illusion illustrates how the perception of size is altered by the relative sizes of other objects. The two center circles are the same size, though they may be perceived to be different sizes.

Size and distance of objects are also determined in relation to each other. Visual cues (for instance, far-away objects appearing smaller and near objects appearing larger) develop in the early years of life. Convergence upon a single point is another visual cue that provides information about distance. As parallel lines recede into the distance, they appear to converge on a single point. An example of this can be seen in the image of train tracks disappearing into the distance.

Optical illusions, such as the Ebbinghaus illusion, show how our perception of size is altered by the relative sizes of other objects around us. In the Ebbinghaus illusion, the size of the center circles is the same, but looks different due to the size of the surrounding circles.

4.1.4 – Depth from Motion

When an object moves toward an observer, the retinal projection of the object expands over a period of time, which leads to the perception of movement in a line toward the observer. This change in stimulus enables the observer not only to see the object as moving, but to perceive the distance of the moving object. This is useful when you cross the street: as you watch a car come toward you, your brain uses the change in size projected on your retina to determine how far away it is.

4.2 – Perceiving Motion

4.2.1 – Overview

Motion is perceived when two different retinal pathways, one relying on specific features and the other on luminance, converge.

Motion perception is the process of inferring the speed and direction of elements in a scene based on visual input. Monocular vision, or vision from one eye, can detect nearby motion; however, this type of vision is poor at depth perception. For this reason, binocular vision is better at perceiving motion from a distance. In monocular vision, the eye sees a two-dimensional image in motion, which is sufficient at near distances but not from farther away. In binocular vision, both eyes are used together to perceive motion of an object by tracking the differences in size, location, and angle of the object between the two eyes. Motion perception happens in two ways that are generally referred to as first-order motion perception and second-order motion perception.

4.2.2 – First-Order Motion Perception

First-order motion perception occurs through specialized neurons located in the retina, which track motion through luminance. However, this type of motion perception is limited. An object must be directly in front of the retina, with motion perpendicular to the retina, in order to be perceived as moving. The motion-sensing neurons detect a change in luminance at one point on the retina and correlate it with a change in luminance at a neighboring point on the retina after a short delay.
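The delay-and-correlate idea described above can be sketched in a few lines. This is a toy illustration, not a model of real retinal circuitry: the two input lists stand for luminance changes over time at two neighboring points, the function names are hypothetical, and subtracting the two opposite directions is an added assumption borrowed from classic correlation-type motion detectors rather than something stated in the text.

```python
def delayed(signal, delay):
    """Shift a signal later in time by `delay` samples, padding the start with zeros."""
    return [0.0] * delay + list(signal[:len(signal) - delay])

def correlate(a, b):
    """Sum of pointwise products of two equally long signals."""
    return sum(x * y for x, y in zip(a, b))

def motion_signal(left, right, delay=1):
    """Positive for left-to-right motion, negative for right-to-left, zero for none."""
    rightward = correlate(delayed(left, delay), right)  # left point changes first
    leftward = correlate(delayed(right, delay), left)   # right point changes first
    return rightward - leftward

# A luminance change hits the left point at t=2 and the right point at t=3.
left_point = [0, 0, 1, 0, 0, 0]
right_point = [0, 0, 0, 1, 0, 0]

print(motion_signal(left_point, right_point))  # left-to-right sweep
print(motion_signal(right_point, left_point))  # same sweep, reversed
```

A change that occurs at both points at the same moment, such as a flicker, produces no net signal in this sketch, because neither delayed copy lines up better than the other.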

4.2.3 – Second-Order Motion Perception

Second-order motion perception occurs by examining the changes in an object’s position over time through feature tracking on the retina. This method detects motion through changes in size, texture, contrast, and other features. One advantage of feature tracking is that motion can be separated both by motion and by blank intervals where no motion is occurring. This type of motion perception can be used to estimate how soon an approaching object will reach you, known as “time to contact” (TTC).
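One way to see how time to contact could be estimated from the retinal image alone is the ratio of an object's visual angle to the rate at which that angle is growing, an approximation often called tau in the perception literature. The sketch below is an illustration under that assumption, not a description of how the brain does it; the function names and the scenario values (object size, speed, distances) are hypothetical.

```python
import math

def visual_angle(size_m, distance_m):
    """Visual angle (in radians) subtended by an object at a given distance."""
    return 2 * math.atan(size_m / (2 * distance_m))

def time_to_contact(angle_before, angle_after, dt):
    """Estimate TTC as (current angle) / (rate of expansion), using retinal quantities only."""
    expansion_rate = (angle_after - angle_before) / dt
    if expansion_rate <= 0:
        return math.inf  # the image is not growing, so the object is not approaching
    return angle_after / expansion_rate

# Hypothetical scenario: a 1.8 m wide car approaches at 10 m/s, sampled 0.1 s apart.
size_m, speed_mps, dt = 1.8, 10.0, 0.1
distance_before = 50.0
distance_after = distance_before - speed_mps * dt

ttc = time_to_contact(
    visual_angle(size_m, distance_before),
    visual_angle(size_m, distance_after),
    dt,
)
print(f"estimated time to contact: {ttc:.2f} s")
print(f"actual distance / speed:   {distance_after / speed_mps:.2f} s")
```

The estimate uses neither the car's true size nor its true distance, only how large its image is and how quickly that image is expanding, and it still lands close to the actual value.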

4.2.4 – Visual Illusions

Barber pole illusion: In the barber pole illusion, a barber pole is rotated along the x-axis, but the diagonal stripes appear to move down the pole’s y-axis in a way that is inconsistent with the actual direction the pole is turning in.

Visual illusions offer insight into how motion is perceived. The phi phenomenon is an illusion involving a regular sequence of luminous impulses. Due to first-order motion perception, the luminous impulses are seen as a continual movement. The phi phenomenon explains how early animation worked: it involves taking a series of still images that change slightly, and moving through them very quickly so that the image appears to be moving, rather than the series of still images that it is.

Another visual illusion is the barber pole illusion. In the barber pole illusion, a barber pole is rotated along the x-axis, but the diagonal stripes appear to move along the pole in a vertical fashion (y-axis) that is inconsistent with the actual direction the pole is turning in. The barber pole illusion also demonstrates how motion is perceived through first-order perception, which only sees movement as continual. The feature-tracking aspect of second-order perception does not perceive the aftereffects of a motion; it perceives movement as stroboscopic, or as a series of still images.

4.3 – Unconscious Perception

4.3.1 – Overview

We encounter more stimuli than we can attend to; unconscious perception helps the brain process all stimuli, not just those we take in consciously.

Individuals take in more stimuli from their environment than they can consciously attend to at any given moment. The brain is constantly processing all the stimuli it is exposed to, not just those that it consciously attends to. Unconscious perception involves the processing of sensory inputs that are not selected for conscious perception. The brain takes in these unnoticed signals and interprets them in ways that influence how individuals respond to their environment.

4.3.2 – Priming

Experience affects the activation of neural networks: When information from an initial stimulus enters the brain, neural pathways associated with that stimulus are activated, and the stimulus is interpreted in a specific manner.

Perceptual learning through unconscious processing occurs through priming. Priming occurs when an unconscious response to an initial stimulus affects responses to future stimuli. A classic example is word recognition, studied in some of the earliest experiments on priming in the early 1970s: the work of David Meyer and Roger Schvaneveldt showed that people decided more quickly that a string of letters was a word when it followed an associatively or semantically related word. For example, NURSE was recognized more quickly when it followed DOCTOR than when it followed BREAD. This is one of the simplest examples of priming. When information from an initial stimulus enters the brain, neural pathways associated with that stimulus are activated, and a second stimulus is interpreted through that specific context.

One example of priming is in the childhood game Simon Says. Simon is able to trick the players because of priming. By saying “Simon says touch your nose,” “Simon says touch your ear,” and so on, participants are primed to follow the “Simon says” direction and are likely to slip up when that phrase is omitted because they expect it to be there.

In another example, individuals in a study were primed with neutral, polite, or rude words prior to an interview with an investigator. Priming the participants with words prior to the interview activated the neural circuits associated with reactions to those words. The participants who had been primed with rude words interrupted the investigator most often, and those primed with polite words did so the least often.

4.3.3 – Subliminal Stimulation

The presentation of an unattended stimulus can prime our brains for a future response to that stimulus. This process is known as subliminal stimulation. A number of studies have examined how unconscious stimuli influence human perception. Researchers, for example, have demonstrated how the type of music that is played in supermarkets can influence the buying habits of consumers. In another study, researchers discovered that holding a cold or hot beverage prior to an interview can influence how the individual perceives the interviewer. While subliminal stimulation appears to have a temporary effect, there is no evidence yet that it produces an enduring effect on behavior.

Originally published by Lumen Learning – Boundless Psychology under a Creative Commons Attribution-ShareAlike 3.0 Unported license.