These technologies did not start together, either in time or (more importantly) in context.

By Dr. Stephen Robertson

Professor and Director of the MA Program in History

George Mason University

In the Beginning . . .

Overview

Ever since the dawn of recorded history, and before, we have been trying to learn how to do things with information.

This is not at all the grand claim it may seem to be. Rather, it is a tautology. We could not begin recorded history until we had ways and means of recording—and recording information is one of the things we have been learning how to do. This is perhaps one of the few necessities of recorded history. We didn’t have to come down from the trees, or even out of the ocean, before beginning our recorded history—though in fact we did both of those things. We didn’t have to learn how to plant crops instead of relying on hunting and gathering; we didn’t have to build towns, invent trade, organise markets and establish trade routes—though probably we did all of those things, and probably they all helped to stimulate the invention of writing. We certainly didn’t have to invent the wheel, and indeed it’s not clear whether we invented the wheel before or after we learnt how to write. But we did have to learn how to write.

The written message is a specific human invention, just as much as the means to make that message. Once this was invented, information technology had begun to emerge.

Technology

The components of the phrase information technology need a little discussion. First, what do I mean by ‘technology’?

In today’s usage, technology is frequently bracketed with science, and has come to mean almost exclusively the gadgets and devices that we have invented to allow us to do things—as summed up in the advertising slogan ‘the appliance of science’. But this is a very limited view of technology. My (1944 edition) Shorter Oxford Dictionary defines technology as ‘a discourse or treatise on an art or arts; the scientific study of the practical or industrial arts; practical arts collectively’. It is no accident that this definition contains the word art(s) four times and the word science/scientific only once. Technology is the art of doing things, of changing the world. We might also think of the word technique, concerning ways of doing things, whether in the arts or the sciences.

In this respect, technology is in some sense the opposite of science. Science is about understanding why the universe is as it is; it is about the rules and regulations, and the structures and regularities. Cyril Northcote Parkinson, in Parkinson’s Law, says

It is not the business of the botanist to eradicate the weeds. Enough for him if he can tell us just how fast they grow.

The ultimate achievement to which any scientist aspires is the discovery of a law; and a law of nature, just as much as a human law, is about what cannot be done—what possibly imaginable states of the universe are in fact forbidden. There is an old paradox, ‘What happens when an irresistible force meets an immovable object?’ But Newton has given us some laws, which tell us (among other things) that no force is resistible except by another force, but that any force is resistible by another equal and opposite force; and that no object is immovable.

Technology’s view of the world is quite different. For technology, the existence of the universe in its present state is a constant challenge: how do we modify it? How do we mould it to our own ends? How do we avoid these famous scientific laws, or make them work on our behalf, enlist them to our service? Of course, we cannot actually break the laws of science (though sometimes technology discovers that the scientists had it wrong, and that what they thought was a law could in fact be evaded). But the ways in which we can make use of them are many and wonderful. The laws of mechanics, including those that govern leverage, are one thing that we learnt about the universe. But when Archimedes said (as a comment on those laws) ‘Give me a place to stand, and I shall move the world’, he was talking (metaphorically at least) technology, not science.

In short, technology is about changing the universe, about knowing how to do things. Not necessarily on a large scale, of course—in fact some technology is about very small changes. But the knowing how is a necessary part. A tool or device is not technology per se; it is only technology insofar as it enables us to do things.

Furthermore, technological change requires choice. Often, technological advances are proclaimed as liberating, as simply expanding our horizons and our opportunities. But as we adopt new ways of doing things through technology, we not only leave behind older ones, we render the older worlds impossible, unattainable (as discussed, for example, in David Rothenberg’s Hand’s End). The huge social change brought about by the availability of the personal automobile, for example, has now spread to almost all corners of the world. Even if the exigencies of climate change fail to force changes in this mode of operating, such changes will perforce come about when the oil runs out. But a return to the pre-car world of 1890 is simply out of the question—we have lost those ways forever.

Information

The word information is also a little tricky, and has been used in many ways at different times and by different people. Theses and books have been devoted to the question ‘what is information?’ and to discussing the consequences of the possible answers (two examples: Luciano Floridi’s Information—A Very Short Introduction, and Antonio Badia’s The Information Manifold). However, to go down that route would take me away from my main purpose. In this book I will take a rather naïve view of information. When a speaker speaks and a listener hears and understands; when the speaker’s voice is transformed by the telephone handset into electrical signals, and possibly again into radio waves, and something at the other end does the reverse process; when a writer writes and a reader reads and understands; when someone puts data into a database, and later someone else enquires of the database, and gets out these same data in a different form but still understandable—all these are processes involving information.

The only general assumption I shall make is that there are indeed human agents involved at some point (even if I am temporarily concerned only with mechanisms and devices). That is, I shall assume that for some thing to be or carry information, there has to be at least the possibility that the result will at some time reach a human being and be understood. We may think of information as residing somehow in records, and in some sense this book is entirely about records and recorded information—but the human recipient is implicit in everything.

There are certainly notions of information that do not depend on this assumption. However, identifying or understanding some general notion of information that would encompass all these is hard.

There is a well-known theory of information, due in part to Claude Shannon (see his paper of 1948), which uses the idea that ‘information is that which reduces uncertainty’. It is possible to read such a definition as requiring no human; however, if we ask “Who or what is experiencing the uncertainty?”, it becomes clear that assuming a human (or at least a sentient being) helps with this conception as well.

Shannon himself thought of his theory as having nothing to do with human beings or with meaning. His theory had a huge influence in the years that followed, in many different fields, as described by James Gleick in his fascinating book, The Information. Gleick’s book takes off from many of the same historical starting points as will appear in the pages that follow. To Gleick, Shannon’s theory is central to the IT revolution, and encompasses all notions of information. To me (and also to Badia, cited above), for all its undoubted power and usefulness, this theory fails to address some of the central features of information in the context of human-to-human communication. I shall make no further use of it.

Language

As we have studied animals over the last half-century or so, we have come to realise that many animals have some form of communicative behaviour. We know that bees dance to tell each other about good sources of food, that whales communicate over large stretches of ocean, that chimps learn from each other. Nevertheless, the human behaviours that come under the general heading of language are extraordinary in their range and scope.

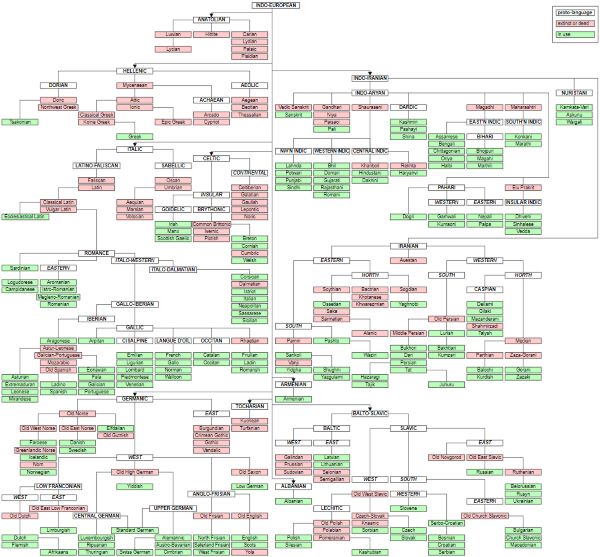

The evolution and invention of language is one of the great events in human pre-history. I say ‘evolution and invention’ deliberately. Steven Pinker argues strongly in his book of the same name that ‘the language instinct’ is exactly that, a basic instinct that has evolved and is part of what makes us human, common to all humanity. In this sense, language in the abstract does not really belong in this history of human invention. However, all those parts of language that are learnt and constructed, all the specifics of actual languages, must be regarded as invention.

The invention of a language is a continuous process. Like most inventions, but even more so than most, languages are not the product of a lone inventor in a garret, but of a social process. Every writer or speaker who uses language inventively or creatively is contributing to the invention of the language, and every writer or speaker who copies or borrows from or imitates a previous speaker or writer is also contributing to the establishment of that invention. Language, in addition to being a basic instinct, is a technology that we use to change the world, by communicating with other people, and the social process by which particular languages develop is one long invention.

We often refer to such a process of social invention as evolution. This is in homage to Darwin’s biological theory, but is not a part of it—it is an analogy rather than an application. Probably it is also the case that the biological evolution of humanity in matters relating to language continues to this day—perhaps in obviously physiological features such as voice production, but perhaps also in our language-processing capabilities. That is harder to see happening, however.

It is also hard to know much about the origins of either the social or the biological process, and I will not attempt to go into either. The true starting point of this book, the point from which a genuine technology of information takes off, is the invention of writing.

Writing

Overview

The question “What makes humans different from animals?” has been asked many times, and answered in many different ways—including, of course, the answer “They aren’t!”. Other answers have included intelligence, language, abstract thought and many other things. However, many of these possible answers have been undermined by discoveries in relation to other species. But it may be argued that one characteristic that really does distinguish us from other species is writing. Whether or not you feel the necessity for such a distinguishing feature, writing is certainly a strong candidate.

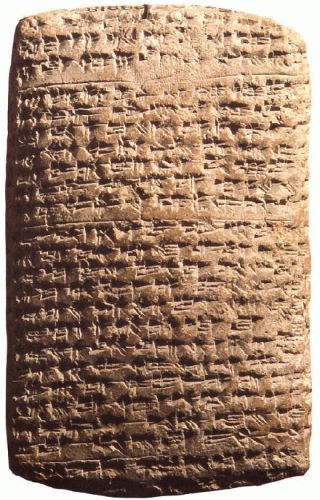

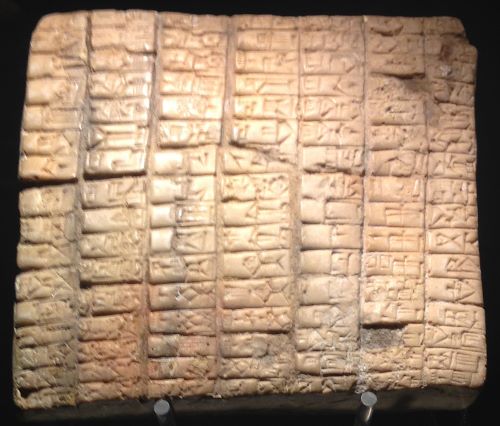

Writing began, we believe, some time in the fourth millennium BCE—say five-and-a-half millennia ago—in Mesopotamia. A great account of the development of writing is given by Andrew Robinson in The Story of Writing. The first purposes of writing were relatively mundane; certainly not the recording of human history. They had to do with commerce and administration—with accounting, recording transactions, listing stock, identifying ownership, and so on. Later writing came to be used to glorify leaders, and to tell stories. These stories (and the people who wrote them) did not distinguish between myth and history. Later still came chronicles and real history, and philosophy and science and religious tracts and laws and administrative rulebooks and poetry and advertising and all the rest.

But the receipts and the tallies and the laundry lists that started it off, however mundane, are central to the history. The kinds of information they represent are the mothers of invention. The people who invented writing felt the need to do several things. They wanted to supplement their own memory, they wanted to organise and impose order on the world, and they wanted to be able to inform others of the validity of their own memory and their organisation of the world.

Later I will expand these reasons for writing.

Systems of Writing

In order to make this invention of writing work, for any of the purposes mentioned, we need to have a notion of a system of writing. In principle, any mark (on paper or skin or stone or cloth or in clay or whatever) could mean anything we choose it to mean, as in Humpty Dumpty’s way with words (‘“When I use a word … it means just what I choose it to mean”’—Through the Looking-Glass, Lewis Carroll). But that is not a lot of use unless there is some reasonable chance that someone, either the writer at a later date, or someone else to whom the message is directed, will be able to recognise the meaning. So we have to be systematic, at least to some degree, about assigning meaning to marks.

The same problem had arisen long before, in language generally. We already have some notion of the relationship between words and meaning: we can say in some way what a word means, and then construct new sentences out of existing words, whose meaning can be inferred from a knowledge of the words. Of course, this is a gross oversimplification of the notion of meaning, and in any case our present notion of words is rather highly dependent on written language. Consider for example German, where the written form allows some words to be run together to build longer words, or Chinese, which has no word boundaries in its written form. Nevertheless, it is a useful starting place for writing.

At the very least, we might expect our system of writing to tie in with the words of our language, in the sense that the same word is represented in the same way when repeated. This assumes that written language does indeed represent spoken language, and that spoken language is made up of words. It provides us with one of the major ways in which early written languages were constructed—with symbols that may start as stylised pictures representing words.

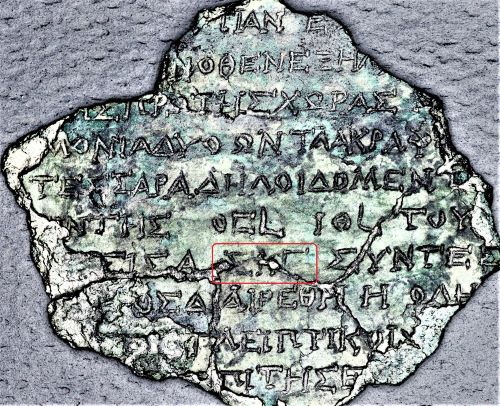

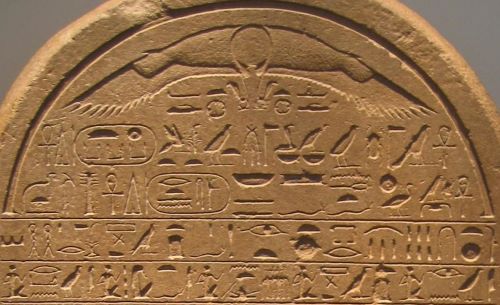

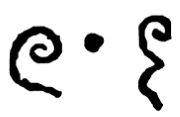

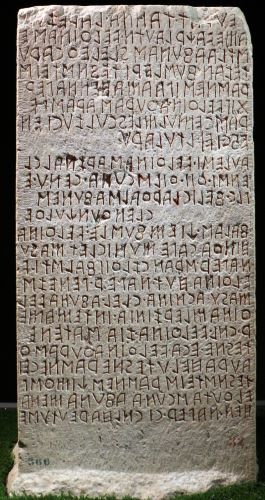

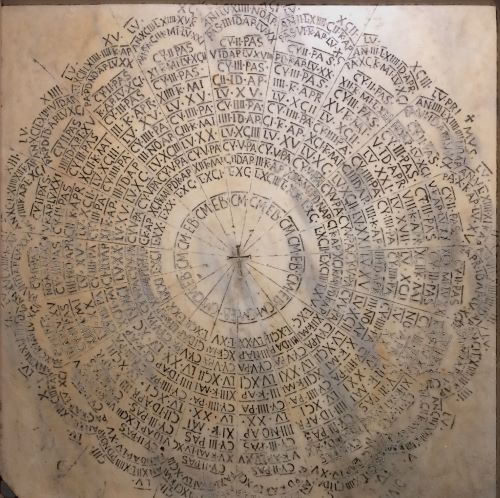

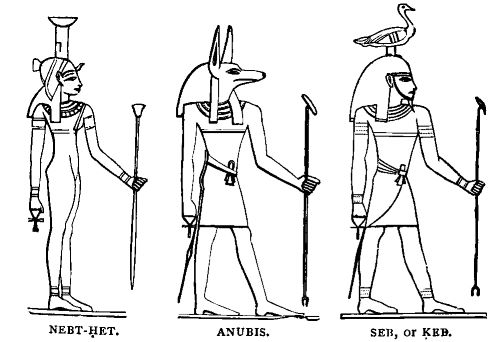

The system of writing that most clearly illustrates this method is ancient Egyptian—see Figure 1. Small pictures can be seen in many examples of Egyptian writing, and these can sometimes be translated via the words that the pictures represent (though actually ‘ancient Egyptian’ covers several different writing systems). But even the Cuneiform system, the wedge-shaped marks in wet clay that came out of Mesopotamia, has similar roots.

In the earliest writing systems, a method based on puns was commonly used. That is, you may want to represent a word about which it is hard to draw a picture (an abstract concept, say). Then one of the methods open to you is to draw a picture of another, more concrete word, which sounds similar, and allow the punning picture to represent the abstract word. This picture now comes to represent the sound rather than the concept that it originally pictured. For example (using modern English words) if I want to represent the word son (‘my son’), I would find it difficult to represent that meaning directly with a picture. But the language has another word which is pronounced in the same way and represents a very concrete physical object, sun. A picture of the sun will do quite well to represent the word ‘son’, almost certainly unambiguously in the context of a sentence.

This process can be taken one interesting stage further. If the word you want to represent has multiple syllables, it may be hard to find another word that sounds like it. However, you may be able to divide it into smaller parts (shorter words or single syllables or even alphabet-like sounds) and draw a picture to stand for each of the parts. Then you have a representation of the abstract word as a combination of pictures. You have taken a vital step towards a modern system! When each symbol represents a syllable of sound, we have a syllabary. Several such systems were invented, and indeed they still exist in languages such as Chinese and Japanese.

The Alphabet

For two thousand years or so, writing systems developed slowly; new systems were invented, and borrowed ideas from each other or introduced new ones, but the changes were not huge. Then, around the late second millennium BCE and the beginning of the first, a huge change took place. The alphabet was invented. In the account that follows, I have oversimplified many things—and borrowed a great deal from John Man’s book, Alphabeta.

When children in cultures with alphabetic written languages are taught to write, they learn about alphabetic characters and sounds. The idea is that letters represent sounds, and that you can at least to some degree work out what (spoken) word is intended by putting together the sounds of the individual letters of the written word. It seems to be suggested that the natural units of sound are those encapsulated in the letters.

Part of the theory of spoken language is based on the view that the smallest unit of sound that can be distinguished is something like a letter—a phoneme. But this is somewhat misleading, because it is hard to pronounce individual letters. Vowels may be pronounced on their own, but consonants usually need the addition of a vowel before we can actually speak them (hence the way, in English, we vocalise the alphabet as bee, cee, dee, eff etc.). In some sense, the natural units of sound in terms of which spoken language may be understood are not really letter-like; they are much more akin to syllables. Which makes the first stage of developing an alphabet—a syllabary—readily understandable, but lends an air of mystery to the next stage.

So let’s step through the process. We have already seen how puns may be used, and how (as a result) a word may be broken into smaller words before it is written down. If we follow that process to its conclusion, we would try to think of an elementary set of single-syllable words, represent them as best we could (by pictograms or whatever), and then construct all multi-syllable words as combinations of these elementary words. Modern Chinese illustrates this approach very well. (At this point I am skating over some rather complex notions of the relation between spoken and written language, which certainly come into play with Chinese.)

But a syllabary—a set of symbols to represent every possible spoken syllable—is a clumsy thing. From our vantage point of an alphabetic system, we may think of generating syllables from every possible consonantal sound, followed by every possible vowel sound, followed by every possible consonantal sound. There may easily be thousands of such combinations, therefore thousands of different symbols required, all of which have to be learnt (as any Chinese schoolchild will tell you!).

How could we simplify it? Well, we need a couple of historical accidents. First, we need a language in which consonant sounds are always followed by vowel sounds—so that we can associate each consonant with its following vowel and not the preceding one. Among modern languages, both Japanese and Italian have some of this character. This means that we can get away with an open syllabary or ‘abugida’ (equivalent to consonant-vowel instead of consonant-vowel-consonant). An open syllabary can be very much smaller than a closed one. Modern Japanese makes use of three different scripts, two of which are essentially open syllabaries. For example, the hiragana script has 46 base characters.
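The difference in size between a closed and an open syllabary can be made concrete with a little arithmetic. The sketch below assumes a purely hypothetical language with 20 consonant sounds and 5 vowel sounds (figures chosen for illustration, not taken from any real language) and counts one symbol per possible syllable.

```python
# Hypothetical sound inventory, chosen only for illustration.
CONSONANTS = 20
VOWELS = 5


def closed_syllabary_size(consonants: int, vowels: int) -> int:
    """One symbol for every consonant-vowel-consonant syllable."""
    return consonants * vowels * consonants


def open_syllabary_size(consonants: int, vowels: int) -> int:
    """One symbol for every consonant-vowel syllable."""
    return consonants * vowels


closed = closed_syllabary_size(CONSONANTS, VOWELS)
open_ = open_syllabary_size(CONSONANTS, VOWELS)
print(closed, open_)  # 2000 100
```

Under these assumed figures the closed syllabary needs twenty times as many symbols as the open one, which is the kind of saving the argument relies on.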

As a second historical accident, we need a language in which the vowel sounds do not vary too much. If most consonants, most of the time, are followed by ‘ah’ sounds, then we may be able to do without the vowels altogether. Modern Arabic is like this—it can be written without the vowels, and still be understood by the reader, because the vowel sounds are sufficiently predictable, and the ambiguities that sometimes arise can generally be resolved easily enough by context. Now all we need is a consonant alphabet or ‘abjad’—say 20-30 symbols.

This sequence of events probably took place in the second half of the second millennium BCE, around the eastern end of the Mediterranean and the Horn of Africa. One of the cultures to adopt a consonantal alphabet was that of the Phoenicians, a people who traded throughout the Mediterranean region around the turn of the millennium. The consonantal alphabet was broadcast widely, and its survival was ensured. Note once again that it was the necessities of trade, rather than of literature or philosophy or history or science, that drove this spread.

The final step towards the modern alphabet was an explicit invention, made by the ancient Greeks in the very early first millennium BCE. They observed the Phoenician system and realised just how useful and powerful an alphabetic system of writing could be. Unfortunately their own language was rich in vowel sounds, and would have resisted a purely consonantal solution. So they invented vowels to represent the vowel components of the sounds of language. Well, actually, they borrowed some of the symbols previously used as consonants, but which were not required for their language, and re-assigned them as vowels. And the modern alphabet was born.

Later, of course, the Greeks would invent history and philosophy, and bring science and mathematics and many of the arts to new heights (I exaggerate only slightly!). Beside these, the final step in the invention of the alphabet might seem like small beer. Nevertheless, it is hard to overstress its influence.

As this account suggests, the alphabet was (we believe) invented only once, albeit in several stages. It seems that the syllabary, which is perhaps the more obvious development of the idea of trying to represent the sounds of words, was reinvented more than once. But the alphabet is something altogether more peculiar. Its economy, the fact that we can get away with some 25 symbols to represent the entirety of our language, including words that have not yet been coined, is nothing short of astonishing. And its implications are going to reach far into the following 3000 years.

Numbers

Overview

Having achieved the astonishing knowledge that we only need a small number of symbols to represent the whole of past and future language, let us put general language aside for a while and think about numbers. Numbers figured strongly in early writing systems, being a very important component of the kinds of information we wanted to represent. And given that we have words for them, we can (in principle) write them down using the same system. However, they do have some peculiar characteristics, which we should perhaps worry about.

For one thing, beyond a certain point we need to be systematic about how we name numbers—we can’t simply coin a new name for every new number we come across: there are far too many of them. This applies as much in spoken language as in writing, though whether the development of systematic ways of constructing names for numbers preceded the development of writing is not clear. Secondly, it seems obvious (though again, when it became obvious is not clear) that it makes sense to use the characteristics of numbers to guide us in devising a systematic representation. Thus if we can see a new number as the sum of two numbers for which we already have names, then it might make sense to use the names of the two known numbers to construct a name for the new one. Of course this requires that the idea of addition is already understood, but the early uses of writing suggest that this was the case.

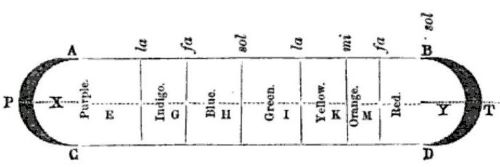

More generally, we would like the representations of numbers (verbal and/or written) to help us with the kinds of operation that we want to do with them. This general principle will take a long time to reach its final fruition—the Arabic number system with which we are familiar today. But in the meantime, early literate civilisations such as the Babylonians and Egyptians developed number systems of some sophistication, and the great mathematicians of classical Greece explored some of the ramifications.

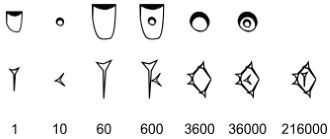

Systems of Numbering

Before the alphabet took hold, numbering systems tended to use special symbols. The basic principle, that you have a symbol for each number of a special set, and indicate intermediate numbers (which don’t have their own symbols) as sums (additions) of these basic numbers, was established by the Sumerians in Mesopotamia and remained in place until the Arabic system took over. In the Sumerian system, the special numbers were one, ten, sixty, six hundred, and so on. Our 60-minute hour is supposed to be a relic of that system. But we are now much more familiar with the Roman system, where the special numbers are one, five, ten, fifty, etc.
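The additive principle can be sketched in a few lines. The function below is an illustration only: it takes any set of special numbers and breaks a number into a sum of them, largest first. The function name and the greedy strategy are assumptions made for this sketch, not a reconstruction of how any ancient scribe actually worked. The Roman special numbers are used as the example set.

```python
def additive_decompose(n, specials):
    """Express n as counts of special numbers, taking the largest first."""
    if n < 1:
        raise ValueError("an additive system of this kind has no zero")
    parts = []
    for value in sorted(specials, reverse=True):
        count, n = divmod(n, value)
        if count:
            parts.append((value, count))
    if n:
        # Only reachable if the special set does not include 1.
        raise ValueError("number cannot be built from these special numbers")
    return parts


# Roman special numbers: one, five, ten, fifty, and so on.
ROMAN_SPECIALS = [1, 5, 10, 50, 100, 500, 1000]

print(additive_decompose(78, ROMAN_SPECIALS))
# [(50, 1), (10, 2), (5, 1), (1, 3)]
```

Read as symbols, the result is one fifty, two tens, one five and three ones, that is, LXXVIII. Any other set of special numbers can be passed in the same way.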

By the time of the Romans, the alphabet was established, and they did not need to devise special symbols for their special numbers—they followed the general principle of re-using their existing small set of alphabetic symbols for a new purpose. However, as we shall see later, this is not a true alphabetic solution to the number-representation problem.

The Greeks actually had a variety of number systems. One of their methods had separate symbols for each of the numbers from one to ten, then twenty, thirty, forty, etc. This required 27 symbols to reach what we would now call 900, allowing numbers up to 999. This was a rather profligate use of symbols: they used the letters of their alphabet, but had to borrow a few extra ones from someone else’s alphabet. Also, 999 was rather early to stop, so they then repeated the alphabet but with a special extra mark on each letter, to get them up to 999,999. But it gave a rather more compact representation of numbers than the Roman one.
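As a rough sketch of this scheme, the code below uses the letter assignments usually given for Greek alphabetic numerals: nine letters for the units, nine for the tens and nine for the hundreds, including the archaic letters stigma, koppa and sampi. It covers only 1 to 999. The extra mark used for thousands is left out, and the function name is hypothetical.

```python
UNITS = "αβγδεϛζηθ"     # 1 to 9
TENS = "ικλμνξοπϙ"      # 10 to 90
HUNDREDS = "ρστυφχψωϡ"  # 100 to 900


def to_greek(n):
    """Write n (1 to 999) with at most one letter per decimal place."""
    if not 1 <= n <= 999:
        raise ValueError("this sketch only covers 1 to 999")
    hundreds, rest = divmod(n, 100)
    tens, units = divmod(rest, 10)
    letters = ""
    if hundreds:
        letters += HUNDREDS[hundreds - 1]
    if tens:
        letters += TENS[tens - 1]
    if units:
        letters += UNITS[units - 1]
    return letters


print(to_greek(11))   # ια
print(to_greek(500))  # φ
```

No number below one thousand needs more than three letters, which is where the compactness relative to the Roman system comes from.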

A characteristic of all these number systems is that they run out. A point is reached where you have exhausted all the allowable combinations of all the defined symbols, and simply cannot represent the next number (without, that is, defining a new symbol for the purpose). Probably this did not, in general, bother the pragmatic Romans—as engineers and administrators they had the range of numbers that they required, and abstract notions of numbers which they could not represent, but would not need in any case, were of no concern.

But the limitation has some interesting Roman consequences. Consider for example Julius Caesar’s books about his military campaigns. His armies or smaller forces are always measured in cohorts and legions, rather than in men. Those were, no doubt, convenient units to use; but it is also the case that Caesar would have had difficulty in expressing the size of his army as a number of men. The Roman system contained both names and symbols up to M (1000), and could therefore represent numbers up to 3999: if we wanted to represent 4000 in the usual Roman system, we would need a symbol for 5000, just as 400 (CD) makes use of the symbol for 500 (D). But a legion was between 3000 and 6000 men, and Caesar normally had several legions under his command. When he referred to the armies against him, he tended to use a mixture of numbers and words, such as ‘LX mille’—60 thousand, or 60,000. This is not unlike the modern habit of mixing numbers with the words ‘million’ or ‘billion’, but is forced on Caesar. In effect, he has to treat ‘one thousand men’, in words, as a single unit and then apply the usual numerical system.
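The ceiling at 3999 is easy to see in code. The sketch below uses the familiar subtractive convention (CD for 400, and so on) and refuses anything above 3999 because there is no symbol for 5000 to build on. The second function, whose name and output format are assumptions made for illustration, imitates Caesar's workaround of counting in thousands.

```python
ROMAN_PAIRS = [
    (1000, "M"), (900, "CM"), (500, "D"), (400, "CD"),
    (100, "C"), (90, "XC"), (50, "L"), (40, "XL"),
    (10, "X"), (9, "IX"), (5, "V"), (4, "IV"), (1, "I"),
]


def to_roman(n):
    """Roman numeral for n, which must lie between 1 and 3999."""
    if not 1 <= n <= 3999:
        raise ValueError("no Roman numeral in the usual system for this number")
    out = []
    for value, symbol in ROMAN_PAIRS:
        count, n = divmod(n, value)
        out.append(symbol * count)
    return "".join(out)


def thousands_style(n):
    """Treat 'one thousand' as a unit, then number the units as usual."""
    thousands, rest = divmod(n, 1000)
    if thousands < 1:
        raise ValueError("use to_roman for numbers below 1000")
    text = to_roman(thousands) + " mille"
    if rest:
        text += " " + to_roman(rest)
    return text


print(to_roman(400))           # CD
print(to_roman(3999))          # MMMCMXCIX
print(thousands_style(60000))  # LX mille
```

Calling `to_roman(4000)` raises an error, which is the limitation in miniature.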

However, these limitations on all such number systems most certainly did bother the great classical Greek mathematicians. Archimedes, in the third century BCE, was particularly exercised, and invented his own number system which allowed him to express seriously large numbers (as an illustration, he calculated the number of grains of sand in the universe). But it was still essentially limited by an upper bound on the numbers that could be represented, albeit a very large upper bound.

A true alphabetic solution to the number representation problem eluded even the Greeks. It had to wait another millennium or so.

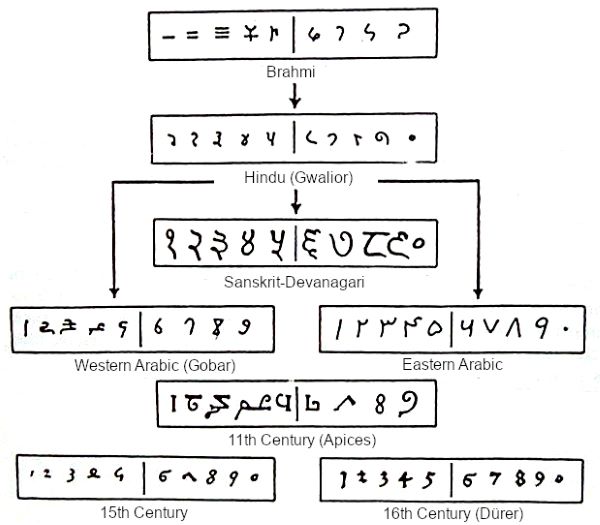

The Great Hindu Invention

The positional notation that we use today, whereby the same symbol can stand for many different numbers (for example, a “1” can mean one or ten or one hundred, depending on its position) was the revolution we needed. This in turn depended on the zero, as a position marker for an otherwise empty position.
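A minimal sketch of how position gives a digit its value follows. The function name is hypothetical; it reads a list of digits from the most significant end, multiplying by ten at each step.

```python
def positional_value(digits, base=10):
    """Value of a digit sequence, most significant digit first."""
    value = 0
    for digit in digits:
        if not 0 <= digit < base:
            raise ValueError("digit out of range for this base")
        # Shift everything read so far one place left, then add the new digit.
        value = value * base + digit
    return value


print(positional_value([1]))        # 1
print(positional_value([1, 0]))     # 10
print(positional_value([1, 0, 0]))  # 100
print(positional_value([1, 0, 1]))  # 101
print(positional_value([1, 1]))     # 11
```

The last two lines show the work done by the zero: without a marker for the empty middle position, 101 and 11 would be written identically.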

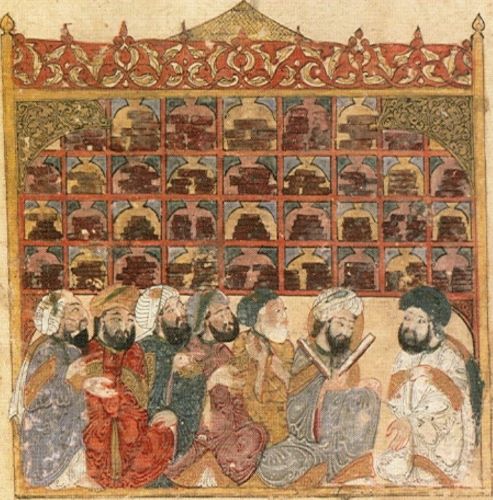

This invention was made by Hindu mathematicians in about the seventh century CE. Though once again, I am summarising with gay abandon a complex and not fully understood process, which may have occurred independently in different places at different times, and/or may have been influenced by earlier ideas—there is a great account in the book by Robert Kaplan, The Nothing That Is. The idea that reached the House of Wisdom in Baghdad, then the centre of the civilised world, was formulated as a general system of both numbering and arithmetic, and re-exported to the rest of the world as the Arabic system—as described in Jim Al-Khalili’s Pathfinders. One of the masters of this formulation was the Persian mathematician Al-Khuwārizmi, whose name has given us the English word algorithm.

The Arabic system provided for numbers what the alphabet had provided for words—a way of representing any number, including those for which no-one has yet found a need. The ten decimal digits and the positional notation allow for the representation of any positive whole number. The Arabs also had a precursor to our (relatively modern) decimal point or comma for representing the decimal part of a number—allowing the same rules of arithmetic to apply to non-whole numbers too. It also provided a simple set of rules for arithmetic operations, again applicable to all numbers, which stood us in good stead a millennium later when we began to try to mechanise arithmetic.
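The simplicity of these rules is what makes them mechanisable. As an illustration, here is the schoolbook rule for addition written out as a sketch: work from the right, add the digits in each position, and carry into the next. The function name is hypothetical, and the inputs are assumed to be strings of decimal digits.

```python
def add_decimal(a, b):
    """Add two non-negative whole numbers given as strings of decimal digits."""
    result = []
    carry = 0
    i, j = len(a) - 1, len(b) - 1
    while i >= 0 or j >= 0 or carry:
        digit_a = int(a[i]) if i >= 0 else 0
        digit_b = int(b[j]) if j >= 0 else 0
        # One position at a time: write the digit, carry the overflow.
        carry, digit = divmod(digit_a + digit_b + carry, 10)
        result.append(str(digit))
        i -= 1
        j -= 1
    return "".join(reversed(result))


print(add_decimal("999", "1"))   # 1000
print(add_decimal("3999", "1"))  # 4000
```

The same few lines work for numbers of any length, and the result simply grows a new position when it needs one. Nothing in the notation ever runs out.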

Following the original revolutionary invention of writing, these two revolutionary and not at all obvious inventions, the alphabet and the Arabic numbering system, are two of the cornerstones of the developing technology of information. In ways that are quite unimaginable to their progenitors, they will reverberate down the centuries: they will give us ideas, and allow us to think things, that would otherwise have been, quite simply, beyond our ken.

Sending Messages: The Post

Overview

Why do we want to write things down? Here are some (not exclusive) reasons:

- in order to organise our thoughts

- in order to remember (remind our future selves)

- in order to communicate with someone else

- in order to communicate with many other people.

The first, organising information, I will discuss later, in the section “Organizing Information”. The second, writing as a memory device, I will simply assume. This section and the next two are devoted to the idea of sending messages, over space and (usually of necessity) over time. We are concerned with the occasions when the author of the message and the intended recipient(s) are apart, and the message cannot be passed by simply talking across a room.

Messengers

You don’t absolutely need to write something down in order to send a message to another person. A human intermediary, who can remember a spoken message, go and find the recipient, and repeat it (exactly or in essence) is of course a perfectly plausible means, which has been used no doubt since spoken language was invented and continues to this day. Many early societies relied heavily on such messengers.

But one of the reasons why writing is so important is exactly that we no longer need to rely on the memory of a single messenger. Relying on memory is hardly feasible if the message might have to pass through many intermediaries before it gets to the recipient. If the sender can write the message, or cause it to be written down, then she can be much more confident that the recipient will receive what she intended, and not some garbled version.

Once you have a system of writing, it is possible to think about systematising the transmission of messages.

The Medium

One limitation in this regard is the medium used for writing.

The clay tablets of ancient Mesopotamia were not terribly suitable for carrying around over distances—they were better suited to local record-keeping, individual memory or message transmission over time rather than space. Carved stone is even harder to move around (despite the story of Moses bringing the tablets down from the mountain). So serious letter-writing had to await the invention of a suitably transportable medium.

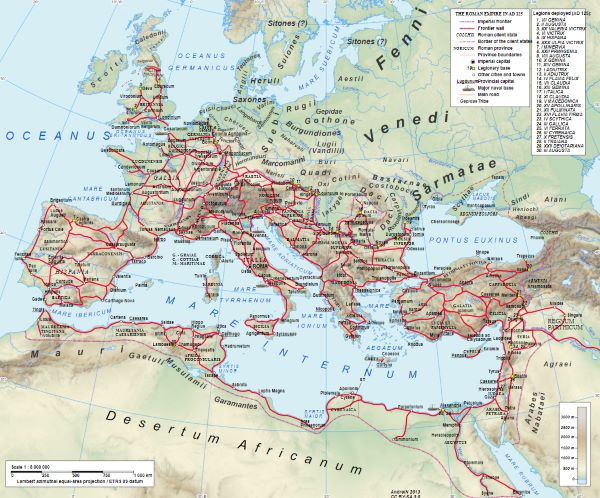

Over the millennia, several such media have found use. But pride of place in the classical world belongs to papyrus. Made from strips of pith cut from the stem of the papyrus plant, pressed together and dried, this medium could be used to construct very substantial messages; whole books were written on papyrus scrolls.

From the time of its invention by the Egyptians, probably in the fourth millennium BCE, the papyrus scroll acquired a huge importance in the affairs of empires. If you want to run an empire extending over a large area, you need effective means of administering it. One requirement is effective communication. In a relatively static hierarchical society such as the Egyptian, where you may have been able to rely on the people in power locally knowing how they were supposed to run their domains, this may not be such a critical requirement. But if you want a dynamic, highly interactive structure, this requires systematic communications. The obvious example here is the Roman Empire.

Many other empires, both earlier and later than the Roman, failed at least in part because they did not have such systematic communications. Of course other things are also necessary, but it is hard to exaggerate the importance of this component. Furthermore, if you are dependent for this on the papyrus plant, control of the papyrus supply becomes a vital factor in the survival of your empire.

Roads

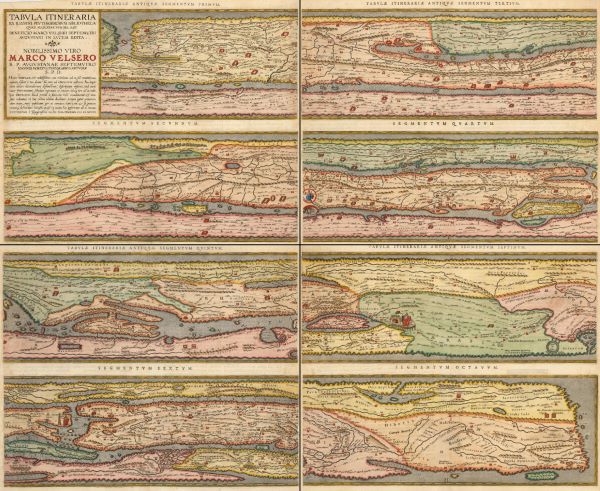

The destination of your message may be just across town, but again, if you have an empire to run, it may be days or weeks away. For a large part of our history, the best way to send anything (goods or letters) across any distance involved boat journeys. But boat journeys are slow and perilous—and they often have to go a long way round. If messengers are to carry your written message at some speed over great distances, they will need roads. Some roads are established simply by people walking them, but your budding empire may need some more reliable and extensive system. Again, the champions here are the Romans.

The Romans are famous for building roads. Straight, well-made roads ran the length and breadth of the Roman empire. For whom were they built? Partly for the soldiers or the administrators: a legion or a governor doing a turn of duty in a remote province would use the established roads where possible, though of course the soldiers at least normally had their main activities in areas not well covered by roads. They may have been built partly for the tradesmen—Rome depended very heavily on trading, and some goods were traded over large distances. But trade was primarily a private concern, and the access that the tradesmen had to roads was a by-product rather than their primary purpose.

But the main reason for the road-building activity of the Romans was for the messengers. The road network, together with the boat routes across and around the Mediterranean, formed the primary communications network of the empire.

In more recent times, for example in the Victorian era, the word ‘communications’ came to refer just as much to the road and rail networks as to, for example, the postal system. This is no accident. Road and rail, and the shipping lanes, were as much about communicating information as they were about moving people and goods.

The Cursus

Efficient empire-wide communication to serve the needs of imperial administration needs to be highly systematic. An official in Rome who wants to instruct another official in one of the far-flung provinces will need to entrust his message to a (human) system, with the confidence that it will reach its destination. Thus was the concept of a postal system born.

Several early empires had postal systems for this purpose; these were not generally accessible to private individuals, but for the use of government only. But, once again, the Roman system introduced by the emperor Augustus was second to none. Called the Cursus Publicus, it relied on supplies of messengers running or riding stages and fresh horses at each stage. There were two classes of post—the normal class could be expected to cover 50 miles a day, but urgent letters could go at twice that speed. Its domain was the whole of the Roman empire, and it played a significant role in the success of that institution.

When the Roman empire fell apart, and was replaced by many local administrations, often warring petty kingdoms, both the system of roads and the postal system declined too. The kind of speed with which a Roman official could get a letter to (say) a governor in Gaul was not rivalled again until the end of the eighteenth century.

To the east, some five centuries before Augustus, the Persian emperor Cyrus had initiated a postal system called the Chapar Khaneh. Later, after the decline of Rome, during the (relatively) Dark Ages in Europe, a system called the Barīd was established in the Islamic world. An account of these systems is given by Adam Silverstein in Postal Systems in the Pre-Modern Islamic World.

Postal systems in the ancient world, being primarily organisations for the benefit of the rulers and the government, were closely associated with espionage—one of their main functions was to enable the rulers to discover all they thought they needed to know about what was going on in their domains.

The Birth of the Modern Postal System

The Cursus was confined to government business, but in medieval times, some non-government organisations (some universities, for example) were large enough to require their own internal messenger services. The idea of an organisation devoted to providing this service to individuals and other organisations emerged gradually from this need.

The most successful of these private firms, by a long way, was Thurn und Taxis. This started as a private Italian family business, but in the fifteenth century, the family acquired from the Hapsburg emperors a licence—in effect, a state-assigned monopoly—to run all the postal services throughout the Holy Roman Empire. Thurn und Taxis held this monopoly for a little over 300 years. The family were variously ennobled by successive emperors until by the end of the seventeenth century they were princes.

They built a modern and (at its best) highly efficient postal service of a sort we might recognise today. They carried government and private letters, and had an extensive distribution system based, like the Cursus, on horse relays with staging posts between the major cities of the empire. It was they who, by the end of the eighteenth century, could rival or beat the kinds of mail delivery speed established by the Cursus.

But, again like the Cursus, they depended on the authority of the state they served. As stronger national governments developed in Europe, they saw a foreign-run postal service as a threat to their own control over their communications. Countries began to develop their own postal systems. Issues concerning the relation between government and private enterprise, all too familiar today, complicated the development process. On the one hand, some governments preferred a system that was run entirely for their benefit, not serving the public in any way. On the other hand, they were not too keen on any purely private postal service being outside their control. One of the concerns, which again is familiar today, was with security—just think of the horrors that might arise if conspirators were able to communicate freely by letter!

What gradually emerged as the standard approach was to have a government-owned and -run postal monopoly, offering services to the public. The postal charges were often treated by government as a form of taxation, which could be raised to pay for a war or whatever else was required.

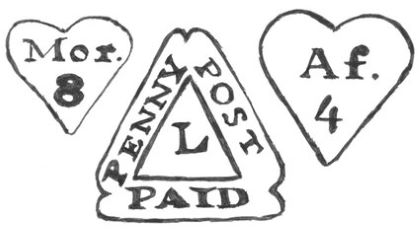

A good example of this ambiguous relationship was the experience of William Dockwra in London in the late seventeenth century. He organised a private ‘penny post’ in London, which quickly became very successful. But its success alarmed the authorities, and they (almost equally quickly) took it over and merged it with the public service.

The Penny Post

Actually, one of the most significant subversive uses of the public postal system arose from the cost of sending a letter. The usual system of payment was for the sender to send the letter without payment, and for the postman to collect the required fee from the recipient on delivery. The fee could be high and quite complex, depending not only on weight but also on the distance travelled and perhaps on the route taken. But it was not hard to work out that simple messages could be coded, for example, by the way the name and address of the recipient was written on the envelope. So when the letter was delivered, the recipient could look at it and then return it to the postman, refusing to pay, on grounds of poverty or whatever—having nevertheless understood the message from the sender.

The obvious solution to this problem, from the point of view of the authorities, was to force prepayment. But it took an enlightened visionary, Rowland Hill (together with another who will reappear later in this book, Charles Babbage), to see that this was only part of the solution. Prepayment would actually make the system much more efficient anyway, because delivery would not depend on the postman finding the recipient at home. Hill not only understood this, but also realised that the cost of delivering a letter depended very little on distance, and that a cheaper service would be used very much more widely. When the Penny Post, with prepaid postage stamps, was introduced in Britain in 1840, the effect on the postal service was immediate and far-reaching. It became the universal communications medium, accessible to everyone.

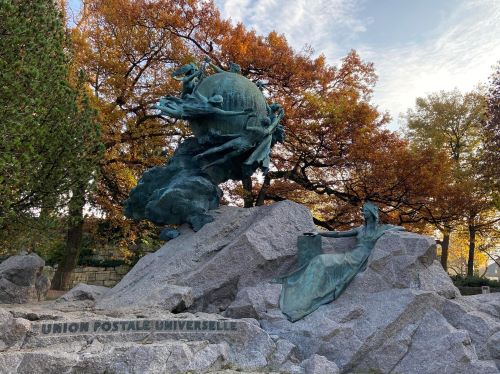

The Universal Postal Union

National postal organisations such as the British Post Office gradually unified and simplified their own internal services, but international mail was a different matter. In order to send an international letter, you would have to know the route and how it was going to be charged by the various carriers involved. Certain national post offices had bilateral agreements with each other, but these might involve a specific fee for each letter. A letter might have to cross several countries in the course of its journey.

All this complication was swept aside in 1874, with the Bern agreement based on Heinrich von Stephan’s proposal for a General Postal Union. This laid the foundation for what came to be called the Universal Postal Union. This was a union of national postal services, agreeing to carry each other’s international mail to its destination without further charge or accounting on specific items. Initially twenty-two countries joined, but very rapidly it expanded to include practically all postal services throughout the world.

This was a truly revolutionary move, a supra-national agreement to allow a simple system of point-to-point communication across the globe. Anyone could send a letter to anyone else in the world (well, at least to an address, a location). The Universal Postal Union must be regarded as one of the great triumphs of civilisation.

The Heyday of Post

Universal literacy, with the help of the Penny Post and the Universal Postal Union, ushered in a golden age for postal services. Letter-writing took off as never before. Before radio, before the telephone, long before the arrival of the Internet, the world became a connected place.

From the vantage-point of the twenty-first century, when we have such a variety of ways of communicating, and when the postal service has largely degenerated into a mechanism for delivering purchased goods and spam, it is difficult to imagine the importance of post in the nineteenth and early twentieth centuries. It is also a little difficult to get a grasp of how efficient the service could be. The following letter to the editor of The Times of London reveals not only the efficiency (despite the author’s protestations to the contrary), but also the importance attached to it:

May 25th, 1881

Sir,—I believe that the inhabitants of London are under the impression that letters posted for delivery within the metropolitan district commonly reach their destination within, at the outside, three hours of the time of postage. I myself, however, have constantly suffered from irregularities in the delivery of letters, and I have now got two instances of neglect which I should really like to have cleared up. I posted a letter in the Gray’s Inn post office on Saturday, at half-past 1 o’clock, addressed to a person living close to Westminster Abbey, which was not delivered till next 9 o’clock the same evening, and I posted another letter in the same post office, addressed to the same place, on Monday morning before 9 o’clock, which was not delivered till past 4 o’clock in the afternoon. Now, sir, why is this? If there is any good reason why letters should not be delivered in less than eight hours after their postage, let the state of the case be understood; but the belief that one can communicate with another person in two or three hours whereas in reality the time required is eight or nine, may be productive of the most disastrous consequences.

I am, Sir, your obedient servant. K.

I would not be surprised if the letterboxes which K used are still there, but if you were to post a letter nowadays, at Gray’s Inn at 1.30 p.m. on a Saturday, it would not even be collected from the letterbox before Monday.

The importance of the postal system in the late 19th and early 20th century is indicated by the following statistic: at the start of the First World War, the totality of the Civil Service in Britain was approximately 168,000 people, of whom about 124,000 were employed by the Post Office. During the First World War, the postal service contributed greatly to the public perception of the war, at least for those who were in correspondence with soldiers at the front, a perception very far removed from the picture provided by the news media. This sense is vividly conveyed in Vera Brittain’s book Testament of Youth. In a way, despite the inevitable delays of post in wartime, it evokes the kind of feeling of immediacy achieved by television in later conflicts such as Vietnam.

In the 1930s, the British General Post Office produced a wonderful documentary called Night Mail. With words by W. H. Auden and music by Benjamin Britten, this short film celebrated a mail-train journey the length of Britain, and at the same time caught the essence of the postal service, as it was seen by the public who used it.

The Decline of Post

Old media seldom die, but they change. A succession of developments (telegraph, telephone, email and so on) have taken their toll on the concept of a postal service. Paper documents are still important, but for different reasons from those which inspired the letter-writers and -readers of the nineteenth and twentieth centuries. No doubt there are still people in the world who wait on the arrival of the post in the same way that K or Vera Brittain did, but this particular manifestation of the global village is surely in decline.

Sending Messages: Electricity

A New Medium

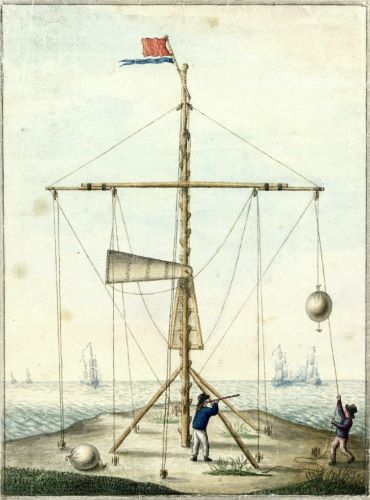

The ideal method of sending messages over a distance would not involve the physical transfer of an object at all. The use of bonfire beacons is an old method suitable for a limited number of tasks; slightly more sophisticated is the smoke signal. Both of these have a venerable history. A more recent (eighteenth-century) idea was semaphore, sometimes used for naval signalling, using hand-held flags or mechanical arms, involving a simple alphabetic code. But major developments in this direction arose from the evolving understanding of electricity. The idea of using electricity for point-to-point communication is almost as old as the serious investigation of electricity as a physical phenomenon. It is certainly older than the notions of using electricity for power, heat or light.

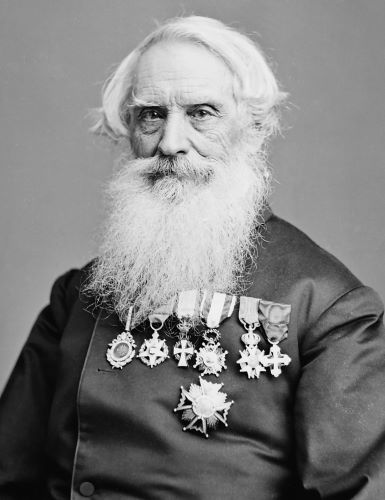

Various systems of signaling using electrical methods were proposed in the early nineteenth century, but the one that had the greatest impact was the system of telegraphy devised by Samuel Morse. This, unlike the earlier proposals, used just one wire, but had a distinct electrical code for each letter of the alphabet. This is the famous Morse Code, consisting of dots and dashes (short and long signals), still occasionally in use today. (At the time of writing this paragraph, one particular brand of mobile phone has, as its default audible signal for the arrival of a text—that is, an SMS—message, the letters SMS in Morse code.)

Morse’s electrical system, transmitting codes down a wire, takes us one small step further from visual signals, which is nevertheless a giant leap towards the huge developments of the late twentieth century.

We might also notice how the invention of the alphabet, some three millennia earlier, paved the way. Given that we can construct any message using only the letters of the alphabet (perhaps with a few extra characters such as digits and some punctuation), the notion of using a similar small number of codes, which may be manipulated by some physical mechanism, is simple but revolutionary. Now we can transmit any message in our language, via writing and the alphabet, using on-off electrical pulses sent along a single wire. It’s enough to blow the mind.
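The step from letters to on-off pulses can be illustrated with a short sketch. Some assumptions need stating loudly: the table below is the later International Morse code, which differs from the code Morse himself first used; the timing (a dot is one unit on, a dash three, with gaps of one, three and seven units inside letters, between letters and between words) is the usual international convention; and the names `MORSE`, `encode` and `to_pulses` are my own. Only the 26 letters are included, to keep the table short.

```python
# International Morse code for the 26 letters (an assumption: not Morse's original table).
MORSE = {
    "A": ".-",   "B": "-...", "C": "-.-.", "D": "-..",  "E": ".",
    "F": "..-.", "G": "--.",  "H": "....", "I": "..",   "J": ".---",
    "K": "-.-",  "L": ".-..", "M": "--",   "N": "-.",   "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.",  "S": "...",  "T": "-",
    "U": "..-",  "V": "...-", "W": ".--",  "X": "-..-", "Y": "-.--",
    "Z": "--..",
}


def encode(message):
    """Turn letters into dots and dashes: letters separated by spaces, words by ' / '.

    Raises KeyError for any character outside the 26 letters.
    """
    words = message.upper().split()
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in words)


def to_pulses(morse):
    """Expand dots and dashes into a string of 1s (current on) and 0s (current off)."""
    words = []
    for word in morse.split(" / "):
        letters = []
        for letter in word.split(" "):
            # dot: on for 1 unit; dash: on for 3 units; 1 unit off between symbols
            letters.append("0".join("1" if s == "." else "111" for s in letter))
        words.append("000".join(letters))   # 3 units off between letters
    return "0000000".join(words)            # 7 units off between words


print(encode("SMS"))             # ... -- ...
print(to_pulses(encode("SMS")))  # 10101000111011100010101
```

Everything after `encode` deals only in two states, on and off, which is all a single wire needs to carry.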

The Telegraph

As Tom Standage’s book The Victorian Internet shows us, Morse’s telegraph became, around the middle of the nineteenth century, a huge success—not just commercially, but in revolutionising our view of the world in general and communication in particular. Suddenly the speed of physical communication, messengers carrying messages, was no longer the limiting factor in long-distance communication. The achievements of the Cursus Publicus and Thurn und Taxis no longer mattered. Provided you had a wire running from A to B, messages could be delivered to all intents and purposes instantly. And wires there were. Networks of telegraph wires spread like wildfire across the developed (and sometimes the less developed) portions of the globe.

But what is most extraordinary about this process is the way in which people suddenly discovered the necessity for fast communication, and embraced the medium. Just as the far cheaper and easier penny post was at the same time inviting vast numbers of people to enter the letter-writing age, other groups were discovering the wonders of instant communication. Governments, military authorities, businessmen and news organisations all found it was a medium that they could not do without.

There was never any serious competition between the postal system and the telegraph. The needs for communication expanded to such an extent that both media could simultaneously grow at a prodigious rate. We have not yet reached the heyday of post; the telegraph will in the end turn out to be a rather short-lived medium, because of real competition from the telephone and other media.

Printing Telegraph

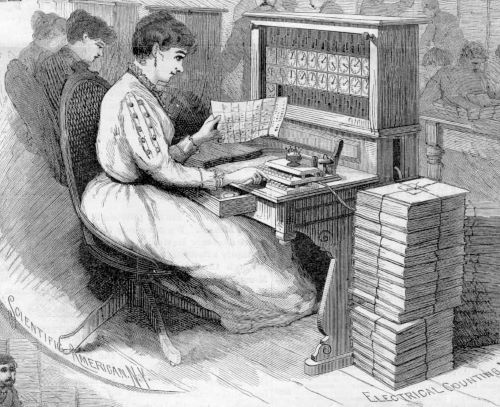

We are now well into the period of Victorian invention, and many challenges were quickly recognised and taken up by the inventors of the time. Morse telegraphy required a human operator at each end, to make the conversion both ways between the written letters and the dot-dash code. How much easier it would be, people realised, to have machines do these conversions.

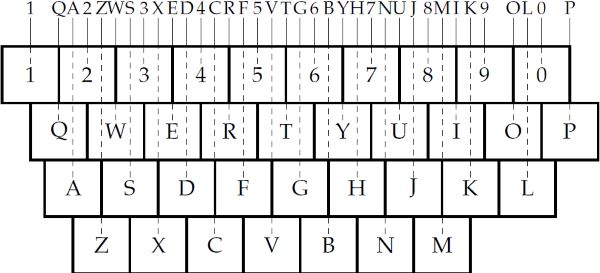

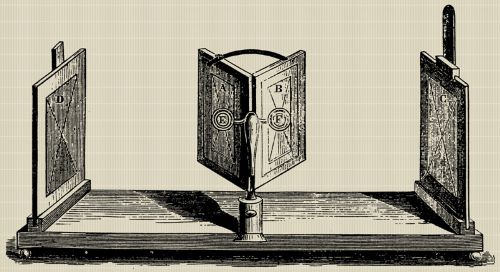

Although the eventually successful printing telegraph service, the telex, was a twentieth-century development (actually later than the telephone), there were several nineteenth-century precursors that achieved some degree of success. One of these was due to David Hughes. In the tradition of inventors of the time, he was a polymath; he was eventually honoured as a physicist, and has a Royal Society medal named after him. But in 1855, when he was a professor of music at a college in the United States, he devised a system with a keyboard and a printing wheel. The sender would type out the message letter by letter on marked keys, and the receiving machine would print the message on a sort of ticker tape.

The image that comes to mind from this description is probably the typewriter-like keyboard with which we are now so familiar. I will be talking about the QWERTY keyboard later, but the modern typewriter had not yet been invented in 1855. However, Hughes took his inspiration from the much older keyboard tradition with which he personally was particularly familiar. His keyboard, with alternate black and white keys, looks like nothing so much as that of a piano.

In fact he was not the only, nor even the first, person to consider using something like a piano keyboard for keying alphabetic messages. A slightly earlier device in the vein of printing telegraph was developed by Royal Earl House—his keyboard too was piano-like. In truth, until the invention of the QWERTY keyboard late in the nineteenth century, the piano and its predecessors defined the canonical idea of keyboard control.

Telephony

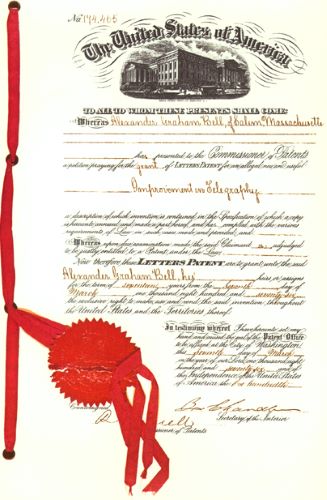

Even better than writing a message out on a keyboard and then reading a printed version at the other end, would be to speak and hear it. Again, this was a challenge to which the Victorians rose with enthusiasm. In 1876, Alexander Graham Bell won that particular race by a short head over Elisha Gray. David Hughes was not involved in this race, but he did, within two years of Bell’s patent, invent the carbon microphone. The era of the telephone had begun.

But quite quickly, a new dimension was added. The telegraph was a specialist point-to-point messaging system rather like the postal system, with wires strung between offices that acted as gateways for the messages. With telephones, everyone wanted a piece of the action.

The wires had to go to people’s homes, and the gateway became the switchboard or exchange, operated by a human being. Directing calls involved connecting one bit of wire to another, via a plugboard. Although manual exchanges continued for a long time, and are familiar to us through films, already in the nineteenth century people were devising automatic exchanges.

The earliest automatic exchanges were of the rotary type. The rotary telephone dial in effect controlled a rotary switch, which moved in synchronisation with the dial. In this system, the number dialled was not held in the exchange (except implicitly in the position of the dials), and not used in any other way. However, already by the 1930s there were exchanges that remembered the dialled digits in a register (like the register in a calculator) and had embedded decision rules about how to route different numbers. This is a form of information processing to which I will return.
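The idea of a register plus embedded routing rules can be sketched in a few lines. This is an illustration under stated assumptions, not a description of how any real exchange was built: the prefixes and route names in `RULES` are invented, and choosing the longest matching prefix is simply one plausible decision rule that I have picked for the example.

```python
class Register:
    """Holds the dialled digits as they arrive, like the register in a calculator."""

    def __init__(self):
        self.digits = ""

    def dial(self, digit):
        if len(digit) != 1 or digit not in "0123456789":
            raise ValueError("expected a single decimal digit")
        self.digits += digit


def route(dialled, rules):
    """Return the route whose prefix is the longest match for the stored digits."""
    best = None
    for prefix in rules:
        if dialled.startswith(prefix) and (best is None or len(prefix) > len(best)):
            best = prefix
    return rules[best] if best is not None else None


# Hypothetical routing rules, invented purely for illustration.
RULES = {
    "9": "operator",
    "0": "long-distance trunk",
    "01": "trunk to the capital",
}

register = Register()
for d in "0171":
    register.dial(d)
print(route(register.digits, RULES))   # trunk to the capital
```

The contrast with the purely rotary exchange is that here the number exists as stored data, separate from the switching it eventually causes, so rules can inspect it before any connection is made.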

Over the course of a century of development of the telephone system, we eventually reached a universal addressing system (a system of numbers defining not only the line on the local exchange, but the exchange itself, then the city or wider area, then the country), so that by the late twentieth century a full telephone number represents a single household on the planet. This is comparable to a postal address, somewhat less transparent to a human reader but more amenable to mechanical manipulation.

Radio

By this time, of course, we also had radio: wireless electrical messages. Radio broadcasting will be discussed further below, but it was also used for direct one-to-one communication from very early. Point-to-point radio, radio telephones, international telephone calls routed via satellite, and mobile cellphones, all make use of this medium.

This is a slightly curious development, because radio is naturally a broadcasting medium. That is, a message transmitted by radio can be received by anyone within range and with a suitable receiver. Basically, in order to use it for point-to-point communication, we have to subvert its primary nature. Later, we will see other examples of subverting media to serve other purposes than their nature would suggest.

The technicalities of constructing a temporary link between two telephones for the purpose of making a call (now more like a virtual link than a physical wire) have of course become somewhat more complex, and depend heavily on other late-twentieth-century developments in information technology. The addressing system in the form of telephone numbers has been pushed a little further—now, in the mobile phone age, it designates a unique individual on the globe. Well, that is a slight exaggeration—really it designates a unique phone, but given the present-day spread and use of mobile phones, it’s coming close.

Email and Text Messaging

Perhaps the medium that has provided the closest rival to the postal service is electronic mail. Email systems followed the development of computer networks in the last third of the twentieth century, but really took off with the Internet in the 1990s.

Email is also similar to post in that a one-way message is self-contained—a package with an address on the outside. It does not matter much to the sender or recipient what route it takes; it may go through any number of switches, and some delay at some of the switches is not generally critical. Nevertheless, it took email some time to learn the lessons that the postal services had learnt in the previous century, namely that what was required was a universal addressing system and transparent interfaces between the networks. If you wanted to send a long-distance or international email in the 1970s, you would have had to specify the route to be taken, or at least the main staging-posts along the way. One system used the so-called ‘bang notation’, leading to an address like this:

utzoo!decvax!harpo!eagle!mhtsa!ihnss!ihuxp!grg

This means that I want to reach a user called grg, whose mail account lives on a machine called ihuxp—but my machine does not know about ihuxp. Instead, I tell my mail system to send it to a machine called utzoo, which should forward it to decvax, which should send it on to harpo—with three more intermediate machines before it reaches its destination. In order to send the email, I have to know the route. Furthermore, each staging-post would add another address wrapper around my message, so that even a short message would arrive encased in several layers of headers.
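The reading of the address described above is mechanical enough to write down as a sketch. The function names `parse_bang_path` and `next_hop` are my own, and this is a simplification: it treats the address as nothing more than names separated by exclamation marks, which is all the example in the text requires.

```python
def parse_bang_path(address):
    """Split a bang-notation address into relay machines, destination machine and user."""
    parts = address.split("!")
    if len(parts) < 2 or any(p == "" for p in parts):
        raise ValueError("expected machine!...!user with no empty components")
    *machines, user = parts
    return {
        "relays": machines[:-1],       # machines that only pass the message on
        "destination": machines[-1],   # machine where the user's mail account lives
        "user": user,
    }


def next_hop(address):
    """Return the machine to hand the message to, and the address it must still resolve."""
    first, _, rest = address.partition("!")
    return first, rest


route = parse_bang_path("utzoo!decvax!harpo!eagle!mhtsa!ihnss!ihuxp!grg")
print(route["relays"])        # ['utzoo', 'decvax', 'harpo', 'eagle', 'mhtsa', 'ihnss']
print(route["destination"])   # ihuxp
print(route["user"])          # grg
```

Note that the sender's machine needs to know only the first name in the list; `next_hop` shows how each machine along the way can strip one name and pass the remainder on, without ever seeing the route as a whole.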

But the Internet and the universal addressing system eventually arrived, and the niche occupied by email in the assembly of communication methods open to us has expanded vastly. For all its similarities to conventional mail, it turns out to have some substantial differences also, and its usage reflects these differences. For example, while it is possible to write the kinds of letters one used to send by post, it is also possible to use email in a much more informal and immediate way—to hold conversations by email that have at least some of the characteristics of spoken conversation.

Another medium that has emerged in the last few years is text messaging. This is a most interesting development, because it has no obvious precursor. As a result, the niche that it has now come to occupy was practically invisible until texting started to become popular (although the informal end of the email spectrum provides some clues). But it shows clearly that despite the huge and obvious advantages of speech, written communication has some distinct advantages of its own. It might be hard for generations not brought up with it to recognise texting as a written form of communication; nevertheless, that is what it is.

A Note on Electricity

In this section, I have regarded electricity purely as a ‘medium’ for communication. Although we have known tiny bits about electricity for millennia, the serious scientific study of the phenomenon did not begin until around the seventeenth century. But in the nineteenth, we began to discover some of its uses. And our love affair has proceeded at pace. By the end of the nineteenth century, we have made serious inroads into electrical engineering, and have begun to think of it as a resource with many functions. In the twentieth century, it will come to be seen as a vital service to which everyone should have access, with a status almost comparable to the supply of fresh water. Nowadays I have a plethora of electrical devices, and the expectation (even if I am occasionally disappointed) that I can get the electricity needed to run them anywhere in the world, in a standardised form.

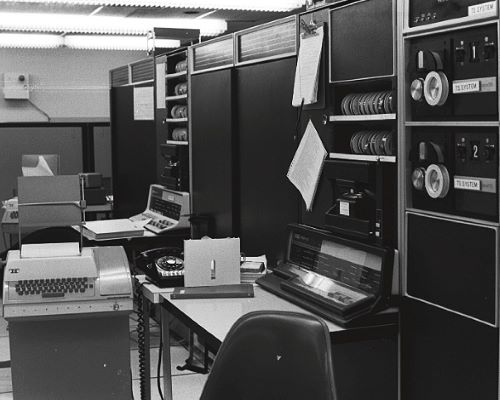

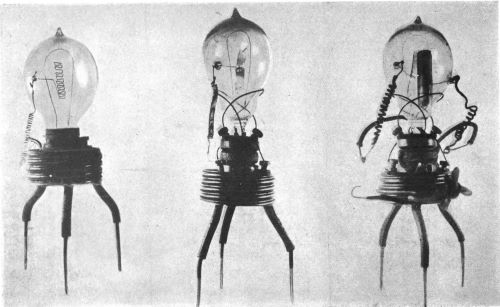

And then in the twentieth century our understanding of electricity spawns a monstrous offspring—electronics. Already by 1883 we have photosensors; then the thermionic valve (1904), the flip-flop circuit (the original electronic form of a single-bit memory, 1918), the transistor (1947), integrated circuits (1958), a whole variety of sensors, and so on. In the electronics era, the uses of electricity multiply a thousandfold, leading up to and including the entire digital world.

A full exploration of this aspect of our history would take me too far away from the main themes of this book—though it certainly counts as one of the necessary precursors of the digital age.

The Connected World

Now, at the beginning of the third millennium CE, we have a range of methods of communicating with others that is unparalleled in history. Whether the person we wish to communicate with is in the next office, across the street, across town, the other side of the country, or halfway round the world, we have ways to make our messages heard. With a variety of media, at least three global addressing systems, and transparent routing, we are spoilt for choice. In this sense at least, it’s a small world.

In the next section, we go back again in time, in order to consider the idea of broadcasting.

Spreading the Word

Overview

At the beginning of the previous section, I talked about writing things down in order to communicate with many other people. We might describe this as broadcasting. For much of recorded history, the notion of broadcasting was strongly distinguished from point-to-point messaging. If the originator (a) wants many people to receive the communication, and (b) does not know who all the people might be, the message needs to be thrown out in some sense, like seeds being spread on a field. We will see at the end of this section how this distinction might be blurring or even disappearing, and younger people brought up in the era of social media might even find it a little strange or unfamiliar. But its historical importance is huge.

Further, the word broadcast is usually associated with radio, and its derivative, television, because of the way the medium of radio is, by its nature, broadcast into the ether. But long before the discovery of radio, a number of technologies were harnessed to the task of spreading messages among many people.

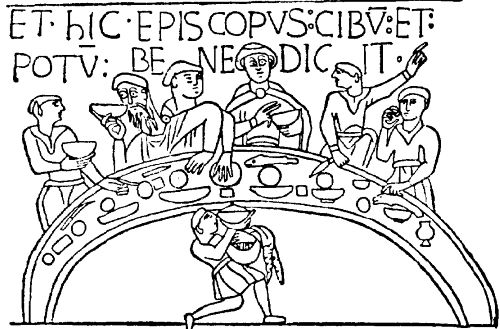

A proclamation read by a crier in a town square is a form of broadcasting (it may be more or less effective in that role, depending on the environment and social structure). Another method that was used extensively in the Middle Ages and for much longer was the pulpit. This mechanism provided not only for broadcasting to a local community, but also allowed a well-organised church to co-ordinate its message across a country or region.

A latter-day form of proclamation, which broadcasts a message to a local community, many of whom might be expected to visit the church or town square, is the poster on a wall. Since the twentieth century, particularly in the West, we have associated posters with commercial advertising, but in some environments they have acquired quite different connotations of public debate. For example, during the Cultural Revolution in China in the 1960s, major political arguments were conducted through the medium of posters on walls.

However, this mode requires a community in which literacy is widespread. During that much longer period of human history in which literacy was a relatively specialised accomplishment, broadcasting via writing took various forms.

The Library

The first great mechanism, device, technology that was brought to bear on the problem of broadcasting was the library.

Nowadays we see libraries in various lights—as repositories or archives, as a form of entertainment, as part of the system of education, and so on. Fundamental to these ways of understanding the notion of a library is that libraries, over time, make written information available to many people.

This is, indeed, a major technology. A piece of writing in the pre-computer world can, by its very nature, normally be read by, at most, one person at a time (of course a huge poster may be read by several people at once, but this is the exception). Furthermore, writing on paper is normally in the possession of one person. That person may read it more than once, or may lend it or pass it on to a friend or acquaintance—but this is a very limited form of broadcasting. If broadcasting is seen as desirable, a much more efficient mechanism is required. Given that, in the era we are discussing, we do not yet have the technology for multiple reproduction of a written text, we need to establish a place where people may come and consult different writings, and then to make sure that that place contains all the texts that people might want to consult. Placing a book in a library is broadcasting it—making it available to many people over its potential lifetime, people you do not know.

Libraries have been around for quite a while: in particular, for at least two millennia prior to Gutenberg’s invention of movable metal type and the start of the mass printing of books. Indeed, in some sense, libraries were all the more important because there were no mass-produced copies of books. A well-organised archive of clay tablets dating from around 2250 BCE was found at Ebla in Syria. We know that there was a library in Assur-bani-pal’s palace at Nineveh, in the Tigris-Euphrates basin, when it was sacked in 612 BCE (more of that below), and we know of the Royal Library of the Ptolemies at Alexandria, to which I also return below. The House of Wisdom in Baghdad, which I mentioned in the first section in connection with the Hindu/Arabic numbering system, was essentially a library and a meeting-place that scholars from all over that world came to visit.

The model that I shall take for my description of the functioning of libraries as broadcasting devices is that of the medieval monasteries in Europe. In the period (the very early Middle Ages, or as they used to be called, the Dark Ages) after the fall of the Roman empire and before the recovery of European civilisation, the monastery system provided the major repository of knowledge and the resources for its spread—the universities came along a little later. But first, a bit about attitudes to libraries.

Burning the Library

When Nineveh was overrun (as with Ebla more than a millennium earlier), the invaders who sacked the palace also burned down the library it contained. Almost certainly, they had no knowledge that what they were burning was a library: the palace was the seat and symbol of power, but the library was simply part of the palace. As it happens, the burning of the library turned out to be one of the greatest acts of cultural preservation in history. The books in the library used the medium of the time and place: clay tablets. Thousands of these clay tablets were baked hard in the fire, and then buried in ash and sand, and as a result can be seen to this day, in the British Museum. We have, from that event, among many other treasures, the best version of the earliest known written story, the Epic of Gilgamesh. This story was old already, probably by a millennium or more, but the survival of the Nineveh version is one of those extraordinarily valuable accidents of history.

Half a millennium or so later, library burning had acquired an altogether different character and meaning. The first emperor of China, in the third century BCE, systematically burnt books because of the subversive ideas they contained. This mode of behaviour was repeated many times over the following centuries, most famously at the library at Alexandria. The notion of a library had changed: it had become a repository of knowledge, not a building or an administrative archive, and furthermore the technologies of the time (such as papyrus) were very susceptible to fire. If you happened to regard knowledge as a bad thing, subversive in some sense (whether any knowledge at all or just some of the particular knowledge held in the library), then one recourse open to you was to burn it.

The story goes that the library at Alexandria was subject to this kind of attack, possibly more than once in the early Christian era (a time when subversion of established ideas was not treated lightly). By this time the perpetrators would have known perfectly well what they were burning. Apart from burning down the building, they would (still according to the story) form vigilante patrols to seek out books that had somehow escaped or been rescued from the flames, and burn them as well. This brings to mind Ray Bradbury’s Fahrenheit 451, about a future world in which the function of ‘firemen’ is exactly to root out and burn books. This may be fiction, but some of the attitudes it represents have existed in the real world for a couple of millennia.

Actually, the current consensus is that the story of the burning of the Alexandria library is essentially myth. But even as myth, it supports my argument. It was told (certainly from the very early Christian era) as a cautionary tale—burning a library is at the very least an act of cultural vandalism. At its worst, it is an attack on knowledge itself.

The Medieval Scholar

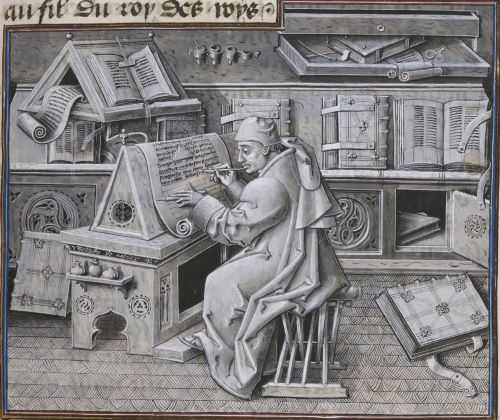

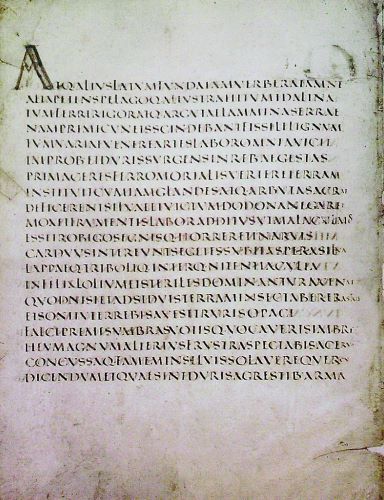

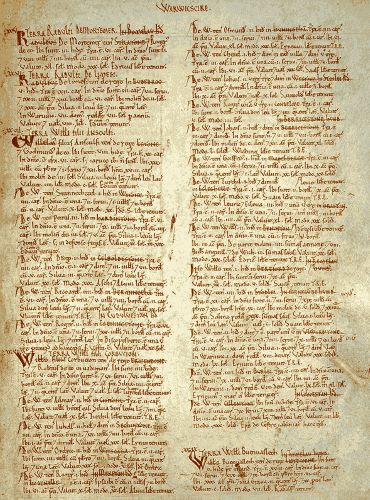

In Europe, scholarship was associated with religious life. If you wanted to study, you would join a monastic order and seek in that environment the teachers and teachings you would need. And while personal teaching is, of course, as necessary as it has always been, many of the teachings since classical times now reside in books. Your monastery library would contain copies of some of these books.

But every single one has been laboriously copied by hand. While you might eventually hope to write a book yourself, other duties associated with the spread of knowledge may intervene. You may have to undertake arduous journeys to other monasteries to consult books that your library does not hold. And above all, you may have to copy out books by hand, and transport them to other places. For many monks, indeed, copying out other books would be the nearest they would ever get to writing a book.

The business of copying books by hand and carrying them from one library to another was a major occupation of medieval scholarship. At some places and times it acquired an almost industrial flavour. The normal way of copying a book ties up both the original being copied and the monk-scribe for a considerable period, and only produces one extra copy. In one or two monasteries, possessing particularly valuable and sought-after books and many scribes, it was possible to go in for a form of mass-production. A single reader would read the book aloud, and a number of scribes would take it down as dictation. Thus many copies could be produced simultaneously.

So when, in the fifteenth century, Gutenberg’s form of printing came along, in some sense the western world was ready and waiting.

Printing and Publishing

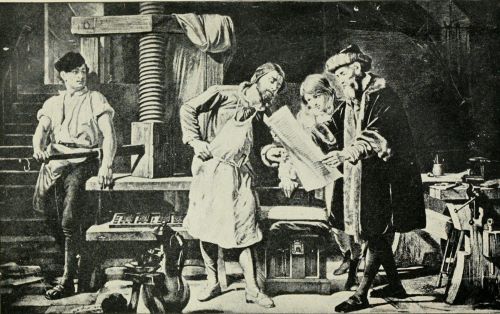

The ability to reproduce written material exactly, in multiple copies, by mechanical means, was the second great invention to change the face of broadcasting utterly. Once again, I am indebted to John Man’s The Gutenberg Revolution for this account.

Gutenberg is credited with this invention in Europe, with the proviso that many aspects of printing had previously been invented in the Far East (Gutenberg was probably not aware of this work). From the ninth century, documents were being printed in China, at first with a specially carved wooden printing block for each page, but later with a system of movable type. Individual characters were carved or modelled in clay and stocks of the common characters were built up; rarer characters had to be specially made for a page. In one system in use in the eleventh century, the characters chosen to make up a page were temporarily fixed in resin in a metal frame.

We may note the characteristics of Chinese versus European languages, which might help or hinder this process. Chinese characters are all of the same size, with no breaks between words. Thus the arrangement in the frame is simple—each printed line contains the same number of characters, and the sequence may be broken anywhere for a new line (the same applies if the characters are arranged in columns rather than horizontal lines).
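The contrast in layout can be made concrete with a small sketch. It is only an illustration in modern terms, not a description of any historical workshop practice: the function names are hypothetical, and the alphabetic case is simplified by measuring line length in characters, whereas real metal type varies in width. The first function fills a fixed grid and may break anywhere; the second may only break between words, so its lines come out uneven.

```python
def break_fixed_grid(chars: str, per_line: int) -> list[str]:
    """Lay out equal-width characters: every line holds per_line characters,
    and a break may fall after any character."""
    return [chars[i:i + per_line] for i in range(0, len(chars), per_line)]


def break_at_spaces(text: str, per_line: int) -> list[str]:
    """Greedy layout for alphabetic text: a line may only end between words,
    so lines are usually shorter than per_line and differ in length."""
    lines: list[str] = []
    current = ""
    for word in text.split():
        candidate = word if not current else current + " " + word
        # An over-long single word is placed on a line of its own.
        if len(candidate) <= per_line or not current:
            current = candidate
        else:
            lines.append(current)
            current = word
    if current:
        lines.append(current)
    return lines


if __name__ == "__main__":
    # Twelve equal-width characters fall into three full lines of four.
    print(break_fixed_grid("天地玄黃宇宙洪荒日月盈昃", 4))
    # Alphabetic text has to be broken at word boundaries instead.
    print(break_at_spaces("the quick brown fox", 10))
```

In the fixed-grid case the compositor needs no decision beyond counting; in the alphabetic case each line end depends on where the words happen to fall.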

However, the Chinese language suffers one considerable disadvantage compared to European languages: the lack of an alphabet. Chinese has tens of thousands of distinct characters. Even though the number in daily use is somewhat smaller, there is little possibility of building sufficient stocks of characters that every new page can simply be made from stock. And certainly the creation of a mould, from which many new instances of a character can be cast in metal as required, would have made no sense in the Chinese context. Both of these were characteristics of the Gutenberg system.

We can think of printing in economic terms, in a way that may help us to see its revolutionary status. At the core of the industrial revolution is the notion of investing in machinery—to enable the cheap reproduction of goods for which people will pay. The Chinese system of printing involves a lot of investment in the individual printed object—the book or whatever—and might gain a little from a generic investment in printing characters, but not a lot. Gutenberg’s system involves a significant prior investment, in the moulds from which the individual characters of type are cast. This makes the typesetting of different books (as well as different pages of a long book) very much cheaper.

This precursor of the industrial revolution is remarkable, not only for being approximately three centuries early, but also for being devoted to the production of information rather than of more material goods. Well, that’s an overstatement—books are of course material goods. Nevertheless, their primary value lies in their content rather than their physical nature.

But the mechanical process of printing was only part of the invention. The other part was the system of publishing. The real Gutenberg revolution was to make it possible for the first time for people outside of monasteries or governments to obtain books, build libraries, and take full part in intellectual life and the construction of mankind’s fund of knowledge. Publishing joined libraries as a core mechanism for the broadcasting of information.

Publishing

Publishing was not a single datable invention in the way that we might see printing. On the contrary, the idea of publishing started out as a not-very-radical extension of what had been common practice before printing. But the notion has been growing and changing ever since.

When books have to be individually copied, the copies are often (usually) allocated, their destinations predetermined, before they come into existence. In the early days of printing, a book would be prepared for a predefined list of ‘subscribers’: people who expected, and probably paid upfront, to receive a copy. The idea of printing a large number of copies speculatively, hoping to be able to sell them, emerged only gradually. Also the idea of subscription was transformed, over several centuries, into periodical publications. In the seventeenth century, scientific journals began. If you subscribed to such a publication, you would not know exactly what to expect, but you would have some confidence that it had gone through some selection process before it got into print. Newspapers and other periodical publications eventually followed. Even without subscriptions, the publisher would put out new issues according to some regular schedule, and could reasonably expect many people to buy regularly.

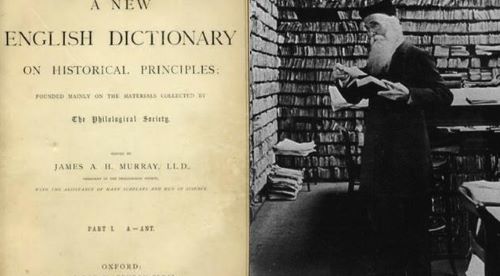

This model has at different times been used for many kinds of publication, not necessarily those we would associate with it today. For example, both the novels of Dickens and the Oxford English Dictionary first appeared in serialised form. Indeed, the model applies at different levels. If you like reading novels, there is some chance that you will try a new novel; if you like Dickens, there is a fair chance that you will try a new Dickens; if you liked the last instalment of Bleak House, there is a very high chance that you will try the next.

Far from settling down into some steady state, models of publishing continue to change radically, as we shall see further below.

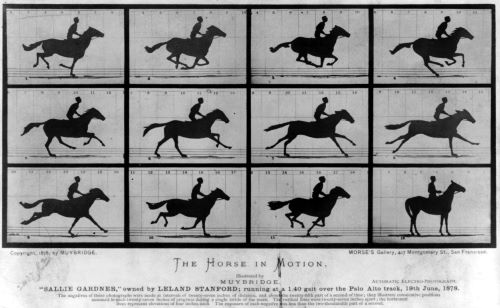

Cinema

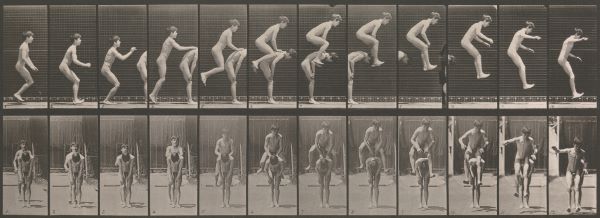

I will later be considering the technologies associated with images and their development over the period of slightly less than two centuries since the invention of photography. However, the role of film as a method of broadcasting belongs here.

Photography itself is no more a natural broadcasting medium than writing. The analogue of the library is the art exhibition or gallery, which has been around for a long time and to which photography can contribute. Later, when it becomes feasible to print them in a similar fashion to the printing of text, photographs become part of the publishing world. But film is something different.

In order to see a film, you have to put aside some time and not only reserve that copy of the film for that period, but also have exclusive use of some equipment—including a screen, which means an entire room. The film is equally available to everyone in the room; there is no problem about some people reading faster than others, because the timing is fixed. So it becomes not only feasible but desirable to have a number of people watching at the same time.