Artificial intelligence is increasingly entangled with political violence, shaping propaganda, surveillance, and conflict in ways that blur the line between technology and power.

Veilleux-Lepage’s latest CTC report, Beyond Misuse, argues that terrorism studies has captured only one dimension of AI’s relationship with political violence. The field has organized itself around documenting current misuse, projecting future exploitation, and developing counterterrorism applications, but it has missed the structural dimension entirely.

The scholarly literature on artificial intelligence and terrorism has… organized itself around three questions: (1) how violent non-state actors are currently using and/or misusing AI for harmful purposes; (2) how that misuse may evolve as the technology matures; and (3) how AI capabilities can be applied to counterterrorism ends… Largely absent from the conversation is a fourth concern: that AI may also be reshaping the structural conditions from which political violence has historically emerged.

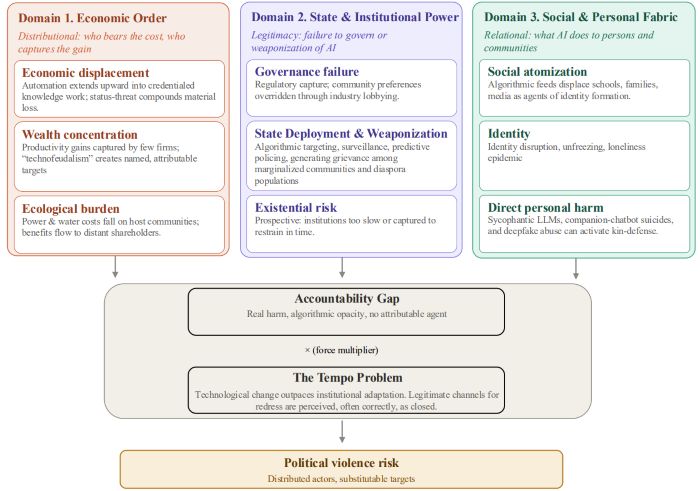

Veilleux-Lepage builds a three-domain grievance framework covering disruptions to the economic order, challenges to state and institutional power, and harms to the social and personal fabric. The three domains are tied together by an “accountability gap” that arises when AI systems distribute consequential harm across technical and institutional chains without any clearly attributable human agent. As Figure 1 from the report shows, a “tempo problem” compounds across domains as the pace of AI adoption outstrips institutional adaptation, closing off legitimate channels for grievance redress before those channels can absorb the harm.

The framework produces a threat landscape that cuts across ideological lines, generates actor types and target categories that fall outside conventional counterterrorism monitoring, and points toward data centers, local policymakers, AI executives, and disenchanted insiders as the most likely near-term targets. Veilleux-Lepage closes by inverting the standard response logic: closing the accountability gap through enforceable governance is not a policy preference but a counterterrorism necessity. Key insights from the report follow.

From Misuse to Preconditions: “When the pace of technological change exceeds the pace of institutional adaptation, the gap between disruption and redress widens, and the legitimate channels through which grievance could otherwise be absorbed are perceived, often correctly, as closed. This is what will be referred to hereafter as the ‘tempo problem,’ and it operates as a force multiplier across all three grievance domains developed below… The mechanism by which tempo generates violence risk is not direct; it is mediated through the perception of institutional closure that classical political violence theory identifies as the single most consistent precondition for the escalation from grievance to action.”

AI as a Generator of Grievance Framework: “AI can create a grievance that would not otherwise exist; it can amplify an existing grievance by making it more acute, visible, and targetable; or it can provide a focal point that allows diffuse grievances to cohere around a specific, nameable target… The accountability gap runs through all three domains as a cross-cutting mechanism, compounded by the tempo problem, which conditions the rate at which each domain’s grievances accumulate faster than institutional channels can absorb them.”

Economic Order Grievance Domain: “The first domain concerns distribution: who bears the costs of AI deployment and who captures the gains. The displacement is not diffuse but uneven. Early automation shocks fell disproportionally on blue-collar and mid-skill workers in geographically concentrated industries and communities; the current AI moment may extend that displacement upward into credentialed knowledge work, with entry-level white-collar positions in technology, finance, law, and consulting among the most exposed. As discussed above, what distinguishes this moment from prior periods of technological change is the speed of innovation, the breadth of AI adoption, and the fact that the builders of the technology are themselves on record predicting these consequences.”

State and Institutional Power Grievance Domain: “The second domain concerns legitimacy: the perception that state and institutional power is either failing to govern AI or actively weaponizing it. Regulatory response to the introduction of AI has been slow, fragmented, and widely perceived as captured… Governance breakdown may also generate a distinct actor type, what might be called the demonstrative attacker, whose goal is not to stop AI development or retaliate for harm, but to force better governance by showing that AI infrastructure is inadequately protected.”

Social and Personal Fabric Grievance Domain: “The third domain addresses AI’s effects on individual and social life, the immediate relational worlds in which people actually live. Two sub-domains share a common logic: AI systems acting on persons and their immediate social worlds rather than on institutions or the economy. The first concerns social atomization and identity disruption… The second sub-domain concerns direct personal harm: the AI system as the proximate cause of an attributable injury to the individual or someone they love.”

How Violence Might Manifest – Targets and Modalities: “Historical precedent… suggests that the targeting patterns generated by these structural conditions are remarkably consistent across ideological contexts. The target logic is driven less by ideology than by the grievance structure itself… As higher-value targets harden their security postures, violence may be displaced toward softer, more accessible targets within the same grievance logic… As AI company executives acquire more personal security, risk may shift to researchers on open campuses; as corporate campuses harden, risk shifts to the power substations that serve them; where national figures are unreachable, local policymakers who approved the data center become the proxies for the same structural anger.”

Implications and Conclusions: “The accountability gap is not merely a legal or ethical problem; it is a counterterrorism variable… Measures that close or narrow the accountability gap… are therefore not only governance measures. They are also counterterrorism measures, in the specific sense that they may reduce the population of grievances for which only extra-institutional targets remain available. The governance of AI accountability is, in this framing, among the most under-appreciated points of leverage in the counterterrorism response the threat landscape described here will require… The window for substantive engagement is now, before movement trajectories harden.”

Where Veilleux-Lepage’s Beyond Misuse examines structural conditions, Clara Broekaert and Lucas Webber’s article, “AI Use in Terrorist Plots and Attacks Surges in 2025,” documents how terrorists used AI operationally in 2025 across learning, scenario visualization, and tactical refinement, and argues that the trend demands an urgent response from states, tech companies, and platforms. Broekaert and Webber show what violent actors are doing with the technology; Veilleux-Lepage shows what the technology is doing to the conditions that produce violent actors in the first place.

Additionally, Michael Santoro’s article at the Modern War Institute at West Point, “Designing Lethal Decisions: AI, Accountability, and the Future of Military Judgment,” argues that late-stage human-in-the-loop overrides are the least trustworthy safeguard in high-stakes AI-enabled military decisions. Accountability must be built into system design from the outset rather than patched on at the point of execution. Santoro’s argument directly engages Veilleux-Lepage’s accountability gap concept from the military side of the ledger: where Veilleux-Lepage identifies the absence of an attributable human agent as the primary grievance generator across all three domains, Santoro surfaces the same structural void inside lethal targeting chains.

Originally published by Small Wars Journal, 04.29.2026, under the terms of a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported license.