A design for a flying machine, by Leonardo da Vinci, 1488 / Institut de France, Paris

By Lisa M. Lane / 09.20.2016

Professor of History

MiraCosta College

Introduction

What is Technology?

Technology is an extension of ourselves that we create. For example, a hammer is an extension of one’s arm, increasing its effectiveness. Technology can also be anything that isn’t “natural”, that is manufactured by humans.

We will focus on the technologies that emerged in Western culture, from the beginnings to today. But we don’t want a laundry list of inventions and devices. Rather we want to explore how those devices came about, and what they say about us and our history.

Whenever we learn about history, any kind of history, we are influenced by our own era. At present, to look at technology means to focus on innovation (1). I think this is partly because of the business emphasis on new products and inventions, which our contemporary society values highly. That focus is then reflected back through the past. Anything new or different is seen as a turning point, and items used for years or centuries are often ignored. Another trend at the moment is the idea that technology causes things, such as social change. This may be because in our own time it often feels like technology has a will of its own, that technology is changing faster than we can handle.

I think both of these perspectives, the focus on innovation and technological causation, have some merit but cannot tell the whole story. To focus only on new inventions, or to see innovation as something conscious in past societies, is deceptive. It may well be that inventions used over very long periods of time are those which have staying power, and thus reflect our history better than new items. The idea that technology is deterministic is also problematic. To me it seems like putting animate objectives onto inanimate objects. When a particular technology causes something, or when it “wants” something (2), we imply that its power is beyond our control.

Kevin Kelly on What Technology Wants, TED Talk (20 minutes)

Although there have been times when technology has indeed been beyond our control, to study history within a framework beyond human control is dangerous. It is hazardous in terms of accuracy, but it also is morally dangerous in that it may imply lack of responsibility for our own creations.

How Does Technology Happen?

Da Vinci’s idea for a helicopter, 500 years before modern helicopters were invented / Wikimedia Commons

The old saying goes, necessity is the mother of invention. In other words, when there is a need, someone invents something to fill the gap.

If this is so, there would be many incremental inventions and technological changes over the years. Something works, but not as well as it could, so an insightful user adds a part or changes something slightly. The more useful designs, presumably, get adopted.

And yet, when we study history, we are often presented with Great Inventions and Great Inventors (Gutenberg, Leonardo, Maxim, Oppenheimer). This fits with the “Great Man” theory of history. It’s the same kind of thinking that leads us to talk about “revolutions” – the Neolithic Revolution (suddenly, agriculture!), the Agricultural Revolution (suddenly, lots of food!), the Industrial Revolution (suddenly, industry!). As you can tell, I distrust this kind of history, although it can be useful in helping to explain when certain elements of technology come together to create social, economic or political change.

I prefer the idea of continual development, where incremental changes make technology more useful, and ideas emerge and re-emerge. Take, for example, Leonardo da Vinci’s work. His Aerial Screw is a 15th-century design for what we would today call a helicopter. The idea of human-powered flight emerges and re-emerges. So does submarine transport (there were submarines spying in the American Revolution) and body part replacement (there was a leg transplant in the 3rd century).

Paleolithic and Neolithic Technology

Early humans gathered together for protection against the elements and efficiency of food collection. Early technologies would have included the control of fire, shelter building, and ways of gathering food and saving water, but some of the most interesting are those that created art.

From Paleolithic to Agricultural?

Mother Goddess, The Neolithic site of Çatalhöyük / Anatolian Civilizations Museum, Ankara, Turkey

The Paleolithic (paleo=old, lith=stone) age indeed featured stone tools of all sorts, and we assume that early humans perfected designs of arrowheads, scrapers, baskets and containers, and other implements to increase the efficiency of hunting animals for food. Animals provided not only protein, but also skins (which could be used for clothing and as wraps for carrying things), bone (useful for small tools and ornamentation) and organs (for medical remedies).

I once attended a lecture in an anthropology class here at MiraCosta, given by a Dr. Ford. As a historian, I had heard about hunting and gathering, but I didn’t know until I sat in on his class about scavenging. Apparently hunting, being very hard work, doesn’t bring in much meat, or at least not on a predictable schedule. Gathering (of plants and berries) doesn’t supplement the diet but is rather its foundation. I learned that scavenging dead animals who had been killed by other animals was a major source of food. I had never thought of humans as scavengers, but it made perfect sense. It did make me wonder, though, whether cooking had been used not only to make meat more tender, but to burn off the toothmarks of other animals.

Back when I went to college, I was taught that agriculture was a natural progression from hunting and gathering. Different groups of humans took this leap at different times, likely discovering that planting seeds could be more efficient than gathering. Saving the seeds from the biggest, strongest plants also makes logical sense. But I was taught that it was agriculture that caused humans to settle down, to slow down their nomadic lifestyle of following animals, in order to farm. The first major communities, then, built up around these farming settlements.

That theory is being questioned now, partly because of new dating methods at very old sites such as Göbekli Tepe and Çatalhöyük. Both communities had many buildings, permanent structures, and for a long time it’s been assumed that they must have grown their food just outside of town. But further excavations and carbon dating methods have discovered no evidence of agriculture at all. The assumption may well have been wrong. If so, it means that large ceremonial centers may have been built for spiritual reasons rather than for physical protection or as centers of farming production.

This sort of shift in thinking is one of the reasons why History is not a “dead” subject. Many people believe that history is simply a set of facts from the past, that we know what happened. So, for example, a history book written in 1855 about ancient Greece must have all the same information as a book written today. But of course, that isn’t so. History is not a collection of facts, but rather of interpretations based on those facts we have. Sometimes new facts are discovered (or a lack of facts as noted at Göbekli Tepe and Çatalhöyük). This changes the interpretation. In this case, we may have to consider whether spiritual needs might have been, in some places and in some ways, as important as protection, food, and other basic needs.

The Role of Shamans

Certainly the shamans and other spiritual leaders were the first to be fed by the community. When people come together to hunt, gather, scavenge, and farm, almost all of them are working on survival for themselves and the group. There is little spare time – all energy is used for subsistence activities. As the community expands, if it does well, there may be some surplus food. This means that not everyone is required to engage directly in food production. The group can afford to “pay” a member to do something else by feeding him or her. The “first job” was almost always that of the shaman.

The shamans were paid to intercede with supernatural forces on behalf of the community. What did this have to do with technology? The shaman’s responsibility to provide spiritual guidance for the community was in many ways dependent on his or her ability to interpret the natural environment. Weather was a particular issue, since drought or flooding could kill crops and make animal movements shift, threatening the lifeblood of the group. Shamans became very good at astronomical and climate observations, determining and recording patterns in order to make predictions and keep the community prepared. They were, in fact, the first scientists, basing their recommendations on direct observation of the natural world.

Art as Technology

It is easy to see how the construction of buildings at places like Çatalhöyük would be technology, but a great deal of time and effort was also spent on items we would consider art. In many places in Europe, particularly in the caves of France, it is possible to view cave paintings of extraordinary craftsmanship. There are handprints on walls, and detailed paintings of animals. Some of the most intricate have been only recently discovered, within the last hundred years or so.

For a long time, scholars simply admired the work. But recently some have begun seeing it in a new way, noting particular patterns in the paintings. Take a look at our secondary source reading for this week (below), which suggests that artists may have been trying to convey motion, as in a comic.

Here’s an animation:

What we have here is evidence of what I think is a necessary part of society: narrative. Narrative, or story-telling, implies a self-consciousness in a culture, a desire to record the present in a way that will be useful to the future. Telling stories is part of every society we know of on the planet. Narrative defines who we are, whether it is the story of a hunt on a cave wall, or the Epic of Gilgamesh, or stories of heroes and saints. Shamans told stories to explain the natural world. And narrative can be a powerful force: we criticize national leaders who “rewrite history” to gloss over their country’s bad behaviors and teach future generations a more generous view of their people. We can trace the history of narratives from cave paintings and stories, to oral traditions passed down through memorization, to the development of writing, to printing, to the internet.

Effects of Agriculture

Once agriculture began (the Neolithic Age), which it did in fits and starts, life did change. From foraging to deliberately planting seeds, from returning to last year’s seed plot to deciding to stay, is the presumed pattern. We know that when people start planting, their diet shifts. Less scavenged and hunted meat is consumed, and more vegetable matter is eaten. Grains become central to the diet, as they evolved from grasses and contained concentrated carbohydrates. Sometimes the dietary shift could be deadly, as in the case of the Cahokia civilization in the Americas. They became so dependent on corn (maize) that they developed malnutrition and disease.

But for most, settling down provided opportunity. Planted crops could be improved by selecting out the largest or best-tasting fruits and grains to save seeds. Plants could be hybridized by hand, cross-pollinating to create new, stronger forms. Settlement appears to have led to the division of labor, with child-care being tied to an interruptible activity (farming) rather than one that could not be interrupted (hunting). Some historians believe that this sexual division of labor, where women tended continually while men went off to work occasionally, began here, with the Neolithic Revolution.

Technology as Art

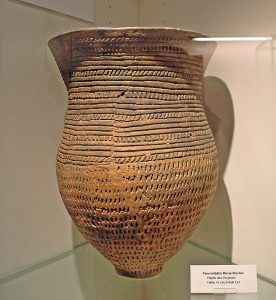

Left: 3,500 years old, “Giant Beaker of Pavenstädt”, Stadtmuseum Gütersloh, Germany

Right: Neolithic stone arrowheads / British Museum

As one would expect, the technologies used served the purpose of ensuring survival and subsistence. Hunting with spears or bows and arrows requires arrowheads, which are still found today by archaeologists. Grain and other foods could be dried and saved, necessitating containers such as baskets or pots.

Late in the Neolithic era, monumental stone “henges” were a feature in some areas of Europe. Evidence of the oldest henge was found in 2002 near Goseck, Germany. The Goseck henge is estimated to be 7000 years old, and its southern gates line up with the summer and winter solstices. As with other henges (Stonehenge being the most famous), this astronomical alignment has led archaeologists to believe that henges served as observatories. Certainly the need for shamans to observe nature closely would support this idea.

From what we know of more basic cultures that exist today, and from our own histories of legends and tales, we assume that Paleolithic and Neolithic people told stories. The cave paintings may have represented not only the animation in their daily lives, but specific tales of hunts and observing animal life. Container designs and even weapons may have been decorated to tell stories. Because we have nothing that is considered “writing” from this era, we call it “pre-historic” – before history. In the next unit we move on to evidence that goes beyond archaeology into an exploration of the tools used for civilization.

Conclusion

While it’s easy to see prehistoric technologies only in terms of arrowheads, hunting, and connections with the supernatural for the purposes of survival, we are discovering more connections all the time. The goods carried by Ötzi, a prehistoric man discovered in Alpine ice in 1991, included tools and remedies. Analysis of the body has indicated the use of acupuncture or something similar, in a pattern. He carried small bundles of fungus that were likely used as medicine. His shoes were made with bearskin soles and were waterproof. He carried a copper axe and arrows, and several plant materials suited to starting fires under different conditions. Just this one discovery gives us an idea of the possible complexity we’re missing about this era.

Ancient Mesopotamia, Egypt, and the Levant

One definition of “civilization” is the culture that surrounded the creation of cities. There is also an implication that “civilization” refers to cultures that are not only urbanized, but that have organizations and structures for many areas of life, including politics and religion. We also tend to assume that these cultures keep records of themselves, not through pictures, but through writing. In ancient times, technology was based on fulfilling the need for social organization, including the structures connecting civilizations with the forces of nature.

Mesopotamia

Ancient Mesopotamia / Wikimedia Commons

The region of Mesopotamia is now modern-day Iraq, but in ancient times it was the location of three different civilizations.

The Tigris and Euphrates Rivers drain into what is now called the Persian Gulf. Nowadays they meet up and form one stream to the Gulf, but archaeologists discovered that in ancient times the shoreline was much further to the north. The ancient town of Uruk, now far inland, was on the sea. Over the centuries, silt carried down the rivers has built up the land and moved the shoreline southward.

Geographically, the rivers dominate the area, but they are not easy to work with. The Tigris and Euphrates rivers don’t have predictable cycles – they flood unexpectedly, and there are years of drought where the rivers don’t rise at all. Since the fertile, watered areas are closest to the rivers, this situation presented a challenge for early agriculture.

The theory of geographic determinism supports the idea that early Mesopotamian cultures were inventive, pessimistic, and warlike, all because of the rivers. The insecure environment for agriculture meant that a certain amount of creativity was called for, particularly in designing irrigation. The pessimism can be seen in the Mesopotamian view of the afterlife, a dark, dusty place where souls ate dirt and were shadows of their former live selves. It can also be seen in the Mesopotamian view of the gods – fickle, powerful forces who played with humans for their own purposes and really didn’t care what happened to them. For example, in the Epic of Gilgamesh, the goddess Ishtar falls in love with the human king Gilgamesh, and wants him for her lover. He has no desire to become one in a long line of discarded men, so he tells her no. She complains to her father, who sends the Bull of Heaven down to kill Gilgamesh’s whole town.

Gilgamesh and his lieutenant Enkidu ultimately slay the bull, but Enkidu dies in the process. They are heroes for standing up to the Bull, the tool of the gods sent to destroy them. Thus it is possible to triumph over supernatural forces, but at a human cost. This seems to reflect Mesopotamians’ view of the natural world – a place where unpredictable, fickle forces make life difficult.

The Sumerians, who gave us this story, emerged in the south. Among their achievements was the development of cuneiform writing, “wedge writing” made by pressing the end of a reed into wet clay. Unlike the pictographic writing of other cultures (such as the hieroglyphics of Egypt), cuneiform came to be more abstract and symbolic. While certainly not the first writing system, symbolic cuneiform marks a shift in literacy. Pictographs, though limited in number, can be interpreted easily because they look like the word they represent. Cuneiform came to look like just shapes in different patterns. Thus, to learn Sumerian, one would have to learn thousands of different symbols, or combinations of wedge strokes. Literacy, then, would be confined to people who had time to learn how to read.

Figures at top of stele above Hammurabi’s code of laws depicting the code being given to Hammurabi, c.1750 BC / Louvre Museum, Paris

Nevertheless, by the time of the second great civilization in Mesopotamia, cuneiform was being used to make sure everyone knew the law. Babylonia was ruled in the 18th century BC by King Hammurabi. Hammurabi (or more likely his scribes) compiled many of the disparate law codes of the region into one. Hammurabi’s Code was then written on stone stelae, carved into monoliths and mounted in the center of towns. The punishments were often harsh, as befits a society that lives for today.

The punishments in Hammurabi’s Code varied by the status of the offender. Typically, slaves had to pay with a body part (for example, stealing led to a hand being cut off) while wealthier free citizens could pay a fine in silver. Crimes of slaves against free people were punished more harshly.

When we study a law code, we are looking at what I call a “prescriptive” source. It tells people what they’re supposed to do. That must mean they aren’t doing it. There would be no reason for a law unless a number of people were doing the thing forbidden by the law. So prescriptive sources can tell us a lot about what’s really going on in a culture.

So because it was forbidden, we know that people in ancient Mesopotamia were stealing, allowing their animals to trample others’ property, sleeping with people they weren’t supposed to, watering down drinks in bars, and striking each other a lot. We know that men tried to abandon wives who were sick, because the law forbids it. We know there was adultery, with the punishment that if caught in the act, the couple was tied together, weighted with a rock, and drowned in the river.

But we also know from the architecture that the reason for this harshness was to preserve the community. The ziggurat was the great architectural achievement of ancient Mesopotamia. The ziggurat itself was a kind of stepped pyramid with a temple at the top, and it was surrounded by the temple services. Located in the center of town and dedicated to the town’s particular god, the ziggurat was a center for life and ceremony.

Kings were mortal (unlike in Egypt), and when they died the new king was proclaimed at the ziggurat. In order to affirm his connection to the supernatural, he had to have sex with the top priestess of the temple to confer legitimacy. That’s another reason why law codes were necessary and very detailed – in Egypt, there were few law codes because the pharaoh was a god, and thus his word was simply the law in any particular matter. A mortal king must lay out the rules in advance.

The Nimrud lens / British Museum, London

Egypt

Unlike Mesopotamia, ancient Egypt was highly stable. The Nile River, which flows from the south to the north into the Mediterranean, was so predictable that it was possible to measure its rise exactly, and determine what it would be in the future. The flat lands on either side of the Nile contain the fertile silt from the river, and when the river rises each year it floods the land, leaving moisture and more good soil. Agricultural life was thus easy in Egypt.

The Goddess Ma’at wearing the “Feather of Truth” / Wikimedia Commons

As a result, Egyptian gods were seen as helpful and dependable. The goddess Ma’at brought justice by weighing the heart of the accused against a feather of truth. Anubis, the jackal-headed god, helped people pass into the afterlife, a place of peace and joy.

At first, this lovely afterlife was a gift only for pharaohs and very important elites, but as time went by the idea evolved that ordinary people deserved the trappings of an afterlife also.

The Great Pyramids were constructed to assure this afterlife for the pharaohs. Technology in Egypt was thus at the service of a deep spirituality. Unlike ziggurats, pyramids were places of death, and the ceremonial reanimation of life as the pharaoh entered the next world. As the afterlife was “democratized”, more and more workers were employed in the funeral industry, constructing pyramids, painting the interior walls, carving the stone.

The Egyptians also excelled in astronomical observation, needed for proper worship and holidays. Their personal care was complex enough to qualify as technology. They created makeup that was in demand on the trade routes, and even invented the vaginal sponge for birth control. This sponge was made of real sponges from the Mediterranean, soaked in lactic acid from the tips of acacia trees, embedding them with spermicide. We know about these methods because of the Ebers Papyrus, which dates from about 1500 BC. It contains information on various remedies, such as herbal inhalations for asthma and various laxatives, and a treatise on the heart indicating an understanding of the circulatory system.

Egyptians also produced papyrus, an inexpensive “paper” made from reeds. Although they held to a pictographic writing system, the abundance of papyrus meant that much knowledge was written for future generations.

The Alphabet

Most early writing, including Egyptian hieroglyphics, was pictographic – each symbol meant a word, and each symbol looked like a picture of what it represented. Sumerian writing had been symbolic, in that gradually pictographs developed into abstract symbols:

The Phoenicians, however, broke with tradition, creating symbols that represented sounds instead of words. The Greeks adopted this “alphabet” idea from the Phoenicians, and (although vastly simplified) the evolution of alphabet technology then went something like this:

The Hebrew People

The Hebrews emerged in the eastern Mediterranean as a pastoral group, and it is unclear when they became monotheistic. What we do know is that the belief in one god became the base of their culture and the source of their difference from other cultures. The contributions of the Hebrews to our civilization include a belief in progress and the idea of a portable god, one who is invisible and not tied to a particular place.

When looking at the technology of the Hebrews, we can find some in the Bible (the measurements for the ark and the Tabernacle, for example), but I believe the most significant is text glossing. A core Jewish belief is that the word of God, as represented in the Hebrew Bible after the 9th century or so, is meant to be studied and examined. While the books themselves are precious and special, the ongoing examination and analysis of the Bible (and, indeed, all Jewish texts) is a particular responsibility. Although the Hebrews had priests, the role of these priests over time was reduced to that of ceremonial leaders. The spiritual leadership devolved to the rabbis, the ones who studied the text.

This page from the Talmud shows the text being analyzed in the middle, then commentary around it, then commentary on the commentary. This organizes the material and analysis visually, creating deeper study. So I guess the innovation here is rabbinical learning. The concept of “glossing” the text creates resources for later analysis. This allows for the development of law by precedent, where cases can be decided by looking back to previous analysis and determining the extent to which it may apply to a current circumstance. / Wikimedia Commons

Conclusion

Writing itself, as I mentioned with prehistoric narratives, is a self-conscious act for any society. Whether on stone stelae or papyrus or parchment, the recording of commercial transactions, dynastic succession, calendars and other events in writing suggests a sense of history. Writing signals an intent to preserve something beyond a single person’s lifetime, for others to learn or benefit from. Gadgets and inventions, individual pieces of technology, may be interesting in themselves, but they only become important when they reflect or represent a system of some kind. The system itself, whether writing, or irrigation, or embalming, tells us more about how people lived long ago than any single piece of engineering.

Ancient Greece and the Hellenistic Era

Hellenic or Classical Greece

“Hellenic” refers to the Greek word for Greece: “Hellas”. It is the era between the Archaic Age (the time of Homer) and the Hellenistic Era (following Alexander the Great). During this time most of Greece was divided into city-states (poleis), each with its own political system, and most in competition with the others for land or trade. The era is also called “Classical” Greece, since so much of the classic forms of architecture and sculpture developed during that time.

But much of what we call Hellenic or Classical Greece is really focused on the 5th century BC in Athens. The polis of Athens between the Persian Wars (which they won) and the Peloponnesian War with Sparta (which they lost) developed a rich culture that carried forward intellectual influences from before and added a new, naturalistic view of knowledge. Athens’ intellectual achievements were the product of a highly commercial society, supported by slavery and a democracy consisting of Greek, adult, male citizens.

The wealth of the society meant that there was time for learning. In cultures that must focus on subsistence, there is little time for intellectual exploration or education beyond that required to provide food and needed goods. Most “knowledge workers” would be shamans or others whose spiritual connections and scientific understanding of nature would make them of value. But surplus of agricultural goods, the first major advance out of the Neolithic Age, led not only to further specialized labor but the rise of a knowledgeable class of people. Those with extra wealth from trade could afford to hire smarter people to teach their children. Leisure time allowed for thinking about things.

Although many 6th-3rd century BC Greek writings have not come down to us in their original form, some were preserved and others were translated and retranslated outside of Europe. We have many more works showing us the Greek mind than we have from ancient Babylonia or Egypt. What we notice first in the Greek works is their emphasis on exploring and explaining the natural world. Greek scholars, for example, considered astronomy to be a branch of mathematics. They studied the stars and created models of planetary motion. They created maps of the known world, built cranes to move large objects, and designed plumbing and city systems. They used an abacus to do calculations.

Modern Technology Reveals Ancient Technology

The Antikythera Mechanism, found in a shipwreck a century ago, also indicates a high level of understanding of math and science. By the time sophisticated x-ray technologies were available to examine it carefully (around 2005), the computer had already changed our lives. The device is now interpreted as an ancient computer. This is another way that history is always changing – it is the new emphasis that our current culture has on computers that led to a new interpretation of an ancient object.

Writing and Its Influence

Another way in which our current view of the world influences our interpretations can be seen in the Greek view of writing. I’ve mentioned before that writing is a self-conscious act for a society, a way of preserving the past and present for the future. But Socrates, one of the most famous Greek philosophers, was against writing as a way of preserving knowledge. He thought it stultified knowledge and froze it in time in a way that made ideas difficult to question, and thus new knowledge harder to create. His student, Plato, wrote a dialogue between Socrates and another character to explain his mentor’s point of view:

Plato, The Phaedrus – a dialogue between Socrates and Phaedrus (~370 B.C.)

Socrates: I cannot help feeling, Phaedrus, that writing is unfortunately like painting; for the creations of the painter have the attitude of life, and yet if you ask them a question they preserve a solemn silence. And the same may be said of speeches. You would imagine that they had intelligence, but if you want to know anything and put a question to one of them, the speaker always gives one unvarying answer. And when they have been once written down they are tumbled about anywhere among those who may or may not understand them, and know not to whom they should reply, to whom not: and, if they are maltreated or abused, they have no parent to protect them; and they cannot protect or defend themselves.

Phaedrus: That again is most true.

Socrates: Is there not another kind of word or speech far better than this, and having far greater power – a son of the same family, but lawfully begotten?

Phaedrus: Whom do you mean, and what is his origin?

Socrates: I mean an intelligent word graven in the soul of the learner, which can defend itself, and knows when to speak and when to be silent.

Phaedrus: You mean the living word of knowledge which has a soul, and of which the written word is properly no more than an image?

Socrates: Yes, of course that is what I mean.

On the one hand, Socrates’ view may seem archaic. But on the other, we are now entering a world where there is so much information, and its format is always in flux. Some see the internet as a way to not only share writing, but to argue against it, to fight against it becoming static. Or perhaps Socrates would see the internet as just a collection of images representing ideas, rather than the development of ideas themselves.

Hellenic Art

Red figure artists sometimes signed their work. This is a Kylix signed by Phintias as painter, c.510 BC / Johns Hopkins Archaeological Museum

Greek art and architecture are often seen as a visual way to understand ancient Greek culture. Certainly the Greeks valued moderation and balance – that is evident everywhere from the design of the Parthenon to their medical works to the vases and pots they used every day. The Parthenon itself, commissioned by Pericles as a symbol of Athenian glory, was made of marble and had a huge painted statue of Athena inside it. The Greeks developed techniques for free-standing sculpture, and used it to express ideals of form. Many original Greek works, however, were cast in bronze. During and after the wars with Sparta, bronze works were often melted down for weapons. Many of the “ancient Greek” sculptures we have today are marble copies from Hellenistic and (primarily) Roman times.

The famous Attic pottery (Attica was the region surrounding Athens) is another representation of Greek artistic style, but it also represents their technological achievements. The pots themselves were beautifully made and used for everything from wine to olive oil. Styles were originally geometric, but by the 7th century BC had started to feature figures of humans and animals. “Black figure” painting was achieved by applying a clay slurry to a dry pot before firing. The paint could be incised and other colors applied on top. The iron-rich clay of Attica made for superior pots, and figures tended to be mythological. By the 6th century BC many pots were painted with images of (presumably famous) athletes, and some showed erotic scenes. Different artists had different styles, and historians today are able to distinguish among the masters.

Around 530 BC, the “red figure” technique was developed, a significant innovation that allowed not only for a black background, but also much finer detail. It was achieved through a three-stage firing technique, with the last stage at a lower temperature to melt and seal the images. The paint was applied directly to a smooth surface, making possible figures in profile, with detailed faces, and clear depictions of fur and feather on animals. Clothing could be more detailed, and additional colors used. Unlike in black figure pottery, the outlines carved into the pot disappeared during firing, melting into the black background, making for a much clearer image. A single technological innovation changed the field.

The Hellenistic Age

After the Peloponnesian War between Athens and Sparta ended, Spartan rule led to cultural decline. To the north of mainland Greece, in Macedonia, a warrior emerged who wanted to regain the glories of classical Greece. When Alexander conquered Greece and went on to create a huge empire to the east, he deliberately brought classical Greek culture with him. Alexander had been tutored by Aristotle, who had been a pupil of Plato, who had been a student of Socrates. Although he was not Athenian, Alexander saw himself as inheritor of Greek philosophy and science. Aristotle in particular had been concerned with categorizing knowledge, of both the natural and human world. Along with Alexander’s armies went scholars and natural philosophers, to study the world being conquered.

Campaigns and empire of Alexander the Great / Wikimedia Commons

Most of the people in Alexander’s huge empire weren’t Greek – they were Bactrian and Persian and Indian and from many other cultures. Although the political empire Alexander set up did not survive his death, trade flowed freely across the empire, and with goods flow ideas. The technologies of the east, including water-lifting devices, glass artworks, cataract surgery techniques, and mathematical advances such as the independent work on what would be called the Pythagorean theorem, spread across the empire. The city Alexander built in Egypt, Alexandria, featured a huge library and attracted many scholars. (Humility was neither a Greek nor a Macedonian virtue – Alexander named a lot of the cities he founded “Alexandria”.)

Many of the scholars and intellectual achievements usually called “Ancient Greek” actually date from the Hellenistic Era (after Alexander’s death in 323 BC). Herophilus’s new ideas of systematic anatomy, Erasistratus’ work on the heart as the motor of the circulatory system, Galen’s development of the idea of bodily systems, Aristarchus’ idea that the sun is the center of the astronomical system, Euclidean geometry, Archimedes’ screw – all of these are Hellenistic. Although historians often separate science and technology, Archimedes provides an excellent example of “practical” science, in his case in hydrostatics. The water-lifting screw he likely helped develop was used to lift water from the Nile for agriculture – unlike earlier devices, it was portable.

The Tower of the Winds or the Horologion of Andronikos Kyrrhestes, Athens / Wikimedia Commons

Around 50 BC, Andronikos constructed a water clock in Athens. It was not the first, but it is an ancient one that still exists. Water clocks work without a need for astronomical observation, and through much of history they have been either novelties or related to religious observance, tracking months and celestial phenomena rather than the hours of the day. His “Tower of the Winds” contained not only a water clock, but also eight sundials facing the cardinal and primary intercardinal directions (N, NE, E, SE, S, SW, W, NW) and a weather vane. It was designed to be seen from the Agora, so it might have helped people know how long they’d been shopping or attending government meetings!

One of the great horrors for historians likely occurred in the 4th century AD, when the Library of Alexandria burned. It contained so many scrolls that its burning, which may have occurred in several separate fires, is a symbol of lost knowledge. The Library was not just a collection of documents, but a state-funded institution that sponsored scholars doing original research.

Practical technological development also occurred during this era. Experiments were done with the torsion-spring catapult, which could hurl large objects at enemy troops. Kickwheels were added to potter’s wheels, speeding up the production of ceramics. The works of Heron show the invention of a surveyor’s instrument (startlingly similar to what we use today), a carpenter’s level, and the screw press, which saved labor by fully pressing grapes and olives with ease. Heron also created toys for fun, many using “steam power” to work – balls rotating in a steam flume, little figures offering drinks when an altar’s candle is lit.

Aristotle

In the 4th century BC, a natural philosopher emerged who had an extraordinary influence on Western thought for the next 800 years. The scientific world view he created influenced not only philosophy but the way in which many people assumed the world (and the universe) worked.

Aristotle was a student of Plato, who had proposed a world of ideal forms. Perhaps for this reason, Aristotle saw nature as perfect and organized, and sought to reveal that organization. His view of the heavens was that the earth was still, and perfectly spherical objects moved in perfectly circular motion around it. He dealt with the obvious problem of retrograde motion (some stars and planets appear to move backwards at certain times) by adding more spheres to the system. But Aristotle worked in other fields than cosmology: ethics, rhetoric, metaphysics, anatomy, and logic, to name a few. And even though he was not a tinkerer with engineering or objects, the systems he developed reflected what people saw every day. We do not feel the earth move, and the stars and planets do seem (from our view) to be spheres and to move in orbits. The division of matter into earth, air, fire and water also makes sense, as does the idea that earth and water are heavy and so naturally move toward the core of the earth, while air and fire are light and move upward. Motion that is not naturally occurring must be “forced” by an identifiable mover.

Aristotle also created classifications of animals into a hierarchy, identified three types of soul corresponding to vegetable/animal/human, and saw the practical arts as necessities while science was a luxury. He knew that only people with leisure could engage in deep thought, and did so out of curiosity.

Many of the practical technologies which developed apart from (or at least without reference to) Hellenistic science, however, occurred in Roman times, our next unit.

Conclusion

It would be a mistake to oversimplify and glorify the Greek advances. Until the Greeks, we simply do not know how technologies fit in with intellectual life. The scarcity of written evidence has led to historians viewing pre-Hellenic societies as primitive in their understanding, basing their thought on supernatural connections. According to Auguste Comte, a 19th century philosopher, mankind passes through three stages. The first, primitive stage he called the Theological Stage. Here people assume supernatural causation for natural phenomena: the gods cause rain, inanimate objects have spirits inside them that determine their character. In the Metaphysical Stage, these forces become metaphysical instead: abstract concepts explain causation, such as warm air rising because it is in its nature to rise. In the final, Positive Stage, explanations are scientific, based on experimentation, observation and reason. We tend to assume that everyone before the Greeks was in the Theological Stage, imbuing rocks and weather with spirits, instead of acknowledging the observation and reason demonstrated by the shamans. When we acknowledge the scientific activities of the pre-Hellenic shamans, the Greek experience loses some of its novelty if none of its interest.

Roman, Byzantine, and Arab Worlds

The story of the expansion of Rome is a military adventure, but it is also a technological adventure. As the western half of the Empire fell to the barbarian invaders, Byzantium preserved Greek and Roman methods. By the 9th century, Arab scholars had not only reclaimed this knowledge but moved it forward. Technology during this era of “Late Antiquity” was used to hold together an empire and recover lost knowledge.

Rome

The Roman Republic, according to legend, was founded in 509 BC with the overthrow of the monarchy. During the 5th and 4th centuries BC, Rome expanded from the center of the Italian peninsula, both northward to defeat the Etruscans and southward to the Greek colonies. I find it interesting that in this expansion, Romans always saw themselves as defending from attack rather than conquering and expanding.

Farming was the main activity of many in the Republic, and we can see some of the techniques by reading Cato. He was one of the first to write texts in Latin rather than Greek (the language of the intellectual world at the time), and he wrote a book on farming called De Agri Cultura. Among the advice he offered was the use of compost and manure to ensure good yields, ploughing deeply so that surface roots don’t form on olive trees, and the use of amurca (olive oil sediment) as a pesticide. It is all practical – there is no reference to science even though Cato himself was a learned man. In addition to providing practical techniques, Cato’s book glorified the role of the farmer in Roman life.

That said, many Roman men spent time soldiering, even if they were farmers. As Rome expanded, local farmers faced competition from the goods of the new regions (Sicilian grain, with its lower price, is one example), and farming became a more difficult way to earn a living. Military technological advancements were often achieved by improving on Greek technologies. One example is the javelin, a throwing spear. Javelins were the first line of attack in a Roman battle, but they didn’t always kill. The head was designed to twist on the shaft as the point entered a shield. This meant the javelin couldn’t be recovered, but it also meant it was hard for the enemy to remove, which they had to do to make the shield useful again. The enemy thus spent valuable time trying to pull out javelins while the Roman army attacked with swords. The Greek catapult was improved, adding pins and holes to improve accuracy. Catapults were basically siege engines, whose purpose was to beat down the walls of a fortification.

The Roman Empire

As the Republic became an Empire (around 27 BC), Roman technologies focused on construction that tied Rome’s disparate territories together while demonstrating Rome’s glory. The centralized government could order and pay for major projects.

Pont du Gard, in Vers-Pont-du-Gard, Gard department, South France. The Pont du Gard is the most famous part of the Roman aqueduct which carried water from Uzès to Nîmes until roughly the 9th century when maintenance was abandoned. The monument is 49m high and now 275m long (it was 360m when intact) at its top. It’s the highest Roman aqueduct, but also one of the best preserved (with the aqueduct of Segovia) / Wikimedia Commons

Aqueducts served multiple purposes. Designed to carry water from distant snowpacks to lowland towns, they were built to a high standard. Stone arches supported clay-lined channels above, which could then be diverted into different areas of town. In Rome itself, which was comprised of about 33% wealthy villas, 33% horrible slums, and 33% public areas, water to each could be controlled. In a drought, slums were cut off first, because people who lived there could go to the public areas, such as fountains and baths. Water to these areas could be reduced if the elite villas needed more. Thus the technology reinforced, in a very practical and evident way, the class structure of Rome. In rural areas, the huge rows of arches crossing the landscape reminded people that they were part of a huge empire, to which they owed their loyalty.

Aqueducts, and monumental Roman buildings, could be so large because of the use of concrete. Although not invented by the Romans, Roman concrete (a mix of quicklime, pozzolana ash and pumice stone) could be poured into forms and used as a core to support masonry.

Roman roads also tied the empire together, and were intended for moving troops quickly from place to place. (This is why we still find Roman roads under fields and rural areas – they were not built to connect towns or trade.) Originally the roads were made of wooden planks, on which troops could march and equipment could be rolled. But plank roads were subject to warping over time – they weathered badly. The Romans developed a sophisticated technique for building roads and streets, first digging out a trench, then layering it with different-sized gravel and stones, with large fitted stones on the surface. The roads were obviously intended to last forever, just like the Empire.

From City: A Story of Roman Planning and Construction, illustrated by David Macaulay

One major street innovation was standardization. In Roman towns, carts and wagons could clog the streets, so stepping stones were inserted across the streets at the corners. This made it possible for pedestrians to keep their feet clean crossing from sidewalk to sidewalk (another Roman invention). Since they were placed at set intervals, cart wheels had to go between them. The distance between stepping stones meant that all carts and wagons had to be the same size, and able to pass each other. This was a technological method for enforcing social habits, creating a better situation for all.

The ultimate Roman technology to me, though, is the geared waterwheel.

Might as well learn this now – I’m into waterwheels. And mills. And textile manufacturing. But that will come later!

Vitruvius is the font of information for historians on Roman waterwheels and many other constructions. He was a Roman officer and an engineer. His De Architectura is one of the few surviving written works on architecture from this time (it was rediscovered in 1414 by Renaissance collector Poggio Bracciolini). In his works, he drew and described technologies in use at the time, such as water clocks, cranes and catapults. We know about Greek technologies (such as Archimedes’ screw) because he described them. And archaeology has helped us see how the mills worked:

Grist mill animation: This animation shows the mechanical workings of a grist mill for grinding grains into meal or flour. The mill is powered by water. A stream or river is directed through a channel (flume) that deposits the water over a wheel that has buckets evenly spaced around the edge (waterwheel). The weight and energy of the moving water turns the wheel to produce energy for the mill. The axle of the wheel is a long horizontal shaft that has a large gear (crown gear) on the other end. This large gear drives a smaller gear (lantern pinion gear) attached to a vertical shaft that turns faster. This vertical shaft is also attached to a circular flat stone (runner stone) that then rotates on top of another static circular stone (bed stone). There is a hole in the center of the rotating top stone where grain is slowly fed in for grinding. The grain is ground between the stones and drops out at the edges of the stones where it is collected as meal or flour depending on how long and fine it is ground.
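Why does the vertical shaft turn faster than the waterwheel? A large gear driving a smaller one multiplies speed by the ratio of their tooth counts. Here is a minimal Python sketch of that relationship. The function name, the tooth counts, and the wheel speed are all hypothetical, chosen only for illustration; they are not measurements from any Roman mill.

```python
def runner_stone_rpm(wheel_rpm: float, crown_teeth: int, pinion_teeth: int) -> float:
    """Speed of the vertical shaft (and runner stone) for a given waterwheel speed.

    The crown gear turns once per waterwheel revolution. Each of its teeth
    advances the lantern pinion by one tooth, so the pinion turns
    crown_teeth / pinion_teeth times for every turn of the wheel.
    """
    if crown_teeth <= 0 or pinion_teeth <= 0:
        raise ValueError("tooth counts must be positive")
    return wheel_rpm * crown_teeth / pinion_teeth


# Hypothetical figures, for illustration only.
wheel_rpm = 10.0      # a slow, heavy waterwheel
crown_teeth = 40      # large gear on the horizontal axle
pinion_teeth = 8      # small lantern pinion on the vertical shaft

print(runner_stone_rpm(wheel_rpm, crown_teeth, pinion_teeth))  # 50.0
```

With these assumed numbers the stone spins five times for every turn of the wheel. The trade-off is that the faster shaft delivers proportionally less turning force, which is acceptable for grinding grain.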

Why was massive flour production so important? Because in addition to feeding the military, we have those slums. Roman cities had many slaves and many poor, who posed a continual threat to the order of the empire. Keeping them fed was part of what historians call “bread and circuses” – Rome providing basic food and entertainment to prevent revolt.

Ptolemy’s Map

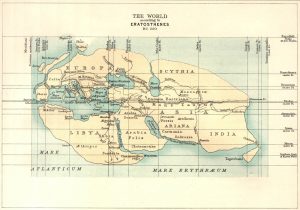

How did the Romans know their place in the world, and expand so confidently? Hellenistic mathematicians and geographers Eratosthenes (3rd century BC, 3-volume Geographica lost but pieced together in excerpts from other sources) and Claudius Ptolemy (AD 2nd century, work rediscovered in the late 13th century by Maximus Planudes) had developed sophisticated world maps. Eratosthenes correctly calculated the circumference of the earth and provided a map, dividing the world into polar, temperate and tropical zones. Ptolemy improved the projection and added current knowledge. Here are their maps, in 19th century versions:

Ptolemy Maps / Wikimedia Commons

The Roman Empire declined in the 5th century AD, having been subjected to poor leadership, barbarian migrations, and religious turmoil. The first of these, poor leadership, has a possible technological aspect, at least according to a theory proposed by early 20th century historian Rudolf Kobert and popularized in 1965 by historian S.C. Gilfillan. Lead was a common element in the Roman world. It was used as a foundation for face makeup (to make skin white), a material for cups and tableware, and as a durable lining for water pipes. These uses meant that the wealthy Romans were the most exposed to lead. The cups in particular would have been a problem, since wine would cause the lead to leach out of the cup into the wine. In contrast, poorer people didn’t wear makeup, used ceramic tableware, drank water from clay pipes, and tended to drink water or milk (an antidote for lead, as it happens) instead of expensive wine. The theory is that the elite class was slowly poisoned.

In 1984 historian John Scarborough pointed out that this theory had become popular as a replacement for that of 18th century historian Edward Gibbon, who had blamed the fall of the Empire on Christianity. I can certainly see why 1965 would be the time when it would be popular – during that time we were discovering the damage that man-made chemicals were doing to our environment and our health. So this is yet another example of how what historians look at varies according to the issues of their own time. While the lead-poisoning theory has been discredited in recent years, in the case of certain individuals (I’m thinking of a couple of particularly insane emperors), it may have been a factor. The popularity of the theory also shows the continuing disbelief that such a huge and well-organized empire as Rome could ever fall.

But fall it did, as Germanic peoples moved into the Empire. This was a migration taking centuries, and in fact many Germanic tribes collaborated with Rome and protected the borders. But population pressure proved too great, as many groups pushed their way west due to climate factors in Asia. The culture they brought with them was quite different, and their technological achievements difficult to document. They tended to be rural farmers or hunters or woodspeople, living off the land. Terrified Roman sources tell us that they had no respect for concrete buildings, or engineering, or churches, or baths (or bathing, for that matter). They were mostly illiterate, and brought in an oral culture. Many Greek and Roman scientific works “disappeared” – some had been traded by Greek and Roman merchants to places eastward, some were acquired by the Church and were kept in monastic libraries, and some simply vanished. Those finding their way east were preserved in the eastern Roman Empire, which had always been primarily Greek and had separated from the west in the 4th century. This Byzantine Empire was ruled by a strong emperor with deep ties to the Christian Church, and the classical and Hellenistic tradition of scholarship could be said to have devolved to the Byzantine Empire for several centuries.

Wikimedia Commons

Byzantium had its own technological innovations. One was the pendentive dome over the Hagia Sophia church (6th century AD). The technique of modeling a dome over a square space by cutting out a sphere would not be duplicated in Europe until the Renaissance. The Byzantines developed rafted grain mills that could be used on rivers and moved as needed, and counter-weighted trebuchets that were better than catapults. The infamous Greek fire, which was likely a pressurized fuel propellant flame-thrower on a large scale, was used in naval warfare. It was feared throughout the known world, and the chemical composition of the mixture was a state secret. Greek fire could also be sealed in pots for highly effective grenades.

Theotokos mosaic / Hagia Sophia, Istanbul

Mosaics had been invented by the Greeks many centuries before, but in the late Roman Empire they took on different characteristics due to new methods. Although in Western Rome mosaics were mostly created as floors in villas, in Byzantium they covered walls and ceilings, because mosaics were used in churches and you didn’t want religious icons walked upon. Mosaics are made up of small pieces of colored substance. The earliest mosaics used colored stone, at first natural pebbles but, by Roman times, engineered stone. In Byzantium, they began to import colored glass from Italy, and developed pastes made of glass, which allowed the light to dance through the mosaic. Mother of pearl, gold leaf, and silver added even more sparkle. Sponsored by the Christian state, mosaics got larger and more beautiful throughout Byzantium.

Wikimedia Commons

During these centuries a new power arose in the east, Islam. In converting to the new religion in the 7th century, many commercial tribes in Arabia and beyond repeated a pattern of collaboration and adaptation for the sake of trade. The ongoing commercial contact between Arab traders and those in Mesopotamia, Egypt and the Hellenistic Empire meant that books, ideas and inventions diffused throughout the emerging Islamic Empire. By the 9th century, the cultural and scientific center of this empire was Baghdad, heart of the Abbasid Caliphate, where Persian and Arabic scholars pored over the works of classical Greece and Rome. Like the researchers at Alexandria centuries before, these scholars were funded by the state to engage in their research. Some historians believe that state funding leads to more “pure” research – abstract explorations into the natural world, without practical intent. That would be science rather than technology, even though the knowledge might later influence technology.

Islamic scholars thus studied and advanced classical knowledge. In medicine, they divided hospitals into wards to prevent cross-infection, and taught medical students in a hands-on environment. They engaged in advanced surgical techniques, inhalant herbs for pain and anesthesia, and the application of sulfur for skin complaints. Al-Rāzī (known as Rhazes in Europe) was one of the most famous physicians of the day. His De variolis et morbillis (A Treatise on the Smallpox and Measles) showed the difference between these two diseases, and his Kitāb al-ḥāwī, the “Comprehensive Book,” surveyed Greek, Syrian and early Arab medicine. His books contained not only factual information, but his own experiences as a physician.

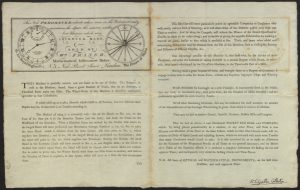

Another Abbasid specialty was an improved astrolabe. We think astrolabes were used in ancient Greece, at least in a simpler design. And we know they were used in Europe in the Middle Ages, after improvements were made by the Arabs. View this 9-minute TED talk on the astrolabe, because it not only tells how an astrolabe works, but also what we’ve lost now that we don’t use it.

https://www.youtube.com/watch?v=b5LJVR4yU08

Tom Wujec, Learn to Use the 13th-Century Astrolabe

Conclusion

Empires need vast resources to maintain them, but they also create vast resources. These cultural resources are funded by centralized states and by merchants who can trade goods safely within their zones. They may include vast networks for moving armies, but they also can be cosmopolitan, recognizing and appreciating contributions from other cultures. Within such an environment, the collection of knowledge can be financed by empires with vision, who want to connect themselves to both the past and the future. Thus, when western Rome fell, Byzantium was able to preserve Roman as well as ancient Greek knowledge. And under the Abbasid caliphs it became apparent that a centralized state can provide good support for scientific endeavor – even today there is an appreciation that government is often the best benefactor for scientific experimentation and learning. With a longer view, it is possible to recognize the benefit of such sponsorship, beyond immediate profits.

The Middle Ages (800-1350)

Introduction

The settling of the Germanic tribes represents the founding of European culture. Although Greek and Roman knowledge would impact culture somewhat during the medieval period, the era from AD 800-1300 represents an explosion of technological rather than scientific development.

I consider economic change to be the driver during the Middle Ages. Politically, Europe in the early Middle Ages was a place of local culture, local politics, and local loyalties. The single unifying factor was Christianity, making the Church in Rome the spiritual center. Missionaries from Rome spread ideas of the orthodoxy of the church. By the 9th century, new invaders again ensured local rather than broader interactions. Muslim Saracens launched raids on the southern coast of Europe, Slavic tribes exerted pressure from the east, and Vikings raided the northern coasts, stealing goods from churches and terrorizing the population. These new attacks would eventually, like the Germanic invasions of the western Roman empire, become migrations instead of raids. But during the 9th and 10th centuries, raids forced a local response.

Feudalism is the word most commonly used to describe the political set-up of the time. German chieftains had become kings, and they had distributed lands among their vassals as they fought for territory. One, Charlemagne, really became an emperor, a king over many states, and launched a cultural program to revive classical knowledge. Even after his empire disintegrated under his descendants, it became obvious that kings and emperors could not make an effective armed response to a coastal raid hundreds of miles away. Thus the lords controlling the land became local rulers, and often more powerful than their overlords.

Agricultural Revolution

The lands they controlled produced more wealth during this era than before, and the population grew. Towns emerged, likely at the points of trade exchanges and fairs. People in towns made their livings from trade and manufacturing, importing their food from the countryside. Agricultural surplus from the lord’s manor could be sold on the market. Many history books mention the population increase first, and talk about the pressure it caused on the land. If this is true, it provided a motivation for agricultural technologies that some call an Agricultural Revolution. While that makes sense, how did this population increase occur? It would have to be from an increase in the food supply, so it is possible that a warmer, drier climate was also helpful.

Three major innovations mark this revolution.

The first was the heavy plow. Southern Europe had sandy soil, where a lightweight, wheeled plow worked well. In northern Europe, the soil was heavier, with more clay. The development of a heavier plow, with a mouldboard to turn the heavy soil over, made deeper furrows. This meant that seed could be sown more deeply, out of reach of birds and animals. That increased yields. An iron plowshare not only added to the weight but made a deeper cut. Large plows could be pulled by oxen who, though difficult to turn, were very strong.

The second was the horse collar. Before the 9th century, horses pulled plows and wagons with a harness that strapped across the neck. This put pressure on the horse’s windpipe, making heavy loads impossible (the horse would pass out). The horse collar put the pressure on the horse’s shoulders, so it could pull more weight easily. Horses were easier to maneuver than oxen, and were more versatile. Although they ate more, they were faster and could increase production on a manor by 30%.

The third was three-field rotation. Previously, arable land was divided into two plots – one for spring planting and the other to lie fallow (empty). This was necessary so that the fallow field would recover – if the same crops are planted year after year, it exhausts the soil and yields go down. The innovation in the medieval period was to divide fields into three parts: 1/3 for grains, 1/3 for legumes, and 1/3 fallow. This increased the amount of land in production during the growing season from 50% to about 67%. The increase in food production was enormous.
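The arithmetic behind that jump is easy to check. The short Python sketch below compares the two systems using exact fractions. It assumes the same total acreage and the same yield per cultivated acre under both systems, which is a simplification; the function name is my own.

```python
from fractions import Fraction


def share_in_production(fields: int, fallow: int = 1) -> Fraction:
    """Fraction of arable land under crops when `fallow` of `fields` plots rest."""
    return Fraction(fields - fallow, fields)


two_field = share_in_production(2)      # 1/2 of the land is planted
three_field = share_in_production(3)    # 2/3 of the land is planted

# Relative gain in cultivated land when moving from two fields to three.
gain = three_field / two_field - 1      # 1/3

print(f"two-field:   {float(two_field):.1%}")    # two-field:   50.0%
print(f"three-field: {float(three_field):.1%}")  # three-field: 66.7%
print(f"gain:        {float(gain):.1%}")         # gain:        33.3%
```

Under those assumptions, the same manor has a third more land under crops each year, before counting any benefit from the legumes themselves.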

There is an English nursery rhyme that starts, “Oats, peas, beans and barley grow”. This was the other aspect of the innovation – the legumes planted provided more protein for the diet, which was helpful when few people could eat much expensive meat. Peas and beans are also nitrogen-fixing crops – they have nodules on their roots that collect and store nitrogen from the air. When plowed under after harvest, these crops do more than allow the soil to rest. They help it recover quickly.

Towns and Guild Production

Population increase and more food meant more customers in the towns. Medieval towns were run (and often founded) by gild merchants (merchant guilds). These were organizations of merchants who controlled prices and trade within the town. Only members of the guild could buy and sell goods in the town, and by the 11th century they comprised the town government. Large towns were often “chartered” – they received a renewable lease from the lord or king who owned the land they were on, and in return paid a fixed tax. As towns expanded and more money was made, these charters were a very good deal. They paid a pittance and had total self-government.

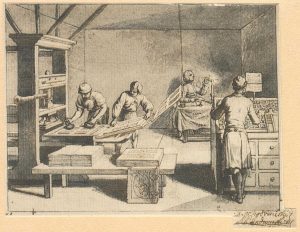

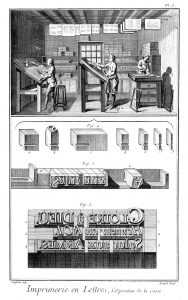

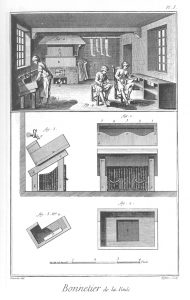

Goods within the town were manufactured by craftspeople, organized into crafts guilds. They controlled production and prices for their section of the town economy. There were guilds of bakers, wire-drawers, leather tanners, blacksmiths and wheelwrights. The cloth industry was the largest sector of the medieval economy. There were spinners’ guilds, weavers’ guilds, fullers’ guilds, dyers’ guilds, and finishers’ guilds.

The focus of my own research was fulling. Fulling is the process that follows the weaving of the spun wool into cloth. The idea is to use chemicals to treat the cloth while it’s being pounded. This felts the woolen fibers together, making a soft, waterproof cloth. In Roman times, fulling was done in large, shallow troughs with stones at the bottom. The woolen cloth was laid out in the trough, and the fullers would pee into the trough, adding water and alum or fuller’s earth. This provided the mix of caustic and fixing chemicals needed. Then they would walk the cloth, treading it on the stones. (If you know anyone named Walker, or Tucker, or Fuller, at least one of their ancestors was a fuller.) Afterwards, the cloth was stretched on tenter-hooks to dry, then sheared and finished.

The major industrial innovation of the Middle Ages related to (yes!) water power. Although there has been some recent evidence of water power for an occasional industrial process in ancient Rome, almost all ancient milling was related to grinding grain into flour. Fulling appears to be the first use of the medieval innovation, the cam. The cam protruded from the waterwheel shaft, and as the shaft turned, each cam pushed down the back of a hammer, raising its head, and then slipped past, allowing the hammer to fall. This could automate the fulling process.

Earlier mills had used the rotary motion of the wheel to create rotary motion of millstones. The cam converted the rotary motion of the wheel to reciprocating motion.

While it was possible to build a fulling mill in town, the rivers in town tended to be slow and sluggish. Waterwheels usually had to be “undershot”; that is, pushed by the slow river from the bottom of the wheel. They weren’t that powerful. The great waterwheels of Rome had been in rural areas, running down hillsides with the water shooting over the top of the wheel to provide more velocity and power. But the fullers’ guild held power in the towns, not outside them.

Europe’s first entrepreneurs broke the rules and made arrangements for fulling mills to be built on lords’ lands in areas with falling water. The guilds responded by trying to prevent industrial espionage – they posted guards at the town gates to search anyone leaving with bundles of unfulled cloth. But eventually rural watermills would use the cam to run bellows for blacksmithing, stamps for minting coins, and other purposes. In addition to the cam, a crank could also be hooked up to a waterwheel, running sawmills and pumps.

Rural water power was the wave of the future, and threatened the power of the guilds. In lowland areas with wind but no fast-flowing water, windmills did the work.

Codices, Tablets, and Literacy

The Middle Ages also saw the invention of the codex, but very early – really around the 4th century AD. I find it interesting that the book was invented about the time of the lowest literacy rate in Europe, during the Germanic migrations and the fall of the western Roman empire.

The Cookery Scroll / Morgan Library & Museum, New York

When we think of ancient writings, like those at the Library of Alexandria or in Hellenistic marketplaces, we have to think of scrolls, usually of papyrus. Parchment, made of animal skin, was also sometimes used. Since papyrus was only grown in Egypt, any interruption in trade with Egypt could cause a shortage. Scrolls are interesting pieces of technology – they have advantages and disadvantages. Writing was on only one side (the inside) of the roll, so the letters didn’t touch each other, and they were durable (any damage done was usually only to the outside). Scrolls weren’t always rolled – Julius Caesar was known to fold his accordion-style for easier access and storage.

Folding papyrus or parchment sheets into quarters or eighths and binding them at one edge creates a codex. A popular conception is that the Christian Church deliberately adopted the codex so that Christian texts would look different from Jewish and pagan scrolled works, but I haven’t been able to verify that. It is just as likely that the advantages of the codex made it popular. It was easier to store, could be closed by one person (big scrolls were hard to roll), and the folded pages created an edge on which something could be written to help define it.

Left: Writing with the stylus in the Roman period. The flat part of the stylus was used to wipe the writing out again. / Photo by Peter van der Sluijs, Wikimedia Commons

Right: The Codex Gigas, 13th century, Bohemia / Kungl. biblioteket

Gradually the codex replaced the scroll, and as it did so items that weren’t converted were lost. We see this happen now – every time information storage changes format (records and cassettes to CDs, or movies to Blu-ray), some unique items are lost. Also lost may have been the linear nature of the scroll – pages are permanently stitched together in a scroll, and it doesn’t require a bookmark. You are always where you left off.

In addition to the codex, the Roman period also saw the advent of tabulae, wax tablets written on with a stylus. A few of these could be tied together into a stack, and even sealed as official documents. Unlike scrolls or codices, tablets were reusable – you could melt the whole page and erase everything. They could be designed to be lightweight (certain lightweight woods or bone were often preferred) and portable, with carrying cases. They could be used for drafting letters or other documents that would later be written in more permanent form, notebooks, sketchbooks, accounting books and diaries. Charlemagne apparently wore one around his neck in his unsuccessful efforts to learn how to read and write. (1)

These means of keeping records increased in importance throughout the Middle Ages. Early Germanic settlers were not literate, and their culture kept records verbally and visually. When land changed hands, for example, a ceremony was conducted in which the seller put a clod of earth into the buyer’s hand in front of witnesses. In fact, witnesses were of extreme importance in illiterate cultures, as they carried history. So did older people, who were valued for their age and memory. And lest we think that their memory was somehow faulty, the memories of people in the 4th century, say, were far better than ours.

In cultures without writing, people have prodigious memories. Medieval singers and troubadours who couldn’t write would move from town to town entertaining people with stories lasting two hours or more. Entire epics were memorized and sung or spoken. Often audiences could repeat an hour-long song they’d only heard once.

Literacy enables us to write things down and record them. But literacy also means things are lost. We have already noted Socrates’ concerns about writing via Plato – Socrates felt that writing entombed ideas in stone where they were harder to question. In the medieval period, as tribes and towns and people became literate, they lost their prodigious memories. Over the centuries they learned not to remember anymore, because they could always write it down. By the 20th century, I couldn’t go into a grocery store without a list if I were buying more than five things. And the hypertexted nature of the internet has made our memories even worse – the understanding that we can look it up on our phone means we need remember nothing at all.

Christ in Majesty from the Aberdeen Bestiary (folio 4v) / University of Aberdeen

Illuminated manuscripts were also communication technology, as well as artworks. Benedictine monks in particular supported literary activities, copying ancient and medieval texts by hand, usually into codices, beginning in the 10th century. The labor of scribes and illustrators was appreciated as work appropriate to Benedictines, who considered manual labor crucial to spirituality. Monks working in scriptoria copied works on religion, botany, herbs, and medicine. They made copies of Jerome’s Latin Vulgate Bible for use in churches. Monasteries could make money by selling the books, and they were needed by the 12th century for the new universities of learning. And those with artistic talent illuminated the text with images. Brilliant pigments were made of ground stone and applied with egg whites, and gold was pounded into foil sheets or powder. The illuminations took up more and more of the page as time went on – by the 15th century, when printing was popularized, some books were almost all illuminations.

Some historians believe that herbal medicine advanced most in monasteries during the medieval period, and that medical texts were of particular importance. Greek texts on medicine were often copied, preserving that knowledge. Most monasteries had an herb garden, and some had monks who were experts in herbal medicine. It was a Benedictine tenet to care for the sick. That said, some historians believe that most monks were illiterate (and scribes just servants to the monastery) and learned little of value from the texts they copied. The true repository of medical knowledge during the medieval period may actually have been the “wise women” who catered to the needs of sick villagers and townspeople every day. Even after medical schools were established (several at Italian universities like Salerno), when Arabic medicine was brought into the curriculum, most people trusted these lay healers.

Medical potions in an illuminated manuscript / Wikimedia Commons

There are a number of illuminations and drawings related to the practice of medicine that have survived, and one of the things I find most interesting is the pictures showing uroscopy. Some of the first writings translated from Arabic were medical treatises on diagnosis using the pulse (Galen had identified at least 27 types of pulse). The examination of a patient’s urine had been done since ancient times, and took on increased sophistication in the medieval period. The image on the left shows Constantine the African lecturing on the subject (13th century). The listeners seem to have brought flasks of urine for examination or examples – the shape of the urine flask (called a jordan) changed little for hundreds of years. The illuminations and drawings showing urine for diagnosis were created in brilliant color. Diagnosis was often dependent on the color of the urine (books refer to “black wyne” color and “liver colored”, for example) (2) as well as the color of any sediment or stones. Consistency could also be better shown with color. The techniques developed for illuminating manuscripts were useful for anything requiring visual information. Art served science (or at least medical knowledge) in the development of such works.

The Gothic Cathedral

Façade of Reims Cathedral, France / Wikimedia Commons

Gothic architecture is a style of architecture that flourished in Europe. It evolved from Romanesque architecture and was succeeded by Renaissance architecture. Originating in 12th-century France and lasting into the 16th century, Gothic architecture was known during the period as Opus Francigenum (“French work”), with the term Gothic first appearing during the later part of the Renaissance. Its characteristics include the pointed arch, the ribbed vault (which evolved from the joint vaulting of Romanesque architecture) and the flying buttress. Gothic architecture is most familiar as the architecture of many of the great cathedrals, abbeys and churches of Europe. It is also the architecture of many castles, palaces, town halls, guild halls, universities and, to a less prominent extent, private dwellings.

It is in the great churches and cathedrals and in a number of civic buildings that the Gothic style was expressed most powerfully, its characteristics lending themselves to appeals to the emotions, whether springing from faith or from civic pride. A great number of ecclesiastical buildings remain from this period, of which even the smallest are often structures of architectural distinction while many of the larger churches are considered priceless works of art and are listed with UNESCO as World Heritage Sites. For this reason a study of Gothic architecture is largely a study of cathedrals and churches.

A series of Gothic revivals began in mid-18th-century England, spread through 19th-century Europe and continued, largely for ecclesiastical and university structures, into the 20th century.

Cavalry, Cannon, and Warfare Technologies

It is commonly thought that the development of the stirrup, a simple device, holds huge importance in medieval warfare. Stirrups likely came into use by cavalry around the 8th century. They provide for faster mounting and dismounting, and enable greater stability on the horse, particularly when wielding weapons. Interestingly, for a while historians equated the stirrup with the rise of feudalism as a political system. While this view has fallen into disfavor (particularly because the Byzantines and Arabs adopted the stirrup around the same time, but not feudalism) the two do coincide. Stirrups made cavalry much more effective as a fighting force.

Medieval warfare focused on capturing and holding fortified outposts and the lands they defended. In 1095, Pope Urban II called the first international Crusade to the Holy Land. In addition to increasing his own power as pope (because he could call on soldiers from all nations), Urban was trying to redirect internal European violence caused by the cessation of localized warfare with invaders. By 1095 there were no more raids, and yet everywhere knights trained and lords fought with each other, having nothing else to do with the militarized system of feudalism. Urban saw a way to turn this violence to “good” by fighting the non-Christian forces who had occupied Jerusalem for hundreds of years. Crusades thus added another element to warfare, since with a Crusade long distances had to be covered with a great deal of equipment.

Siege warfare was the primary form of war in the medieval period. Whether on Crusade or fighting a local lord, territory could only be won by capturing the stronghold. Thus many technologies were focused on battering down walls and gates, and interfering with supplies of food and water. Pitched battles on battlefields could be profitable, however, if one captured a high-ranking member of the enemy and held him for ransom – most kings who engaged in combat were at one time captured and ransomed back. Some historians believe that full engagement was avoided when possible, as it was costly and difficult to hold territory through field combat. Castles were chosen strategically to be fortified as strongholds or left to fall. Sieges, by their nature, could be lengthy, continuing into bad-weather months and leading to disease among the troops.

Battle of Crécy. Image from a 15th-century illuminated manuscript of Jean Froissart’s Chronicles. / Bibliothèque nationale de France