Surveillance evolved from human informants and physical observation into databases, metadata, biometrics, and AI-driven systems that reshape privacy and power.

By Matthew A. McIntosh

Public Historian

Brewminate

Introduction: From Watching Bodies to Reading Data

Surveillance began as an intensely human act. Someone watched a road, listened at a door, followed a suspect, opened a letter, cultivated an informant, or reported suspicious conduct to a ruler, magistrate, employer, priest, landlord, or police official. In its earliest and most durable forms, surveillance depended on proximity, memory, trust, fear, and social position. It was embodied work, carried out by people who observed other people and translated those observations into rumor, testimony, accusation, intelligence, or administrative knowledge. Yet even in these older forms, surveillance was never merely passive looking. It was a means of organizing power. To watch was to decide who required attention, who appeared dangerous, who belonged within the circle of trust, and who might be marked as deviant, disloyal, criminal, foreign, or politically suspect.

The modern history of surveillance is not simply a story of better tools. It is a story of changing relationships among power, identity, information, and time. Premodern authorities depended on informants and spies because knowledge had to travel through human channels. Modern states and institutions gradually learned to stabilize that knowledge in files, registers, photographs, fingerprints, dossiers, police records, census forms, and identification systems. The watched person became not only a body in view, but an entry in an archive. That shift mattered profoundly. A face could be forgotten, an informant could lie, and an observer could disappear, but a file could travel across offices, survive across decades, and attach suspicion to a name long after the original encounter had passed. The archive also changed the scale of surveillance. It allowed institutions to compare people who had never met, connect events separated by years, and treat identity as something that could be verified through accumulated records rather than local recognition. Police departments, courts, prisons, immigration offices, welfare agencies, employers, and commercial firms all participated in this expansion of recorded identity, even when their purposes differed. Surveillance became bureaucratic before it became digital. It moved from the eye to the document, from the encounter to the record, and from immediate suspicion to the long institutional memory of names, categories, and classifications.

The digital age intensified that transformation by separating surveillance even further from the visible act of watching. By the late 20th century, computers, networked databases, electronic transactions, telecommunications systems, and later internet platforms made ordinary life increasingly legible through data traces. A person no longer had to be physically followed to be tracked. Phone records, credit histories, browsing patterns, location data, search queries, travel records, employment files, biometric identifiers, and social media interactions could be aggregated into profiles that revealed habits, associations, preferences, vulnerabilities, and risks. Roger Clarke’s term “dataveillance” captured this decisive turn: the systematic monitoring of people’s actions or communications through information technology. In that world, surveillance did not disappear behind the screen. It became more pervasive precisely because it no longer required continuous human attention. The database could remember what no individual observer could hold in mind.

This essay traces that long movement from watching bodies to reading data. It begins with the informant, the spy, and the physical observer, then follows surveillance into the bureaucratic world of files, fingerprints, wiretaps, cameras, computerized records, metadata, corporate data brokerage, facial recognition, and artificial intelligence. The central argument is that surveillance has evolved from a selective practice aimed at particular persons into a layered infrastructure capable of monitoring populations, modeling behavior, and anticipating risk. Its danger lies not only in the possibility of abuse, though that danger is real. Its deeper historical significance lies in the way surveillance reshapes social life before any punishment occurs. It alters what anonymity means, what privacy can protect, how institutions define suspicion, and how ordinary people behave when they know that their movements, communications, purchases, images, and associations may be stored, sorted, and interpreted by systems they cannot see.

Informants, Spies, and the Political Origins of Surveillance

Surveillance long preceded the camera, the fingerprint file, the police database, and the algorithm. Its earliest political forms arose from the basic problem of rule: no king, emperor, magistrate, priesthood, or military commander could personally see the full territory over which authority was claimed. Power depended on intermediaries who could extend the ruler’s senses beyond the palace, camp, temple, courthouse, or city gate. Messengers, servants, household agents, tax collectors, soldiers, merchants, priests, local notables, and professional informants all carried information upward through systems of dependence and fear. Their reports did not merely describe events. They translated ordinary conduct into matters of loyalty, danger, rebellion, corruption, impiety, treason, or disorder. Before surveillance became an archive, it was a chain of human observation, shaped by status, proximity, rumor, and political need.

Ancient empires made this need especially visible because imperial government required knowledge across distance. The larger the territory, the more rulers depended on networks of couriers, provincial officials, scouts, and trusted observers to detect unrest, monitor tribute, identify unreliable subordinates, and maintain military security. In the Persian, Hellenistic, Roman, Chinese, and Byzantine worlds, surveillance was not a separate institution in the modern sense, but a routine element of imperial administration. It existed wherever information about people, resources, roads, armies, and local loyalties had to be gathered and transmitted to centers of command. The political meaning of such practices was double-edged. On one hand, rulers presented intelligence gathering as necessary for stability, justice, taxation, and defense. On the other hand, the same channels could turn suspicion into accusation and convert private rivalry into public danger. Surveillance emerged early as a technology of political interpretation: it told rulers not only what had happened, but who should be feared.

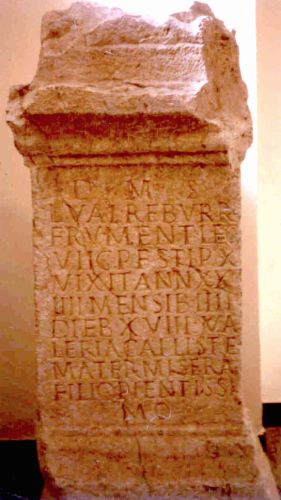

Roman political life reveals this ambiguity with particular force. The Roman Republic depended heavily on public reputation, patronage, accusation, and elite networks of information, but the imperial period intensified the dangers of denunciation. Under emperors who feared conspiracy, the informer could become a powerful and corrosive figure, especially when charges of treason blurred the line between real plotting and political convenience. Tacitus’s accounts of the early empire repeatedly portray a world in which speech, friendship, gesture, writing, and silence could be drawn into the orbit of suspicion. The delatores, or professional informers, were especially feared because they operated at the unstable boundary between legal accusation and political opportunism. Their power depended not only on what they knew, but on what anxious rulers were prepared to believe. A careless remark, an old association, an ambiguous letter, or the possession of wealth could be reframed as evidence of disloyalty when political conditions rewarded accusation. The significance of the Roman example is not that Rome invented surveillance, but that it shows how surveillance becomes politically poisonous when information flows toward rulers who reward accusation and punish ambiguity. The informer is not merely a witness. He becomes a participant in power, able to transform private life into evidence and fear into a method of government.

Medieval and early modern societies inherited these older practices while embedding them in religious, legal, and local institutions. Lords relied on stewards and bailiffs, monarchs on sheriffs and envoys, bishops and inquisitors on testimony, confession, visitation, and denunciation. Communities were often expected to police themselves, and surveillance operated through neighbors as much as officials. Heresy, vagrancy, sedition, theft, illicit trade, sexual conduct, and religious nonconformity could all become matters of organized attention. This form of surveillance was intimate because it drew upon the social closeness of village, parish, guild, household, and town. Yet it was also political because local knowledge could be absorbed by larger systems of authority. The person who watched from nearby might serve a distant power, and the report that began as gossip could enter ecclesiastical, seigneurial, or royal channels of discipline.

The early modern state gave these older practices a broader administrative reach. As monarchies expanded their fiscal, military, diplomatic, and policing capacities, they developed more systematic methods for gathering intelligence. Postal interception, diplomatic espionage, household spies, paid informers, censorship, licensing systems, and secret correspondence all helped rulers monitor threats they imagined as internal as well as external. Reformation and Counter-Reformation conflicts sharpened this tendency by making belief itself a matter of state security. A subject’s conscience, associations, books, letters, sermons, and rituals could become signs of loyalty or danger.

By the 18th and 19th centuries, these human systems of watching had not disappeared, but they had begun to merge with more durable administrative forms. Informers, spies, and police agents still mattered, especially in moments of revolution, war, labor unrest, imperial rebellion, and ideological conflict. Yet their reports increasingly entered written files, police archives, court records, intelligence memoranda, and centralized offices. This transition is crucial. It marks the point at which the political origins of surveillance began to move toward the bureaucratic systems that would later define modern identification and dataveillance. The informant remained important because human beings still noticed what machines could not. But once his report entered the record, surveillance acquired a longer life. A suspicion could be preserved, copied, circulated, misread, revived, and attached to a person beyond the original encounter. The old political art of watching people prepared the ground for the modern administrative habit of recording them.

Files, Fingerprints, and the Bureaucratic Identification of Persons

The next major transformation in the history of surveillance came when institutions learned to make identity portable. Informants and spies could report what they had seen, but their knowledge remained fragile, local, and often dependent on personal memory. Modern bureaucracy sought something more durable. It wanted records that could outlast the encounter, travel between offices, and identify a person beyond the immediate judgment of a witness. This shift became especially important in the 19th century, when urbanization, migration, industrial labor, colonial administration, prisons, police departments, and expanding welfare and taxation systems placed more strangers in contact with institutions that needed to classify them. The problem was no longer only how to watch a suspect. It was how to know whether the person standing before an official had appeared somewhere else under another name.

The file became one answer to that problem. Registers, case notes, prison records, poor-law documents, military papers, passports, employment records, photographs, and police dossiers turned persons into retrievable administrative objects. The file did not simply preserve information. It reorganized identity into categories that institutions could use: name, age, residence, occupation, physical description, offense, family status, nationality, race, religion, health, poverty, military service, or political affiliation. These records were often produced for practical reasons, but they also changed the structure of power. A person could be watched in absentia because the file stood in for the body. Once recorded, identity became something that could be compared, transferred, corrected, suspected, or misapplied by people who had never met the individual being classified. The file also created a new kind of institutional memory, one that could survive the death, retirement, prejudice, forgetfulness, or personal discretion of any single official. It allowed suspicion to harden into documentation and documentation to become the basis for future decisions. A past arrest, a poor-law application, an immigration notation, a prison entry, or an intelligence report could follow a person across time, shaping how later officials interpreted that person before any new encounter occurred. Bureaucratic surveillance depended less on seeing and more on filing, less on immediate behavior and more on the administrative afterlife of recorded facts.

Criminal identification was one of the clearest laboratories for this new logic. Police forces faced the recurring problem of aliases, repeat offenders, and mobile populations who could disappear into cities or cross jurisdictions. In the late 19th century, Alphonse Bertillon’s anthropometric system attempted to solve this by measuring the body with scientific precision. Head length, arm span, ear shape, height, scars, photographs, and standardized descriptions could be combined into a record that promised to distinguish one person from another. Bertillonage reflected the broader confidence of the period: the belief that the body could be translated into reliable data, and that institutional order could be built from measurement. Its importance lay not only in its accuracy, which was later challenged, but in its ambition. It treated identity as a problem of classification, indexing, and comparison.

Fingerprinting eventually displaced anthropometry because it offered a simpler and more persuasive claim to individual uniqueness. The history of fingerprint identification was not linear, and it developed across several imperial, colonial, scientific, and police contexts. William James Herschel used handprints and fingerprints in British India for identification in the mid-19th century, while later figures such as Francis Galton, Edward Henry, and Juan Vucetich helped systematize fingerprint classification and criminal application. By the early 20th century, fingerprints had become powerful because they joined the physical body to the archive. A fingerprint was intimate, bodily, and seemingly permanent, but its institutional usefulness depended on being collected, classified, stored, and searched. It turned the body into an index card. That is why fingerprinting belongs not only to forensic science, but to the history of surveillance itself: it made identity both biological and bureaucratic.

The creation of organized identification bureaus completed this transition from local suspicion to shared institutional memory. In the United States, the National Association of Chiefs of Police of the United States and Canada opened the National Bureau of Identification in Chicago in 1897, with files that included mugshots, fingerprints, and Bertillon records. That development matters because it points toward the later logic of centralized databases: information gathered in one place could be made useful somewhere else. Identification no longer depended entirely on the memory of a local officer, the testimony of an informant, or the visibility of a suspect in a familiar neighborhood. It could be produced through records, photographs, measurements, and prints that moved across jurisdictions. The bureaucratic identification of persons marked a decisive stage in surveillance history. Before computers made databases searchable at mass scale, police files and fingerprint systems had already taught institutions to imagine human beings as records waiting to be matched.

Wiretaps, Telegraphs, and the Surveillance of Communication

The bureaucratic identification of persons made identity durable, but the surveillance of communication made relationships visible. Modern states and police agencies did not only want to know who a person was. They wanted to know whom that person contacted, what messages passed between them, how information moved, and whether political, criminal, commercial, or military activity could be detected through communication itself. This marked another major shift in the history of surveillance. Earlier informants had overheard conversations, intercepted letters, or reported rumors, but modern communication systems created technical channels through which messages could be monitored at scale. Telegraph wires, postal networks, telephone exchanges, radio transmissions, and later digital networks promised speed and connection, yet they also concentrated communication along routes that could be tapped, delayed, copied, decoded, or mapped.

The telegraph was one of the first technologies to make this contradiction unmistakable. It compressed distance by allowing messages to travel faster than bodies, horses, ships, or trains, but it also required messages to pass through offices, operators, wires, and institutional systems. Privacy depended not only on the sender and recipient, but on the integrity of the network and the people who handled it. This meant that communication entered a semi-public technical environment even when the content remained personal, commercial, diplomatic, or military. Operators could read messages, companies could retain records, states could demand access, and military authorities could treat communication lines as strategic infrastructure. In wartime, diplomatic crisis, labor conflict, and colonial administration, telegraphy became an instrument of command and a target of interception. Governments could censor messages, seize lines, compel cooperation from operators, or monitor traffic patterns. The telegraph helped create a new kind of surveillance problem: communication could be watched even when the communicating persons were physically absent. The message became separable from the messenger, and that separation made modern interception possible. It also made surveillance less dependent on following bodies through space. To monitor a telegraph line was to monitor the movement of information itself, and in modern political life that movement could be as revealing as physical presence.

The telephone intensified the issue because it carried the human voice into a technical system. Unlike letters or telegrams, telephone conversations felt immediate, intimate, and private, yet they depended on wires, switches, exchanges, operators, and corporate infrastructure. Wiretapping exploited that dependence. A conversation could be captured without entering a house, confronting a suspect, or seizing a document. This unsettled older legal and moral assumptions because surveillance no longer necessarily required physical trespass. The state could reach into communication while remaining outside the walls. That ambiguity became central to 20th-century debates over privacy, policing, and constitutional protection. The telephone did not simply create a new way to talk. It forced courts, legislatures, police agencies, and citizens to ask whether privacy attached only to physical places and papers, or also to the invisible passage of speech through technological systems.

The United States Supreme Court’s 1928 decision in Olmstead v. United States revealed how difficult that question had become. Federal agents had used wiretaps in a Prohibition investigation, and the Court held that the Fourth Amendment had not been violated because there had been no physical trespass into the defendants’ homes or offices. The majority treated constitutional protection largely through the older language of property, physical intrusion, and tangible seizure. Justice Louis Brandeis’s dissent looked forward rather than backward. He understood that new technologies could expose private life without breaking doors, opening desks, or taking papers. His warning mattered because it recognized a deeper constitutional problem: the state’s power to invade privacy was no longer limited by the physical difficulty of entering private places. Technological surveillance could be quieter, broader, and more efficient than older forms of search. It could collect words spoken in presumed confidence while leaving no visible sign that surveillance had occurred. The significance of Olmstead lies not only in its immediate ruling, but in the historical tension it exposed: modern surveillance had begun to outgrow legal categories built around physical space. Communication could now be invaded without the body being touched, and the law had to decide whether privacy was a matter of walls or a condition of personal liberty.

That older framework eventually gave way. In Katz v. United States in 1967, the Court rejected the narrow idea that Fourth Amendment protection depended only on physical trespass or property boundaries. The case involved electronic listening at a public phone booth, but its importance reached far beyond that setting. The Court’s reasoning helped establish the idea that constitutional privacy could follow a person’s reasonable expectations, not merely the walls of a home. This did not end electronic surveillance. It reframed it. Wiretapping could be legitimate under certain legal procedures, especially with warrants, but it could no longer be treated as constitutionally irrelevant simply because no official had stepped across a threshold. Surveillance law began to recognize what technology had already made obvious: communication networks had become extensions of social and private life.

The deeper historical point is that communication surveillance prepared the ground for metadata surveillance. Long before digital databases made call records, email headers, IP addresses, and location signals searchable at enormous scale, telegraph and telephone systems had already shown that the pattern of communication could matter as much as the message itself. Who contacted whom, how often, from where, at what hour, and through which channel could reveal networks of association even without full access to content. This distinction between content and traffic became increasingly important in intelligence, policing, and later digital surveillance. Telegraphs and wiretaps belong at the center of surveillance history. They show how modern communication created both freedom and exposure, allowing people to reach across distance while placing their words, voices, and relationships inside systems that others could monitor.

Cameras, Closed Circuits, and the Analog Expansion of Watching

The rise of files, fingerprints, and wiretaps made identity and communication available to institutional scrutiny, but camera surveillance expanded the reach of observation itself. Photography had already changed identification by fixing the face into a durable image, especially in mugshots, passports, colonial records, and police files. Closed-circuit television carried that visual logic into space. It allowed people, property, streets, workplaces, stores, transport systems, and public events to be watched from elsewhere. The historical significance of this shift was not simply that cameras could see what officials could not. It was that vision could be technically extended, centralized, and increasingly normalized. Surveillance no longer required an officer standing on the corner, a guard walking the corridor, or a witness waiting nearby. Watching could be displaced into a control room.

Closed-circuit television developed first as a limited technical system rather than a mass public infrastructure. A widely cited early use came in Nazi Germany in 1942, when CCTV was used to monitor V-2 rocket launches, allowing observation of a dangerous process from a safer distance. Later accounts identify engineer Walter Bruch with this early system, and commercial CCTV systems began appearing after the war. The point is not that wartime rocketry directly created the modern surveillance society, but that the early use of closed-circuit viewing revealed the technology’s basic logic: the camera could separate sight from bodily presence. The observer no longer had to occupy the same space as the observed. This mattered because surveillance became less limited by danger, distance, or inconvenience. A remote image could substitute for direct attendance, and that substitution changed what institutions imagined they could monitor. It also altered the authority of observation itself. What had once required a person to stand, risk, witness, and remember could now be mediated through apparatus: lens, cable, monitor, and operator. The image seemed to offer a neutral record, but it was already shaped by placement, angle, resolution, institutional purpose, and the choices of those who decided what needed to be watched. CCTV did not simply extend vision. It disciplined vision, directing attention toward spaces and behaviors that authorities had already defined as operationally important, risky, valuable, or suspicious.

By the 1950s and 1960s, CCTV began moving from specialized military, industrial, and institutional settings into commercial and municipal environments. Banks, shops, transit systems, factories, and public authorities adopted cameras for security, crowd management, workplace supervision, and crime prevention. In 1968, Olean, New York, became an early and frequently cited U.S. example of municipal street-camera surveillance, installing cameras along its main business street and transmitting images to police. That experiment represented a new relationship between public space and police observation. The street had always been visible, but CCTV made it continuously available to remote official attention. It also helped recast surveillance as an ordinary feature of urban management rather than an exceptional response to a particular suspect.

Early CCTV systems remained constrained by their analog form. They often required live monitoring, limited storage, fixed camera positions, expensive equipment, and human operators capable of deciding what mattered. This created a practical paradox. Cameras seemed to extend vision, but their usefulness still depended on attention. Someone had to watch the screen, interpret the image, and respond quickly enough for the observation to matter. In that sense, analog camera surveillance did not eliminate the human observer. It reorganized the observer’s position. Instead of walking through space, the guard or officer sat before a bank of monitors. Instead of encountering people directly, the observer encountered fragments of motion, angle, distance, and display. This could make surveillance more expansive, but also more selective. Operators noticed some behaviors and missed others; some bodies appeared suspicious because of social expectation rather than visible evidence; some spaces were watched intensely while others remained ignored. The camera’s apparent objectivity could conceal the interpretive labor behind the screen. The human act of watching remained, but it was mediated by lenses, cables, screens, and institutional routines.

Recording changed this arrangement by turning visual surveillance into an archive. Videotape and later videocassette systems allowed institutions to review events after they occurred, making the camera useful even when no one was watching at the precise moment something happened. This was a profound change in surveillance logic. Live CCTV emphasized immediate detection, but recorded video emphasized retrospective investigation, evidence, discipline, and reconstruction. A theft, assault, workplace violation, traffic incident, or suspicious encounter could be replayed, slowed down, copied, circulated, and interpreted after the fact. Visual surveillance began to resemble the file: it preserved traces of behavior for later institutional use. The camera watched in the present, but the recording made that present available to the future.

The analog expansion of watching served as a bridge between older physical observation and later digital monitoring. It normalized the idea that public and semi-public spaces could be continuously visible to institutions, while also teaching police, employers, businesses, and governments to treat recorded behavior as evidence. Yet analog CCTV still had limits that later digital systems would overcome. It could see only where cameras were placed, store only what equipment allowed, and retrieve footage only through laborious review. Its power was real, but bounded. The next transformation would come when cameras, files, and communication records entered computerized systems capable not merely of watching and recording, but of searching, linking, analyzing, and eventually predicting. Analog surveillance expanded the eye. Digital surveillance would teach that eye to remember at scale.

The Computerized Turn: Databases, Bureaucracy, and the Birth of Dataveillance

The computerized turn did not replace older forms of surveillance so much as reorganize them. Files, fingerprints, photographs, wiretap records, personnel dossiers, welfare records, tax forms, credit histories, police reports, and administrative registers had already made persons visible to institutions before the digital age. What computers changed was the speed, scale, and relational power of those records. Information that once sat in separate cabinets, local offices, paper folders, card indexes, and institutional silos could now be stored, searched, duplicated, transmitted, and compared with far greater efficiency. Surveillance became less dependent on watching a person directly and more dependent on querying the traces that person had left behind. The computer did not merely preserve information. It made information operational.

This change mattered because bureaucracy had always wanted memory, but paper memory was slow. A file could follow a person, but only if someone knew where to look, requested the document, interpreted its contents, and physically moved or copied it. Computerized records altered that relationship by making retrieval itself a form of power. Names, addresses, identification numbers, dates of birth, employment histories, arrest records, school records, medical information, tax filings, and financial activity could be searched through standardized fields rather than remembered through local familiarity. Classification became faster and more abstract. The person did not have to appear before the institution in order for the institution to act upon them. They could appear as a record, a match, a flag, a category, an anomaly, or a risk indicator.

The database marked a deeper transformation than the digitization of paper. It changed the architecture of institutional knowledge. A paper archive often preserved events in sequence, embedded in narrative, correspondence, forms, and case histories. A database, by contrast, breaks experience into fields that can be sorted and recombined. This can make administration more efficient, but it also reshapes the person being administered. The individual becomes legible through fragments: one field for income, another for residence, another for criminal history, another for immigration status, another for benefits, another for credit, another for health, another for school enrollment, another for employment eligibility. Each field may appear neutral, but together they construct an institutional double. This double is not identical to the human being, yet it can determine whether that human being receives credit, employment, housing, investigation, exclusion, suspicion, assistance, or punishment. The danger lies partly in abstraction. Databases encourage institutions to treat information as complete because it is available, standardized, and retrievable, even when the record is partial, outdated, mistaken, or stripped of context. A field can record an arrest without explaining its circumstances, an address without explaining instability, a missed payment without explaining illness, or a benefit claim without explaining need. Once converted into structured data, human complexity becomes easier to rank, sort, deny, approve, or investigate. The database did not merely store bureaucratic knowledge. It altered the form of knowledge itself.

Clarke’s concept of “dataveillance” captured this historical shift with unusual precision. Writing in the late 1980s, Clarke described a world in which information technology allowed people’s actions and communications to be monitored through personal data systems. The term mattered because it named a form of surveillance that no longer required continuous visual observation. A person could be watched through transactions, records, and informational traces. This was not simply a matter of government intelligence or criminal policing. It included consumer databases, employment systems, insurance records, financial institutions, welfare administration, educational records, and other bureaucratic structures that used personal data to make judgments. Dataveillance widened the field of surveillance studies. It showed that the ordinary administrative handling of data could become a mechanism of monitoring, sorting, and social control. It also clarified why digital surveillance could feel less dramatic than older forms while being more pervasive. The police officer on the corner, the detective at the door, and the camera in the ceiling are visible enough to be recognized as surveillance. A credit query, database match, benefits eligibility screen, insurance risk category, employee background check, or automated fraud flag may be far less visible, yet it can carry equal or greater practical force. Dataveillance works through routine transactions, not only exceptional investigations. Its power is often hidden in ordinary administration, where monitoring appears as efficiency, verification, risk management, or customer service.

The computerized turn also blurred the boundary between state and commercial surveillance. Governments had long collected records for taxation, policing, military service, border control, public health, and welfare administration. Corporations increasingly collected data for credit scoring, marketing, insurance, employment screening, customer profiling, and risk assessment. The two worlds were never fully separate. State agencies could rely on commercial records, while private firms could benefit from public identification systems, court records, postal data, property records, and later digital traces. This interdependence created a new political economy of surveillance. Personal information became administratively useful, commercially valuable, and increasingly mobile. A record produced for one purpose could be reused for another, and the person described by the record often had little knowledge of how far that information traveled.

The birth of dataveillance represents one of the decisive thresholds in the history of modern surveillance. Earlier systems had watched bodies, intercepted messages, photographed faces, measured limbs, collected fingerprints, and stored files. Computerized systems made those older practices interoperable in principle, even before they became fully networked in practice. They taught institutions to think of people as data subjects whose lives could be reconstructed through linked records and patterned behavior. This did not mean that human observers disappeared. Officials, analysts, clerks, police officers, employers, and investigators still made decisions. But their decisions were increasingly mediated by screens, fields, codes, indexes, matches, and database outputs. Surveillance had moved from the eye to the file, and now from the file to the searchable system. The next stage would come when everyday communication, commerce, and social life moved online, multiplying the traces available for monitoring beyond anything paper bureaucracy could have produced.

The Internet, Metadata, and the Expansion of Trace Surveillance

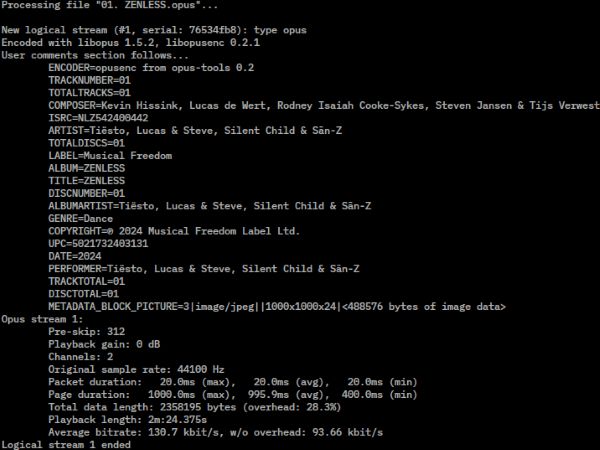

The internet extended dataveillance from institutional records into the ordinary texture of daily life. Earlier databases depended heavily on information gathered by governments, employers, banks, police departments, courts, schools, insurers, and other formal organizations. Networked computing changed the density and frequency of data production. Email, websites, search engines, online shopping, message boards, social media platforms, mobile phones, cloud services, and app ecosystems made communication, consumption, entertainment, work, friendship, and political expression increasingly dependent on digital infrastructures. This did not mean that every act was intentionally watched by a human observer. It meant that more acts generated traces capable of being stored, searched, aggregated, analyzed, sold, subpoenaed, or repurposed. Surveillance expanded because the conditions of participation increasingly required leaving data behind.

Metadata became central to this transformation because it revealed the structure of behavior without necessarily disclosing the full content of communication. A phone call, email, text message, website visit, card payment, location ping, or login event produces information about time, source, destination, device, duration, frequency, and relationship. Such information can seem less intimate than the words spoken or written, but in aggregate it can expose patterns that content alone might not reveal. Who communicates with whom, how often, from where, at what hour, after which event, and in relation to which other contacts can map social networks, routines, associations, emotional rhythms, professional ties, political activity, and personal vulnerabilities. Metadata is especially powerful because it turns life into relational structure. It does not need to know what someone said to identify the cluster of people they speak with most often, the route they take each morning, the moment their habits change, the organizations they approach, the services they rely on, or the private crises suggested by searches, calls, payments, and movements. It can reveal the outline of a life by recording its coordinates. This is why official claims that metadata is less intrusive than content have often been misleading. Content may reveal meaning directly, but metadata can reveal context, and context is often what gives meaning its social and political force. The older distinction between watching bodies and reading records became increasingly inadequate. Metadata made conduct visible as pattern.
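The point that pattern emerges without content can be made concrete with a minimal sketch. The records below are invented for illustration, and the function name `contact_profile` is hypothetical; nothing here describes any real provider's or agency's system. The sketch only shows that a list of who called whom, and when, is enough to surface a person's closest contact and habitual calling hour.

```python
from collections import Counter

# Hypothetical call-detail records: (caller, callee, hour_of_day, duration_seconds).
# No words spoken in any call are present, only metadata.
RECORDS = [
    ("A", "clinic", 9, 300),
    ("A", "B", 22, 1200),
    ("A", "B", 23, 900),
    ("A", "clinic", 10, 240),
    ("A", "lawyer", 14, 600),
    ("A", "B", 22, 1500),
    ("C", "A", 12, 60),
]


def contact_profile(records, subject):
    """Count the subject's contacts and calling hours from metadata alone."""
    contacts = Counter()
    hours = Counter()
    for caller, callee, hour, _duration in records:
        if subject not in (caller, callee):
            continue  # record does not involve the subject
        other = callee if caller == subject else caller
        contacts[other] += 1
        hours[hour] += 1
    return contacts, hours


contacts, hours = contact_profile(RECORDS, "A")
print("Most frequent contacts:", contacts.most_common(3))
print("Most common calling hour:", hours.most_common(1))
```

Even in this toy dataset, repeated late-evening calls to one number and daytime calls to a clinic and a lawyer suggest relationships and circumstances that no single record states outright.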

The expansion of trace surveillance also changed the relationship between voluntary action and institutional knowledge. People chose to search, buy, post, message, travel, browse, stream, register, subscribe, and connect, but those choices occurred inside systems designed to log activity. The resulting data trails were often produced incidentally, not as deliberate self-disclosure in the older sense. A person might understand that an email had been sent, a product purchased, or a search entered, but not fully grasp the surrounding informational exhaust: IP address, browser fingerprint, cookies, device identifiers, geolocation, referral paths, advertising profiles, retention policies, third-party sharing, and later algorithmic inferences. This is why the internet intensified rather than merely digitized earlier surveillance. It made the ordinary act of living through networks into a source of continuing institutional visibility.

The legal and political problem was that these traces often moved through private infrastructure before becoming available to state power. Internet service providers, telecommunications companies, search engines, platforms, advertisers, analytics firms, payment processors, app developers, and data brokers collected information for commercial, technical, security, or administrative reasons. Governments then gained access through subpoenas, warrants, national security authorities, voluntary cooperation, bulk collection programs, or purchases from commercial data markets. The result was not a simple opposition between state surveillance and corporate surveillance, but a layered arrangement in which each could amplify the other. The language of “digital dossiers” remains useful here because it captures how scattered data points can accumulate into consequential portraits of persons. Related scholarship emphasizes that networked environments are not neutral containers for personal freedom; they are structured by technical, legal, and economic rules that shape what information flows freely and what remains protected.

By the early 21st century, trace surveillance had become one of the defining conditions of digital society. It did not always resemble the obvious surveillance of cameras, wiretaps, or police files. Much of it appeared as convenience, personalization, fraud prevention, security, analytics, recommendation, navigation, or social connection. Yet its historical importance lies precisely in that ordinariness. Surveillance no longer required a special moment of suspicion. It could arise from the normal operation of systems people used every day. The internet prepared the ground for the post-9/11 expansion of mass metadata collection by creating both the technical infrastructure and the cultural habit of continuous data generation. The next stage would bring this logic into the center of national security policy, where the traces of ordinary communication became objects of extraordinary governmental interest.

9/11, the PATRIOT Act, and the Normalization of Mass Metadata Collection

The attacks of September 11, 2001, did not create digital surveillance, but they transformed its political urgency, legal justification, and institutional scale. The infrastructure for trace surveillance already existed in telecommunications networks, internet service providers, financial systems, airline records, immigration databases, credit bureaus, and government files. What changed after 9/11 was the governing assumption about risk. Intelligence agencies and law enforcement officials increasingly argued that the central failure had been one of connection: too many fragments of information existed in separate places, and too few had been linked in time. In that atmosphere, surveillance was recast as prevention. The goal was not only to investigate crimes after they occurred, but to detect patterns, associations, and signals before violence could happen. This preventive logic made metadata especially attractive because it promised to reveal networks without requiring officials to know in advance precisely which communication contained danger.

The USA PATRIOT Act, signed into law in October 2001, became the most important legislative symbol of this shift. Its full title, the Uniting and Strengthening America by Providing Appropriate Tools Required to Intercept and Obstruct Terrorism Act, captured the emergency language of the moment. The law expanded search, surveillance, information-sharing, and investigative powers across multiple legal domains, including amendments affecting the Foreign Intelligence Surveillance Act and other federal statutes. Section 215 became especially controversial because it allowed the government to seek “tangible things,” including business records, for foreign intelligence and terrorism investigations. That phrase mattered because it linked national security surveillance to the vast record systems of modern life. A business record was not merely a receipt or a file in a cabinet. In a computerized society, it could include transactional data, communication logs, account information, travel records, financial activity, and other structured traces produced by ordinary participation in commercial and technical systems. The importance of Section 215 was not only legal, but conceptual. It helped move surveillance away from the older image of direct observation or targeted interception and toward the acquisition of records held by third parties. The state did not need to watch every act as it happened if private systems were already producing durable traces of those acts. The PATRIOT Act translated the anxieties of the post-9/11 security state into the language of databases, business records, and institutional access.

The post-9/11 surveillance state did not depend only on spies, wiretaps, or covert listening devices. It depended on the ordinary recordkeeping practices of telecommunications companies, banks, travel firms, internet providers, and other private institutions. This was a crucial development in the history of surveillance because it made private infrastructure part of public security governance. Phone companies already generated call-detail records for billing, routing, and network management. Financial firms already recorded transactions. Airlines already held passenger data. Internet companies already logged user activity. The state’s expanding interest lay in gaining access to these reservoirs of information and turning them toward intelligence analysis. The older distinction between state surveillance and commercial data collection became increasingly unstable. The government did not always need to build a new database from scratch when one already existed in private hands.

The National Security Agency’s bulk telephone metadata program became the clearest public example of this logic after Edward Snowden’s 2013 disclosures. Under interpretations of Section 215, the government collected large volumes of telephone metadata associated with calls, including information about numbers dialed and call timing, while generally defending the program on the ground that it did not collect call content. Yet the controversy revealed how inadequate the content-metadata distinction had become. A database of call records could be queried to reconstruct associations, routines, organizations, contacts, and social networks. The Privacy and Civil Liberties Oversight Board’s 2014 report criticized the Section 215 telephone records program and examined both the program itself and the operations of the Foreign Intelligence Surveillance Court. In 2015, the United States Court of Appeals for the Second Circuit ruled in ACLU v. Clapper that the bulk telephone metadata program exceeded what Section 215 authorized, and Congress soon after passed the USA FREEDOM Act, which amended the legal framework and prohibited the bulk collection of Americans’ call records under that authority.

The deeper transformation was cultural as well as legal. Mass metadata collection normalized the idea that security could require large-scale acquisition before individualized suspicion had fully formed. Earlier forms of surveillance often began with a target: a suspect, organization, political movement, foreign agent, or criminal network. Post-9/11 metadata surveillance could begin with a dataset. Analysts could search backward from a known number, outward through contacts, and across patterns that suggested association or relevance. This altered the temporal structure of investigation. Surveillance was no longer only retrospective, following a crime, or targeted, following a suspect. It could be speculative and relational, collecting first so that possible significance might be discovered later. In that respect, bulk metadata collection extended the logic of dataveillance into national security: the person became visible not only through what they said or did, but through their position inside a field of connections.
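The idea of searching outward from a known number through its contacts can be illustrated with a simple breadth-first traversal of a call graph. This is a simplified sketch under stated assumptions: the identifiers, the `contact_chain` function, and the `max_hops` parameter are hypothetical, and the code does not describe the tooling of any actual agency.

```python
from collections import defaultdict, deque

# Hypothetical call records as (caller, callee) pairs; all identifiers are invented.
CALLS = [
    ("seed", "n1"), ("seed", "n2"),
    ("n1", "n3"), ("n2", "n3"), ("n3", "n4"),
    ("n5", "n6"),
]


def build_graph(calls):
    """Treat each call as an undirected link between two numbers."""
    graph = defaultdict(set)
    for a, b in calls:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def contact_chain(graph, seed, max_hops):
    """Return {number: hops_from_seed} for every number within max_hops."""
    hops = {seed: 0}
    queue = deque([seed])
    while queue:
        current = queue.popleft()
        if hops[current] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in graph[current]:
            if neighbor not in hops:
                hops[neighbor] = hops[current] + 1
                queue.append(neighbor)
    return hops


graph = build_graph(CALLS)
reached = contact_chain(graph, "seed", max_hops=2)
print(sorted(reached.items()))
```

The sketch makes the relational logic visible: a number enters the result not because of anything its owner said, but because of its distance from the starting point, and each additional hop can widen the circle considerably.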

The legacy of 9/11 surveillance is not reducible to one statute or one program. The PATRIOT Act, the Foreign Intelligence Surveillance Court, National Security Agency programs, telecom cooperation, litigation, congressional reforms, and public disclosures all belong to a broader transition in which digital traces became instruments of preventive governance. Even after reforms followed the Snowden disclosures, the underlying premise remained powerful: in a networked society, security institutions would seek not only to watch suspects, but to map the informational environment in which suspicion itself could be generated.

Corporate Surveillance, Data Brokers, and the Privatization of Intelligence

The expansion of trace surveillance did not belong only to states. By the late 20th and early 21st centuries, corporations had become some of the most powerful surveillance institutions in modern life. Their motives were not always those of police or intelligence agencies. They sought profit, efficiency, market prediction, fraud prevention, customer retention, advertising precision, workplace control, risk management, and competitive advantage. Yet the result was still surveillance in the historical sense: organized observation, recording, classification, and interpretation of human behavior for institutional purposes. The commercial world learned to watch not by standing over people, but by collecting the traces left by purchasing, browsing, searching, traveling, working, borrowing, liking, sharing, downloading, subscribing, and moving through networked environments.

This development grew from older forms of commercial recordkeeping. Credit bureaus, insurers, department stores, direct marketers, banks, employers, landlords, and mailing-list companies had long collected information about consumers and workers. What changed in the digital period was the scale, speed, and granularity of that collection. Commercial data systems no longer relied only on formal applications, billing histories, or declared preferences. They increasingly captured behavior as it happened. A search query, click, pause, route, abandoned shopping cart, loyalty-card purchase, app permission, device identifier, or location signal could become part of a larger profile. The consumer was no longer known only through what they told a company. They became known through what systems inferred from their conduct. This altered the meaning of ordinary market participation. Buying, browsing, comparing prices, reading reviews, joining a platform, installing an app, or using a loyalty program could all become acts of data production. Even refusal could become informational, as an ignored advertisement, skipped video, declined offer, or abandoned form helped refine a profile. Corporate surveillance moved from recordkeeping to behavioral analysis, and from behavioral analysis toward prediction. The goal was not merely to know what a person had done, but to estimate what that person might want, fear, buy, believe, avoid, or become.

Data brokers gave this new economy one of its most important institutional forms. They collected, purchased, aggregated, analyzed, and sold personal information drawn from public records, commercial transactions, online behavior, surveys, warranty cards, property records, court documents, voter files, social media traces, and other sources. The Federal Trade Commission’s 2014 report, Data Brokers: A Call for Transparency and Accountability, emphasized that many consumers had little knowledge of the existence of these companies, the sources of their information, or the ways their profiles were used. The data broker’s power lies partly in invisibility. Unlike a police officer, camera, or employer, the broker usually does not appear in the ordinary person’s field of awareness. It watches through aggregation. It knows by assembling fragments that were produced elsewhere and making them useful for marketing, identity verification, people search products, fraud detection, eligibility decisions, or risk classification.

This privatization of intelligence changed the political economy of surveillance. Information about persons became a commodity, and predictive knowledge became a business model. The concept of the “panoptic sort” is especially useful here because it describes the sorting of people according to estimated value, risk, and usefulness. Commercial surveillance does not simply identify people. It ranks them. It asks who is likely to buy, default, donate, move, vote, click, churn, file a claim, become ill, need credit, respond emotionally, or cost too much. Such sorting may appear less coercive than state surveillance, but its effects can be deeply consequential. Profiles may shape access to credit, insurance, housing, employment opportunities, prices, political messages, health information, and social visibility. The market does not need to imprison a person to constrain them. It can sort, exclude, target, nudge, or neglect.

The boundary between corporate surveillance and state surveillance became increasingly porous. Government agencies could obtain information from private companies through warrants, subpoenas, national security authorities, contracts, partnerships, or purchases from commercial data markets. This mattered because privately collected data could sometimes provide state agencies with access to information they might have found harder to collect directly. A government may face legal, political, or practical limits when it attempts to gather certain information itself, but commercially produced data can create alternative routes into the same terrain of knowledge. Location data, financial traces, consumer profiles, property records, social media analytics, and identity-verification tools can all become useful to public agencies once they exist in private hands. Meanwhile, corporations benefited from public records, government identity systems, legal infrastructures, and security demands that normalized data retention. The result was not a simple handoff from private business to public authority, but an ecosystem. Companies collected information for profit and administration; governments used information for security, policing, immigration, taxation, and intelligence; and data flowed between them through legal, commercial, and technical channels. Surveillance became less a single institution than a marketplace of visibility, in which private actors built much of the infrastructure through which public power could later see.

The rise of surveillance capitalism sharpened this development by making behavioral prediction itself central to digital business. Search engines, social media platforms, advertising networks, mobile apps, e-commerce systems, and analytics companies learned to extract value from attention and behavior. This made surveillance feel ordinary because it was wrapped in convenience, personalization, connection, entertainment, navigation, and free services. Yet its deeper significance was structural. Corporate surveillance helped build a world in which intelligence about persons could be produced continuously, monetized privately, and made available to other institutions. In the long history of surveillance, this was a decisive turn: watching was no longer only an instrument of sovereignty or discipline. It had become a business model.

Biometrics, Facial Recognition, and the Return of the Body as Data

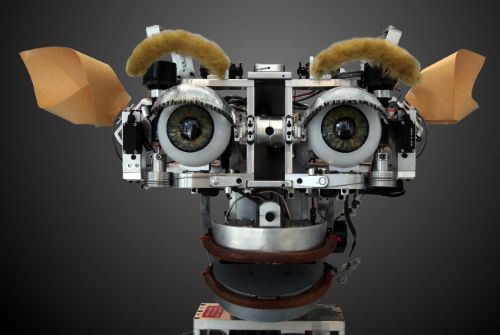

The history of digital surveillance can sometimes appear to move away from the body. Databases, metadata, consumer profiles, search histories, and commercial traces seem to describe persons through records rather than physical presence. Yet biometric surveillance shows that the body never disappeared. It returned as data. Fingerprints had already established the basic principle that a bodily feature could become an administrative identifier, but later biometric systems expanded that principle across the face, iris, voice, gait, DNA, palm, and even patterns of behavior. The body became not merely something that could be observed by another person, but something that could be captured, measured, encoded, compared, and searched by machines. Biometrics joined older identification practices to the digital logic of databases.

Facial recognition became especially important because it connected public visibility to automated identification. A face has always been socially meaningful. People recognize one another through faces, and institutions have long used photographs in passports, police files, driver’s licenses, personnel records, and immigration documents. Facial recognition changed the scale and speed of that process. It allowed an image captured by a camera, uploaded to a platform, stored in a database, or collected in public space to be compared against other images for possible identification. In principle, this made the face searchable. The significance is profound. Earlier visual surveillance could show that someone had been present in a place. Facial recognition could attempt to attach that presence to a name, a record, a history, and a network of institutional knowledge. The camera no longer merely watched the body. It queried the archive.

This marked a return to the 19th-century dream of identifying the person through the measurable body, but with far greater computational power. Bertillonage translated the body into measurements. Fingerprinting translated touch into classification. Facial recognition translates appearance into mathematical representation. In each case, surveillance depends on abstraction: a complex human being is reduced to features that can be stored and compared. Yet the digital version is more expansive because it can operate across large image collections and dispersed camera systems. A passport photograph, mugshot, social media image, driver’s license photo, or security-camera frame may become part of a searchable environment. The face, once encountered in a specific social situation, becomes a biometric key that can connect one context to another. This is why facial recognition belongs at the center of modern surveillance history. It fuses observation, identification, database search, and networked comparison.

The power of biometric surveillance also lies in its asymmetry. A person can usually choose whether to disclose a password, present a document, or answer a question, at least in ordinary circumstances. A face, by contrast, is exposed whenever one moves through public or semi-public space. This makes facial recognition different from many earlier identification systems. It can operate at a distance, without contact, and sometimes without the knowledge of the person being scanned. The National Academies’ 2024 report on facial recognition technology emphasizes the broad range of current and possible applications as well as the legal, social, ethical, and governance questions raised by its use. That governance problem is inseparable from the technology’s basic structure. Biometric identifiers are difficult to change, and errors or misuse can follow a person precisely because the identifier is attached to the body itself.

Accuracy and bias have been among the most important concerns. Facial recognition systems do not simply “see” in a neutral human sense. They are trained, tested, and deployed through datasets, technical thresholds, institutional procedures, and policy choices. The National Institute of Standards and Technology’s 2019 demographic effects report found measurable demographic differences across many face recognition algorithms, demonstrating that performance can vary depending on the system, task, and population being evaluated. Such differences matter because biometric error is not evenly distributed in its consequences. A false match in a casual commercial setting may be inconvenient; a false match in policing can lead to interrogation, arrest, reputational harm, or worse. The problem becomes more serious when an algorithmic suggestion is treated as investigative confirmation rather than a lead requiring independent scrutiny. A face recognition match may carry an aura of technical certainty even when it depends on image quality, lighting, camera angle, database composition, confidence thresholds, and human interpretation. In law enforcement settings, that aura can encourage overreliance, especially when officers, prosecutors, or judges do not fully understand the limits of the system. The issue is not only whether the technology works in the abstract. It is where it is used, against whom, under what safeguards, with what human review, with what disclosure to defendants, and with what possibility of correction. Biometric surveillance raises questions not simply about privacy, but about evidence, accountability, and institutional trust.
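The role of confidence thresholds can be shown with a small arithmetic sketch. The similarity scores below are invented and do not come from any real system or evaluation; `error_counts` is a hypothetical helper. The only point is that the same scores yield different errors depending on where an institution sets the cutoff.

```python
# Hypothetical similarity scores between 0 and 1; real systems and score scales differ.
GENUINE = [0.91, 0.84, 0.77, 0.69, 0.95]   # pairs of images of the same person
IMPOSTOR = [0.32, 0.58, 0.71, 0.44, 0.80]  # pairs of images of different people


def error_counts(threshold):
    """Return (false_matches, false_non_matches) at a given decision threshold."""
    false_matches = sum(score >= threshold for score in IMPOSTOR)
    false_non_matches = sum(score < threshold for score in GENUINE)
    return false_matches, false_non_matches


for threshold in (0.5, 0.7, 0.85):
    fm, fnm = error_counts(threshold)
    print(f"threshold={threshold}: false matches={fm}, false non-matches={fnm}")
```

Lowering the threshold produces more false matches, while raising it produces more missed matches. Which error is tolerable is a policy choice rather than a property of the algorithm alone.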

Biometrics transformed surveillance by making identity more immediate and less escapable. The body became a password one cannot easily reset, a document one cannot leave at home, and a data source one may reveal simply by existing in view. Yet this return of the body as data did not restore older forms of direct observation. It folded the body into the database. Facial recognition, iris scanning, DNA indexing, voice identification, and gait analysis all extend the logic of dataveillance by making biological and behavioral features searchable across institutional systems. The implications reach beyond privacy alone. They concern due process, public anonymity, racial and gender equity, consent, governance, and the right to move through shared spaces without being continuously identified. In this respect, biometric surveillance is both ancient and new: ancient in its desire to know the person through the body, new in its ability to make the body machine-readable at scale.

Artificial Intelligence, Predictive Systems, and the Shift from Observation to Anticipation

Artificial intelligence did not create surveillance, but it changed the ambitions attached to it. Earlier surveillance systems watched, recorded, identified, intercepted, filed, searched, and classified. Artificial intelligence added a stronger claim to anticipation. It promised to detect patterns too complex for ordinary human review, connect signals across large datasets, flag anomalies, forecast risks, and recommend interventions before an event fully unfolded. This did not mean that surveillance became magically objective or omniscient. It meant that older practices of watching and sorting were increasingly joined to statistical modeling, machine learning, automated decision systems, and predictive analytics. The historical movement was not from human judgment to machine certainty, but from visible observation toward computational inference. Surveillance began to ask not only what a person had done, but what a system predicted that person, place, or group might do next.

Predictive policing made this shift especially visible. Police departments and technology vendors presented algorithmic tools as ways to allocate patrols, identify possible crime hot spots, assess risk, and focus attention on people or places believed to be associated with future violence or disorder. These systems varied in design, but they shared a common premise: past data could help anticipate future events. That premise was powerful because it aligned with the preventive logic that had grown after 9/11 and with older police desires to act before harm occurred. Yet predictive policing also exposed one of the central dangers of AI surveillance. If historical police data reflects unequal enforcement, racialized suspicion, neighborhood over-policing, or discretionary bias, then an algorithm trained on that data can reproduce those patterns while appearing neutral. The machine does not escape history. It can encode it.

Artificial intelligence also expanded surveillance beyond policing into welfare systems, workplaces, schools, borders, insurance, finance, hiring, health care, and digital platforms. Automated eligibility systems, fraud detection tools, risk scores, productivity monitors, content-ranking systems, behavioral analytics, and identity-verification platforms all rely on the same broad movement: institutions increasingly use data models to classify people and guide decisions. Work on automated welfare and social-service systems is important because it shows that predictive systems often fall most heavily on poor and working-class people, who are already more exposed to bureaucratic scrutiny. The issue is not only whether an algorithm makes a technically accurate prediction. It is whether automated judgment intensifies existing inequalities, narrows human discretion, hides responsibility, and makes institutional decisions harder to contest. A flawed database entry, a missed appointment, a confusing form, a suspicious transaction pattern, or a statistical resemblance to others can become the basis for institutional action before any human being has meaningfully heard the affected person’s explanation. In workplaces, AI-enabled surveillance can measure keystrokes, location, productivity, customer interactions, screen activity, delivery routes, and biometric or behavioral signals, converting labor into continuous performance data. In schools and universities, monitoring tools may frame students as risks to be detected rather than persons to be educated. At borders, automated systems can sort travelers, migrants, and asylum seekers through risk categories that may be opaque, difficult to challenge, and shaped by geopolitical assumptions. Across these settings, AI surveillance does not simply gather information. It distributes suspicion, attention, opportunity, and constraint. AI surveillance is powerful because it can make governance look like calculation.

The shift from observation to anticipation also changes the meaning of evidence. Traditional surveillance often produced records of something that had happened: a report, photograph, fingerprint, intercepted message, video recording, or database entry. Predictive systems produce probabilities, scores, alerts, rankings, and recommendations. These outputs can influence real decisions even when they are uncertain, opaque, or based on correlations rather than causes. A person may be treated as risky because of location, association, prior contact with institutions, demographic proxies, spending patterns, online behavior, or similarity to others in a dataset. A neighborhood may receive more patrols because prior patrols generated more recorded incidents there, producing a feedback loop in which surveillance confirms its own assumptions. This is why AI surveillance demands historical analysis rather than technical description alone. It turns the archive into a forecast, and then uses the forecast to justify action in the present.

By the early 21st century, the most consequential surveillance systems were no longer defined only by cameras, wiretaps, files, or databases, but by their integration. License plate readers, facial recognition, CCTV networks, social media monitoring, location data, gunshot detection systems, real-time crime centers, commercial databases, and predictive analytics could be combined into platforms that promised situational awareness and rapid response. The National Academies’ recent work on facial recognition technology emphasizes that these systems raise legal, social, ethical, and governance questions precisely because they interact with other technologies and institutional practices. AI is best understood as an intensifier. It makes surveillance faster, more scalable, more relational, and more future-oriented, but it does not remove the political choices embedded in surveillance. A model may direct where attention goes, but people and institutions decide what counts as risk, whose data matters, which errors are tolerable, and who bears the cost when the system is wrong.

Consequences: Anonymity, Conformity, Security, and Democratic Accountability

The long evolution from informants to databases changed not only the methods of surveillance, but the conditions under which social life unfolds. Earlier systems could be intrusive, violent, and politically dangerous, but they were often limited by geography, labor, memory, and institutional reach. A ruler needed informants; a police officer needed a suspect; a camera needed placement; a file needed retrieval; a wiretap needed access to a line. Contemporary surveillance has weakened many of those limits. It operates through infrastructures that people use for communication, commerce, travel, employment, entertainment, security, and social participation. The result is not simply more watching. It is a world in which observation, recording, identification, classification, and prediction are woven into ordinary systems. Surveillance becomes harder to separate from the basic mechanisms of modern life.

One of the clearest consequences is the erosion of practical anonymity. Anonymity has never been absolute, and many societies have long required people to be recognizable to neighbors, officials, employers, kinship networks, or religious communities. Yet modern urban life once offered forms of partial anonymity created by scale, mobility, crowding, weak records, and the ability to move beyond the reach of local memory. Digital and biometric surveillance narrow that space. A person may pass through a city, browse the internet, use a phone, enter a workplace, board transit, purchase goods, attend a protest, or walk past a camera while leaving traces that can later be linked to identity. The problem is not only that someone may be watched in the moment. It is that actions can become retrospectively identifiable. The past becomes searchable. Anonymity erodes when people no longer know whether ordinary acts will remain ordinary, or later become evidence, risk signals, marketing categories, investigative leads, or political vulnerabilities.

Surveillance also encourages conformity, often without direct coercion. Michel Foucault’s analysis of disciplinary power remains useful because it explains how observation changes behavior before punishment occurs. People who believe they may be watched often regulate themselves. They may avoid controversial searches, refrain from political association, soften criticism, limit movement, accept workplace monitoring, or alter speech in public and digital spaces. This does not require an all-seeing state or a perfectly functioning system. The uncertainty itself can be disciplining. A person does not need to know that every message is read, every camera monitored, or every dataset analyzed. It may be enough to know that records exist, that institutions can access them, and that interpretation may occur later without context or appeal. Surveillance acts not only through detection, but through anticipation. It produces citizens, workers, consumers, and subjects who learn to ask how their behavior might look to systems they cannot see. This anticipatory self-discipline is especially powerful because it can masquerade as prudence, professionalism, safety, or common sense. A worker may tolerate invasive monitoring because refusing it could appear suspicious or uncooperative. A student may avoid researching controversial subjects because a flagged search history could be misunderstood. A citizen may hesitate before attending a protest, signing a petition, or criticizing authority because the future audience for that record is unknowable. Surveillance does not need to silence everyone. It only needs to make expression feel calculable, risky, and permanently recoverable. The result is a quieter form of control, one that narrows conduct by making people internalize the possibility of institutional judgment.

Security is the most durable justification for surveillance because it addresses real fears. States have responsibilities to prevent violence, investigate crime, protect infrastructure, counter espionage, respond to terrorism, and defend vulnerable people. Businesses also face fraud, theft, cyberattacks, workplace safety concerns, and obligations to protect customers. Surveillance can serve legitimate purposes, but the harder question is how societies prevent the logic of security from becoming limitless. A tool introduced for emergency, crime prevention, counterterrorism, or fraud control can migrate into ordinary administration, commercial profiling, political monitoring, workplace discipline, immigration enforcement, or social sorting.

Data security adds another danger: the more information institutions collect, the more they create targets for abuse, breach, theft, and misuse. Surveillance systems often promise protection, but they also concentrate vulnerability. Identity records, biometric templates, location histories, financial data, medical information, communications metadata, workplace monitoring records, and consumer profiles can all be exposed, stolen, sold, leaked, or repurposed. Unlike a password, many forms of personal data cannot easily be changed once compromised. A person cannot replace a face, fingerprint, DNA profile, childhood address history, or long record of associations. Large-scale data collection creates a paradox. Institutions gather information to manage risk, but the accumulation itself becomes a source of risk. The danger does not come only from malicious hackers. It can also come from careless retention, weak governance, opaque sharing, discriminatory use, mission creep, and the routine assumption that more data is always better.

The democratic problem is accountability. Surveillance expands most easily where visibility is unequal: institutions see people more clearly than people see institutions. Citizens may not know what is collected, how long it is retained, who can access it, what inferences are drawn, or how to challenge errors. Automated systems deepen that problem when decisions emerge from proprietary models, classified programs, complex databases, or public-private partnerships that evade ordinary scrutiny. Democratic accountability requires more than trust in officials or confidence in technology. It requires law, transparency, independent oversight, limits on collection, meaningful consent where possible, due process, auditability, public debate, and remedies for abuse. The history traced here shows that surveillance has repeatedly expanded by attaching itself to practical needs: rule, policing, identification, communication, commerce, security, efficiency, and prediction. Its consequences now reach the foundations of public life. A democracy cannot survive merely by watching danger. It must also watch the watchers, or the infrastructure built to protect liberty can quietly become one of the means by which liberty is narrowed.

Conclusion: The Database as the New Watchtower

The history of surveillance is a history of changing distance between power and the person. In its older forms, surveillance required bodies: the informer in the crowd, the spy at court, the officer in the street, the censor at the desk, the operator at the switchboard, the guard before the monitor. These figures did not disappear, but their work was gradually absorbed into systems that could remember, compare, and act without continuous face-to-face observation. Files made suspicion durable. Fingerprints made the body classifiable. Wiretaps made communication vulnerable through technical networks. Cameras displaced vision into control rooms and recordings. Computers made records searchable. The internet turned ordinary life into traceable activity. Biometrics returned the body as machine-readable data. Artificial intelligence then promised to convert accumulated records into forecasts. At each stage, surveillance became less dependent on watching a person directly and more dependent on systems capable of rendering that person institutionally visible.

The database is the new watchtower, though it does not resemble the tower in Foucault’s prison metaphor in any simple architectural sense. It is dispersed rather than centralized, often commercial as well as governmental, and frequently hidden inside services that appear convenient, neutral, or necessary. Its power lies not in one observer looking down at confined bodies, but in the ability to assemble fragments from many places into a usable image of a person or population. A database can preserve the past, sort the present, and shape the future by influencing decisions about credit, employment, policing, travel, insurance, benefits, advertising, immigration, education, and political communication. It does not need perfect knowledge to be consequential. Partial, probabilistic, or erroneous knowledge can still guide action when institutions trust the system enough to act upon its outputs.

This transformation also changes the meaning of resistance and accountability. Earlier surveillance could sometimes be confronted because it had visible agents and physical sites: the officer, the informant, the camera, the file room, the checkpoint, the wiretap order. Contemporary surveillance is harder to grasp because it operates through infrastructures that citizens rely upon and cannot easily avoid. The problem is not simply secrecy, though secrecy matters. It is opacity: the difficulty of knowing what has been collected, how it has been interpreted, where it has traveled, who has purchased or accessed it, what assumptions structure the model, and how errors can be corrected. A democratic society cannot answer these questions merely by demanding trust. It must build limits into collection, transparency into use, contestability into decisions, and public oversight into systems that otherwise expand through convenience, fear, profit, and administrative habit.

The long movement from informants to databases shows that surveillance has never been only a technical matter. It has always been a moral and political question about how power sees human beings. The danger of modern surveillance is not that every person is watched at every moment by a single all-knowing authority. The danger is more subtle and more ordinary: that life becomes increasingly organized around systems that remember more than individuals can control, infer more than they knowingly disclose, and judge more than they can meaningfully contest. The database as watchtower does not merely observe society from above. It helps produce the categories through which society is governed. That is why the history of surveillance ends not with the triumph of technology, but with an unresolved democratic question: whether institutions built to see more can still be made answerable to the people they see.

Bibliography

- American Civil Liberties Union. ACLU v. Clapper: Challenge to NSA Mass Call-Tracking Program. New York: American Civil Liberties Union, 2015.

- American Civil Liberties Union v. Clapper, 785 F.3d 787 (2d Cir. 2015).

- Andrew, Christopher. The Secret World: A History of Intelligence. New Haven: Yale University Press, 2018.

- Armstrong, Gary, and Clive Norris. The Maximum Surveillance Society: The Rise of CCTV. Oxford: Berg, 1998.